Sidecar Proxy Demystified: How Service Meshes Intercept Every Byte in Your Cluster

Your microservices are talking to each other right now. Requests are flying across pod boundaries, TLS handshakes are completing, retry budgets are being consumed—and if you’re running a service mesh, none of your application code is orchestrating any of it. That should feel strange, because it is strange. A third-party process is transparently intercepting every TCP connection your services make, mutating traffic in flight, enforcing policy, emitting telemetry—and doing all of this without a single import statement in your codebase.

Most engineers accept this as infrastructure magic and move on. That works until it doesn’t: a latency spike you can’t explain, an mTLS handshake failing in ways that don’t match your cert rotation timeline, a retry storm that your application never initiated. At that point, “the mesh handles it” stops being an answer.

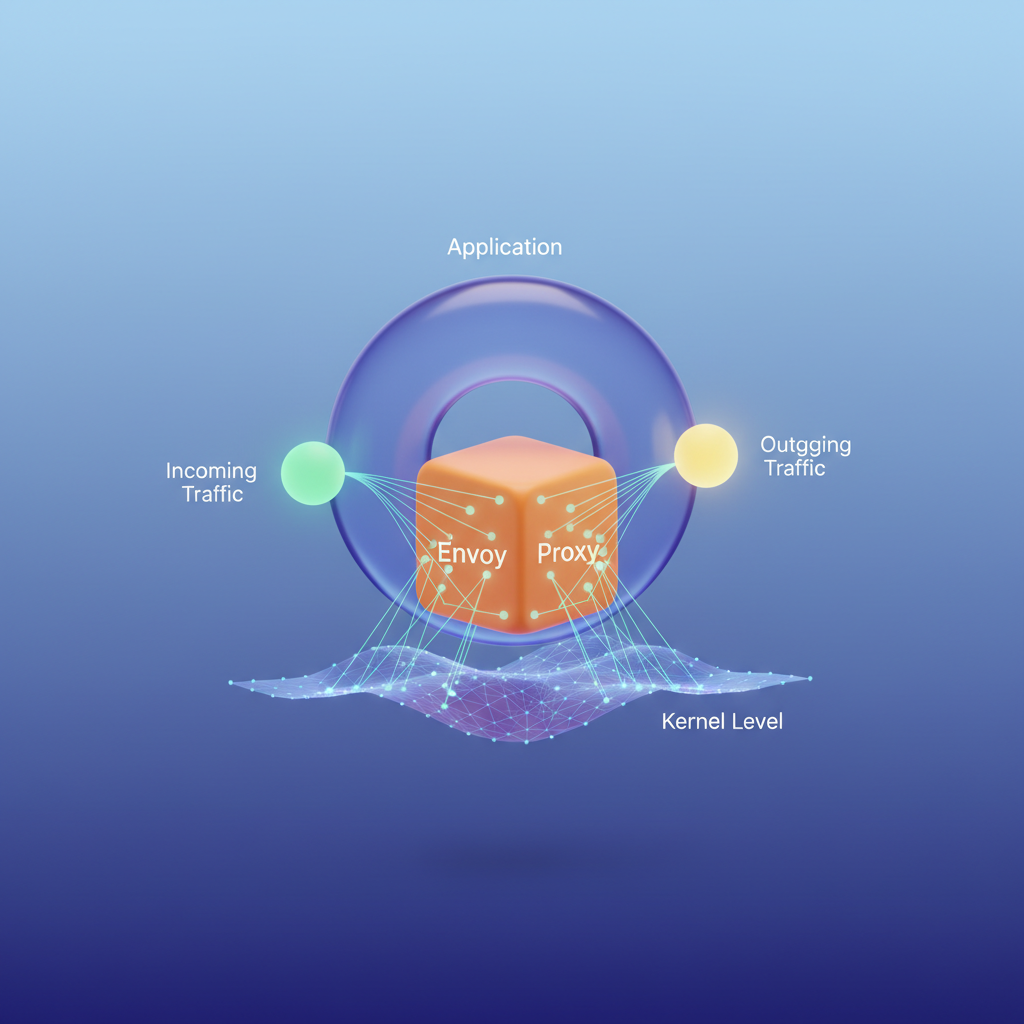

What’s actually happening is a precise collaboration between the Linux kernel’s netfilter subsystem, a set of iptables rules injected at pod startup, and an Envoy process running in the same network namespace as your application. The interception isn’t magic—it’s packet redirection at the kernel level, and the rules governing it were written into your pod’s network stack before your application container started its first listener.

Understanding this mechanism changes how you debug, how you size proxies, and how you reason about failure modes that your application observability will never surface on its own.

To understand why the industry converged on this particular architecture—and why it’s shaped the way it is—it helps to start with the problem it was actually built to solve.

The Problem Service Mesh Solves: Cross-Cutting Network Concerns at Scale

In a monolithic application, the network is a detail. One process, one deployment, one set of retry loops buried in a shared HTTP client. Promote that monolith to fifty microservices and the network becomes the application. Every service-to-service call is a distributed systems problem: latency spikes, partial failures, certificate expiry, missing trace context. The question isn’t whether you handle these concerns—it’s where.

The Library Tax

The first industry answer was libraries. Netflix OSS gave teams Hystrix for circuit breaking, Ribbon for client-side load balancing, and Eureka for service discovery. Spring Cloud wrapped these into a friendlier API. The model worked at Netflix because Netflix controlled the JVM stack. It collapsed everywhere else.

The library approach extracts a compounding organizational tax. Each language runtime needs its own implementation: the Go team ports Hystrix semantics to go-kit, the Python team ships a half-complete wrapper around requests, the Node.js service quietly omits circuit breaking because the deadline was Friday. Meanwhile, upgrading retry policy across the fleet means coordinating releases across every team simultaneously. A security patch to the mTLS handshake logic touches forty repositories. The blast radius of a misconfigured timeout is invisible until a single slow dependency cascades into a full outage.

This isn’t a culture problem. It’s an architectural one. Cross-cutting network concerns don’t belong in application code for the same reason logging frameworks don’t belong in business logic: mixing policy and implementation creates coupling that compounds with every new service.

Infrastructure as the Resolution Layer

The shift that service meshes represent is conceptual before it’s technical: treat inter-service networking as infrastructure, not application responsibility. The sidecar pattern operationalizes this. A proxy process runs alongside every application container, intercepts all inbound and outbound traffic at the network layer, and enforces policy uniformly—regardless of what language the application is written in or which team owns it.

The application never knows the proxy exists. It opens a TCP connection to a downstream service; the sidecar transparently applies mutual TLS, records latency histograms, enforces a retry budget, and injects trace headers. The application team ships business logic. The platform team ships network policy.

💡 Pro Tip: The organizational argument for a service mesh is often stronger than the technical one. When a VP of Engineering asks why onboarding a new service requires three library integrations and a compliance review, “we moved that into the platform” is a more durable answer than any benchmark.

This is the concrete problem Istio and Envoy solve. The mechanisms by which a sidecar actually intercepts traffic—without a single line of application code changing—are where the architecture gets interesting.

Anatomy of a Sidecar: How Envoy Intercepts Traffic Without Touching Your App

The magic of a service mesh is that your application code remains completely unaware of the proxy sitting beside it. No SDK changes, no configuration files, no redeployment. Yet every TCP connection your service opens or accepts passes through Envoy. Understanding how that happens requires looking at two distinct mechanisms working in concert: Linux network namespaces and iptables rules.

Shared Network Namespace: One Pod, One Network Stack

In Kubernetes, all containers within a Pod share a single network namespace. This is a fundamental Linux kernel concept: the namespace owns the network interfaces, routing tables, and iptables chains. When the Envoy sidecar starts alongside your application container, both processes bind to the same loopback interface and the same eth0. From the kernel’s perspective, there is no boundary between them at the network layer.

This shared namespace is precisely what makes transparent interception possible. The sidecar does not sit between two separate network stacks—it operates within the same one.

The istio-init Container: Wiring the Trap

Before your application or Envoy starts, Kubernetes runs an init container called istio-init. Its sole responsibility is to install iptables rules into the Pod’s network namespace. The two critical rules redirect all inbound traffic destined for any port to Envoy’s inbound listener on port 15006, and redirect all outbound traffic originating from the Pod to Envoy’s outbound listener on port 15001.

There is one deliberate exception: traffic originating from Envoy’s own user ID (UID 1337) is excluded from redirection. Without this exemption, Envoy’s own outbound connections would loop back into itself indefinitely. This UID-based bypass is the mechanism that breaks the redirection cycle.

The result: your application calls connect() to a remote service IP on port 8080, the kernel intercepts that connection via the OUTPUT chain, and delivers it to Envoy on port 15001—before a single packet leaves the machine.

The Envoy Request Pipeline

Once traffic arrives at Envoy, it flows through a four-stage processing chain:

Listeners are the entry points. Each listener binds to a port and defines a filter chain—the ordered set of network and HTTP filters applied to the connection. The listener on 15001 handles all outbound traffic; 15006 handles inbound.

Routes map request attributes—virtual host, path prefix, headers—to named clusters. This is where traffic splitting, retry policies, and header manipulation are applied.

Clusters represent upstream destinations: a logical group of endpoints, typically corresponding to a Kubernetes Service. Clusters carry load balancing configuration, connection pool settings, and circuit breaker thresholds.

Endpoints are the resolved IP:port pairs of individual Pod instances, populated dynamically via the xDS API from Istiod.

💡 Pro Tip: Run

istioctl proxy-config listeners <pod-name>andistioctl proxy-config clusters <pod-name>to inspect the live listener and cluster configuration on any Envoy instance. This is the fastest way to diagnose routing misconfigurations without reading raw xDS responses.

Why Your App Sees Localhost

When an inbound connection arrives at your application, the source IP it observes is 127.0.0.6—Envoy’s inbound handler address—not the original caller’s IP. Envoy has already terminated the original connection, applied policy, and opened a new connection to your application process over loopback. The app is talking to a local proxy, not the network.

This architecture means Envoy has full visibility into every byte of every request before your application code touches it—which is exactly what enables mTLS termination, telemetry collection, and policy enforcement without any application involvement.

The next question is how Envoy gets injected into every new Pod automatically, without operators manually modifying each workload definition. That answer lies in the Kubernetes admission control pipeline.

Automatic Sidecar Injection: Mutating Admission Webhooks in Practice

When you label a namespace istio-injection: enabled and watch a plain kubectl apply spawn two containers instead of one, that apparent magic is actually a precisely defined Kubernetes extension point: the MutatingAdmissionWebhook. Understanding its mechanics gives you fine-grained control over where Envoy appears in your cluster—and the diagnostic foundation to explain why it sometimes doesn’t.

The Webhook Interception Path

Every pod creation request passes through the Kubernetes API server’s admission chain before the object is persisted to etcd. Istio registers a MutatingWebhookConfiguration that tells the API server to forward matching pod creation and update requests to the istiod service over HTTPS. Istiod inspects the incoming pod spec, makes an injection decision, and returns a JSON Patch document that the API server applies before writing the final object.

The webhook registration itself looks like this:

apiVersion: admissionregistration.k8s.io/v1kind: MutatingWebhookConfigurationmetadata: name: istio-sidecar-injectorwebhooks: - name: namespace.sidecar-injector.istio.io namespaceSelector: matchLabels: istio-injection: enabled rules: - apiGroups: [""] apiVersions: ["v1"] resources: ["pods"] operations: ["CREATE"] clientConfig: service: name: istiod namespace: istio-system path: /inject caBundle: LS0tLS1CRUdJTi... # istiod's serving CA admissionReviewVersions: ["v1"] sideEffects: None failurePolicy: FailThe failurePolicy: Fail default is intentional and consequential: if istiod is unavailable, pod creation fails cluster-wide in injected namespaces. Some teams switch this to Ignore to preserve availability during control plane outages, accepting the trade-off that pods created during that window run without a sidecar.

Namespace-Level vs. Pod-Level Control

Injection operates at two granularities. At the namespace level, the istio-injection: enabled label opts every pod in that namespace into injection. This is the right default for application namespaces.

At the pod level, the annotation sidecar.istio.io/inject: "false" overrides the namespace default for individual workloads. This is the primary escape hatch for exceptions.

apiVersion: v1kind: Podmetadata: name: legacy-metrics-exporter namespace: production annotations: sidecar.istio.io/inject: "false"spec: containers: - name: exporter image: registry.internal.mycompany.com/metrics-exporter:2.1.4What the Injected Pod Spec Contains

After the webhook patch is applied, three structural changes appear in the pod spec:

An init container named istio-init runs before your application container. It executes iptables rules that redirect all inbound and outbound traffic through Envoy’s ports (15001 for outbound, 15006 for inbound). This container requires NET_ADMIN and NET_RAW capabilities and exits once the rules are in place.

The sidecar container (istio-proxy) runs the Envoy binary, bootstrapped with configuration pointing at istiod:15010 for xDS. It mounts a projected volume containing the pod’s SPIFFE identity certificate sourced from the node-local istio-agent.

Environment variables such as ISTIO_META_POD_NAME, ISTIO_META_NAMESPACE, and ISTIO_META_CLUSTER_ID are injected into both the proxy container and, selectively, the application container to make mesh metadata available at runtime.

💡 Pro Tip: Run

kubectl get pod <pod-name> -o yamlon an injected pod and inspect theinitContainerssection. The exactiptablesarguments are visible as container arguments—reading them directly is the fastest way to diagnose unexpected traffic behavior like a specific port being excluded from interception.

Disabling Injection Selectively

Three workload types warrant deliberate injection exclusions:

Short-lived Jobs complete before Envoy’s bootstrap handshake with istiod finishes, causing the job pod to hang. Annotate job pod templates with sidecar.istio.io/inject: "false".

DaemonSets on infrastructure nodes running monitoring agents or CNI components often hold elevated privileges incompatible with istio-init’s network manipulation. Exclude them explicitly.

Legacy workloads with hardcoded port assumptions break when iptables redirects traffic through 15001 before it reaches the application’s expected source port. Identify these by watching for connection refused errors that appear only after mesh enrollment.

For namespaces that should never receive injection regardless of pod annotations—kube-system, istio-system, monitoring stacks—configure the webhook’s namespaceSelector with a matchExpressions block that explicitly excludes them using a istio-injection: disabled label.

With injection behavior fully under your control, the natural next question is what Envoy does with traffic once it has it—and how istiod continuously drives that behavior across every proxy in the cluster through the xDS API.

Data Plane vs. Control Plane: The xDS API and How Istiod Drives Envoy

Every Envoy sidecar in your cluster is a blank slate at startup. It knows nothing about your services, your routing rules, or your mTLS policies. Istiod exists to fix that—continuously, at scale, without restarting a single proxy.

The Split That Makes Scale Possible

The control plane (Istiod) and data plane (your Envoy sidecars) have fundamentally different jobs. Istiod watches the Kubernetes API server, reconciles your Istio resources, and computes the desired configuration for every proxy in the mesh. Envoy sidecars receive that configuration and do the actual work: accepting connections, enforcing policies, collecting telemetry, and forwarding bytes.

This separation is what allows a mesh of thousands of sidecars to stay manageable. Istiod handles the complexity of translating high-level intent into low-level config once, then distributes the result. Each Envoy proxy is a dumb-but-fast recipient. When you change a VirtualService, Istiod recomputes the affected config and pushes an update—only to the proxies that need it—without any proxy restart or downtime.

xDS: The Configuration Protocol

Istiod speaks to Envoy over a gRPC streaming protocol called xDS (discovery service). It’s a family of APIs, each responsible for a different category of configuration:

| API | Name | What It Configures |

|---|---|---|

| LDS | Listener Discovery Service | Ports Envoy listens on, filter chains |

| RDS | Route Discovery Service | HTTP routing rules, retries, timeouts |

| CDS | Cluster Discovery Service | Upstream service definitions |

| EDS | Endpoint Discovery Service | Individual pod IP:port pairs per cluster |

Envoy subscribes to these APIs over a persistent gRPC stream. Istiod sends updates whenever the relevant state changes—a new pod becomes ready, a DestinationRule is applied, or a service is deleted. The protocol supports both full-state updates (State of the World) and incremental deltas (Delta xDS), with Istio defaulting to delta mode for efficiency in large meshes.

From VirtualService to Envoy Route

When you apply a VirtualService that splits traffic 90/10 between two subsets, Istiod translates that intent into a concrete Envoy route configuration. The VirtualService becomes an RDS route with weighted clusters. The DestinationRule subsets become CDS cluster entries. The pod IPs backing each subset flow in via EDS.

Nothing happens by magic—every Istio abstraction maps to a specific xDS object. Understanding that mapping is what separates operators who can debug routing problems from those who can only guess.

Inspecting Live Configuration with istioctl

The most direct way to verify that your intent reached Envoy is istioctl proxy-config. It queries the Envoy admin API through Istiod and renders the live xDS state for a specific pod.

## List all listeners on the sidecar for the orders podistioctl proxy-config listeners deploy/orders -n production

## Inspect the route table Envoy is using for outbound HTTP to paymentsistioctl proxy-config routes deploy/orders -n production \ --name 8080 -o json

## Dump all clusters and verify subset configuration from a DestinationRuleistioctl proxy-config clusters deploy/orders -n production \ --fqdn payments.production.svc.cluster.local -o json

## Check endpoints—confirms EDS is populating pod IPs correctlyistioctl proxy-config endpoints deploy/orders -n production \ --cluster "outbound|8080|stable|payments.production.svc.cluster.local"When a canary rollout isn’t behaving as expected, start with proxy-config routes. If the weighted clusters match your VirtualService, the problem is downstream. If they don’t, Istiod hasn’t pushed the update—check istioctl proxy-status to see sync state across all proxies.

💡 Pro Tip:

istioctl proxy-statusshows whether each sidecar isSYNCED,STALE, orNOT SENTfor each xDS API. ASTALECDS on a subset of pods during a rollout points directly to a push failure or a proxy that hasn’t reconnected to Istiod.

The xDS layer is where Istio’s abstractions either hold up or fall apart. Once you can read raw Envoy config and trace it back to the Kubernetes resources that produced it, policy and routing bugs go from mysterious to mechanical.

With the data plane wired up and observable, the next question is what’s actually inside those connections—specifically, how Istiod provisions certificates, rotates them transparently, and enforces SPIFFE identity for every mTLS handshake in the mesh.

mTLS Under the Hood: Certificate Rotation and SPIFFE Identity

Zero-trust networking requires that every service prove its identity before a connection is accepted. Istio implements this through SPIFFE (Secure Production Identity Framework for Everyone) X.509 certificates, issued automatically to every workload and rotated without any application involvement. Here is the mechanical reality behind that claim.

How Istiod Issues Certificates via SDS

When a pod starts with the Envoy sidecar injected, the proxy does not hold a static certificate baked into the image. Instead, Envoy connects to Istiod over a Unix domain socket and requests credentials through the Secret Discovery Service (SDS) API — the same xDS protocol family that delivers route and cluster configuration.

Istiod acts as a Certificate Authority. It validates the pod’s Kubernetes service account JWT, then issues an X.509 SVID (SPIFFE Verifiable Identity Document) with a SPIFFE URI in the Subject Alternative Name field:

spiffe://cluster.local/ns/payments/sa/payment-processorThis URI encodes the trust domain, namespace, and service account — a workload identity that is portable and not tied to IP addresses or DNS names. The certificate is written into Envoy’s in-memory SDS cache, never touching the pod filesystem.

Automatic Rotation Without Restart

Default certificate TTL in Istio is 24 hours, but Envoy begins the renewal process well before expiry — typically at the 80% mark. Istiod pushes a fresh certificate through the existing SDS connection. Envoy hot-swaps the credential in active TLS contexts. No application restart, no connection drain, no downtime.

To inspect the current certificate for a running pod:

istioctl proxy-config secret deploy/payment-processor -n paymentsRESOURCE NAME TYPE STATUS VALID CERT SERIAL NUMBER NOT AFTER NOT BEFOREdefault Cert Chain ACTIVE true 3a7f91b2c4d8e506f1234abcd567890ef 2026-02-18T11:42:00Z 2026-02-17T11:42:00ZROOTCA CA ACTIVE true 1b2c3d4e5f6a7b8c9d0e1f2a3b4c5d6e 2036-01-15T00:00:00Z 2026-01-15T00:00:00ZControlling mTLS Mode with Policy Resources

Istio provides two resources to enforce mTLS. PeerAuthentication governs what the receiving sidecar accepts; DestinationRule governs what the sending sidecar presents.

apiVersion: security.istio.io/v1beta1kind: PeerAuthenticationmetadata: name: payments-strict namespace: paymentsspec: mtls: mode: STRICTapiVersion: networking.istio.io/v1beta1kind: DestinationRulemetadata: name: payment-processor namespace: paymentsspec: host: payment-processor.payments.svc.cluster.local trafficPolicy: tls: mode: ISTIO_MUTUALSTRICT mode rejects any plaintext connection at the receiving proxy. PERMISSIVE allows both mTLS and plaintext, which is useful during migration but should never remain in production.

Verifying Enforcement in Practice

A policy applied is not a policy enforced until you verify it. Use istioctl to check the negotiated TLS state between two workloads:

istioctl x check-inject -n paymentsistioctl authn tls-check payment-processor.payments.svc.cluster.localIn Envoy access logs, a successfully mutually authenticated connection carries %DOWNSTREAM_PEER_CERT_V_START% and the SPIFFE URI in the %DOWNSTREAM_PEER_URI_SAN% field. If either field is empty, the connection was not mutually authenticated regardless of what the policy resource claims.

💡 Pro Tip: Run

istioctl proxy-config log deploy/payment-processor --level rbac:debugtemporarily to see authorization policy evaluation per-request. This is the fastest path to diagnosing “connection accepted but request denied” scenarios that occur when mTLS is working but RBAC AuthorizationPolicy is blocking the call.

With the data plane securing traffic automatically, the next question is whether that security — and the entire sidecar model — justifies its operational weight. The final section examines where sidecar proxies create friction at scale and how Istio’s ambient mesh mode restructures the architecture to reduce that cost.

Operational Costs and the Ambient Mesh Alternative

The sidecar model delivers powerful cross-cutting capabilities, but it does so by adding a process to every pod in your cluster. Before committing to that trade-off at scale, you need an honest accounting of what that process actually costs.

Memory and CPU Overhead

Each Envoy sidecar carries a baseline memory footprint of roughly 50–80 MB at idle, climbing to 150 MB or more under load in clusters with large xDS configurations—many routes, clusters, and listeners. On a node running 30 pods, that idle baseline alone consumes 1.5–2.4 GB of RAM purely for proxy processes. This directly compresses bin-packing density: workloads that otherwise fit comfortably on a node get displaced, increasing your node count and cloud spend.

CPU overhead is more workload-dependent. Healthy proxies at moderate traffic sit below 10m cores at idle, but spikes during certificate rotation or large xDS config pushes are measurable. Set resources.requests and resources.limits on the istio-proxy container explicitly—leaving them unbounded is a common cause of noisy-neighbor CPU throttling in multi-tenant clusters.

Latency: Real but Bounded

The sidecar hop adds latency in two places: the iptables redirect from the application port to Envoy’s inbound listener, and the corresponding redirect on the outbound side. In practice, the added P50 latency for a pod-to-pod call is typically 0.2–1 ms. At P99, particularly under connection churn or cert rotation, it can reach 5–10 ms.

For most synchronous service calls, that budget is acceptable. For latency-sensitive paths—financial transaction engines, real-time bidding, or high-frequency control loops—profile first. Use istio-proxy access logs with %DURATION% fields to isolate proxy processing time from application time before concluding the mesh is the bottleneck.

Cold Start and Short-Lived Workloads

Sidecar injection creates a real problem for serverless and short-lived batch workloads. The istio-proxy container must reach READY before the application container starts receiving traffic, and Envoy’s initialization—fetching certificates, syncing xDS state—takes 1–3 seconds in a healthy cluster. For a job that runs for 10 seconds, that initialization overhead is non-trivial. Exclude these workloads from injection with the sidecar.istio.io/inject: "false" annotation and handle their security posture through network policy instead.

Ambient Mesh: A Different Trade-Off

Istio’s Ambient mode removes the per-pod sidecar entirely. A per-node ztunnel process handles L4 mTLS and policy, while optional per-namespace waypoint proxies handle L7 features for workloads that need them. Memory overhead drops from per-pod to per-node, and cold start penalties disappear for short-lived workloads.

The trade-off is operational maturity: Ambient reached production-ready status with Istio 1.22, but the operational tooling, debugging workflows, and community knowledge base are considerably thinner than the sidecar model’s.

💡 Pro Tip: Use sidecar injection as your default for long-running services requiring mTLS, observability, and L7 policy. Exempt batch jobs and short-lived workloads via annotation, and evaluate Ambient seriously if your cluster exceeds 500 pods or memory pressure is a recurring capacity concern.

The control plane that drives all of this—whether sidecar or ztunnel—relies on a certificate infrastructure that must rotate continuously without dropping connections, which is where SPIFFE identity and Istio’s certificate management machinery become critical to understand.

Key Takeaways

- Audit your current sidecar injection rules with

kubectl get mutatingwebhookconfigurationsand verify that only intended namespaces have theistio-injection: enabledlabel. - Use

istioctl proxy-config routes <pod> --name 80to confirm that your VirtualService and DestinationRule resources are translating into the Envoy routing config you expect before debugging at the application layer. - Run

istioctl x describe pod <pod>to get a plain-English summary of what mTLS mode, policies, and routes apply to any given workload—make this part of your service onboarding checklist. - Before adopting a service mesh, benchmark Envoy’s idle memory footprint against your smallest pod’s memory limit; if the sidecar exceeds 20% of the app container’s allocation, factor that into capacity planning or evaluate Ambient Mesh.