Securing EKS Workloads: IAM Roles for Service Accounts and Pod-Level Authentication

You’ve moved to EKS, but your pods still use overly permissive node IAM roles, violating least privilege. When one compromised container can access your entire S3 infrastructure, it’s time to implement IAM Roles for Service Accounts (IRSA) for pod-level authentication.

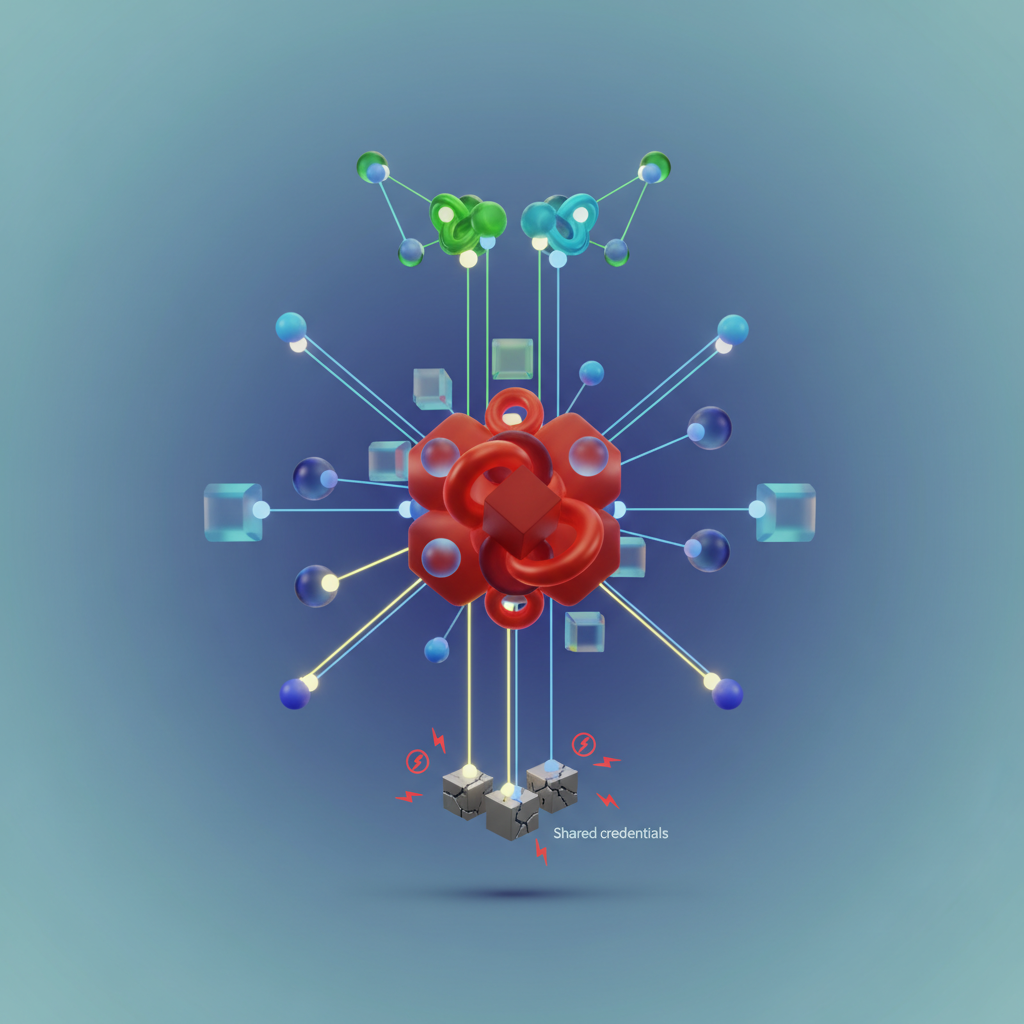

The traditional approach treats your EKS worker nodes like EC2 instances—attach an IAM role to the node, and every pod running on that node inherits those permissions. This works until you realize that your frontend service, backend API, and batch processing jobs are all sharing the same credentials. Your frontend needs read-only access to a single S3 bucket for static assets. Your backend needs DynamoDB permissions for user data. Your batch jobs need full access to multiple S3 buckets and SQS queues. Under the node role model, all three workloads get the union of these permissions.

The security implications are immediate. A single vulnerable container—whether through a supply chain attack, misconfigured application, or exploited dependency—can now access every AWS resource that any pod on that node requires. Your audit logs show the node’s role making requests, but you can’t trace which specific pod or service account initiated the action. When your security team asks “which application accessed this S3 bucket at 3 AM?”, you’re stuck parsing application logs instead of checking CloudTrail.

This isn’t theoretical. As cluster workloads grow and teams deploy more microservices, the node role becomes a permissions dumping ground. Each new service adds its requirements to the shared role, and the blast radius expands with every deployment.

The solution requires breaking the shared credential model entirely. Instead of treating pods as second-class citizens inheriting node permissions, each pod needs its own IAM identity with precisely scoped permissions.

The Node Role Problem: Why Shared Credentials Don’t Scale

Unless access to the instance metadata service (IMDS) is restricted, every pod running on an EKS worker node can obtain the IAM credentials of that node's instance profile. This inheritance model, carried over from traditional EC2 deployments, creates a fundamental security problem: all workloads share the same AWS credentials regardless of their actual permission requirements.

The Blast Radius of Shared Credentials

When you attach an IAM role to your EKS nodes, every container on those nodes gains access to the same set of AWS APIs. A frontend application that only needs to read from S3 receives the same permissions as a backend service that writes to DynamoDB and publishes to SNS. A monitoring sidecar that never touches AWS services still inherits full node-level access to RDS, Secrets Manager, and KMS.

This violation of least privilege creates an outsized blast radius. A single compromised pod—whether through a dependency vulnerability, application bug, or supply chain attack—can leverage the node role to access any AWS resource the node has permissions for. An attacker who gains code execution in your logging agent can exfiltrate production database credentials from Secrets Manager. A vulnerable third-party monitoring tool becomes a gateway to your entire S3 data lake.

Audit and Compliance Gaps

Node-level credentials obscure the audit trail. CloudTrail logs show API calls attributed to the node role, but identifying which specific pod made the request requires correlation with Kubernetes audit logs, pod lifecycle events, and IP address mapping. This forensic complexity makes incident response slower and compliance reporting unreliable.

When auditors ask “which application accessed customer PII in S3 last quarter,” you cannot answer definitively without extensive log analysis. When a security incident occurs, determining the scope of exposure means assuming every pod on affected nodes had access to every resource the node role could reach.

The IRSA Alternative

IAM Roles for Service Accounts (IRSA) eliminates shared credentials by giving each workload its own IAM identity with short-lived credentials. Using OpenID Connect (OIDC) federation, EKS maps Kubernetes service accounts to IAM roles. Every pod that runs under a given service account receives that account's role and nothing broader.

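With IRSA, your frontend pods assume a role with read-only S3 access, while backend pods use a separate role scoped to DynamoDB and SNS. Monitoring agents deployed under their own service account (for example, as a separate DaemonSet) can run with no AWS permissions at all, provided pod access to the node's instance metadata service is blocked. Containers in the same pod share its service account, so a sidecar receives the same role as the application it runs beside. Each pod receives short-lived credentials specific to its workload requirements. CloudTrail logs attribute API calls to distinct IAM roles that map directly to application teams and services.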

The shift from node-level to pod-level IAM represents a fundamental architectural change in how EKS workloads authenticate to AWS services. Understanding how IRSA implements this federation through OIDC is critical to deploying it effectively.

IRSA Architecture: How OIDC Connects Kubernetes to IAM

IRSA (IAM Roles for Service Accounts) bridges Kubernetes identity and AWS IAM through OpenID Connect (OIDC) federation. Instead of distributing long-lived AWS credentials, EKS uses signed Kubernetes service account tokens as identity tokens. AWS Security Token Service (STS) validates these tokens and exchanges them for temporary IAM credentials.

The OIDC Provider Foundation

When you create an EKS cluster, AWS provisions an OIDC issuer endpoint unique to that cluster. Once you register it as an IAM OIDC identity provider (covered in the setup section below), this issuer becomes a trusted identity source in AWS IAM, similar to how enterprise single sign-on systems authenticate users. The issuer URL follows the pattern https://oidc.eks.<region>.amazonaws.com/id/<cluster-id>, for example https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE. The final path segment uniquely identifies your cluster.
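Several configuration steps below need two strings derived from this issuer URL: the ARN of the IAM OIDC provider, and the prefix that trust policies use for condition keys. The following is a minimal sketch, assuming the standard aws partition and the URL shape shown above. oidc_identifiers is a hypothetical helper, not an AWS SDK function.

```python
from typing import Tuple
from urllib.parse import urlparse


def oidc_identifiers(issuer_url: str, account_id: str) -> Tuple[str, str]:
    """Return (provider_arn, condition_key_prefix) for an EKS OIDC issuer URL.

    Hypothetical helper: assumes the standard "aws" partition.
    """
    parsed = urlparse(issuer_url)
    if parsed.scheme != "https" or not parsed.netloc:
        raise ValueError(f"expected an https issuer URL, got {issuer_url!r}")
    # Trust policies reference the issuer without its scheme, e.g.
    # "oidc.eks.us-east-1.amazonaws.com/id/<cluster-id>:sub".
    prefix = parsed.netloc + parsed.path.rstrip("/")
    provider_arn = f"arn:aws:iam::{account_id}:oidc-provider/{prefix}"
    return provider_arn, prefix


arn, prefix = oidc_identifiers(
    "https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE",
    "123456789012",
)
print(arn)
print(f"{prefix}:sub")
```

In practice, the issuer URL comes from aws eks describe-cluster with --query "cluster.identity.oidc.issuer", and the account ID comes from aws sts get-caller-identity.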

AWS IAM trust policies reference this OIDC provider to validate service account tokens. When you create an IAM role for a specific service account, the trust policy specifies both the OIDC provider ARN and the exact service account name and namespace. This creates a binding between a Kubernetes identity (system:serviceaccount:prod-app:backend-sa) and an AWS IAM role with defined permissions.

Token Projection and Credential Injection

The EKS Pod Identity Webhook automatically intercepts pod creation requests that reference annotated service accounts. When it detects the eks.amazonaws.com/role-arn annotation, the webhook modifies the pod specification to mount a projected service account token volume at /var/run/secrets/eks.amazonaws.com/serviceaccount/token. This token contains cryptographically signed claims including the service account name, namespace, and audience.
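The projected token is an ordinary JSON Web Token, so its claims can be read without any AWS tooling. The sketch below decodes the payload without verifying the signature. That is fine for debugging, but it must never be used to make authorization decisions. The sample token is fabricated for illustration and carries a dummy signature. decode_jwt_claims is a hypothetical helper name.

```python
import base64
import json


def decode_jwt_claims(token: str) -> dict:
    """Decode a JWT payload WITHOUT verifying its signature (debugging only)."""
    try:
        _header, payload, _signature = token.split(".")
    except ValueError:
        raise ValueError("a JWT has exactly three dot-separated parts") from None
    padded = payload + "=" * (-len(payload) % 4)  # restore stripped base64 padding
    return json.loads(base64.urlsafe_b64decode(padded))


def _b64url(obj: dict) -> str:
    """Encode a dict as unpadded base64url JSON, as JWT segments are."""
    return base64.urlsafe_b64encode(json.dumps(obj).encode()).rstrip(b"=").decode()


# Fabricated token with the claim shapes IRSA relies on (not a real token).
sample = ".".join([
    _b64url({"alg": "RS256"}),
    _b64url({"aud": ["sts.amazonaws.com"],
             "sub": "system:serviceaccount:prod-app:backend-sa",
             "exp": 1700086400}),
    "dummy-signature",
])
claims = decode_jwt_claims(sample)
print(claims["sub"], claims["aud"])
```

Inside a running pod, pass the contents of the file named by AWS_WEB_IDENTITY_TOKEN_FILE instead of the sample.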

The webhook also injects environment variables (AWS_ROLE_ARN, AWS_WEB_IDENTITY_TOKEN_FILE) that the AWS SDK recognizes. When application code makes AWS API calls, the SDK reads these environment variables and automatically initiates the credential exchange flow.

STS AssumeRoleWithWebIdentity Flow

The exchange happens when the AWS SDK first needs credentials (typically on the first API call) and finds the web identity token file. The SDK calls sts:AssumeRoleWithWebIdentity, presenting the service account token to AWS STS. STS then runs several checks:

- It validates the token signature against the public keys published by the cluster's OIDC issuer.
- It verifies that the token hasn't expired.
- It checks that the audience claim matches a client ID registered on the IAM OIDC provider.
- It evaluates the role's trust policy conditions, such as the sub claim.
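The claim checks in that sequence can be sketched in a few lines. This illustrates the logic only and assumes the token has already been decoded. It deliberately omits signature verification against the issuer's public keys, which STS performs. check_irsa_claims is a hypothetical name.

```python
import time
from typing import List, Optional


def check_irsa_claims(claims: dict, expected_sub: str,
                      expected_aud: str = "sts.amazonaws.com",
                      now: Optional[float] = None) -> List[str]:
    """Return the reasons a decoded token would be rejected (empty list = passes).

    Illustrative only: signature verification against the issuer's public
    keys, which STS performs first, is deliberately omitted.
    """
    now = time.time() if now is None else now
    problems = []
    if claims.get("exp", 0) <= now:
        problems.append("token has expired")
    aud = claims.get("aud", [])
    audiences = [aud] if isinstance(aud, str) else list(aud)
    if expected_aud not in audiences:
        problems.append(f"audience {audiences} does not include {expected_aud}")
    if claims.get("sub") != expected_sub:
        problems.append(f"subject {claims.get('sub')!r} is not {expected_sub!r}")
    return problems


claims = {"aud": ["sts.amazonaws.com"],
          "sub": "system:serviceaccount:prod-app:backend-sa",
          "exp": 2_000_000_000}
print(check_irsa_claims(claims, "system:serviceaccount:prod-app:backend-sa",
                        now=1_700_000_000))  # []
print(check_irsa_claims(claims, "system:serviceaccount:prod-app:backend-sa",
                        now=2_100_000_000))  # ['token has expired']
```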

If validation succeeds, STS issues temporary credentials (access key, secret key, session token) valid for one hour by default. The SDK caches these credentials and automatically refreshes them before expiration by repeating the token exchange. This creates a seamless authentication experience where applications gain IAM permissions without managing credentials.
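The cache-and-refresh behavior can be modeled as a small wrapper around any credential fetcher. This is a simplified sketch, not any SDK's implementation. RefreshingCredentials and the 15-minute refresh window are illustrative assumptions (each SDK chooses its own window). The fake fetcher stands in for a real AssumeRoleWithWebIdentity call.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Credentials:
    access_key: str
    secret_key: str
    session_token: str
    expiration: float  # Unix timestamp


class RefreshingCredentials:
    """Cache credentials and re-fetch them once they enter the refresh window."""

    def __init__(self, fetch: Callable[[], Credentials],
                 refresh_window: float = 900.0,
                 clock: Callable[[], float] = time.time):
        self._fetch = fetch
        self._refresh_window = refresh_window
        self._clock = clock
        self._cached: Optional[Credentials] = None

    def get(self) -> Credentials:
        now = self._clock()
        if self._cached is None or self._cached.expiration - now <= self._refresh_window:
            self._cached = self._fetch()  # e.g. another token exchange with STS
        return self._cached


# Demo with a fake clock and a fetcher that records when it was called.
fake_now = [0.0]
fetch_times = []


def fake_fetch() -> Credentials:
    fetch_times.append(fake_now[0])
    return Credentials("AKIAEXAMPLE", "secret", "token", fake_now[0] + 3600)


creds = RefreshingCredentials(fake_fetch, clock=lambda: fake_now[0])
creds.get()
creds.get()           # served from the cache
fake_now[0] = 2800.0  # 800 s left, inside the 900 s refresh window
creds.get()           # triggers a refresh
print(fetch_times)    # [0.0, 2800.0]
```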

With the architectural foundation established, the next section demonstrates the practical configuration steps for enabling IRSA in your EKS cluster.

Setting Up IRSA: OIDC Provider and IAM Role Configuration

With an understanding of how IRSA leverages OIDC to bridge Kubernetes service accounts with IAM roles, the next step is configuring the infrastructure. This involves creating an OIDC identity provider for your EKS cluster and crafting IAM roles with trust policies that validate pod identities.

Creating the OIDC Identity Provider

Every EKS cluster comes with an OIDC issuer URL, but you must explicitly create an IAM OIDC identity provider to establish trust between EKS and IAM. The OIDC provider acts as the trusted entity that vouches for the authenticity of service account tokens.

Using eksctl, the process is straightforward:

```bash
# Associate the OIDC provider with your cluster
eksctl utils associate-iam-oidc-provider \
  --cluster my-production-cluster \
  --region us-east-1 \
  --approve

# Verify the OIDC provider was created
aws iam list-open-id-connect-providers | \
  grep $(aws eks describe-cluster \
    --name my-production-cluster \
    --region us-east-1 \
    --query "cluster.identity.oidc.issuer" \
    --output text | cut -d '/' -f 3-5)
```

For Terraform users, the same configuration looks like this:

```hcl
data "tls_certificate" "cluster" {
  url = aws_eks_cluster.main.identity[0].oidc[0].issuer
}

resource "aws_iam_openid_connect_provider" "cluster" {
  client_id_list  = ["sts.amazonaws.com"]
  thumbprint_list = [data.tls_certificate.cluster.certificates[0].sha1_fingerprint]
  url             = aws_eks_cluster.main.identity[0].oidc[0].issuer

  tags = {
    Name = "${aws_eks_cluster.main.name}-oidc-provider"
  }
}
```

The thumbprint pins the TLS certificate chain of the issuer endpoint, which is where IAM retrieves the cluster's public signing keys. Tokens are then verified against those keys. Together, these checks stop an attacker from passing off tokens signed by a rogue identity provider. The client_id_list specifies sts.amazonaws.com as the audience, which must match the aud claim in the service account token presented during authentication.

Crafting the IAM Trust Policy

The IAM role’s trust policy is where IRSA’s security model truly shines. Unlike traditional EC2 instance profiles that grant blanket access to all pods on a node, IRSA trust policies specify exactly which service account in which namespace can assume the role.

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::123456789012:oidc-provider/oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:sub": "system:serviceaccount:data-pipeline:s3-processor",
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:aud": "sts.amazonaws.com"
        }
      }
    }
  ]
}
```

The Condition block enforces two critical validations. The sub claim pins the exact namespace and service account (data-pipeline:s3-processor). The aud claim confirms the token was issued for AWS STS rather than for another audience. Together, they ensure that a token minted for another purpose cannot be replayed here, and that a compromised pod in a different namespace cannot assume the role.
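Hand-editing these documents is where single-character mistakes creep in, so generating them is often safer. The following is a minimal sketch, assuming the provider ARN format from the setup above. irsa_trust_policy is a hypothetical helper.

```python
import json


def irsa_trust_policy(provider_arn: str, namespace: str, service_account: str) -> dict:
    """Build a trust policy that lets exactly one service account assume a role."""
    _, sep, issuer = provider_arn.partition(":oidc-provider/")
    if not sep:
        raise ValueError(f"not an OIDC provider ARN: {provider_arn!r}")
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Federated": provider_arn},
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {"StringEquals": {
                # Pin the exact namespace and service account...
                f"{issuer}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                # ...and require a token minted for STS.
                f"{issuer}:aud": "sts.amazonaws.com",
            }},
        }],
    }


policy = irsa_trust_policy(
    "arn:aws:iam::123456789012:oidc-provider/"
    "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE",
    "data-pipeline",
    "s3-processor",
)
print(json.dumps(policy, indent=2))
```

Redirect the output to a file such as trust.json. Then pass it to aws iam create-role with --assume-role-policy-document file://trust.json.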

For multi-tenant environments or microservice architectures, you can extend the trust policy to allow multiple service accounts by using StringLike conditions with wildcards, though this broadens your security perimeter:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::123456789012:oidc-provider/oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringLike": {
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:sub": "system:serviceaccount:data-pipeline:*"
        },
        "StringEquals": {
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:aud": "sts.amazonaws.com"
        }
      }
    }
  ]
}
```

This pattern grants access to any service account within the data-pipeline namespace. It is useful for shared tooling but requires careful namespace isolation.
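It helps to see concretely what the wildcard admits. IAM's StringLike treats * as any run of characters and ? as exactly one character, and matching is case-sensitive. The sketch below approximates that with a regular expression. iam_string_like is a hypothetical helper, not an AWS API.

```python
import re


def iam_string_like(pattern: str, value: str) -> bool:
    """Approximate IAM StringLike: '*' = any run of characters, '?' = exactly one."""
    regex = "".join(
        ".*" if ch == "*" else "." if ch == "?" else re.escape(ch)
        for ch in pattern
    )
    return re.fullmatch(regex, value, flags=re.DOTALL) is not None


pattern = "system:serviceaccount:data-pipeline:*"
for sub in ["system:serviceaccount:data-pipeline:s3-processor",
            "system:serviceaccount:data-pipeline:debug-shell",
            "system:serviceaccount:frontend:web"]:
    print(sub, iam_string_like(pattern, sub))
```

Note that debug-shell matches too. Anyone allowed to create service accounts in data-pipeline can obtain a token that satisfies this policy, so the pattern is only as safe as the RBAC rules for that namespace.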

Automated Role Creation with eksctl

The eksctl tool simplifies the entire workflow by creating both the IAM role and the Kubernetes service account in a single operation:

```bash
eksctl create iamserviceaccount \
  --name s3-processor \
  --namespace data-pipeline \
  --cluster my-production-cluster \
  --region us-east-1 \
  --attach-policy-arn arn:aws:iam::aws:policy/AmazonS3ReadOnlyAccess \
  --approve \
  --override-existing-serviceaccounts
```

This command generates the trust policy automatically, creates the IAM role with the specified managed policy, and annotates the Kubernetes service account with the role ARN. These are all prerequisites for IRSA to function. The --override-existing-serviceaccounts flag is particularly useful in CI/CD pipelines where you need idempotent infrastructure provisioning.

For production environments requiring custom permission boundaries or inline policies, you can attach additional policies after creation or use Terraform for more granular control over role attributes, session duration limits, and permissions boundaries.

💡 Pro Tip: Always retrieve your actual OIDC provider URL with the following command to avoid copy-paste errors in trust policies. A single-character mismatch renders the entire IRSA configuration non-functional.

```bash
aws eks describe-cluster --name my-production-cluster --query "cluster.identity.oidc.issuer" --output text
```
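The same check can be automated before a deployment. The following is a minimal sketch. It assumes an IRSA-only trust policy in which every condition key is scoped to the issuer. trust_policy_mismatches is hypothetical. Feed it the issuer from the command above and the document returned by aws iam get-role with --query Role.AssumeRolePolicyDocument.

```python
from urllib.parse import urlparse


def trust_policy_mismatches(policy: dict, issuer_url: str) -> list:
    """List trust-policy entries that do not reference the cluster's issuer."""
    parsed = urlparse(issuer_url)
    issuer = parsed.netloc + parsed.path.rstrip("/")
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # IAM also accepts a single statement object
        statements = [statements]
    problems = []
    for i, stmt in enumerate(statements):
        federated = stmt.get("Principal", {}).get("Federated", "")
        if not federated.endswith(":oidc-provider/" + issuer):
            problems.append(f"statement {i}: Federated principal {federated!r}")
        for operator, conditions in stmt.get("Condition", {}).items():
            for key in conditions:
                if not key.startswith(issuer + ":"):
                    problems.append(f"statement {i}: {operator} key {key!r}")
    return problems


issuer_url = "https://oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE"
typo_policy = {"Statement": [{
    "Principal": {"Federated": "arn:aws:iam::123456789012:oidc-provider/"
                               "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE"},
    "Condition": {"StringEquals": {
        # One wrong character in the cluster ID ("72" instead of "71")
        "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B72EXAMPLE:sub":
            "system:serviceaccount:data-pipeline:s3-processor",
    }},
}]}
print(trust_policy_mismatches(typo_policy, issuer_url))
```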

With the OIDC provider and IAM roles configured, the infrastructure foundation is complete. The next step is annotating service accounts and deploying workloads that leverage these pod-level credentials.

Implementing IRSA in Applications: Service Accounts and Annotations

With the OIDC provider and IAM role configured, the next step is integrating IRSA into your Kubernetes workloads. This process involves creating service accounts annotated with IAM role ARNs and configuring pods to use these service accounts for AWS API authentication.

Creating IRSA-Enabled Service Accounts

A Kubernetes service account becomes IRSA-enabled through a single annotation that links it to your IAM role. Create a service account manifest that specifies the eks.amazonaws.com/role-arn annotation:

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: s3-reader-sa
  namespace: data-pipeline
  annotations:
    eks.amazonaws.com/role-arn: arn:aws:iam::123456789012:role/s3-reader-role
    eks.amazonaws.com/sts-regional-endpoints: "true"
```

The sts-regional-endpoints annotation tells the webhook to set AWS_STS_REGIONAL_ENDPOINTS=regional. The SDK then calls the STS endpoint in the pod's own region instead of the global endpoint, which reduces latency and removes a cross-region dependency. Apply this manifest to your cluster, and the service account is ready to provide IAM credentials to pods. The webhook mutates pods only when they are created, so restart any existing pods that use this service account to pick up the injection.

Configuring Pods to Use IRSA

Reference the service account in your pod or deployment spec using the serviceAccountName field. The EKS pod identity webhook automatically injects the necessary environment variables and volume mounts:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: data-processor
  namespace: data-pipeline
spec:
  replicas: 3
  selector:
    matchLabels:
      app: data-processor
  template:
    metadata:
      labels:
        app: data-processor
    spec:
      serviceAccountName: s3-reader-sa
      containers:
        - name: processor
          image: mycompany/data-processor:v1.2.3
          env:
            - name: BUCKET_NAME
              value: analytics-raw-data
          resources:
            requests:
              memory: "256Mi"
              cpu: "250m"
            limits:
              memory: "512Mi"
              cpu: "500m"
```

At pod creation, the webhook injects the two variables IRSA depends on: AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE. On EKS it typically also sets AWS_REGION and AWS_DEFAULT_REGION. These variables let AWS SDKs discover and use IRSA credentials without code changes.
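A startup self-check makes missing injection obvious immediately, instead of surfacing later as a credentials error. The following is a minimal sketch. irsa_injection_problems is a hypothetical helper. It only checks the two variables named above and the token file, not whether the role can actually be assumed.

```python
import os
from typing import Callable, List, Mapping

REQUIRED_VARS = ("AWS_ROLE_ARN", "AWS_WEB_IDENTITY_TOKEN_FILE")


def irsa_injection_problems(environ: Mapping[str, str],
                            file_exists: Callable[[str], bool] = os.path.isfile) -> List[str]:
    """Report missing IRSA environment variables or a missing token file."""
    problems = [f"{name} is not set" for name in REQUIRED_VARS if not environ.get(name)]
    token_file = environ.get("AWS_WEB_IDENTITY_TOKEN_FILE")
    if token_file and not file_exists(token_file):
        problems.append(f"token file {token_file} does not exist")
    return problems


if __name__ == "__main__":
    issues = irsa_injection_problems(os.environ)
    print("IRSA injection looks fine" if not issues else "\n".join(issues))
```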

Verifying Credential Injection

Test IRSA integration by executing into a running pod and inspecting the injected credentials. The webhook mounts a projected service account token at /var/run/secrets/eks.amazonaws.com/serviceaccount/token:

```bash
kubectl exec -n data-pipeline deployment/data-processor -- sh -c \
  'echo "Role ARN: $AWS_ROLE_ARN" && \
   echo "Token file: $AWS_WEB_IDENTITY_TOKEN_FILE" && \
   ls -la /var/run/secrets/eks.amazonaws.com/serviceaccount/'
```

The output confirms that the role ARN matches your IAM role and the token file exists. To validate actual AWS permissions, use the AWS CLI or SDK within the container:

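```bash
kubectl exec -n data-pipeline deployment/data-processor -- \
  aws sts get-caller-identity
```

This command requires the AWS CLI in the container image. It returns the assumed-role ARN, arn:aws:sts::123456789012:assumed-role/s3-reader-role/<session-name>. The SDK chooses the session name unless AWS_ROLE_SESSION_NAME is set in the pod spec; the AWS CLI, for example, generates one such as botocore-session-<timestamp>. In CloudTrail, the workload is therefore identified by the role name, which the trust policy binds to this service account.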

Troubleshooting Common Issues

The most frequent IRSA problem is trust policy misconfiguration. If pods fail to assume the role, verify that the IAM role’s trust policy includes the correct OIDC provider ARN and service account condition. Use kubectl describe pod to check for webhook injection failures—missing environment variables indicate the service account annotation is incorrect or the webhook is not running.

Permission errors after successful role assumption point to insufficient IAM policy permissions. Review CloudTrail events filtered by the assumed role session name to identify denied API calls. The IAM policy attached to your role must explicitly grant permissions for the AWS services your application accesses.

Token expiration issues rarely occur because the kubelet automatically rotates the projected token before expiration. However, long-running processes that cache credentials for extended periods may need to implement credential refresh logic. Modern AWS SDKs handle this automatically, but custom implementations must watch for credential expiration errors and re-assume the role.

With service accounts configured and credential injection verified, your applications can now securely access AWS services using pod-specific IAM permissions. The next section explores concrete integration patterns for commonly used services like S3, DynamoDB, and Secrets Manager, demonstrating how IRSA enables least-privilege access across different workload types.

Multi-Service Integration Patterns: S3, DynamoDB, and Secrets Manager

While the previous examples demonstrated single-service access, production applications typically interact with multiple AWS services simultaneously. A web application might read secrets from Secrets Manager at startup, query DynamoDB for user data, and write logs to S3—all requiring distinct IAM permissions. IRSA enables you to scope these permissions precisely to individual pods without bundling them into monolithic node roles.

Real-World Scenario: Data Processing Pipeline

Consider a data processing application that reads CSV files from S3, performs transformations, stores results in DynamoDB, and retrieves database credentials from Secrets Manager. Each service requires different permission boundaries:

```python
import boto3
from botocore.exceptions import ClientError


class DataProcessor:
    def __init__(self):
        # Create clients once; the SDK resolves IRSA credentials from the
        # web identity token file and refreshes them automatically.
        self.s3 = boto3.client('s3', region_name='us-east-1')
        self.dynamodb = boto3.resource('dynamodb', region_name='us-east-1')
        self.secrets = boto3.client('secretsmanager', region_name='us-east-1')

    def process_batch(self, bucket, key):
        # Read input data from S3
        try:
            response = self.s3.get_object(Bucket=bucket, Key=key)
            data = response['Body'].read().decode('utf-8')
        except ClientError as e:
            # Not every ClientError is AccessDenied (e.g. NoSuchKey), so report the code
            code = e.response['Error']['Code']
            raise RuntimeError(f"Failed to read s3://{bucket}/{key}: {code}") from e

        # Retrieve database credentials (used by the transformation step, omitted here)
        db_secret = self.secrets.get_secret_value(
            SecretId='prod/dataprocessor/credentials'
        )

        # Write results to DynamoDB
        table = self.dynamodb.Table('processing-results')
        table.put_item(Item={
            'job_id': key,
            'status': 'completed',
            'record_count': len(data.splitlines()),
        })
```

The corresponding IAM policy combines permissions for all three services with tight resource constraints:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:ListBucket"],
      "Resource": [
        "arn:aws:s3:::data-input-bucket",
        "arn:aws:s3:::data-input-bucket/*"
      ]
    },
    {
      "Effect": "Allow",
      "Action": ["dynamodb:PutItem", "dynamodb:GetItem"],
      "Resource": "arn:aws:dynamodb:us-east-1:123456789012:table/processing-results"
    },
    {
      "Effect": "Allow",
      "Action": "secretsmanager:GetSecretValue",
      "Resource": "arn:aws:secretsmanager:us-east-1:123456789012:secret:prod/dataprocessor/*"
    }
  ]
}
```

💡 Pro Tip: Use resource-level constraints in IAM policies rather than broad service permissions. Limit S3 access to specific bucket paths and DynamoDB access to individual tables to minimize blast radius if credentials are compromised.

Permission Scoping Strategies

Different workload types demand different permission granularity. Batch jobs processing sensitive data should receive read-only S3 access to specific prefixes, while web applications serving user requests might need broader read permissions but restricted write access. Organize your IRSA roles by workload function rather than team ownership—create separate roles for data ingestion, transformation, and export stages even if the same team manages all three.

For multi-tenant applications, consider using session tags to dynamically scope permissions based on the authenticated user or tenant. While IRSA itself doesn’t support session tags directly, you can combine it with STS AssumeRole calls from application code to further restrict access. For example, a shared analytics service could assume different roles based on which customer’s data is being processed, ensuring tenant isolation at the IAM level.
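The role-chaining step can be sketched as building the arguments for boto3's sts.assume_role call, which accepts RoleArn, RoleSessionName, Tags, and DurationSeconds. The tenant tag key, the role name, and tenant_assume_role_kwargs itself are hypothetical. Two further requirements apply. The target role's trust policy must allow sts:TagSession for the IRSA role, in addition to sts:AssumeRole. The target role's permissions policy can then reference ${aws:PrincipalTag/tenant}.

```python
import re


def tenant_assume_role_kwargs(role_arn: str, tenant_id: str, service: str) -> dict:
    """Build kwargs for sts.assume_role that tag the session with a tenant ID."""
    # Role session names allow at most 64 characters from [A-Za-z0-9_+=,.@-].
    session_name = re.sub(r"[^A-Za-z0-9_+=,.@-]", "-", f"{service}-{tenant_id}")[:64]
    return {
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "Tags": [{"Key": "tenant", "Value": tenant_id}],
        "DurationSeconds": 900,  # the shortest session STS allows
    }


kwargs = tenant_assume_role_kwargs(
    "arn:aws:iam::123456789012:role/tenant-data-access", "acme/eu", "analytics")
print(kwargs["RoleSessionName"])  # analytics-acme-eu
# With boto3 installed and IRSA credentials available:
#   creds = boto3.client("sts").assume_role(**kwargs)["Credentials"]
```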

Integration with External Secrets Operator

For secrets management, combining IRSA with External Secrets Operator (ESO) provides additional abstraction. ESO runs with its own IRSA role, fetching secrets from AWS Secrets Manager and populating Kubernetes Secrets automatically. This separates secret retrieval from application logic:

```yaml
apiVersion: external-secrets.io/v1beta1
kind: ExternalSecret
metadata:
  name: app-database-credentials
  namespace: production
spec:
  refreshInterval: 1h
  secretStoreRef:
    name: aws-secretsmanager
    kind: ClusterSecretStore
  target:
    name: db-credentials
  data:
    - secretKey: username
      remoteRef:
        key: prod/dataprocessor/credentials
        property: username
    - secretKey: password
      remoteRef:
        key: prod/dataprocessor/credentials
        property: password
```

The ESO ServiceAccount uses an IAM role limited to secretsmanager:GetSecretValue on specific secret paths. Application pods consume standard Kubernetes Secrets without making direct AWS API calls. This pattern reduces application complexity and centralizes secret rotation logic. When secrets rotate in AWS Secrets Manager, ESO updates the corresponding Kubernetes Secrets on its next refresh; here, refreshInterval makes that within one hour. Pods that read the Secret through environment variables only see new values after a restart. A controller such as Stakater Reloader can trigger that restart automatically.

The ClusterSecretStore referenced in the ExternalSecret defines the authentication mechanism. Create a dedicated IRSA role for ESO with minimal permissions—only GetSecretValue on secrets matching organizational naming conventions. This prevents application developers from accidentally granting their pods access to unrelated secrets while maintaining the security benefits of IRSA’s pod-level identity.

Credential Caching and Performance

AWS SDK clients cache the temporary credentials obtained through IRSA and refresh them some minutes before they expire. The exact window varies by SDK, and STS credentials last one hour by default. For high-throughput applications making thousands of requests per second, this caching keeps authentication off the request path. The projected service account token rotates independently. The kubelet rewrites the token file before it expires (the EKS webhook requests a 24-hour token by default), and SDKs read the file again on each credential refresh. No application code is needed to follow the rotation.

Monitor your application's STS usage in CloudTrail. If you observe elevated AssumeRoleWithWebIdentity call volumes, verify that SDK credential caching is functioning correctly. Some frameworks and libraries create new SDK sessions or clients per request rather than reusing them, bypassing the cache entirely; in boto3, for example, each new boto3.Session starts with an empty credential cache. Reusing long-lived clients, for example through a module-level singleton, ensures credentials are cached effectively across requests.
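A minimal sketch of that reuse pattern follows, assuming boto3.client as the eventual factory. make_client_getter is a hypothetical helper. The cache key is only the service name, so clients that need different regions or configs would need a richer key.

```python
import functools
from typing import Any, Callable


def make_client_getter(factory: Callable[[str], Any]) -> Callable[[str], Any]:
    """Wrap a client factory so each service's client is built once per process."""

    @functools.lru_cache(maxsize=None)
    def get_client(service_name: str) -> Any:
        return factory(service_name)

    return get_client


# Demo with a counting stand-in factory; in production, pass boto3.client.
created = []


def fake_factory(service_name: str) -> object:
    created.append(service_name)
    return object()


get_client = make_client_getter(fake_factory)
first = get_client("s3")
second = get_client("s3")
get_client("dynamodb")
print(first is second, created)  # True ['s3', 'dynamodb']
```

Low-level boto3 clients are generally thread-safe, so sharing one client per service across request threads is reasonable. boto3 resources and sessions are not thread-safe and should not be shared this way.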

For applications with intermittent AWS access patterns—such as cron jobs running hourly—credential caching provides minimal benefit. In these cases, optimize for startup latency by pre-warming SDK clients during pod initialization rather than deferring authentication to the first API call. This shifts potential token exchange errors to the startup phase where they’re easier to detect and debug.

In the next section, we’ll explore cross-account access scenarios where applications in one AWS account need to access resources in another, leveraging IRSA’s trust relationship capabilities for secure multi-account architectures.

Advanced Security: Cross-Account Access and Audit Logging

IRSA’s security benefits extend beyond single-account isolation. Organizations managing multi-account AWS environments can leverage IRSA for cross-account resource access while maintaining fine-grained audit trails and enforcing least-privilege policies through IAM conditions.

Cross-Account Access Configuration

When pods need to access resources in a different AWS account, configure the trust relationship in that account. An IAM role can only trust an OIDC provider registered in its own account. First, register your EKS cluster's issuer URL as an IAM OIDC identity provider in the target account (123456789012), using the same steps as in the source account (987654321098) where the cluster runs. Then create a role in the target account that trusts that provider:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Principal": {
        "Federated": "arn:aws:iam::123456789012:oidc-provider/oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE"
      },
      "Action": "sts:AssumeRoleWithWebIdentity",
      "Condition": {
        "StringEquals": {
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:sub": "system:serviceaccount:analytics:data-processor",
          "oidc.eks.us-east-1.amazonaws.com/id/EXAMPLED539D4633E53DE1B71EXAMPLE:aud": "sts.amazonaws.com"
        }
      }
    }
  ]
}
```

The sub condition binds this role to a specific service account (data-processor in the analytics namespace), preventing other pods from assuming this cross-account role. Annotate your service account with the target account's role ARN: eks.amazonaws.com/role-arn: arn:aws:iam::123456789012:role/CrossAccountDataAccess. An alternative is role chaining. The pod assumes a role in its own account, and that role then calls sts:AssumeRole on the target-account role.

This architecture enables secure multi-account patterns such as centralized logging (shipping logs to a security account’s S3 bucket), shared data lakes (analytics workloads accessing consolidated datasets), and environment segregation (development clusters accessing production read replicas). The OIDC trust relationship ensures that even with cross-account access, only explicitly authorized service accounts can assume roles—no cluster-wide permissions are granted.

Implementing Least Privilege with IAM Conditions

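IAM policy conditions and resource scoping enforce constraints beyond plain action lists. To restrict a service account's S3 access to its own prefix, scope object actions in the Resource element. Use the s3:prefix condition key only for s3:ListBucket, which is the action that evaluates it:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": ["s3:GetObject", "s3:PutObject"],
      "Resource": "arn:aws:s3:::shared-data-bucket/data-processor/*"
    },
    {
      "Effect": "Allow",
      "Action": "s3:ListBucket",
      "Resource": "arn:aws:s3:::shared-data-bucket",
      "Condition": {
        "StringLike": {
          "s3:prefix": "data-processor/*"
        }
      }
    }
  ]
}
```

IRSA does not attach session tags to the role session, so a policy variable such as ${aws:PrincipalTag/...} has no service-account tag to resolve. Each service account already maps to its own role, so the prefix is written directly into that role's policy. You can add network restrictions in two ways:

- aws:SourceIp, for traffic that reaches AWS through known NAT gateway addresses.
- aws:SourceVpc and aws:VpcSourceIp, for requests that arrive through VPC endpoints.

Time-based conditions with aws:CurrentTime compare against absolute timestamps. They suit one-off access windows rather than recurring schedules.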
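Each service account gets its own role, so these per-prefix policies are easy to generate. The following is a minimal sketch that pairs object actions scoped through the Resource ARN with a ListBucket statement constrained by s3:prefix. prefix_scoped_s3_policy is hypothetical. It uses the bucket from the example above and a data-processor prefix.

```python
import json


def prefix_scoped_s3_policy(bucket: str, prefix: str) -> dict:
    """Policy for one service account's role, limited to a single S3 prefix."""
    prefix = prefix.strip("/")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # Object-level actions are scoped by the object ARN itself.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            },
            {   # Listing is bucket-level; s3:prefix limits which prefixes may be listed.
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": f"{prefix}/*"}},
            },
        ],
    }


policy = prefix_scoped_s3_policy("shared-data-bucket", "data-processor/")
print(json.dumps(policy, indent=2))
```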

Additional condition keys provide defense-in-depth:

- aws:SecureTransport requires TLS for all API calls.
- aws:RequestedRegion keeps API calls within approved regions, a guard against moving data to regions you don't monitor.
- kms:EncryptionContext:<key> conditions bind decryption operations to specific workload identities.

Combining multiple conditions creates layered policies that adapt to your threat model. For example, you can allow database access only from specific network ranges and only within a defined maintenance window.
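The first two keys are commonly expressed as Deny statements appended to a workload's policy. The following is a minimal sketch with a hypothetical helper name. For global services such as IAM, aws:RequestedRegion reports us-east-1, so a region allow-list that omits us-east-1 can block those calls.

```python
import json
from typing import List


def guardrail_statements(allowed_regions: List[str]) -> list:
    """Deny statements that require TLS and keep calls inside approved regions."""
    return [
        {
            "Sid": "DenyInsecureTransport",
            "Effect": "Deny",
            "Action": "*",
            "Resource": "*",
            "Condition": {"Bool": {"aws:SecureTransport": "false"}},
        },
        {
            "Sid": "DenyUnapprovedRegions",
            "Effect": "Deny",
            "Action": "*",
            "Resource": "*",
            "Condition": {"StringNotEquals": {"aws:RequestedRegion": allowed_regions}},
        },
    ]


print(json.dumps({"Version": "2012-10-17",
                  "Statement": guardrail_statements(["us-east-1"])}, indent=2))
```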

Audit Logging with CloudTrail

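CloudTrail records the AWS API calls made with IRSA credentials. Management events are captured by default, but S3 object access and other data events must be enabled explicitly. Enable CloudTrail in both the source and target accounts. Each call is attributed to the assumed role and its role session name.

By default, the SDK chooses the session name (boto3, for example, generates one such as botocore-session-<timestamp>). The role therefore identifies the service account but not the individual pod. To trace calls to a specific pod, set AWS_ROLE_SESSION_NAME in the pod spec. The example below assumes a session name of the form eks-analytics-data-processor-1708012345, built from the namespace, service account, and a timestamp.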

Query CloudTrail logs to trace pod activity:

```json
{
  "eventName": "GetObject",
  "userIdentity": {
    "type": "AssumedRole",
    "principalId": "AROA1234567890EXAMPLE:eks-analytics-data-processor-1708012345",
    "arn": "arn:aws:sts::123456789012:assumed-role/CrossAccountDataAccess/eks-analytics-data-processor-1708012345"
  },
  "requestParameters": {
    "bucketName": "shared-data-bucket",
    "key": "data-processor/report.csv"
  }
}
```

The session name in principalId and in the assumed-role ARN ties each API call to the session that made it. With a per-pod session name, this identifies individual pods during forensic analysis. For analysis at scale:

- Integrate CloudTrail with Amazon Athena for SQL-based analysis of access patterns.
- Deliver events to CloudWatch Logs and query them with CloudWatch Logs Insights.
- Set up CloudWatch alarms on failed calls (errorCode: AccessDenied) to catch misconfigured IAM policies or potential unauthorized access attempts.

For compliance requirements, configure CloudTrail log file validation and encrypt trail logs using KMS keys with restricted access. This creates tamper-evident audit trails suitable for SOC 2 and PCI DSS audits.
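CloudTrail log files are JSON documents with a top-level Records array, so a quick attribution summary needs only the standard library. The following is a minimal sketch that groups events by role and session name, using the sample record above. calls_by_session is hypothetical. At scale, Athena does the same job in SQL.

```python
import json
from collections import Counter


def calls_by_session(records: list) -> Counter:
    """Count API calls per (role, session name, event) for assumed-role callers."""
    counts = Counter()
    for record in records:
        identity = record.get("userIdentity", {})
        if identity.get("type") != "AssumedRole":
            continue
        # ARN shape: arn:aws:sts::<account>:assumed-role/<role-name>/<session-name>
        resource = identity["arn"].split(":", 5)[5]
        _, role_name, session_name = resource.split("/", 2)
        counts[(role_name, session_name, record.get("eventName"))] += 1
    return counts


sample = json.loads("""{"Records": [{
  "eventName": "GetObject",
  "userIdentity": {
    "type": "AssumedRole",
    "arn": "arn:aws:sts::123456789012:assumed-role/CrossAccountDataAccess/eks-analytics-data-processor-1708012345"
  }
}]}""")
for key, count in calls_by_session(sample["Records"]).items():
    print(key, count)
```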

Credential Rotation and Expiration

IRSA involves two credentials that rotate independently:

- The projected service account token, which the kubelet refreshes before it expires (24 hours by default under the EKS webhook).
- The STS credentials exchanged for that token, which last one hour by default.

AWS SDKs refresh both transparently. Code that extracts credentials once and reuses them for longer than an hour, such as a long streaming operation, needs explicit handling. Monitor pod logs for ExpiredToken errors. They indicate stale cached credentials, misconfigured service account volumes, or a token file that is no longer being refreshed.

Applications performing streaming operations or maintaining persistent connections should let the SDK's credential provider supply credentials on each request, rather than copying them once. In Go, the default credential chain loaded by config.LoadDefaultConfig in SDK v2 already includes the web identity provider and caches and refreshes its credentials. In Python, rely on boto3's automatic credential chain resolution. For batch jobs that outlive the credential lifetime, design idempotent operations with checkpointing so that credential expiration triggers graceful retries rather than data loss. Test rotation behavior in staging by temporarily shortening the projected token lifetime, for example by setting the eks.amazonaws.com/token-expiration service account annotation to 600 seconds (the Kubernetes minimum). Then confirm that your application keeps working without dropped requests.
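For checkpointed batch work, the retry-after-refresh idea can be sketched generically. call_with_refresh and FakeAwsError are hypothetical stand-ins. The set of expired-credential error codes is an assumption; extend it for the services you call. The wrapper should only guard idempotent operations.

```python
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

# Error codes commonly returned for expired temporary credentials (assumed list).
EXPIRED_CODES = {"ExpiredToken", "ExpiredTokenException"}


def call_with_refresh(operation: Callable[[], T],
                      refresh: Callable[[], None],
                      error_code: Callable[[Exception], Optional[str]],
                      max_attempts: int = 2) -> T:
    """Run an idempotent operation, refreshing credentials if they expired."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as exc:
            if error_code(exc) not in EXPIRED_CODES or attempt == max_attempts:
                raise
            refresh()  # e.g. rebuild the client so it resolves fresh credentials
    raise RuntimeError("max_attempts must be at least 1")


class FakeAwsError(Exception):
    """Stand-in for an SDK error that carries an AWS error code."""

    def __init__(self, code: str):
        super().__init__(code)
        self.code = code


attempts = []
refreshed = []


def flaky_upload() -> str:
    attempts.append("try")
    if len(attempts) == 1:
        raise FakeAwsError("ExpiredToken")
    return "uploaded"


result = call_with_refresh(flaky_upload, lambda: refreshed.append(True),
                           error_code=lambda e: getattr(e, "code", None))
print(result, len(attempts), len(refreshed))  # uploaded 2 1
```

With boto3, a suitable extractor would be: lambda e: e.response["Error"]["Code"] if isinstance(e, ClientError) else None.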

With cross-account access and comprehensive audit logging configured, the next challenge is identifying and resolving common IRSA implementation issues in production environments.

Operations and Troubleshooting: Common IRSA Pitfalls

Production IRSA deployments surface issues that aren’t obvious during initial setup. Understanding these failure modes reduces mean time to resolution when authentication breaks.

Debugging ‘Unable to Locate Credentials’ Errors

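The most common IRSA failure presents as a missing-credentials error: Unable to locate credentials in boto3, or NoCredentialProviders: no valid providers in chain in the AWS SDK for Go v1. It means the SDK found no usable credential source. Usually the webhook never injected AWS_ROLE_ARN and AWS_WEB_IDENTITY_TOKEN_FILE, for one of three reasons:

- The pod does not set serviceAccountName.
- The service account lacks the role annotation.
- The pod was created before the annotation was added.

If pods can still reach the instance metadata service, the SDK may silently fall back to the node role instead. A trust policy that rejects the token surfaces differently, as an AccessDenied error from sts:AssumeRoleWithWebIdentity.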

Start by verifying the service account annotation exists: kubectl get sa my-service-account -o yaml should show eks.amazonaws.com/role-arn. Check that pods mount the projected service account token at /var/run/secrets/eks.amazonaws.com/serviceaccount/token. If the token file exists but authentication fails, inspect the IAM role’s trust policy—the OIDC provider URL must match your cluster’s issuer exactly, and the StringEquals condition must reference the correct namespace and service account name.

Enable SDK debug logging to see the full credential chain resolution. In the AWS SDK for JavaScript v3, pass a logger (for example, logger: console) in the client configuration. In boto3 (Python), call boto3.set_stream_logger(''). The logs reveal whether the SDK attempts web identity authentication and why it fails.
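boto3.set_stream_logger('') turns on debug output for every library in the process. When only credential resolution matters, a narrower option is to raise the level of the botocore.credentials logger. That module logs which credential providers it tries. The following is a minimal sketch using only the standard logging module. The logger name reflects botocore's module layout, and the helper name is hypothetical.

```python
import logging


def enable_credential_debug_logging() -> logging.Logger:
    """Print botocore's credential-resolution messages to stderr."""
    logger = logging.getLogger("botocore.credentials")
    logger.setLevel(logging.DEBUG)
    if not logger.handlers:  # avoid duplicate output if called twice
        handler = logging.StreamHandler()
        handler.setFormatter(logging.Formatter("%(asctime)s %(name)s %(message)s"))
        logger.addHandler(handler)
    return logger


log = enable_credential_debug_logging()
enable_credential_debug_logging()
```

Call it before creating the first boto3 client so that the initial credential lookup is captured.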

OIDC Thumbprint Staleness

EKS rotates the keys that sign service account tokens. IAM fetches the current public keys from the issuer's discovery endpoint, so key rotation alone should not require changes in IAM. The thumbprint stored on the IAM OIDC provider pins the TLS certificate chain of that endpoint instead. If the issuer's certificate chain changes while IAM still validates against a stale thumbprint, IRSA can fail for every workload at once. In that case, check the stored thumbprint:

```bash
aws iam get-open-id-connect-provider \
  --open-id-connect-provider-arn arn:aws:iam::123456789012:oidc-provider/oidc.eks.us-east-1.amazonaws.com/id/EXAMPLE
```

Compare the returned ThumbprintList with the current certificate of the issuer endpoint. Update stale thumbprints using aws iam update-open-id-connect-provider-thumbprint.
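The comparison itself is mostly a formatting problem. openssl x509 -fingerprint -sha1 prints colon-separated uppercase hex, while IAM stores 40 lowercase hex characters. The following is a minimal sketch with hypothetical helper names. IAM's guidance on obtaining an OIDC provider thumbprint describes which certificate in the issuer's chain to fingerprint; this code does not decide that.

```python
import hashlib


def normalize_thumbprint(value: str) -> str:
    """Normalize a SHA-1 fingerprint (e.g. openssl output) to IAM's format."""
    cleaned = value.split("=", 1)[-1].replace(":", "").strip().lower()
    if len(cleaned) != 40 or any(c not in "0123456789abcdef" for c in cleaned):
        raise ValueError(f"not a SHA-1 fingerprint: {value!r}")
    return cleaned


def sha1_thumbprint(der_certificate: bytes) -> str:
    """SHA-1 thumbprint of a DER-encoded certificate, in IAM's format."""
    return hashlib.sha1(der_certificate).hexdigest()


def thumbprint_is_stale(iam_thumbprints: list, current: str) -> bool:
    """True if none of the thumbprints stored in IAM matches the current one."""
    current = normalize_thumbprint(current)
    return all(normalize_thumbprint(t) != current for t in iam_thumbprints)


openssl_output = "SHA1 Fingerprint=AB:CD:EF:01:23:45:67:89:AB:CD:EF:01:23:45:67:89:AB:CD:EF:01"
print(normalize_thumbprint(openssl_output))
print(thumbprint_is_stale(["abcdef0123456789abcdef0123456789abcdef01"], openssl_output))  # False
```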

Token Expiration and Refresh

The projected token mounted by the EKS webhook has a 24-hour lifetime by default, and the STS credentials exchanged for it last one hour by default. The kubelet rewrites the token file before it expires. Applications that cache credentials will hit authentication failures if they don't follow this rotation. AWS SDKs handle it transparently through their web identity providers, for example WebIdentityTokenFileCredentialsProvider in the AWS SDK for Java 2.x. Custom credential implementations must re-read the token file whenever they request new credentials.

Monitoring IRSA Health

CloudTrail logs every AssumeRoleWithWebIdentity call, including failures. If your trail delivers to CloudWatch Logs, create a metric filter for events with eventName=AssumeRoleWithWebIdentity and errorCode=AccessDenied. Alert on sustained increases: they signal misconfigured trust policies or a deleted or misconfigured OIDC provider.

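Track AssumeRoleWithWebIdentity latency as a leading indicator of STS or OIDC issuer latency issues. Measure it client side, for example with application metrics around credential refresh, because CloudTrail does not record call duration.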

With operational visibility established, IRSA failures become quick to diagnose instead of surfacing as cluster-wide outages.

Key Takeaways

- Implement IRSA to eliminate shared node IAM roles and enforce pod-level least privilege access to AWS services

- Use OIDC federation and service account annotations to automatically inject scoped AWS credentials into pods

- Leverage CloudTrail with IRSA for detailed audit trails showing exactly which pods accessed which AWS resources

- Start with high-risk workloads (database access, secrets management) and incrementally migrate applications to IRSA