NATS vs Kafka: A Decision Framework for Cloud-Native Messaging at Scale

Your microservices are talking to each other through a message broker you picked two years ago, and the cracks are showing. Maybe it’s latency spikes during rolling deployments, when every restarted pod triggers another consumer group rebalance. Maybe it’s the operational tax (ZooKeeper dependencies, partition count tuning, consumer group coordination) that scales faster than your platform team’s capacity to manage it. Or maybe you’re running NATS and discovered that “fire and forget” has a very literal meaning when a subscriber goes offline during a critical payment flow.

Both systems are genuinely good. That’s what makes the decision hard.

The engineers who pick Kafka for everything end up paying the operational complexity tax on workloads that needed a lightweight pub/sub fabric. The engineers who pick NATS for everything eventually write bespoke durability layers to compensate for gaps in their event replay requirements. Neither outcome is a failure of the technology — it’s a mismatch between the system’s core assumptions and the workload’s actual demands.

The difference isn’t raw throughput or latency benchmarks. Those numbers shift with hardware, configuration, and the particular benchmark author’s incentives. The real difference is philosophical: Kafka is a durable commit log that happens to support message routing, and NATS is a routing fabric that optionally supports persistence. That inversion shapes every operational decision downstream — from how consumers recover after failure, to how you scale under bursty traffic, to what your on-call engineer faces at 2am.

Understanding where each system’s model breaks under real cloud-native workload patterns is where the decision framework actually starts.

Two Philosophies of Messaging

Before evaluating throughput numbers or deployment complexity, you need to understand the core philosophical disagreement between Kafka and NATS. These are not two implementations of the same idea. They reflect fundamentally different answers to the question: what is a message broker actually for?

Kafka: The Durable Commit Log

Kafka’s foundational abstraction is the distributed, append-only log. Every message written to a Kafka topic is persisted to disk and retained according to a configurable policy: time-based, size-based, unlimited, or log-compacted (which keeps the latest record for each key). Consumers do not receive messages passively; they actively pull from partitions and track their position using offsets. This means Kafka is, at its core, a shared and replayable record of events. The log is the truth, and any consumer can replay it, rewind it, or start from a specific point in history.
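To make the offset model concrete, here is a toy in-memory stand-in for a single partition. It is not Kafka’s client API: the Log type and its Append and Fetch methods are hypothetical names for this sketch, which only shows that records are appended once and that each consumer reads from its own offset.

```go
package main

import "fmt"

// Log is a toy stand-in for one partition: an append-only slice of records
// where each record's offset is simply its index.
type Log struct {
	records []string
}

// Append adds a record to the end of the log and returns its offset.
func (l *Log) Append(r string) int64 {
	l.records = append(l.records, r)
	return int64(len(l.records) - 1)
}

// Fetch returns up to limit records starting at offset. The log never tracks
// who has read what; each consumer keeps its own offset, so any reader can
// rewind or replay from any point that is still retained.
func (l *Log) Fetch(offset int64, limit int) []string {
	if offset < 0 || offset >= int64(len(l.records)) {
		return nil
	}
	end := offset + int64(limit)
	if end > int64(len(l.records)) {
		end = int64(len(l.records))
	}
	return l.records[offset:end]
}

func main() {
	var l Log
	for _, e := range []string{"created", "paid", "shipped"} {
		l.Append(e)
	}
	// Two independent consumers, each holding its own position in the log.
	billingOffset, auditOffset := int64(2), int64(0)
	fmt.Println(l.Fetch(billingOffset, 10)) // [shipped]
	fmt.Println(l.Fetch(auditOffset, 10))   // [created paid shipped]
}
```

The audit consumer replaying from offset 0 has no effect on the billing consumer, which is the property event sourcing and audit pipelines depend on.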

This design makes Kafka exceptionally well-suited for workloads where message history matters: event sourcing, audit trails, stream processing pipelines, and any system that needs to reconstruct state from a sequence of events. The trade-off is structural weight. Kafka requires ZooKeeper (or KRaft in modern deployments), relies on partition-level parallelism for scaling, and imposes a producer-to-broker-to-consumer latency floor that is measured in milliseconds even under ideal conditions.

NATS: The Routing Fabric

NATS starts from a different premise. Its native abstraction is a subject-based publish-subscribe fabric optimized for low-latency, high-throughput message routing. In its core mode, NATS operates at-most-once: a message is delivered to all current subscribers and then discarded. There is no broker-side persistence, no offset tracking, no concept of consumer groups in the Kafka sense. The broker’s job is to route, not to store.

JetStream, introduced as NATS’s persistence layer, adds at-least-once and exactly-once delivery semantics on top of this routing core. But JetStream is an optional capability layered onto NATS’s architecture—not the default operating mode and not the system’s primary design center.

Why This Split Matters More Than Benchmarks

The philosophical divergence has direct operational consequences that no benchmark can fully capture. A system built on Kafka’s commit-log model is resilient to slow consumers by design: messages wait for consumers to catch up, as long as they do so within the retention window. A NATS system without JetStream has no such guarantee; a subscriber that disconnects during a burst loses those messages, full stop.

This at-most-once versus at-least-once gap is not a configuration knob you can tune away. It reflects different assumptions about the contract between producer and consumer. In IoT telemetry, losing a heartbeat packet is acceptable. In a payment processing pipeline, it is not.

💡 Pro Tip: If your team is debating these systems based on raw throughput comparisons, redirect the conversation. Ask instead: what guarantees does your system need to make to downstream consumers when they are unavailable? The answer eliminates one of these tools immediately.

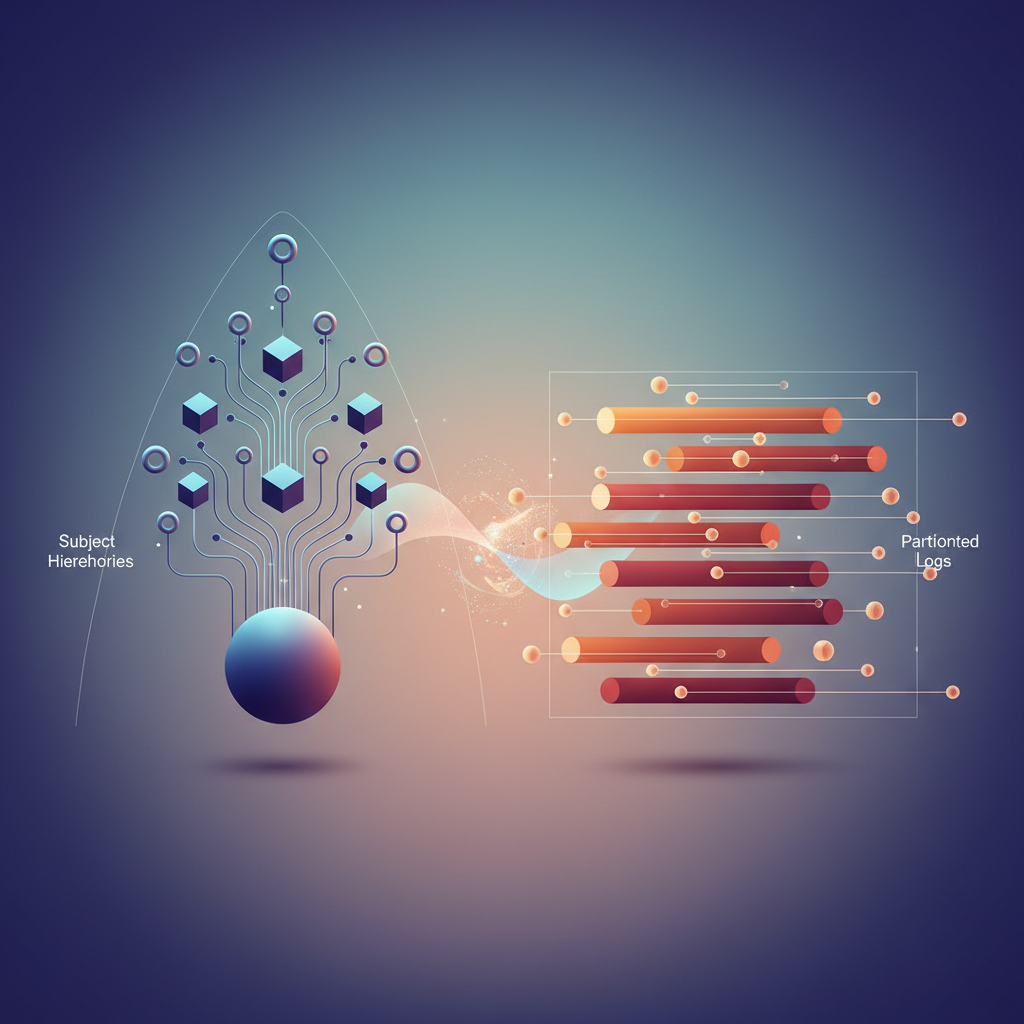

The delivery semantics question is the right starting point, but it only scratches the surface. The architectural mechanisms each system uses to implement those semantics—partitioned logs versus subject hierarchies—produce a cascade of second-order trade-offs that affect how you model data, scale consumers, and recover from failure. That is where the real engineering decisions live.

Architecture Deep-Dive: Subject Hierarchies vs Partitioned Logs

The routing model each system uses is not an implementation detail — it shapes every operational decision downstream, from how you design consumers to how you reason about failure recovery.

NATS: Subjects as First-Class Citizens

NATS routes messages through a subject hierarchy rather than discrete topics. A subject is a UTF-8 string with dot-delimited tokens: orders.us-east.created, sensors.floor3.temperature. Two wildcard operators give this hierarchy real power: * matches a single token (orders.*.created matches orders.us-east.created but not orders.us-east.warehouse.created), and > matches one or more trailing tokens (sensors.> matches everything under sensors).
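The wildcard rules are simple enough to express directly. The matcher below is a simplified illustration rather than the server’s implementation (which indexes subscriptions in a subject tree and validates subject syntax); matchSubject is a hypothetical helper name.

```go
package main

import (
	"fmt"
	"strings"
)

// matchSubject reports whether a concrete subject matches a subscription
// pattern using NATS-style wildcards: "*" matches exactly one token, and ">"
// (valid only as the last token) matches one or more remaining tokens.
func matchSubject(pattern, subject string) bool {
	p := strings.Split(pattern, ".")
	s := strings.Split(subject, ".")
	for i, tok := range p {
		if tok == ">" {
			// ">" must be the final pattern token and needs at least one subject token left.
			return i == len(p)-1 && len(s) > i
		}
		if i >= len(s) {
			return false // subject ran out of tokens before the pattern did
		}
		if tok != "*" && tok != s[i] {
			return false
		}
	}
	// Without ">", the token counts must match exactly.
	return len(p) == len(s)
}

func main() {
	fmt.Println(matchSubject("orders.*.created", "orders.us-east.created"))           // true
	fmt.Println(matchSubject("orders.*.created", "orders.us-east.warehouse.created")) // false
	fmt.Println(matchSubject("sensors.>", "sensors.floor3.temperature"))              // true
	fmt.Println(matchSubject("sensors.>", "sensors"))                                 // false
}
```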

Consumer load balancing happens through queue groups. Multiple subscribers join the same named group, and NATS delivers each message to exactly one member — no broker-side configuration required. This is structurally different from Kafka: there is no partition assignment negotiation, no rebalance protocol, and no group coordinator. A new consumer joining a queue group is effective immediately.

The tradeoff is that vanilla NATS is a fire-and-forget system. If no subscriber is listening when a message arrives, that message is gone. This is a deliberate design choice optimized for low-latency pub/sub, not durable replay.

Kafka: The Partitioned Log

Kafka’s data model is a distributed, append-only log partitioned across brokers. Each topic is divided into a configurable number of partitions, and each partition is an ordered, immutable sequence of records identified by offset. This structure is what gives Kafka its durability guarantees and its throughput ceiling.

Ordering in Kafka is partition-scoped, not topic-scoped. Records assigned to the same partition by a consistent key arrive at consumers in the order they were written. Consumer groups track per-partition offsets, and the group coordinator handles partition assignment during rebalance events — an operation with measurable latency impact that every Kafka operator has encountered in production.
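The key-to-partition step can be sketched as follows. Kafka’s default partitioner hashes keys with murmur2; this sketch substitutes FNV-1a from Go’s standard library purely to show the property that matters for ordering, so its partition numbers will not match those of a real Kafka cluster. partitionFor is a hypothetical helper.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// partitionFor maps a record key to a partition index in [0, numPartitions).
// FNV-1a stands in for Kafka's murmur2 here; only determinism matters.
func partitionFor(key string, numPartitions int) int {
	h := fnv.New32a()
	h.Write([]byte(key)) // hash.Hash writes never return an error
	return int(h.Sum32() % uint32(numPartitions))
}

func main() {
	// The same key always lands on the same partition, so its records keep
	// their relative order; different keys may share a partition.
	for _, k := range []string{"customer-42", "customer-7", "customer-42"} {
		fmt.Printf("%s -> partition %d\n", k, partitionFor(k, 6))
	}
}
```

Because the result depends on numPartitions, adding partitions later changes where existing keys land, which is one concrete reason the partition count chosen at topic creation is hard to revisit.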

The partition count decision made at topic creation has long-term consequences. Under-partitioning limits parallelism; over-partitioning increases broker memory pressure and replication overhead. Kafka’s model rewards upfront capacity planning in a way NATS does not.
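One widely used rule of thumb for that upfront planning: measure the throughput a single partition sustains on the produce side (p) and on the consume side (c), then provision at least max(t/p, t/c) partitions for a target throughput t. The per-partition figures in this sketch are hypothetical placeholders for your own measurements, and minPartitions is a hypothetical helper.

```go
package main

import (
	"fmt"
	"math"
)

// minPartitions returns the smallest partition count at which neither the
// per-partition produce rate nor the per-partition consume rate limits the
// target topic throughput. All rates are in MB/s.
func minPartitions(targetMBps, perPartitionProduceMBps, perPartitionConsumeMBps float64) int {
	byProduce := targetMBps / perPartitionProduceMBps
	byConsume := targetMBps / perPartitionConsumeMBps
	return int(math.Ceil(math.Max(byProduce, byConsume)))
}

func main() {
	// Hypothetical: 200 MB/s target, 10 MB/s per partition produce, 5 MB/s consume.
	fmt.Println(minPartitions(200, 10, 5)) // 40
}
```

In this example the consumer side is the bottleneck, which is common when per-record processing is heavier than producing.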

JetStream: Bridging the Gap

JetStream, introduced in NATS 2.2, adds persistence, replay, and consumer acknowledgment semantics on top of the core messaging layer. A JetStream stream binds to one or more subjects and captures messages to storage. Named consumers attached to a stream carry their own cursor — equivalent in concept to a Kafka consumer group offset.

💡 Pro Tip: JetStream consumers support both push and pull delivery modes. Pull consumers give you explicit flow control and are the right choice for workloads where processing latency is variable — they prevent the broker from overwhelming a slow downstream service.

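JetStream closes much of the durability gap with Kafka, but the operational model differs in one important way: streams are defined against subject patterns, not partitions. A stream stores messages in a single sequence, but it has no built-in partitioning, so once several subscribers share a consumer to process in parallel, per-entity processing order is no longer guaranteed. Recovering Kafka-style per-key ordering means partitioning by subject yourself (for example, with the server’s deterministic subject-mapping partition function) and binding one consumer to each partition. For workloads where strict per-entity ordering matters, this distinction drives architecture decisions.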

Cluster Topology and Replication

NATS clustering uses a full-mesh route topology with gossip-based peer discovery, and Raft consensus for JetStream metadata and for each replicated stream. Clusters of three or five nodes are typical; no separate coordination service is required. Kafka historically relied on ZooKeeper for controller election and topic metadata; KRaft mode, generally available since Kafka 3.3, eliminates that dependency and simplifies the operational surface considerably.

Both systems replicate data across brokers for fault tolerance, but Kafka’s replication is partition-granular with configurable acknowledgment levels (acks=all being the safe default for durability). NATS JetStream replication operates at the stream level with a configurable R-factor.
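The fault-tolerance arithmetic behind both replication models fits in a few lines. This sketch assumes Kafka producers use acks=all together with the topic setting min.insync.replicas, and treats a replicated JetStream stream as a Raft group that needs a majority of its replicas. It models availability for writes only, not the durability of data already written; both function names are hypothetical.

```go
package main

import "fmt"

// kafkaWritableFailures: with acks=all, a partition keeps accepting writes
// while at least minInsyncReplicas replicas remain in sync, so it tolerates
// replicationFactor - minInsyncReplicas broker losses.
func kafkaWritableFailures(replicationFactor, minInsyncReplicas int) int {
	return replicationFactor - minInsyncReplicas
}

// raftTolerableFailures: a Raft group of R replicas needs a majority to make
// progress, so it tolerates (R-1)/2 failures (integer division).
func raftTolerableFailures(replicas int) int {
	return (replicas - 1) / 2
}

func main() {
	fmt.Println(kafkaWritableFailures(3, 2)) // 1
	fmt.Println(raftTolerableFailures(3))    // 1
	fmt.Println(raftTolerableFailures(5))    // 2
}
```

This is why three- and five-node clusters are the common sizes: an even replica count adds cost without raising the number of failures a majority-based group can survive.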

With the architectural fundamentals established, the clearest way to internalize these differences is to observe them through code — which is exactly where the next section goes.

Connecting to Each System: Client Code That Exposes the Differences

The fastest way to understand the operational philosophy of a messaging system is to write a producer and consumer. The boilerplate required — and where errors surface — tells you more than any benchmark chart. Pay attention not just to what the code does, but to what concepts it forces you to name explicitly.

NATS: Minimal Surface Area

The NATS Go client connects, publishes, and subscribes in a handful of lines. Here is a complete example covering core pub/sub, queue groups, and a JetStream durable consumer:

package main

import (
	"context"
	"log"
	"time"

	"github.com/nats-io/nats.go"
	"github.com/nats-io/nats.go/jetstream"
)

func main() {
	nc, err := nats.Connect("nats://my-cluster:4222",
		nats.ReconnectWait(2*time.Second),
		nats.MaxReconnects(10),
		nats.DisconnectErrHandler(func(nc *nats.Conn, err error) {
			log.Printf("disconnected: %v", err)
		}),
		nats.ReconnectHandler(func(nc *nats.Conn) {
			log.Printf("reconnected to %s", nc.ConnectedUrl())
		}),
	)
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	// Queue group: competing consumers, load-balanced by the NATS server.
	// Subscribe before publishing; core NATS only delivers to current subscribers.
	if _, err := nc.QueueSubscribe("orders.created", "order-processors", func(msg *nats.Msg) {
		log.Printf("core sub received: %s", msg.Data)
	}); err != nil {
		log.Fatal(err)
	}

	// JetStream: durable, at-least-once delivery.
	js, err := jetstream.New(nc)
	if err != nil {
		log.Fatal(err)
	}
	ctx := context.Background()

	stream, err := js.CreateOrUpdateStream(ctx, jetstream.StreamConfig{
		Name:     "ORDERS",
		Subjects: []string{"orders.>"},
	})
	if err != nil {
		log.Fatal(err)
	}

	cons, err := stream.CreateOrUpdateConsumer(ctx, jetstream.ConsumerConfig{
		Durable:       "order-processor",
		AckPolicy:     jetstream.AckExplicitPolicy,
		FilterSubject: "orders.created",
	})
	if err != nil {
		log.Fatal(err)
	}

	cc, err := cons.Consume(func(msg jetstream.Msg) {
		log.Printf("jetstream received: %s", msg.Data())
		msg.Ack()
	})
	if err != nil {
		log.Fatal(err)
	}
	defer cc.Stop()

	// Core pub/sub: fire and forget. Because the ORDERS stream captures
	// orders.>, the server also stores this message, but the publisher
	// receives no acknowledgment.
	if err := nc.Publish("orders.created", []byte(`{"id":"ord-9921"}`)); err != nil {
		log.Fatal(err)
	}

	time.Sleep(5 * time.Second)
}

Notice what is absent: broker partition configuration, offset tracking, consumer group epoch management, or rebalance callbacks. Reconnect behavior is a handful of options on the connection constructor. The server handles load balancing for queue groups; your application code never sees a partition number or a rebalance event.

Error handling in NATS follows the same philosophy. When the connection drops, the client library retries in the background, waiting ReconnectWait (plus a small jitter) between attempts, and your ReconnectHandler fires when it succeeds; with MaxReconnects(10), it gives up after ten failed attempts. Your business logic keeps running, because the library buffers outbound publishes up to a configurable limit while disconnected. There is no concept of a “consumer group epoch” that your code must synchronize with. The server absorbs that coordination on your behalf.

JetStream adds durability without adding a new set of client-side state machines. You declare a stream, create a durable consumer, and call Consume. Acknowledgment is explicit — msg.Ack() — but offset tracking and redelivery on failure are server-side concerns. The client contract remains thin even when persistence is involved.

Kafka: Explicit Control, Explicit Complexity

The Kafka equivalent requires you to manage concepts the broker delegates to you — partition assignment, offset commits, and rebalance lifecycle:

package main

import (
	"context"
	"log"
	"os"
	"os/signal"
	"time"

	"github.com/confluentinc/confluent-kafka-go/v2/kafka"
)

func main() {
	producer, err := kafka.NewProducer(&kafka.ConfigMap{
		"bootstrap.servers": "kafka-broker-0.my-cluster:9092",
		"acks":              "all", // wait for all in-sync replicas
		"retries":           5,
		"retry.backoff.ms":  200,
	})
	if err != nil {
		log.Fatal(err)
	}
	defer producer.Close()

	if err := producer.Produce(&kafka.Message{
		TopicPartition: kafka.TopicPartition{Topic: strPtr("orders"), Partition: kafka.PartitionAny},
		Value:          []byte(`{"id":"ord-9921"}`),
	}, nil); err != nil {
		log.Fatal(err)
	}
	producer.Flush(3000)

	consumer, err := kafka.NewConsumer(&kafka.ConfigMap{
		"bootstrap.servers":    "kafka-broker-0.my-cluster:9092",
		"group.id":             "order-processors",
		"auto.offset.reset":    "earliest",
		"enable.auto.commit":   false, // commit only after processing (at-least-once)
		"max.poll.interval.ms": 300000,
	})
	if err != nil {
		log.Fatal(err)
	}
	defer consumer.Close()

	err = consumer.SubscribeTopics([]string{"orders"}, func(c *kafka.Consumer, event kafka.Event) error {
		switch e := event.(type) {
		case kafka.AssignedPartitions:
			log.Printf("assigned: %v", e.Partitions)
		case kafka.RevokedPartitions:
			// Finish or checkpoint in-flight work here before the partitions move.
			log.Printf("revoked: %v", e.Partitions)
		}
		return nil
	})
	if err != nil {
		log.Fatal(err)
	}

	sigchan := make(chan os.Signal, 1)
	signal.Notify(sigchan, os.Interrupt)

	ctx, cancel := context.WithCancel(context.Background())
	defer cancel()
	go func() { <-sigchan; cancel() }()

	for {
		select {
		case <-ctx.Done():
			return
		default:
			// ReadMessage takes a time.Duration; a bare 100 would mean 100ns.
			msg, err := consumer.ReadMessage(100 * time.Millisecond)
			if err != nil {
				continue // includes timeouts when no message is available
			}
			log.Printf("received: %s", msg.Value)
			if _, err := consumer.CommitMessage(msg); err != nil {
				log.Printf("commit failed: %v", err)
			}
		}
	}
}

func strPtr(s string) *string { return &s }

The rebalance callback is not optional boilerplate: it is where you handle partition revocation before committing in-flight work. If your handler is processing a batch when the broker reassigns partitions, you must checkpoint progress before returning from the revocation callback or accept that another consumer will reprocess those messages. max.poll.interval.ms compounds this: your processing loop must complete within a wall-clock deadline or the broker considers the consumer dead and triggers another rebalance. These are not edge cases; they are central to operating Kafka consumers correctly in production.

The producer side carries its own surface area. acks=all means the leader acknowledges a write only after every in-sync replica has it: the right default for a durable pipeline, but one that ties produce latency to the slowest in-sync follower. retries and retry.backoff.ms have client defaults (librdkafka retries almost indefinitely, bounded by delivery.timeout.ms), so explicit values like the ones above should be chosen deliberately against your broker’s failure recovery window rather than copied from an example.

Note: Set enable.auto.commit to false from day one. Auto-commit periodically commits the offsets of messages already handed to your code, whether or not your handler has finished them, which makes message loss invisible until you have a downstream incident. The debugging story (reconstructing which messages were processed versus which were only committed) is painful enough that the manual commit discipline pays for itself immediately.
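The failure mode in the note above can be reproduced without a broker. This is a simplified simulation, not the Kafka client: replay is a hypothetical helper that runs one consumer over an in-memory log, crashes it partway through a record, and restarts it from the last committed offset.

```go
package main

import "fmt"

// replay runs a consumer over entries, crashes while handling index crashAt,
// then restarts from the last committed offset. commitBeforeProcessing=true
// models auto-commit advancing the offset as soon as a record is fetched;
// false models committing only after the handler finishes.
func replay(entries []string, crashAt int, commitBeforeProcessing bool) []string {
	var processed []string
	committed := 0
	// First run: the handler crashes on entries[crashAt].
	for i := committed; i < len(entries); i++ {
		if commitBeforeProcessing {
			committed = i + 1
		}
		if i == crashAt {
			break // crash: this record is never fully processed
		}
		processed = append(processed, entries[i])
		if !commitBeforeProcessing {
			committed = i + 1
		}
	}
	// Restart: resume from whatever offset was committed.
	for i := committed; i < len(entries); i++ {
		processed = append(processed, entries[i])
	}
	return processed
}

func main() {
	records := []string{"ord-1", "ord-2", "ord-3", "ord-4"}
	fmt.Println(replay(records, 2, true))  // [ord-1 ord-2 ord-4] (ord-3 silently lost)
	fmt.Println(replay(records, 2, false)) // [ord-1 ord-2 ord-3 ord-4]
}
```

Committing after processing redelivers the record that was in flight at the crash, and a crash between processing and committing would reprocess a record, so handlers should be idempotent: this is at-least-once delivery, not exactly-once.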

What the Boilerplate Signals

The NATS client treats network interruptions and load distribution as library concerns. The Kafka client treats offset management, partition assignment, and rebalance coordination as application concerns. Neither choice is wrong — they reflect different durability contracts and different assumptions about where intelligence should live.

NATS offloads complexity to the server and accepts that at-rest durability is opt-in via JetStream. Kafka bakes durability into the protocol and accepts that clients carry more state. The operational implication is that NATS failures tend to be server-side events that your client observes passively, while Kafka failures often require your application to make correctness decisions — whether to commit, whether a rebalance is safe, whether a timeout indicates a slow consumer or a dead one.

For teams adding a fifth microservice that needs to fan out events to three consumers, the NATS surface area wins on integration speed. For teams building an audit pipeline where every message must survive a broker restart and be replayable six months later, Kafka’s explicit offset model is the right trade-off to make upfront.

With the client contracts established, the next question is raw throughput and latency under load — and the answer depends heavily on message size, consumer count, and whether your workload is bursty or steady-state.

Performance Characteristics: Where Each System Shines and Struggles

Raw performance numbers tell only half the story. The workload shape—message size, fan-out ratio, durability requirements, consumer topology—determines which system delivers on its theoretical ceiling and which hits an unexpected wall.

NATS Core: Latency as a First-Class Citizen

NATS core pub/sub is built around a fire-and-forget model that eliminates coordination overhead. In co-located deployments (same Kubernetes cluster, same availability zone), P99 latency typically sits below 1ms for payloads under 64KB, though the exact figure depends on hardware and load. That number holds across high fan-out scenarios because NATS routes by subject rather than through consumer group coordination: the server fans out once, and subscriber delivery happens in parallel without cross-subscriber blocking.

Stretch that across regions and the picture changes. NATS gateways (superclusters) and leaf nodes handle WAN topology, but you’re now paying round-trip latency to a remote node on every publish that requires acknowledgment. For globally distributed IoT telemetry where edge devices publish and regional processors subscribe, this matters. Plan for P99 to track the inter-region round-trip time: single-digit to tens of milliseconds between neighboring regions, and well over 100ms between continents. That is still competitive, but no longer “invisible.”

Kafka: Throughput Over Latency

Kafka’s architecture optimizes for sustained ingestion volume, not individual message latency. The combination of sequential disk writes, producer batching (linger.ms, batch.size), and compression (LZ4 or Snappy for hot paths, zstd for archival) lets a well-provisioned three-broker cluster sustain write throughput in the hundreds of megabytes per second, with the ceiling set by disks, network, and replication factor. This is the workload Kafka was designed for: millions of events per second that need durable, replayable storage with ordered guarantees per partition.

The latency trade-off is real. Even with linger.ms=0, producer-to-consumer round-trip latency in Kafka rarely drops below 5–10ms under load because of the commit log architecture. For request/reply patterns or low-latency control-plane messaging, this overhead is structural, not tunable.

JetStream: Persistence Has a Price

Enabling JetStream persistence in NATS introduces coordination overhead that adds 2–5ms to P99 latency for acknowledged publishes. This is the cost of writing to the stream before returning an ack. For workloads that previously relied on NATS core, this is a meaningful regression. The mitigation is to segment your subjects: keep ephemeral, latency-sensitive traffic on core pub/sub and route only durability-required messages through JetStream streams.

Backpressure and Slow Consumers

NATS drops messages to slow consumers by default on core pub/sub—there is no blocking backpressure. The server disconnects subscribers that fall too far behind. JetStream introduces push and pull consumer modes, where pull consumers control their own consumption rate. Kafka’s consumer group model inherently handles slow consumers through lag accumulation; the broker retains messages regardless of consumer pace, and max.poll.records gives consumers explicit flow control.
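The difference between dropping and retaining can be modeled with a bounded buffer. This is a deliberately simplified model, not either system’s implementation: deliverCore treats a stalled subscriber’s pending buffer as a Go channel that nobody drains during the burst, and deliverLog treats a partition or stream as storage that keeps everything and reports lag. Both function names are hypothetical.

```go
package main

import "fmt"

// deliverCore models core pub/sub toward one stalled subscriber with a bounded
// pending buffer: anything published while the buffer is full is dropped
// instead of blocking the publisher.
func deliverCore(published, pendingLimit int) (buffered, dropped int) {
	pending := make(chan int, pendingLimit)
	for i := 0; i < published; i++ {
		select {
		case pending <- i:
			buffered++
		default:
			dropped++ // buffer full: no backpressure, the message is lost
		}
	}
	return buffered, dropped
}

// deliverLog models a log-based system: every message is stored, and a slow
// consumer accumulates lag that it can work off later.
func deliverLog(published, consumed int) (stored, lag int) {
	return published, published - consumed
}

func main() {
	// A burst of 1,000 messages while the subscriber is stalled.
	fmt.Println(deliverCore(1000, 64)) // 64 936
	fmt.Println(deliverLog(1000, 0))   // 1000 1000
}
```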

💡 Pro Tip: Kafka consumer lag (visible via kafka-consumer-groups.sh --describe) is one of the most actionable operational signals in a Kafka deployment. Instrument it from day one: lag spikes are early indicators of downstream processing bottlenecks before they become incidents.
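Lag itself is simple arithmetic over two numbers per partition: the log-end offset and the group’s committed offset, both of which kafka-consumer-groups.sh --describe prints. The PartitionOffsets type and totalLag helper below are hypothetical; in practice you would feed them from that tool, an admin client, or an exporter such as Burrow.

```go
package main

import "fmt"

// PartitionOffsets holds the two per-partition numbers that determine lag.
type PartitionOffsets struct {
	Committed int64 // last offset committed by the consumer group
	LogEnd    int64 // offset the next produced record will receive
}

// totalLag sums per-partition lag (log-end offset minus committed offset).
func totalLag(parts map[int32]PartitionOffsets) int64 {
	var lag int64
	for _, p := range parts {
		if d := p.LogEnd - p.Committed; d > 0 {
			lag += d
		}
	}
	return lag
}

func main() {
	parts := map[int32]PartitionOffsets{
		0: {Committed: 1200, LogEnd: 1250},
		1: {Committed: 980, LogEnd: 980},
		2: {Committed: 400, LogEnd: 1400},
	}
	fmt.Println(totalLag(parts)) // 1050
}
```

A single total can hide skew: here one partition accounts for almost all of the lag, which usually points at a hot key or a stuck consumer, so alerting on the per-partition maximum as well is worth considering.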

Kubernetes Footprint

A minimal production NATS cluster runs as three StatefulSet pods, each requiring roughly 64–128Mi memory and negligible CPU at rest—NATS is genuinely lightweight. A production Kafka cluster requires three brokers plus three ZooKeeper nodes (or KRaft controllers), with each broker typically provisioned at 4–8Gi heap plus OS page cache. JVM garbage collection adds operational complexity that has no equivalent in the NATS deployment model.

These resource profiles directly influence cluster density and cost, which the next section addresses through concrete Kubernetes manifests and the operational decisions that separate a working cluster from a reliable one.

Deploying on Kubernetes: Operational Reality

The gap between “it works in a demo” and “it runs reliably in production” is where most teams discover the true cost of their messaging choice. NATS and Kafka impose fundamentally different operational burdens on your Kubernetes clusters, and those differences compound over time.

NATS: Lightweight by Design, Operational Simplicity by Default

The NATS Helm chart makes initial deployment straightforward (the older NATS operator is no longer recommended for new deployments). A production-grade JetStream cluster with TLS and authentication fits in a short values file:

nats:
  image:
    tag: "2.10.14-alpine"

cluster:
  enabled: true
  replicas: 3

jetstream:
  enabled: true
  fileStore:
    pvc:
      size: 20Gi
      storageClassName: gp3

natsbox:
  enabled: false

auth:
  enabled: true
  resolver:
    type: full
    operator: /etc/nats/operator.jwt
    systemAccount: ADLHPBQTTQV7YMPKMV7LJXGFB2HWSVQ2XKDAJQ7DQBM6UIHVNPXOQFL

tls:
  secret:
    name: nats-tls
  ca: "ca.crt"
  cert: "tls.crt"
  key: "tls.key"

Rolling upgrades are close to zero-downtime in practice. The chart’s StatefulSet replaces one pod at a time, and each server enters lame duck mode before shutting down, so its clients migrate to the remaining nodes while the JetStream Raft groups elect new leaders. The entire upgrade of a 3-node cluster typically completes in under 90 seconds with no client reconnection gaps beyond the normal retry window.

NKeys and JWT-based authentication keep the security surface area small. You generate operator and account JWTs offline, store them in Kubernetes secrets, and the server validates them without any external auth service. Rotating credentials is an offline operation with no cluster restart required.

Kafka on Kubernetes: Strimzi Is the Right Choice, but Read the Fine Print

Running Kafka without an operator on Kubernetes is a maintenance anti-pattern at this point. StatefulSets, PodDisruptionBudgets, and rolling restart logic for brokers are all problems Strimzi has already solved. Use it. But Strimzi’s power comes with configuration surface area:

apiVersion: kafka.strimzi.io/v1beta2
kind: Kafka
metadata:
  name: prod-cluster
  namespace: messaging
spec:
  kafka:
    version: 3.7.0
    replicas: 3
    listeners:
      - name: tls
        port: 9093
        type: internal
        tls: true
        authentication:
          type: tls
    config:
      offsets.topic.replication.factor: 3
      transaction.state.log.replication.factor: 3
      transaction.state.log.min.isr: 2
      default.replication.factor: 3
      min.insync.replicas: 2
      inter.broker.protocol.version: "3.7"
    storage:
      type: jbod
      volumes:
        - id: 0
          type: persistent-claim
          size: 200Gi
          class: gp3
          deleteClaim: false
    resources:
      requests:
        memory: 8Gi
        cpu: "2"
      limits:
        memory: 8Gi
  zookeeper:
    replicas: 3
    storage:
      type: persistent-claim
      size: 10Gi
      class: gp3

PVC sizing deserves explicit planning. Kafka retains data by time and size, and undersized volumes trigger broker shutdowns, not graceful degradation. Start with your peak throughput × retention period × replication factor, then add 30% headroom. A cluster serving 500 MB/s with 24-hour retention and RF=3 needs roughly 130 TB of total storage before headroom (500 MB/s × 86,400 s × 3 ≈ 129.6 TB), or about 170 TB once the 30% buffer is added.
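The sizing formula is easy to get wrong by a factor of the replication count, so it is worth encoding. This sketch uses decimal units (1 TB = 1,000,000 MB) and a hypothetical requiredStorageTB helper; it sizes total cluster capacity, which you would then divide across brokers.

```go
package main

import "fmt"

// requiredStorageTB returns total cluster storage in decimal terabytes for a
// given ingress rate (MB/s), retention window (hours), replication factor,
// and fractional headroom (0.3 means 30%).
func requiredStorageTB(ingressMBps, retentionHours float64, replicationFactor int, headroom float64) float64 {
	rawMB := ingressMBps * retentionHours * 3600 * float64(replicationFactor)
	return rawMB * (1 + headroom) / 1e6
}

func main() {
	fmt.Printf("%.1f TB before headroom\n", requiredStorageTB(500, 24, 3, 0))      // 129.6 TB before headroom
	fmt.Printf("%.1f TB with 30%% headroom\n", requiredStorageTB(500, 24, 3, 0.3)) // 168.5 TB with 30% headroom
}
```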

The rebalance problem is Kafka’s most painful day-2 operation. When you add brokers, existing partition replicas do not automatically move onto them, and a replacement broker that joins with a new broker ID starts out empty. You run kafka-reassign-partitions.sh or rely on Cruise Control (which Strimzi supports via the KafkaRebalance CRD), but either path involves careful throttling to avoid saturating broker network during reassignment.

💡 Pro Tip: Always throttle partition reassignments in production, for example by passing --throttle (a bytes-per-second limit on replication traffic) to kafka-reassign-partitions.sh. Unthrottled reassignments on high-throughput clusters have taken clusters from healthy to degraded in under 10 minutes.

Observability: The Tooling Gap Is Real

NATS exposes metrics via a built-in HTTP monitoring endpoint, which the Prometheus exporter (prometheus-nats-exporter) scrapes. The metric surface is small: around 40 key metrics covering connections, subscriptions, JetStream stream lag, and consumer acks. Setup takes one Helm value change.

Kafka’s JMX metrics surface is orders of magnitude larger, which is both an asset and a burden. Meaningful monitoring requires curating which MBeans matter: UnderReplicatedPartitions, IsrShrinkRate, NetworkProcessorAvgIdlePercent, and consumer group lag via the separate kafka-consumer-groups.sh tool or Burrow. Prometheus JMX exporter configuration alone runs 200+ lines before you have actionable dashboards.

For teams already running a mature observability stack, Kafka’s richness is valuable. For teams standing up monitoring alongside their messaging infrastructure, NATS gets you to meaningful alerting in an afternoon instead of a week.

The operational picture points toward a clear conclusion: NATS minimizes SRE toil at the cost of fewer knobs; Kafka maximizes control at the cost of significant operational investment. That trade-off feeds directly into how you should match workload patterns to the right system—which the next section addresses head-on.

Decision Framework: Matching Workloads to the Right System

After examining the performance characteristics and operational realities of both systems, the decision comes down to one fundamental question: are you optimizing for message flow or message history?

Choose NATS When Your Workload Is Flow-Oriented

NATS excels in scenarios where messages are ephemeral by design and latency dominates your SLA:

- Request-reply patterns: Internal service RPC where you need sub-millisecond dispatch and automatic inbox routing. NATS request-reply is purpose-built for this; Kafka requires bolting on a correlation layer.

- IoT device fan-out: Publishing telemetry from millions of constrained devices that need a lightweight client and protocol. NATS’s wildcard subject hierarchy (sensors.*.temperature) routes without broker-side configuration changes.

- Service mesh-style internal comms: When you want a fabric-level message bus decoupled from your HTTP service mesh, NATS subject-based addressing maps cleanly onto microservice boundaries.

- Command dispatch: Fire-and-forget control plane messages where at-most-once delivery is acceptable and throughput variability is low.

Choose Kafka When Your Workload Is History-Oriented

Kafka’s log-based model becomes the correct default when durability and replayability are first-class requirements:

- Event sourcing and audit logs — The immutable, partitioned log is not a feature layered on top; it is the data model. Rebuilding projections from offset zero is a first-class operation.

- Cross-team data contracts — Schema Registry, consumer group isolation, and the Kafka Connect ecosystem are infrastructure that teams have standardized on. Replacing it requires organizational alignment, not just engineering effort.

- Stream processing pipelines — Flink, Spark Structured Streaming, and ksqlDB integrate natively against Kafka’s partition model. Porting these pipelines to a non-Kafka broker is a full rewrite.

Use JetStream as the Middle Path

If you are already on NATS and encounter a use case requiring replay or at-least-once delivery, reach for JetStream before reaching for Kafka. JetStream delivers durable consumers, key-value storage, and object storage within the same binary. The operational surface stays flat; you do not inherit Kafka’s ZooKeeper legacy or broker-tuning complexity.

💡 Pro Tip: The hybrid architecture that consistently performs well in production: NATS for the internal fast-path (inter-service commands, health signals, real-time notifications) and Kafka as the durable event backbone for cross-domain data contracts and audit requirements. Both clusters run independently; a thin bridge component publishes selected NATS subjects to Kafka topics when an event crosses a domain boundary.

Migration Cost Is Asymmetric

Switching from NATS to Kafka is an application-layer change — your consumer semantics, offset management, and schema contracts all require rework. Switching from Kafka to NATS JetStream is feasible for greenfield consumers but requires replaying history from Kafka before decommissioning, which is a non-trivial data migration. Choose wrong on the durability axis and the cost is high; choose wrong on the latency axis and you pay in operational complexity, not data loss.

With the decision criteria mapped to workload patterns, the takeaways below condense the framework into the checks worth running before you commit to either system.

Key Takeaways

- Default to NATS for internal service-to-service communication where P99 latency matters and at-most-once delivery is acceptable; enable JetStream only for specific subjects that require replay guarantees.

- Choose Kafka when your use case is a durable event log that multiple teams consume independently, or when you need stream processing integration with Flink — the operational overhead is the price of that contract.

- Before committing to either system, map your workload to three dimensions: required delivery semantics (at-most-once vs at-least-once vs exactly-once), message retention needs (ephemeral vs hours vs indefinite), and consumer fan-out patterns (queue group vs broadcast vs replay).

- Run both systems in Kubernetes staging with your actual traffic shape before deciding — NATS’s low resource floor makes this cheap, and the operational difference in a rebalance scenario will tell you more than any benchmark.