NATS Streaming Core Concepts: Subjects, Queues, and JetStream Consumers

Your service crashes mid-processing. The messages it was handling are gone. No replay, no dead-letter queue, no second chance — just silence. A postmortem reveals the root cause: the team chose Core NATS for a workload that needed persistence, assuming the queue group semantics were enough to provide reliability. They weren’t. Core NATS delivered messages to exactly one subscriber, yes, but when that subscriber died before finishing, NATS had already moved on. There was nothing to acknowledge, nothing to redeliver, nothing to recover. The pipeline looked correct in staging and collapsed quietly in production.

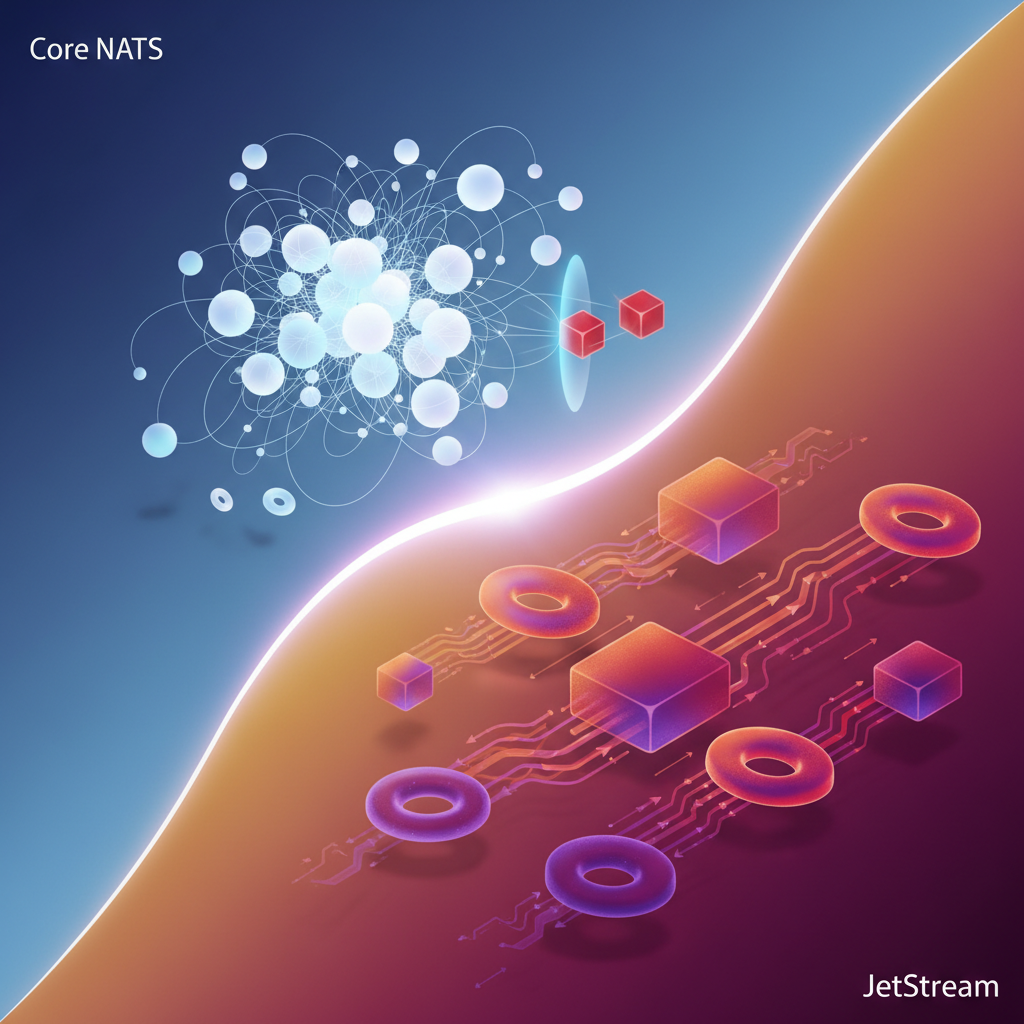

This failure mode is more common than it should be, because the two operational modes of NATS — Core and JetStream — share the same server binary, the same subject-based routing, and superficially similar consumer patterns. The distinction between them is not cosmetic. Core NATS is a pure publish-subscribe transport: no storage, no acknowledgment, no delivery guarantees beyond the moment of transmission. JetStream is a persistence layer built on top of that transport, adding durable storage, consumer tracking, acknowledgment semantics, and replay. Using one when you need the other is a category error, and the system won’t tell you until something breaks.

Getting this right means building a clear mental model of how subjects, queue groups, and JetStream consumers compose — what each primitive guarantees, where each one stops, and which combination maps to a given reliability requirement. That model starts with understanding the fundamental split between the two modes and why it exists.

The Two Modes of NATS: Core vs. JetStream

NATS operates in two fundamentally different modes, and conflating them is the most common architectural mistake engineers make when adopting the platform. Understanding the boundary between them is not an implementation detail—it is the foundation every subsequent design decision rests on.

Core NATS: Presence-Based Delivery

Core NATS is a pure publish-subscribe system built around a single guarantee: if a subscriber is connected and listening when a message arrives, it receives the message. If it is not, the message is gone. There is no persistence layer, no retry mechanism, and no acknowledgment protocol. The server acts as a routing engine, not a broker.

This constraint is a feature, not a limitation. Core NATS achieves sub-millisecond latencies precisely because it carries no persistence overhead. Metrics emission, live telemetry pipelines, cache invalidation signals, and presence notifications are all workloads where losing an occasional message is acceptable and where latency matters more than completeness. For these cases, Core NATS is the correct tool, and introducing JetStream would add complexity without adding value.

The failure mode to internalize: if your consumer restarts, scales down, or experiences a network partition during a message burst, those messages are silently dropped. No error is raised, no dead letter queue is populated, and no alarm fires. The system continues operating as if nothing happened. This silent data loss is invisible until you audit downstream state and notice the gap.
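The presence-based guarantee can be illustrated without a server. The sketch below is a toy in-memory router, not NATS itself; `bus`, `subscribe`, and `publish` are hypothetical names. It models only the one property described above: a message published while nobody is subscribed is dropped and is not replayed to later subscribers.

```go
package main

import "fmt"

// bus is a toy stand-in for a Core NATS server: it routes, it never stores.
type bus struct {
	subs map[string][]func(string)
}

func newBus() *bus { return &bus{subs: make(map[string][]func(string))} }

// subscribe registers interest in an exact subject (no wildcards in this toy).
func (b *bus) subscribe(subject string, h func(string)) {
	b.subs[subject] = append(b.subs[subject], h)
}

// publish hands the message to whoever is subscribed right now and returns
// how many handlers received it. Zero means the message is gone.
func (b *bus) publish(subject, data string) int {
	for _, h := range b.subs[subject] {
		h(data)
	}
	return len(b.subs[subject])
}

func main() {
	b := newBus()
	var received []string

	// Published before anyone subscribes: dropped, not buffered.
	fmt.Println("delivered to:", b.publish("metrics.cpu", "early"))

	b.subscribe("metrics.cpu", func(d string) { received = append(received, d) })
	fmt.Println("delivered to:", b.publish("metrics.cpu", "late"))
	fmt.Println("received:", received)
}
```

The publisher in this model gets a delivery count back only because it is a toy; a Core NATS publish gives the publisher no such signal.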

JetStream: Persistence and Delivery Guarantees

JetStream is not a separate product or a different server. It is a persistence and delivery layer built into the same nats-server binary and enabled through configuration. When JetStream is active, messages published to a stream are written to disk (or memory, depending on the stream’s storage type) before the server acknowledges the publish. Consumers then read from that durable log, and the server tracks per-consumer acknowledgment state.

This architecture provides replay on startup, at-least-once delivery, and explicit ack/nak semantics. A consumer that crashes mid-processing will have its unacknowledged messages redelivered after a configurable timeout. The tradeoff is latency and operational complexity: JetStream requires capacity planning for storage, and the ack contract shifts responsibility for idempotent processing to the application layer.
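To make the redelivery rule concrete, here is a minimal sketch of per-consumer ack tracking. It is a simplified model under stated assumptions, not the server's implementation: `pendingTracker` and its methods are hypothetical names, and time is a plain integer count of seconds so the example stays deterministic.

```go
package main

import (
	"fmt"
	"sort"
)

// pendingTracker is a toy model of per-consumer acknowledgment state.
type pendingTracker struct {
	ackWait  int            // seconds allowed between delivery and ack
	deadline map[uint64]int // stream sequence -> time at which redelivery is due
}

func newPendingTracker(ackWait int) *pendingTracker {
	return &pendingTracker{ackWait: ackWait, deadline: make(map[uint64]int)}
}

// deliver records that seq was handed to the application at time now.
func (p *pendingTracker) deliver(seq uint64, now int) {
	p.deadline[seq] = now + p.ackWait
}

// ack removes seq from tracking; it will not be redelivered.
func (p *pendingTracker) ack(seq uint64) { delete(p.deadline, seq) }

// due returns, in ascending order, the sequences whose ack deadline has passed.
func (p *pendingTracker) due(now int) []uint64 {
	var out []uint64
	for seq, d := range p.deadline {
		if now >= d {
			out = append(out, seq)
		}
	}
	sort.Slice(out, func(i, j int) bool { return out[i] < out[j] })
	return out
}

func main() {
	tracker := newPendingTracker(30)
	tracker.deliver(1, 0)
	tracker.deliver(2, 0)
	tracker.ack(1) // processed successfully

	// The worker crashes before acknowledging sequence 2.
	fmt.Println("due at t=10:", tracker.due(10))
	fmt.Println("due at t=30:", tracker.due(30))
}
```

Because sequence 2 can be delivered again after the timeout, the handler may see it twice, which is why idempotent processing becomes the application's job.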

💡 Pro Tip: The publish API for Core NATS and JetStream is intentionally similar, and both work with nats publish, but they behave differently at the infrastructure level. Publishing to a subject backed by a JetStream stream without a connected consumer does not lose the message; publishing to a Core NATS subject in the same situation does. Treat the subject namespace as your mode selector and enforce the convention rigorously in team documentation.

The decision between the two modes reduces to a single question: does your application require evidence that a specific message was processed? If the answer is yes, JetStream is not optional. If the answer is no, Core NATS keeps your architecture simpler and your latency lower.

With that boundary established, the next logical question is how NATS routes messages in the first place—which is where subjects become the central concept to understand.

Subject-Based Messaging: The Routing Primitive Everything Builds On

Every message in NATS travels to a subject. Not a queue, not a topic, not an exchange—a subject. This distinction matters more than it appears on the surface, because subjects in NATS are pure routing strings with no inherent storage semantics. They are the address space that every higher-level primitive—queue groups, JetStream streams, services—is built on top of.

Subjects Are Hierarchical Strings, Not Queues

A NATS subject is a dot-delimited string: orders.us-east-1.created, payments.refunds.processed, telemetry.sensors.temperature. The hierarchy is implicit and carries no broker-side configuration. There is no “declare this subject” step. A subscriber expresses interest; a publisher sends to a name. If no subscriber is listening, the message is dropped silently unless you are using JetStream.

This is fundamentally different from Kafka topics or RabbitMQ exchanges. In Kafka, a topic is a persistent, partitioned log that exists independently of consumers. In NATS Core, a subject is ephemeral—it exists only as an agreement between publishers and subscribers.

Wildcards Enable Flexible Fan-Out Without Routing Tables

NATS provides two wildcard tokens for subscriptions:

- `*` matches exactly one token at its position in the subject

- `>` matches one or more tokens at the end of a subject

```go
package main

import (
	"fmt"
	"log"

	"github.com/nats-io/nats.go"
)

func main() {
	nc, err := nats.Connect("nats://localhost:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	// Matches: orders.us-east-1.created, orders.eu-west-1.created
	// Does NOT match: orders.created or orders.us-east-1.v2.created
	nc.Subscribe("orders.*.created", func(m *nats.Msg) {
		fmt.Printf("Region-scoped order created: %s\n", m.Subject)
	})

	// Matches: telemetry.sensors.temperature, telemetry.sensors.humidity.raw
	// Also matches: telemetry.anything.at.all
	nc.Subscribe("telemetry.>", func(m *nats.Msg) {
		fmt.Printf("Telemetry event: %s\n", m.Subject)
	})

	// Publish to trigger the subscriptions
	nc.Publish("orders.us-east-1.created", []byte(`{"order_id":"ord-88412"}`))
	nc.Publish("telemetry.sensors.temperature", []byte(`{"value":23.4}`))

	nc.Flush()
	select {} // block
}
```

The > wildcard is particularly powerful for catch-all monitoring subscriptions and audit logging, but it also creates a trap: a single subscriber on > receives every message on the server, which tanks throughput under load.
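The token rules for the two wildcards can be written down as a small matcher. This is a sketch for reasoning about subscription patterns, assuming well-formed subjects with no empty tokens; `matchSubject` is a hypothetical helper, not part of nats.go, and the server performs its own matching.

```go
package main

import (
	"fmt"
	"strings"
)

// matchSubject reports whether a subscription pattern matches a concrete
// subject: "*" matches exactly one token, ">" matches one or more trailing tokens.
func matchSubject(pattern, subject string) bool {
	p := strings.Split(pattern, ".")
	s := strings.Split(subject, ".")
	for i, tok := range p {
		if tok == ">" {
			// ">" must be the last pattern token and needs at least one token to consume.
			return i == len(p)-1 && len(s) > i
		}
		if i >= len(s) {
			return false // the subject ran out of tokens
		}
		if tok != "*" && tok != s[i] {
			return false // literal token mismatch
		}
	}
	return len(p) == len(s)
}

func main() {
	cases := [][2]string{
		{"orders.*.created", "orders.us-east-1.created"},
		{"orders.*.created", "orders.created"},
		{"orders.*.created", "orders.us-east-1.v2.created"},
		{"telemetry.>", "telemetry.sensors.humidity.raw"},
		{"telemetry.>", "telemetry"},
	}
	for _, c := range cases {
		fmt.Printf("%-18s vs %-32s -> %v\n", c[0], c[1], matchSubject(c[0], c[1]))
	}
}
```

Note the last case: `telemetry.>` requires at least one token after `telemetry`, so it does not match the bare subject `telemetry`.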

Subject Design Cascades Into Fan-Out and ACL Complexity

A flat subject namespace—event.created, event.updated, event.deleted—forces subscribers to receive everything and filter client-side. This pattern quietly accumulates technical debt. As the system grows, unrelated services receive each other’s traffic, ACL rules become broad and permissive, and any attempt to isolate a service’s message stream requires restructuring subjects everywhere.

Design subjects with specificity at the front, generality at the tail:

```
# Prefer this:
payments.{region}.{merchant-id}.transaction.completed

# Over this:
transaction.completed
```

The more specific prefix allows narrow wildcard subscriptions (payments.us-east-1.> for everything in one region; note that payments.us-east-1.* would match only a single trailing token) and fine-grained ACL policies that grant a service access to only the subjects it owns. JetStream stream subject filters use the same token matching, so a well-structured namespace maps directly to clean stream boundaries with no overlap.

💡 Pro Tip: Treat your subject hierarchy as a public API contract. Renaming a subject token is a breaking change with no built-in migration path—downstream subscribers break silently because NATS does not route errors back to the publisher when no one is listening.

With the subject namespace established as your routing primitive, the next question is how multiple instances of the same service share work across a subscription. That is where queue groups come in.

Queue Groups: Load Balancing Without a Broker Abstraction

When you need to distribute work across multiple service instances, NATS queue groups give you horizontal scaling with zero broker-side configuration. There are no consumer group definitions to create, no partition assignments to manage, and no coordination overhead — a subscriber opts into a queue group by supplying a group name at subscription time, and NATS handles the rest.

How Queue Groups Work

A queue group is identified by a string name shared across competing subscribers. When a message arrives on a subject, NATS selects exactly one member of the group to receive it using a random distribution algorithm. All other group members are skipped for that message. Subscribers outside the group still receive the message normally through their own subscriptions.

The critical characteristic to internalize: queue groups operate entirely within Core NATS. There is no persistence layer, no message log, and no redelivery mechanism. If the selected subscriber crashes between receiving the message and completing its work, that message is gone.

```go
package main

import (
	"log"

	"github.com/nats-io/nats.go"
)

func main() {
	nc, err := nats.Connect("nats://nats.internal.example.com:4222")
	if err != nil {
		log.Fatalf("connect: %v", err)
	}
	defer nc.Close()

	// All instances subscribing with the same queue group name
	// compete for incoming messages. NATS delivers each message
	// to exactly one subscriber in the group.
	sub, err := nc.QueueSubscribe("orders.process", "order-workers", func(msg *nats.Msg) {
		log.Printf("processing order: %s", msg.Data)
		// If this process crashes here, the message is not redelivered.
	})
	if err != nil {
		log.Fatalf("subscribe: %v", err)
	}
	defer sub.Unsubscribe()

	log.Println("worker ready, waiting for messages...")
	select {}
}
```

Deploy ten instances of this binary pointed at the same NATS server, and you have a ten-way load-balanced consumer pool. Scale to twenty by deploying more instances; no configuration change is required anywhere else in the system.

The Reliability Boundary

Queue groups make a specific trade: simplicity and low latency in exchange for at-most-once delivery. Understanding exactly where the reliability boundary sits prevents incorrect assumptions from propagating into system design.

The boundary is delivery to the subscriber process. NATS considers its obligation fulfilled the moment it hands the message to the TCP connection. What happens inside the subscriber — whether the handler panics, the container is OOM-killed, or the database write fails — is invisible to the broker.

This means queue groups are appropriate when two conditions hold simultaneously:

- The workload is stateless and idempotent. Re-running the same message produces the same outcome, so accidental double-processing (from a retry at a higher layer) causes no harm.

- Losing occasional messages under failure is acceptable. Metrics aggregation, cache invalidation, analytics event ingestion, and fire-and-forget notifications all fit this profile. Payment processing and inventory reservations do not.

💡 Pro Tip: A useful heuristic — if you would be comfortable losing 0.1% of messages during a rolling deployment with no recovery path, queue groups are the right tool. If that number is unacceptable at any threshold, you need JetStream.

Mixing Direct Subscribers and Queue Groups

A single subject supports both queue group subscribers and plain subscribers simultaneously. A plain subscriber on orders.process receives every message regardless of queue group activity. This pattern is common for audit logging or metrics collection that must observe all traffic while the queue group handles the actual work distribution.
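The interaction between plain subscribers and a queue group can be sketched as a small simulation. This is a toy model of the delivery rule, not the server's code: `subscriber` and `deliver` are hypothetical names, and the member selection is injected as a `pick` function so the behavior can be made deterministic.

```go
package main

import (
	"fmt"
	"math/rand"
)

// subscriber is a toy subscription; an empty group means a plain subscriber.
type subscriber struct {
	name  string
	group string
	count int
}

// deliver models one publish: every plain subscriber gets the message, and
// each queue group gets it exactly once, on the member chosen by pick.
func deliver(subs []*subscriber, pick func(n int) int) {
	groups := make(map[string][]*subscriber)
	for _, s := range subs {
		if s.group == "" {
			s.count++
			continue
		}
		groups[s.group] = append(groups[s.group], s)
	}
	for _, members := range groups {
		members[pick(len(members))].count++
	}
}

func main() {
	subs := []*subscriber{
		{name: "worker-1", group: "order-workers"},
		{name: "worker-2", group: "order-workers"},
		{name: "worker-3", group: "order-workers"},
		{name: "audit-log"}, // plain subscriber, sees everything
	}
	rng := rand.New(rand.NewSource(1))
	for i := 0; i < 1000; i++ {
		deliver(subs, rng.Intn)
	}
	for _, s := range subs {
		fmt.Printf("%-10s received %d\n", s.name, s.count)
	}
}
```

In the simulated run the three workers split the 1000 messages between them, while `audit-log` counts all 1000.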

Queue groups solve the load-balancing problem cleanly for the right class of workload. When your requirements include guaranteed delivery, replay on failure, or processing acknowledgment, the architecture shifts to JetStream — which introduces a persistent log and a fundamentally different consumer model that the next section covers in detail.

JetStream Streams: Configuring Persistence and Retention

A JetStream stream is a durable log that captures messages published to one or more subjects. Unlike Core NATS, where undelivered messages vanish, a stream retains messages independently of whether any consumer is connected at the time of publication. This decoupling between producers and consumers is the foundational guarantee JetStream provides.

Stream Retention Modes

Retention policy controls when the server discards messages. Getting this wrong is one of the most common sources of unexpected data loss in JetStream deployments.

Limits retention (the default) discards messages based on configurable thresholds — maximum message count, maximum total bytes, or maximum age. The server enforces these limits regardless of whether any consumer has processed the message. Use this for event logs where you want a bounded history window.

Interest retention discards a message only after all bound consumers have acknowledged it. If no consumers are bound, messages are discarded immediately, a subtle footgun when producers start publishing before any consumer has been created.

WorkQueue retention discards a message as soon as a consumer acknowledges it. This enforces exclusive processing semantics: each message is handled by exactly one consumer and removed once that consumer acknowledges it, so consumers on the stream cannot have overlapping subject filters. Use this when the stream models a task queue rather than an event log.

💡 Pro Tip: Interest retention requires at least one consumer to exist before publishing begins, or messages are dropped on arrival. Create your consumers first, then start your producers. A WorkQueue stream, by contrast, holds messages until a consumer acknowledges them, so work can be queued before any worker starts.
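The three retention rules can be compared side by side with a small decision function. This is a simplified model for building intuition, assuming DiscardOld and ignoring replication and timing; `retention`, `msgState`, and `removable` are hypothetical names, not JetStream API.

```go
package main

import "fmt"

type retention int

const (
	limits retention = iota
	interest
	workQueue
)

// msgState is a toy view of one stored message.
type msgState struct {
	overLimits     bool // a count, size, or age limit has been exceeded for this message
	boundConsumers int  // consumers whose filter covers this message
	acks           int  // how many of those consumers have acknowledged it
}

// removable reports whether the server may drop the message from the stream.
func removable(p retention, m msgState) bool {
	if m.overLimits {
		return true // configured limits apply under every policy
	}
	switch p {
	case interest:
		// No interested consumer, or all of them done: nothing left to wait for.
		return m.acks >= m.boundConsumers
	case workQueue:
		// Held until a consumer acknowledges it, even if none exists yet.
		return m.acks >= 1
	default:
		// Limits retention: acknowledgments never remove a message.
		return false
	}
}

func main() {
	unconsumed := msgState{} // published before any consumer was created
	fmt.Println("limits, no consumers:    ", removable(limits, unconsumed))
	fmt.Println("interest, no consumers:  ", removable(interest, unconsumed))
	fmt.Println("work queue, no consumers:", removable(workQueue, unconsumed))
}
```

The interest case is the footgun: with zero bound consumers the condition is trivially satisfied, so the message is gone as soon as it arrives.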

Configuring a Production Stream

The following example creates a stream with LimitsPolicy retention backed by file storage, suitable for an order-processing pipeline that needs replay capability:

```go
package main

import (
	"context"
	"log"
	"time"

	"github.com/nats-io/nats.go"
	"github.com/nats-io/nats.go/jetstream"
)

func main() {
	nc, err := nats.Connect("nats://nats.prod.internal:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := jetstream.New(nc)
	if err != nil {
		log.Fatal(err)
	}

	cfg := jetstream.StreamConfig{
		Name:        "ORDERS",
		Subjects:    []string{"orders.>"},
		Retention:   jetstream.LimitsPolicy,
		Storage:     jetstream.FileStorage,
		MaxAge:      72 * time.Hour,
		MaxBytes:    10 * 1024 * 1024 * 1024, // 10 GiB
		MaxMsgSize:  1 * 1024 * 1024,         // 1 MiB per message
		Replicas:    3,
		Discard:     jetstream.DiscardOld,
		Compression: jetstream.S2Compression,
	}

	stream, err := js.CreateOrUpdateStream(context.Background(), cfg)
	if err != nil {
		log.Fatal(err)
	}

	// Read back the stream state; check the error instead of discarding it.
	info, err := stream.Info(context.Background())
	if err != nil {
		log.Fatal(err)
	}
	log.Printf("stream %s created, messages: %d", info.Config.Name, info.State.Msgs)
}
```

Storage and Replication Trade-offs

FileStorage persists messages to disk and survives server restarts. MemoryStorage offers lower latency but loses all data on restart — appropriate for ephemeral caching or staging environments, not production event logs.

Replicas: 3 instructs JetStream to maintain three synchronized copies of the stream across the NATS cluster using the Raft consensus protocol. Writes require a quorum acknowledgment before the server confirms receipt to the producer. This adds single-digit milliseconds of latency but ensures the stream survives the loss of one server without data loss.

Discard: DiscardOld drops the oldest messages when limits are hit. DiscardNew rejects incoming publishes instead — useful when you must not silently drop historical data and prefer backpressure over loss.
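Which limit triggers discarding first depends on traffic, and a quick estimate is worth doing before picking values. The sketch below assumes a steady 500 messages per second at 2 KiB each (both hypothetical figures) and ignores per-message storage overhead and compression; `timeToFill` is an illustrative helper, not a JetStream API.

```go
package main

import (
	"fmt"
	"time"
)

// timeToFill estimates how long a steady publish rate takes to reach MaxBytes.
// It assumes msgsPerSec and avgMsgBytes are both greater than zero.
func timeToFill(maxBytes, msgsPerSec, avgMsgBytes int64) time.Duration {
	bytesPerSec := msgsPerSec * avgMsgBytes
	return time.Duration(maxBytes/bytesPerSec) * time.Second
}

func main() {
	const (
		maxBytes    = 10 * 1024 * 1024 * 1024 // 10 GiB, as in the stream config above
		maxAge      = 72 * time.Hour          // as in the stream config above
		msgsPerSec  = 500                     // assumed publish rate
		avgMsgBytes = 2048                    // assumed average payload size
	)

	fill := timeToFill(maxBytes, msgsPerSec, avgMsgBytes)
	fmt.Printf("MaxBytes reached after about %v\n", fill)
	if fill < maxAge {
		fmt.Println("MaxBytes binds first: retained history is shorter than MaxAge")
	} else {
		fmt.Println("MaxAge binds first")
	}
}
```

At that assumed rate the stream takes in about 1 MB per second, so 10 GiB fills in roughly 2.9 hours, far short of the 72-hour MaxAge. With DiscardOld the effective replay window is then set by MaxBytes, not MaxAge.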

S2Compression reduces storage footprint by roughly 40–60% for typical JSON payloads with negligible CPU overhead. Enable it on file-backed streams unless your workload is already binary-compressed.

With a stream configured and retaining messages durably, the next question is how consumers attach to that stream, track their position, and negotiate delivery guarantees through acknowledgment modes — which is where JetStream’s consumer model becomes the central design surface.

JetStream Consumers: Durable, Ephemeral, and the Ack/Nak Contract

A JetStream stream stores messages. A consumer defines how those messages are delivered to your application — and getting the consumer configuration wrong is the primary source of silent data loss in NATS-based systems.

Every consumer sits on top of a stream and exposes a filtered, ordered view of that stream’s data. Four configuration axes determine its behavior: delivery mode (push or pull), starting position (new messages only, a specific sequence, or a timestamp), subject filter, and acknowledgment policy.

Durable vs. Ephemeral Consumers

The most operationally significant choice is durability. A durable consumer has a name, and NATS Server tracks its acknowledgment progress server-side. When a client disconnects and reconnects — whether due to a crash, a deployment, or a network partition — it resumes from the last acknowledged sequence. Nothing is replayed that was already confirmed, and nothing is skipped.

An ephemeral consumer has no durable name. The server creates it on demand and removes it once it has been inactive, which in practice means shortly after the client disconnects. Use ephemeral consumers for short-lived reads: backfilling a cache on startup, one-shot debugging sessions, or scenarios where losing in-flight messages on disconnect is acceptable. For any production processing pipeline, reach for durable.

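```go
// This snippet uses the older nc.JetStream() API from the nats package;
// the other examples in this article use the newer jetstream package.
js, err := nc.JetStream()
if err != nil {
	log.Fatal(err)
}

// Create the durable pull consumer
_, err = js.AddConsumer("ORDERS", &nats.ConsumerConfig{
	Durable:       "order-processor",
	FilterSubject: "orders.>",
	AckPolicy:     nats.AckExplicitPolicy,
	MaxDeliver:    5,
	AckWait:       30 * time.Second,
})
if err != nil {
	log.Fatal(err)
}

// Bind a pull subscription to that consumer
sub, err := js.PullSubscribe("orders.>", "order-processor", nats.Bind("ORDERS", "order-processor"))
if err != nil {
	log.Fatal(err)
}

// Fetch returns an error (for example a timeout) when no messages arrive in time.
msgs, err := sub.Fetch(10, nats.MaxWait(5*time.Second))
if err != nil {
	log.Printf("fetch: %v", err)
}
for _, msg := range msgs {
	if err := processOrder(msg); err != nil {
		// Nak with delay: redeliver after a 10s backoff
		msg.NakWithDelay(10 * time.Second)
		continue
	}
	msg.Ack()
}
```

The Ack/Nak Contract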

Three acknowledgment signals do most of the work in JetStream, and understanding all three is non-negotiable for correct flow control. (A fourth, Term, tells the server to stop redelivering a message altogether.)

Ack removes the message from the consumer’s redelivery tracking. The server considers it processed. Call this only after your application has durably handled the message — written to a database, forwarded to a downstream service, or whatever constitutes successful processing in your domain.

Nak signals that processing failed and the message should be redelivered. NakWithDelay accepts a duration, which lets you implement progressive backoff directly at the consumer level. This is the primary redelivery primitive in JetStream — not a separate dead letter queue mechanism.

InProgress (also called a “work-in-progress” ack) resets the AckWait timer without acknowledging the message. Use this for long-running processing steps to prevent spurious redelivery while work is still happening.

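```go
// Pull a single message. Pull subscriptions use Fetch rather than NextMsg.
msgs, err := sub.Fetch(1, nats.MaxWait(5*time.Second))
if err != nil || len(msgs) == 0 {
	return
}
msg := msgs[0]

// Signal long processing to prevent AckWait expiry.
// The 10s interval must stay below the consumer's AckWait.
done := make(chan struct{})
defer close(done) // stops the heartbeat once the handler returns
go func() {
	ticker := time.NewTicker(10 * time.Second)
	defer ticker.Stop()
	for {
		select {
		case <-ticker.C:
			msg.InProgress()
		case <-done:
			return
		}
	}
}()

result, err := callSlowExternalAPI(msg.Data)
if err != nil {
	msg.NakWithDelay(30 * time.Second)
	return
}

saveToDatabase(result)
msg.Ack()
```

💡 Pro Tip: Set MaxDeliver on every durable consumer. Without it, a poison message is redelivered indefinitely, consuming resources and starving healthy messages. A bounded MaxDeliver combined with a NakWithDelay backoff gives you controlled retry behavior before you route the message to a dead-letter stream.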

The AckWait duration is the window between delivery and required acknowledgment. If your processing exceeds this window and you haven’t called InProgress, the server redelivers the message to the same consumer — potentially causing duplicate processing if your handler eventually succeeds. Size AckWait conservatively against your p99 processing latency, not your average.
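One way to turn the p99 guidance into a number is to compute a percentile over measured handler durations and add headroom. The sketch below uses the nearest-rank method; the sample data, the `headroom` multiplier, and the helper names are all assumptions for illustration, not NATS recommendations.

```go
package main

import (
	"fmt"
	"sort"
	"time"
)

// percentile returns the nearest-rank percentile (pct from 1 to 100) of samples.
func percentile(samples []time.Duration, pct int) time.Duration {
	if len(samples) == 0 {
		return 0
	}
	sorted := append([]time.Duration(nil), samples...)
	sort.Slice(sorted, func(i, j int) bool { return sorted[i] < sorted[j] })
	rank := (len(sorted)*pct + 99) / 100 // integer ceil(n * pct / 100)
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}

// suggestAckWait multiplies the p99 latency by a headroom factor.
func suggestAckWait(samples []time.Duration, headroom int) time.Duration {
	return percentile(samples, 99) * time.Duration(headroom)
}

// sampleLatencies returns hypothetical handler timings: 98 fast runs and
// 2 slow ones, standing in for real measurements.
func sampleLatencies() []time.Duration {
	samples := make([]time.Duration, 0, 100)
	for i := 0; i < 98; i++ {
		samples = append(samples, 200*time.Millisecond)
	}
	return append(samples, 4*time.Second, 4*time.Second)
}

func main() {
	s := sampleLatencies()
	fmt.Println("p50:", percentile(s, 50))
	fmt.Println("p99:", percentile(s, 99))
	fmt.Println("suggested AckWait:", suggestAckWait(s, 2))
}
```

With these samples the median is 200ms but the p99 is 4s. An AckWait sized from the median or the average would trigger redelivery on every slow run.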

Push vs. Pull Delivery

Pull consumers, as shown above, give your application explicit control over fetch cadence and batch size. Push consumers deliver messages to a subject as they arrive, which works well for low-latency pipelines but complicates backpressure management. For most microservice workloads where processing rate and resource consumption matter, pull is the safer default.

The failure modes covered here — silent drops from missing acks, infinite redelivery from uncapped delivery counts, spurious duplicates from undersized AckWait — are all recoverable at the consumer configuration level. The next section addresses what happens when messages exhaust their delivery attempts and how to route genuinely unprocessable messages without losing visibility into them.

Dead Letter Patterns and Poison Message Handling in JetStream

JetStream has no built-in dead letter queue. That absence is deliberate—the primitives exist to compose one, and the idiomatic pattern gives you more control than a black-box DLQ would. The strategy combines three JetStream mechanisms: MaxDeliver on the consumer, Nak-with-delay in the subscriber, and a dedicated dead-letter stream that receives forwarded failures.

The Retry Budget: MaxDeliver and Nak-with-Delay

Every JetStream consumer accepts a MaxDeliver setting that caps the total number of delivery attempts for any single message. Once that ceiling is hit, JetStream stops redelivering the message to that consumer. On its own, this prevents a poison message from spinning indefinitely, but without additional handling the message is simply abandoned: it is never delivered to that consumer again and it drops out of your operational picture. The server does publish a max-deliveries advisory, but nothing acts on it unless you subscribe to it.

The subscriber side controls when each retry occurs. Calling NakWithDelay parks the message for a specified duration before the next attempt, enabling a backing-off retry loop without external infrastructure. The combination of MaxDeliver and NakWithDelay gives you a fully configurable retry budget: how many attempts the system makes and how much time elapses between each one.

```go
package main

import (
	"context"
	"errors"
	"fmt" // used by forwardToDeadLetter, defined below
	"log"
	"time"

	"github.com/nats-io/nats.go"
	"github.com/nats-io/nats.go/jetstream"
)

func main() {
	nc, err := nats.Connect("nats://localhost:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := jetstream.New(nc)
	if err != nil {
		log.Fatal(err)
	}

	ctx := context.Background()

	// Stream names are case-sensitive; this must match the ORDERS stream.
	consumer, err := js.CreateOrUpdateConsumer(ctx, "ORDERS", jetstream.ConsumerConfig{
		Durable:       "order-processor",
		AckPolicy:     jetstream.AckExplicitPolicy,
		MaxDeliver:    5,
		AckWait:       30 * time.Second,
		FilterSubject: "orders.created",
	})
	if err != nil {
		log.Fatal(err)
	}

	cc, err := consumer.Consume(func(msg jetstream.Msg) {
		meta, err := msg.Metadata()
		if err != nil {
			msg.Nak() // cannot read the delivery count; ask for redelivery
			return
		}

		if err := processOrder(msg); err != nil {
			if meta.NumDelivered >= 4 {
				// Retry budget spent: park the message and stop redelivery.
				forwardToDeadLetter(js, msg)
				msg.Ack()
				return
			}
			// Linear backoff: 10s, 20s, 30s after deliveries 1, 2, 3.
			msg.NakWithDelay(time.Duration(meta.NumDelivered) * 10 * time.Second)
			return
		}
		msg.Ack()
	})
	if err != nil {
		log.Fatal(err)
	}
	defer cc.Stop()

	select {}
}

func processOrder(msg jetstream.Msg) error {
	return errors.New("simulated processing failure")
}
```

The delay grows linearly with each attempt: 10s, 20s, and 30s after the first three failures. A failure on the fourth delivery triggers the dead-letter forward instead of another retry. For exponential backoff, double the delay on each attempt rather than adding a fixed step. The forwarding logic runs on the fourth delivery (NumDelivered >= 4) rather than the fifth so that one delivery attempt remains in reserve: if the handler crashes before the dead-letter write and the final Ack complete, the message is redelivered once more instead of being stranded at the MaxDeliver ceiling.
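For comparison with the linear schedule in the handler above, here is a minimal sketch of a capped exponential schedule. The base delay matches the 10s used in the article; the 60s cap and the `backoffDelay` helper are assumptions chosen for illustration.

```go
package main

import (
	"fmt"
	"time"
)

const (
	baseDelay = 10 * time.Second // delay after the first failed delivery
	maxDelay  = 60 * time.Second // assumed cap so late retries stay bounded
)

// backoffDelay returns the delay to pass to NakWithDelay after the given
// delivery attempt (1-based), doubling each time and capping at maxDelay.
func backoffDelay(numDelivered uint64) time.Duration {
	d := baseDelay
	for i := uint64(1); i < numDelivered; i++ {
		d *= 2
		if d >= maxDelay {
			return maxDelay
		}
	}
	return d
}

func main() {
	for attempt := uint64(1); attempt <= 5; attempt++ {
		fmt.Printf("after delivery %d: wait %v\n", attempt, backoffDelay(attempt))
	}
}
```

In the consumer handler this would replace the linear expression, for example `msg.NakWithDelay(backoffDelay(meta.NumDelivered))`. The cap matters because an uncapped doubling schedule quickly produces delays longer than anyone intends to wait.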

The Dead-Letter Stream

The dead-letter stream is an ordinary JetStream stream configured with a separate subject namespace. Messages forwarded there carry the original payload plus metadata headers identifying the source stream, original subject, and failure count. Preserving this context is what separates a useful dead-letter implementation from a simple discard: operators need to know where a message came from and how many times it failed before deciding how to remediate it.

```go
func forwardToDeadLetter(js jetstream.JetStream, msg jetstream.Msg) {
	meta, err := msg.Metadata()
	if err != nil {
		log.Printf("dead-letter forward: reading metadata failed: %v", err)
		return
	}

	// Copy the payload and record where the message came from.
	deadMsg := nats.NewMsg("deadletter.orders")
	deadMsg.Data = msg.Data()
	deadMsg.Header.Set("X-Original-Subject", msg.Subject())
	deadMsg.Header.Set("X-Stream", meta.Stream)
	deadMsg.Header.Set("X-Delivery-Count", fmt.Sprintf("%d", meta.NumDelivered))
	deadMsg.Header.Set("X-Failed-At", time.Now().UTC().Format(time.RFC3339))

	if _, err := js.PublishMsg(context.Background(), deadMsg); err != nil {
		log.Printf("dead-letter forward failed: %v", err)
	}
}
```

The deadletter.orders subject must be captured by its own stream with a retention policy suited to your observability requirements. Stream names cannot contain dots, so a name such as DEADLETTER with the subject filter deadletter.> is one option. LimitsPolicy with a long MaxAge works well for forensic review. JetStream does not create streams implicitly, and a JetStream publish to a subject that no stream captures fails, so define the stream explicitly at startup and keep its configuration version-controlled and predictable across environments.

Note: Add a Nats-Msg-Id header on the dead-letter publish using a hash of the original message ID. JetStream’s deduplication window prevents duplicate dead-letter entries if your forwarding logic runs more than once due to a race between the ack and the forward.
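A deduplication ID only helps if forwarding the same failed message twice yields the same value. One option, sketched below, is to derive it from the source stream name and the message's stream sequence rather than from anything time-dependent. `deadLetterMsgID` is a hypothetical helper, and the choice of SHA-256 truncated to 16 bytes is an assumption, not a JetStream requirement.

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// deadLetterMsgID derives a stable ID from where the failed message lives in
// its source stream, so repeated forwards of the same message share one ID.
func deadLetterMsgID(stream string, streamSeq uint64) string {
	sum := sha256.Sum256([]byte(fmt.Sprintf("%s:%d", stream, streamSeq)))
	return hex.EncodeToString(sum[:16]) // 32 hex characters
}

func main() {
	id := deadLetterMsgID("ORDERS", 4217) // example stream name and sequence
	fmt.Println("Nats-Msg-Id:", id)
}
```

In the forwarding function this would be set with `deadMsg.Header.Set("Nats-Msg-Id", deadLetterMsgID(meta.Stream, meta.Sequence.Stream))`. Deduplication only covers the dead-letter stream's configured duplicate window, so a forward repeated long after the first one is stored again.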

Replay and Observability

Messages sitting in the dead-letter stream are fully replayable. An operator publishes the payload back to orders.created with a corrected routing or payload, and the main consumer processes it fresh with its delivery counter reset. This is the critical advantage over silently dropping messages at MaxDeliver: the failure is visible in stream metrics, queryable via the NATS CLI (nats stream view DEADLETTER, if the stream capturing deadletter.orders is named DEADLETTER), and actionable without redeployment or code changes.

Dead-letter volume is also a leading indicator of upstream problems. A sudden spike in deadletter.orders message count signals a schema change, a downstream dependency failure, or a deployment regression—conditions that would otherwise surface only as silent processing gaps in your main stream. Alerting on dead-letter write rate gives you an early warning signal that retry budget alone cannot provide.

With the delivery contract fully defined—retries, backoff, and poison message capture—the next step is choosing which combination of these patterns fits a given service topology.

Choosing the Right Pattern: A Decision Framework

Three patterns cover the vast majority of NATS architectures. The right choice depends on two axes: whether you need persistence across restarts, and whether you need delivery guarantees beyond fire-and-forget.

Core NATS + Queue Groups

Use this when latency is the primary constraint and your processing logic is idempotent. Core NATS with a queue group delivers sub-millisecond routing with no broker-side state. If a subscriber crashes mid-processing, the message is gone—but if your handler can safely reprocess the same event (or the business domain tolerates occasional loss), that tradeoff is acceptable. Metric aggregation pipelines, cache invalidation signals, and real-time UI push notifications are canonical fits. The moment you need to audit which messages were processed, or replay a backlog after a deployment, this pattern runs out of road.

JetStream Push Consumers

Use this when multiple independent services each need their own complete view of a stream. Each service gets its own durable push consumer, and every consumer receives every retained message independently of the others. An audit service and a billing service can both consume orders.confirmed messages from the first stream sequence without competing. The server drives delivery, so this pattern is poorly suited to consumers with variable processing speed: backpressure is your responsibility to manage in the subscriber. Pair push consumers with DeliverNew or DeliverLastPerSubject policies when you want event-driven triggers on current data rather than full replay.

JetStream Pull Consumers (Durable)

Use this for any work queue where reliability is non-negotiable. Pull consumers give processing capacity control to the client: workers fetch exactly as many messages as they can handle, ack on success, nak or let the ack timeout expire on failure, and the server redelivers automatically. Durable consumers survive server restarts and client disconnects without losing position. Add a MaxDeliver limit and a dead-letter stream (as covered in the previous section) and you have a complete at-least-once processing pipeline with poison message isolation.

💡 Pro Tip: Start with the delivery guarantee you need, then work backward to the pattern. If you reach for JetStream by default, you pay persistence overhead on workloads that never needed it.

| Need | Pattern |
|---|---|
| Low latency, stateless, idempotent | Core NATS + Queue Group |
| Independent fan-out to multiple consumers | JetStream Push Consumer |
| Reliable work queue with backpressure | JetStream Pull Consumer (Durable) |
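The table can also be read as a two-question decision procedure. The sketch below encodes it directly; the `needs` type and `choosePattern` function are hypothetical, and real systems often have requirements that do not reduce to two booleans.

```go
package main

import "fmt"

// needs captures the questions behind the table above.
type needs struct {
	mustNotLoseMessages bool // persistence and redelivery are required
	independentReaders  bool // several services each need every message
}

// choosePattern maps a set of needs to one of the three patterns.
func choosePattern(n needs) string {
	switch {
	case !n.mustNotLoseMessages:
		return "Core NATS + Queue Group"
	case n.independentReaders:
		return "JetStream Push Consumer"
	default:
		return "JetStream Pull Consumer (Durable)"
	}
}

func main() {
	fmt.Println(choosePattern(needs{}))
	fmt.Println(choosePattern(needs{mustNotLoseMessages: true, independentReaders: true}))
	fmt.Println(choosePattern(needs{mustNotLoseMessages: true}))
}
```

The ordering of the cases reflects the article's advice: start from the delivery guarantee, and only then decide how consumers share or duplicate the work.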

The concepts covered throughout this article—subjects, queue groups, streams, consumers, and dead-letter handling—compose into these three patterns. Understanding each primitive in isolation is what lets you combine them deliberately rather than by convention.

Key Takeaways

- Default to JetStream durable pull consumers for any workload where losing a message on crash is unacceptable — Core NATS queue groups offer no redelivery guarantee

- Design your subject hierarchy before writing any code: subject structure drives ACL policies, stream filter subjects, and consumer fan-out topology

- Implement poison message handling explicitly using MaxDeliver + a dead-letter stream rather than assuming infinite retries will eventually succeed