NATS Streaming: Building Resilient Event-Driven Systems Without Kafka's Complexity

Your microservices need reliable message delivery, but Kafka feels like overkill for your team’s scale. You’re tired of managing ZooKeeper clusters and tuning partition counts for a system that processes thousands—not millions—of messages per second. The operational overhead doesn’t match your throughput requirements, yet you can’t afford to lose messages when services restart or networks hiccup.

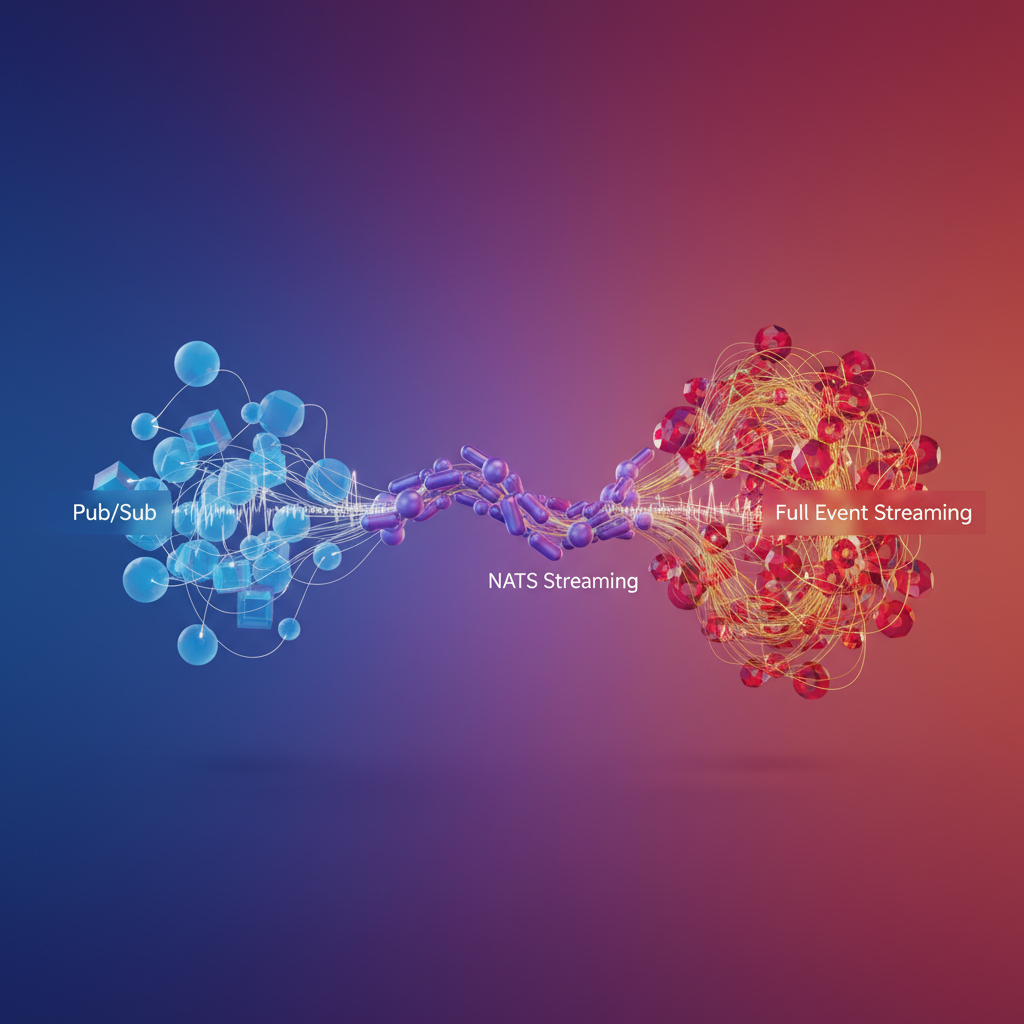

This is the infrastructure trap many engineering teams fall into: choosing between ephemeral pub/sub systems that can’t guarantee delivery and enterprise event streaming platforms that demand dedicated operations teams. Core NATS gives you blazing-fast message delivery with minimal setup, but messages vanish if no subscriber is listening. Kafka persists everything and scales to massive workloads, but you’ll spend weeks understanding consumer groups, replication factors, and compaction policies before processing your first event.

NATS Streaming emerged to bridge this gap. It takes NATS’s operational simplicity—single binary, no external dependencies, minimal configuration—and adds the persistence guarantees your business logic requires. Messages survive service restarts. Subscribers can replay event history. Failed deliveries get retried automatically. You get at-least-once delivery semantics without hiring a distributed systems expert to keep your infrastructure running.

The trade-offs matter, though. NATS Streaming makes specific design decisions about durability, ordering, and throughput that work brilliantly for certain use cases and poorly for others. Understanding where it fits requires looking at why it exists in the first place: the architectural gap between simple pub/sub and full event streaming platforms.

Why NATS Streaming Exists: The Gap Between Pub/Sub and Full Event Streaming

Modern distributed systems face a persistent challenge: choosing between simple, fast messaging and durable, reliable event streaming. Core NATS excels at the former—delivering sub-millisecond pub/sub messaging with minimal resource overhead—but it sacrifices persistence for speed. Messages published to NATS exist only in memory; if no subscriber is listening when a message arrives, that message disappears. For fire-and-forget notifications or real-time telemetry, this works perfectly. For order processing, financial transactions, or any workflow where message loss is unacceptable, it falls short.

On the opposite end of the spectrum sits Apache Kafka, the industry standard for durable event streaming. Kafka persists every message to disk, provides strong ordering guarantees, and supports massive throughput at scale. But this power comes with operational complexity: ZooKeeper coordination (or KRaft in newer versions), partition management, consumer group rebalancing, and resource-intensive brokers. For teams building microservices that handle thousands—not millions—of events per second, Kafka feels like deploying a freight train to deliver a package.

NATS Streaming bridges this gap by adding persistence and replay capabilities to NATS without inheriting Kafka’s operational burden. It is a separate server layered on core NATS: it either embeds a NATS server or connects to an existing one, and it talks to that server as an ordinary NATS client. NATS Streaming stores messages in channels (analogous to Kafka topics) using pluggable storage backends such as file-based logs or SQL databases. When a durable subscriber disconnects and reconnects, it resumes from its last acknowledged message position. When a new service spins up, it can replay historical events from the beginning of a channel, subject to the channel’s retention limits. This enables event sourcing patterns and audit trails without requiring a dedicated operations team.

The delivery guarantee model clarifies the tradeoff: NATS Streaming provides at-least-once delivery. Messages persist until acknowledged, and the server tracks each subscription’s position independently. If a subscriber crashes before acknowledging, NATS Streaming redelivers the message—potentially multiple times. This differs from Kafka’s at-least-once (default) and exactly-once (with transactional producers) semantics, and from core NATS’s at-most-once fire-and-forget approach. For most microservice workflows—order creation, user registration, inventory updates—at-least-once delivery paired with idempotent message handlers provides the right balance of reliability and simplicity.

💡 Pro Tip: NATS Streaming has been superseded by JetStream, which is built into NATS Server 2.2 and later, and the NATS team has deprecated NATS Streaming, committing to critical bug and security fixes only until June 2023. NATS Streaming remains widely deployed, so the concepts here still matter for existing systems, but treat JetStream as the default for new work.

Understanding where NATS Streaming sits in the messaging landscape—more durable than pub/sub, less complex than Kafka—helps you choose the right tool for your scale and requirements. For teams running dozens of microservices with moderate event volumes, NATS Streaming delivers persistence without operational overhead. With that foundation established, the next step is getting a NATS Streaming server running and publishing your first persistent message.

Setting Up Your First NATS Streaming Server

Getting NATS Streaming running is straightforward—you can have a production-ready server with persistent storage operational in minutes. Unlike Kafka’s multi-component architecture requiring ZooKeeper and careful broker configuration, NATS Streaming runs as a single binary with sensible defaults.

Running with Docker

The fastest way to start is with Docker. For development and testing, an in-memory configuration works well:

```bash
docker run -d --name nats-streaming \
  -p 4222:4222 \
  -p 8222:8222 \
  nats-streaming:latest \
  -p 4222 \
  -m 8222 \
  --store memory \
  --max_channels 100 \
  --max_msgs 1000000
```

This exposes port 4222 for client connections and 8222 for HTTP monitoring. Because no --cluster_id is given, the server uses its default cluster ID, test-cluster. The memory store is fast but non-durable: restarts wipe all data. This trade-off makes sense for local development where you prioritize iteration speed over persistence, or for ephemeral workloads like test suites that generate disposable event streams.

For production, file-based persistence is essential:

```bash
docker run -d --name nats-streaming-persistent \
  -p 4222:4222 \
  -p 8222:8222 \
  -v /var/nats/data:/data \
  nats-streaming:latest \
  -p 4222 \
  -m 8222 \
  --store file \
  --dir /data \
  --cluster_id prod-cluster \
  --max_channels 1000 \
  --max_msgs 10000000 \
  --max_bytes 10GB
```

The --dir flag specifies where NATS Streaming persists message logs. Mounting /var/nats/data ensures data survives container restarts. The cluster_id identifies your streaming cluster, and clients must reference this exact ID when connecting. Choose a descriptive cluster ID that reflects your environment; using generic names like “test-cluster” in production creates confusion when debugging multi-environment issues.

Configuration Deep Dive

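The --max_channels, --max_msgs, and --max_bytes parameters control resource limits. --max_channels caps how many channels the server will create, while --max_msgs and --max_bytes apply to each channel individually. Set these based on your workload: a microservices architecture with 50 event types might need 200 channels, while a high-throughput logging system needs higher message counts and byte limits. These values are ceilings, not reservations: each channel that actually exists maintains its own message log, so the number of live channels and retained messages is what drives memory and disk usage.

Beyond basic limits, consider how the file store flushes to disk. In the server releases documented by the NATS project, --file_sync (enabled by default) makes the store call fsync whenever it flushes, and --file_auto_sync sets the interval for a background flush and sync (one minute by default); confirm the exact flags for your release with nats-streaming-server --help. Keeping sync-on-flush enabled gives the strongest durability at a significant cost in write throughput. For systems that can tolerate losing a few seconds of messages after a crash, disabling it and relying on a short auto-sync interval such as 5s or 10s improves throughput substantially.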

File store performance depends critically on disk I/O characteristics. Use SSDs for production deployments—HDDs introduce latency that defeats NATS’s sub-millisecond messaging advantage. Beyond drive technology, filesystem choice matters: ext4 and XFS both perform well, but avoid network-mounted volumes like NFS where fsync latency becomes unpredictable.

💡 Pro Tip: Start with conservative limits and monitor actual usage through the metrics endpoint. Limits set too high let a runaway publisher exhaust disk or memory; limits set too low cause channel creation to fail or discard older messages sooner than you expect. The sweet spot emerges from observing real traffic patterns, not from guessing.

Connection Strings and Authentication

Clients connect to the ordinary NATS URL and pass the cluster ID and a unique client ID as separate connection parameters; there is no dedicated nats-streaming:// URL scheme:

```bash
# Basic connection (no auth): clients use the plain NATS URL
#   URL: nats://localhost:4222   cluster ID: prod-cluster   client ID: unique per client

# With authentication
docker run -d --name nats-streaming-auth \
  -p 4222:4222 \
  nats-streaming:latest \
  -p 4222 \
  --cluster_id secure-cluster \
  --user admin \
  --pass S3cureP@ssw0rd
```

For production, basic username/password authentication is a starting point but not sufficient for multi-tenant systems. Use token-based authentication, or run NATS Streaming against an external NATS 2.x server and use its account-based security model where your deployment supports it. Accounts let you segregate publishers and subscribers by logical boundaries, preventing cross-tenant data leakage, which is critical for SaaS platforms running shared NATS infrastructure.

TLS encryption adds another security layer. Enable it with the embedded NATS server’s --tlscert and --tlskey flags pointing to your certificate and key files (confirm the flag names for your release with --help). Clients then connect with a tls:// URL or their library’s TLS options, ensuring message payloads remain encrypted in transit. For internal networks behind firewalls, unencrypted connections may suffice; for anything crossing untrusted networks, TLS is non-negotiable.

Health Checks and Monitoring

The monitoring endpoint at http://localhost:8222 provides real-time server statistics:

```bash
# Server health
curl http://localhost:8222/streaming/serverz

# Channel statistics (subs=1 adds per-channel and per-subscription detail)
curl "http://localhost:8222/streaming/channelsz?subs=1"

# Client connections
curl http://localhost:8222/streaming/clientsz
```

These endpoints return JSON with message counts, byte usage, and active subscriptions, all critical metrics for capacity planning. The /streaming/channelsz response shows per-channel message counts and each subscription’s delivery position, helping identify slow consumers before they cause systemic issues. Stored message counts grow until retention limits apply regardless of consumer health, so watch the gap instead: if a subscription’s last_sent value falls further and further behind the channel’s last_seq, you’ve got a consumer that’s fallen behind or died entirely.
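The lag check described above can be sketched in a few lines of Python. This is a minimal sketch, not an official tool: it assumes the channelsz response (requested with the subs=1 query parameter) contains a channels list whose entries carry name, last_seq, and a subscriptions list with client_id and last_sent fields. The SAMPLE payload is hand-written for illustration, so verify the field names against your own server’s output.

```python
import json


def lagging_subscriptions(channelsz: dict, max_lag: int) -> list[tuple[str, str, int]]:
    """Return (channel, client_id, lag) for subscriptions more than max_lag behind."""
    lagging = []
    for channel in channelsz.get("channels") or []:
        last_seq = channel.get("last_seq", 0)
        for sub in channel.get("subscriptions") or []:
            # Lag = newest stored sequence minus the last sequence sent to this subscriber.
            lag = last_seq - sub.get("last_sent", 0)
            if lag > max_lag:
                lagging.append((channel["name"], sub.get("client_id", "?"), lag))
    return lagging


# Hand-written sample in the assumed response shape, not real server output.
SAMPLE = json.loads("""
{"channels": [
  {"name": "orders.created", "msgs": 5000, "bytes": 640000,
   "first_seq": 1, "last_seq": 5000,
   "subscriptions": [
     {"client_id": "order-processor", "last_sent": 4990, "pending_count": 3},
     {"client_id": "audit-service", "last_sent": 1200, "pending_count": 0}
   ]}
]}
""")

for channel, client, lag in lagging_subscriptions(SAMPLE, max_lag=100):
    print(f"{channel}: {client} is {lag} messages behind")
```

In practice you would fetch the JSON from the monitoring port on a schedule and alert when the returned list is non-empty for several consecutive checks.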

Integrate with Prometheus using the NATS Prometheus exporter for historical trending and alerting. Configure alerts on metrics like connection count drops (potential network partition), file store write errors (disk issues), or subscription lag exceeding thresholds. Reactive monitoring catches fires; predictive trending prevents them.

With your server running and monitored, you’re ready to publish your first messages and explore NATS Streaming’s subscription durability features.

Publishing and Subscribing: From Basic Messages to Durable Subscriptions

NATS Streaming’s power lies in its ability to provide guaranteed message delivery while maintaining simplicity. Unlike core NATS’s fire-and-forget model, NATS Streaming ensures every published message is acknowledged and persisted before confirming success to the publisher.

Publishing with Acknowledgment

Publishing to NATS Streaming requires waiting for confirmation that the message has been persisted. This synchronous acknowledgment model prevents message loss at the cost of slightly higher latency:

```python
import asyncio
import json

from nats.aio.client import Client as NATS
from stan.aio.client import Client as STAN


async def publish_order(stan_client, order_id, amount):
    """Publish an order event and wait for the server's acknowledgment."""
    order_data = json.dumps({"order_id": order_id, "amount": amount})

    # publish() returns once the server has acknowledged the message.
    # The ack carries the message GUID, not the channel sequence number.
    ack = await stan_client.publish(
        subject="orders.created",
        payload=order_data.encode(),
    )
    print(f"Published order {order_id}, guid: {ack.guid}")


async def main():
    nc = NATS()
    sc = STAN()

    await nc.connect(servers=["nats://localhost:4222"])
    # "test-cluster" is the server's default cluster ID.
    await sc.connect("test-cluster", "order-publisher", nats=nc)

    await publish_order(sc, "ORD-1001", 149.99)
    await publish_order(sc, "ORD-1002", 299.50)

    await sc.close()
    await nc.close()


if __name__ == "__main__":
    asyncio.run(main())
```

The publisher awaits the acknowledgment before continuing, ensuring the message is safely stored. For high-throughput scenarios, you can publish asynchronously with callbacks to handle acks without blocking.
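To illustrate the non-blocking approach, here is a minimal sketch of bounded concurrent publishing using only asyncio. The fake_publish coroutine is a hypothetical stand-in for a client publish call that resolves when the server acknowledges; it is not part of any NATS library, and max_pending is an illustrative parameter name.

```python
import asyncio


async def fake_publish(subject: str, payload: bytes) -> str:
    """Hypothetical stand-in for a publish call that resolves once the server acks."""
    await asyncio.sleep(0.01)  # pretend network and persistence latency
    return f"ack:{subject}:{len(payload)}"


async def publish_all(payloads, max_pending: int = 16):
    """Publish concurrently, never exceeding max_pending unacknowledged messages."""
    gate = asyncio.Semaphore(max_pending)

    async def publish_one(payload: bytes) -> str:
        async with gate:  # waits here when too many acks are outstanding
            return await fake_publish("orders.created", payload)

    # gather returns results in input order; a failed publish raises here.
    return await asyncio.gather(*(publish_one(p) for p in payloads))


acks = asyncio.run(publish_all([f"order-{i}".encode() for i in range(100)]))
print(len(acks), "messages acknowledged")
```

The semaphore caps memory and gives natural backpressure when the server slows down. Note that concurrent publishes may reach the server in a different order than they were issued, so keep strictly ordered streams on the sequential path.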

Durable Subscriptions: Surviving Restarts

Regular subscriptions lose their position when a client disconnects. Durable subscriptions persist their progress on the server, allowing consumers to restart exactly where they left off:

```python
import asyncio

from nats.aio.client import Client as NATS
from stan.aio.client import Client as STAN


async def main():
    nc = NATS()
    sc = STAN()

    await nc.connect(servers=["nats://localhost:4222"])
    await sc.connect("test-cluster", "order-processor", nats=nc)

    async def message_handler(msg):
        """Process incoming order events."""
        print(f"Received sequence {msg.seq}: {msg.data.decode()}")
        # Simulate processing time
        await asyncio.sleep(0.1)
        # With manual_acks=True, unacknowledged messages are redelivered
        # after ack_wait, so ack only once processing has succeeded.
        await sc.ack(msg)

    # Create durable subscription
    await sc.subscribe(
        subject="orders.created",
        queue="order-processors",
        durable_name="orders-durable",
        cb=message_handler,
        manual_acks=True,
        ack_wait=30,  # Redeliver if not acked within 30s
    )

    print("Listening for orders... Press Ctrl+C to exit")
    await asyncio.Event().wait()


if __name__ == "__main__":
    asyncio.run(main())
```

The durable_name parameter creates a server-side bookmark. When this consumer restarts with the same client ID and durable name, it resumes from the last acknowledged message rather than starting over or missing messages.

Queue Groups for Load Balancing

Queue groups distribute messages across multiple consumers, enabling horizontal scaling. Each message is delivered to only one member of the queue group:

```python
import asyncio

from nats.aio.client import Client as NATS
from stan.aio.client import Client as STAN


async def worker(worker_id):
    """Individual worker in the processing pool."""
    nc = NATS()
    sc = STAN()

    await nc.connect(servers=["nats://localhost:4222"])
    await sc.connect("test-cluster", f"worker-{worker_id}", nats=nc)

    async def process_message(msg):
        print(f"Worker {worker_id} processing seq {msg.seq}")
        await asyncio.sleep(0.5)
        await sc.ack(msg)  # this client acknowledges through the connection

    await sc.subscribe(
        subject="orders.created",
        queue="order-processors",
        durable_name="orders-durable",
        cb=process_message,
        manual_acks=True,
        max_inflight=5,  # Allow up to 5 unacknowledged messages per worker
    )

    await asyncio.Event().wait()


async def main():
    # Spin up 3 workers in the same queue group
    workers = [worker(i) for i in range(3)]
    await asyncio.gather(*workers)


if __name__ == "__main__":
    asyncio.run(main())
```

The combination of queue groups and durable subscriptions provides both load balancing and fault tolerance. If worker-0 crashes, worker-1 and worker-2 continue processing, and unacknowledged messages are redelivered based on the ack_wait timeout.

💡 Pro Tip: Set max_inflight based on your processing capacity. A higher value increases throughput but requires more memory to buffer unacknowledged messages.

Handling Redelivery

NATS Streaming automatically redelivers messages that aren’t acknowledged within the ack_wait period. Your handlers must be idempotent to safely handle duplicate processing during network issues or consumer crashes. Track processed message sequences in your database or use external deduplication when necessary.
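The interaction between ack_wait and idempotent handlers can be sketched with a toy model. MiniChannel below is purely illustrative and is not how the server is implemented; it only tracks unacknowledged messages and their redelivery deadlines so the duplicate-delivery scenario can be followed step by step. The processed set stands in for durable deduplication state.

```python
from dataclasses import dataclass, field


@dataclass
class MiniChannel:
    """Toy model of ack_wait redelivery; not the server's actual implementation."""
    ack_wait: float
    pending: dict = field(default_factory=dict)  # seq -> (payload, redelivery deadline)

    def deliver(self, seq: int, payload: str, now: float) -> None:
        self.pending[seq] = (payload, now + self.ack_wait)

    def ack(self, seq: int) -> None:
        self.pending.pop(seq, None)

    def due_for_redelivery(self, now: float) -> list:
        """Return unacked messages whose deadline has passed and restart their timers."""
        due = [(seq, p) for seq, (p, deadline) in sorted(self.pending.items()) if deadline <= now]
        for seq, payload in due:
            self.pending[seq] = (payload, now + self.ack_wait)
        return due


processed = set()  # stands in for durable dedup state
shipped = []       # the side effect we must not repeat


def handle(seq: int, payload: str) -> None:
    if seq in processed:  # duplicate delivery: skip the side effect
        return
    shipped.append(payload)
    processed.add(seq)


chan = MiniChannel(ack_wait=30.0)
chan.deliver(1, "ORD-1001", now=0.0)
handle(1, "ORD-1001")  # side effect happens, but the ack is lost
for seq, payload in chan.due_for_redelivery(now=31.0):
    handle(seq, payload)  # the redelivered copy is ignored
    chan.ack(seq)
print(shipped)
```

The order ships once even though the message was delivered twice, which is the behavior an idempotent handler has to guarantee under at-least-once delivery.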

With these patterns in place, you’ve built a resilient message processing system. The next step is leveraging NATS Streaming’s unique capability: replaying historical messages to rebuild state or recover from data loss.

Message Replay and Time-Travel: Leveraging Persistent Streams

Unlike traditional pub/sub systems where messages vanish after delivery, NATS Streaming persists every message to disk, enabling powerful replay capabilities that transform how you debug production issues and build audit systems. This persistence layer turns your message stream into a queryable event log, providing both historical context and operational flexibility that ephemeral messaging simply cannot match.

Replaying from Sequence Numbers

Every message in a NATS Streaming channel receives a monotonically increasing sequence number. Subscribers can start consuming from any point in the stream’s history:

```javascript
const stan = require('node-nats-streaming').connect('my-cluster', 'replay-client');

stan.on('connect', () => {
  const opts = stan.subscriptionOptions()
    .setStartAtSequence(1000) // Start from message 1000
    .setManualAckMode(true)
    .setAckWait(30000);

  const subscription = stan.subscribe('orders', 'audit-group', opts);

  subscription.on('message', (msg) => {
    const data = JSON.parse(msg.getData());
    console.log(`Seq ${msg.getSequence()}: ${data.orderId} at ${new Date(msg.getTimestamp())}`);
    msg.ack();
  });
});
```

This pattern enables reprocessing specific message ranges when bugs corrupt downstream data. If you discover that a faulty payment processor mishandled the messages at sequences 15000 through 16500, start a new subscriber at sequence 15000 and have it unsubscribe once it passes 16500; the start option controls only where delivery begins, not where it ends. The precision of sequence-based replay makes it ideal for targeted recovery operations where you know exactly which message range needs reprocessing.

Beyond simple recovery, sequence-based replay supports sophisticated event sourcing architectures. Rebuild read models from scratch by replaying the entire event stream, or create new projections by processing historical events through different business logic. Because a channel’s order never changes, replaying the same sequence range produces identical state transitions as long as your handlers are deterministic.

Time-Based Replay for Production Debugging

Sequence numbers work when you know the exact range, but production incidents often reference timestamps: “The service started failing at 3:47 PM.” NATS Streaming supports time-based replay:

```javascript
const stan = require('node-nats-streaming').connect('my-cluster', 'debug-client');

stan.on('connect', () => {
  // Replay all messages from the last 2 hours
  const twoHoursAgo = new Date(Date.now() - (2 * 60 * 60 * 1000));

  // Set exactly one start position; a later call such as
  // setDeliverAllAvailable() would override the start time.
  const opts = stan.subscriptionOptions()
    .setStartTime(twoHoursAgo);

  const subscription = stan.subscribe('user-events', opts);

  subscription.on('message', (msg) => {
    const event = JSON.parse(msg.getData());
    console.log(`[${new Date(msg.getTimestamp()).toISOString()}] ${event.type}: ${event.userId}`);
  });
});
```

This capability is invaluable during incident response. Start a temporary subscriber that replays messages from when alerts fired, pipe the output to your analysis tools, and identify the exact event that triggered the cascade failure, all without affecting production subscribers. Time-based replay aligns naturally with how engineers think during outages: correlating application behavior with specific time windows rather than abstract sequence numbers.

The timestamps NATS Streaming assigns to messages reflect when the server received them, not when publishers sent them. Account for this when debugging distributed timing issues—clock skew between publishers doesn’t affect stream ordering, but the recorded timestamps may differ from application-level timestamps in your message payloads.

Building Audit Logs with Complete History

The combination of persistence and replay makes NATS Streaming excellent for audit trails. Every state change flows through the stream and remains replayable until the channel’s retention limits purge it:

```javascript
const stan = require('node-nats-streaming').connect('my-cluster', 'audit-service');

stan.on('connect', () => {
  const opts = stan.subscriptionOptions()
    .setDeliverAllAvailable() // Get entire history
    .setDurableName('audit-durable')
    .setManualAckMode(true); // Required for msg.ack() below to take effect

  const subscription = stan.subscribe('account-changes', 'audit-group', opts);

  subscription.on('message', (msg) => {
    const change = JSON.parse(msg.getData());

    // Write to long-term storage (`database` is a placeholder for your storage client)
    database.insert('audit_log', {
      sequence: msg.getSequence(),
      timestamp: new Date(msg.getTimestamp()),
      actor: change.userId,
      action: change.action,
      resource: change.accountId,
      details: change.details
    });

    msg.ack();
  });
});
```

💡 Pro Tip: Use durable subscriptions for audit consumers. If the audit service crashes and restarts, it automatically resumes from the last acknowledged message without missing entries.

For compliance scenarios requiring tamper-proof audit logs, combine NATS Streaming with write-once storage backends. The stream provides the operational layer for real-time processing while immutable archives satisfy regulatory requirements. Periodically export message ranges to S3 Glacier or similar archival storage, retaining only recent data in NATS for active replay.

Understanding Retention Policies

Unlimited retention isn’t realistic for high-throughput systems. Configure retention limits when starting the NATS Streaming server:

```bash
nats-streaming-server \
  --cluster_id my-cluster \
  --max_msgs 1000000 \
  --max_bytes 10GB \
  --max_age 168h
```

Messages are deleted when any limit is reached. For compliance requirements demanding seven-year retention, archive old messages to object storage while keeping recent data in NATS for fast replay. The max_age parameter uses duration strings (168h = 7 days), while max_msgs and max_bytes enforce hard limits on stream size. Once any threshold is exceeded, the oldest messages are purged first.
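To make the "any limit, oldest first" rule concrete, the following sketch models how the three limits interact on a single channel. It is a simplified model for reasoning about configuration, not the server’s actual purge code; StoredMsg and apply_limits are names invented for this example, and a value of 0 is treated as "no limit".

```python
from dataclasses import dataclass


@dataclass
class StoredMsg:
    seq: int
    size: int          # payload size in bytes
    timestamp: float   # seconds since epoch


def apply_limits(msgs, now, max_msgs=0, max_bytes=0, max_age=0.0):
    """Return the messages that survive; 0 means 'no limit' for each setting."""
    kept = list(msgs)  # assumed sorted by sequence, oldest first
    if max_age:
        kept = [m for m in kept if now - m.timestamp <= max_age]
    while max_msgs and len(kept) > max_msgs:
        kept.pop(0)  # drop the oldest message
    while max_bytes and sum(m.size for m in kept) > max_bytes:
        kept.pop(0)
    return kept


log = [StoredMsg(seq=i, size=100, timestamp=float(i)) for i in range(1, 11)]
survivors = apply_limits(log, now=10.0, max_msgs=8, max_bytes=500, max_age=6.0)
print([m.seq for m in survivors])  # prints [6, 7, 8, 9, 10]
```

Here max_age removes sequences 1 to 3, max_msgs has nothing left to trim, and max_bytes then removes 4 and 5: the tightest limit determines what you can replay, so size all three against your real replay window.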

Consider your retention policy carefully based on actual replay patterns. If most debugging sessions examine the last 24 hours and reprocessing rarely looks back more than a week, a 168-hour retention window provides sufficient operational flexibility without excessive storage costs. Monitor disk usage and replay request patterns to tune these values over time.

With replay mechanics mastered, the next challenge is deploying NATS Streaming servers that can actually survive datacenter failures without losing messages.

Production Deployment: Clustering and Fault Tolerance

Moving NATS Streaming to production requires understanding its high-availability options and choosing the right persistence strategy. Unlike core NATS’s full-mesh clustering, where every server handles traffic, NATS Streaming funnels work through a single active or leader server while the other servers stand by or replicate.

Understanding NATS Streaming’s Clustering Model

NATS Streaming offers two mutually exclusive high-availability modes. In fault-tolerance (FT) mode, enabled with -ft_group, several servers share one store (a shared filesystem or a SQL database); only the server holding the store lock is active, and a standby claims the lock if the active server fails. In clustered mode, enabled with -clustered, each server keeps its own store and the servers replicate data using Raft; an elected leader handles client requests, and the remaining nodes elect a new leader if it fails.

In both modes one server does the work at any moment. This simplifies consistency guarantees, but it means vertical scaling matters more than horizontal scaling: the active server or leader must handle your entire message throughput.

The failover process typically completes within seconds. When the active server becomes unavailable, the remaining servers detect the missed heartbeats and either compete for the store lock (FT mode) or elect a new leader (clustered mode). The new active server recovers state from its store and resumes delivery. Clients stay connected to the underlying NATS servers throughout, so they usually need nothing more than tolerance for a brief pause in delivery and for possible redeliveries.

Kubernetes Deployment with StatefulSets

Deploy NATS Streaming on Kubernetes using StatefulSets to maintain stable network identities and persistent storage:

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: nats-streaming
spec:
  serviceName: nats-streaming
  replicas: 3
  selector:
    matchLabels:
      app: nats-streaming
  template:
    metadata:
      labels:
        app: nats-streaming
    spec:
      containers:
        - name: nats-streaming
          image: nats-streaming:0.25.6
          env:
            # Pod name (nats-streaming-0, -1, -2) doubles as the Raft node ID
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
          args:
            - "-store"
            - "file"
            - "-dir"
            - "/data/nats-streaming"
            - "-clustered"
            - "-cluster_node_id"
            - "$(POD_NAME)"
            - "-cluster_peers"
            - "nats-streaming-0,nats-streaming-1,nats-streaming-2"
            - "-cluster_log_path"
            - "/data/raft"
            - "-cluster_id"
            - "production-cluster"
            - "-nats_server"
            - "nats://nats:4222"
            - "-m"
            - "8222"
            - "-max_channels"
            - "1000"
            - "-max_msgs"
            - "1000000"
            - "-max_bytes"
            - "10GB"
          ports:
            - containerPort: 8222
              name: monitoring
          volumeMounts:
            - name: datadir
              mountPath: /data
          resources:
            requests:
              memory: "2Gi"
              cpu: "1000m"
            limits:
              memory: "4Gi"
              cpu: "2000m"
          livenessProbe:
            httpGet:
              path: /streaming/serverz
              port: 8222
            initialDelaySeconds: 10
            periodSeconds: 10
          readinessProbe:
            httpGet:
              path: /streaming/serverz
              port: 8222
            initialDelaySeconds: 5
            periodSeconds: 5
  volumeClaimTemplates:
    - metadata:
        name: datadir
      spec:
        accessModes: ["ReadWriteOnce"]
        storageClassName: "fast-ssd"
        resources:
          requests:
            storage: 100Gi
```

The -clustered flag enables Raft-based clustering: each pod keeps its own store on its own volume, and the three nodes replicate channel data between them. -cluster_node_id and -cluster_peers tell the nodes about each other, -cluster_log_path keeps the Raft log on the persistent volume, and -m enables the monitoring port that the probes rely on. Fault-tolerance mode with -ft_group is the alternative, but it requires all servers to share one store, which ReadWriteOnce volumes do not provide, and the two modes cannot be combined. Clients connect to the NATS service (nats://nats:4222 here), not to these pods directly. If the leader pod crashes, the remaining nodes elect a new leader while Kubernetes restarts the failed pod. Three replicas tolerate the loss of one node while preserving a Raft quorum. Treat this manifest as a starting point and validate the flags against the server version you deploy.

Choose storage classes carefully. NATS Streaming performs sequential disk writes, so SSD-backed volumes (fast-ssd in the example) deliver significantly better throughput than network-attached storage. For high-throughput deployments, consider local NVMe volumes with appropriate backup strategies.

Choosing Your Persistence Backend

Besides the in-memory store used for development, NATS Streaming supports two persistent storage backends with different performance and operational characteristics:

File Store offers the best performance and simplicity. Messages write to disk in a structured file format optimized for sequential access. This works well for most deployments and handles millions of messages efficiently. The StatefulSet example above uses file store with persistent volumes.

File store organizes data into multiple files per channel: message logs, subscriber state, and indexing structures. This design enables fast lookups when clients request historical messages by sequence number. Writes are sequential appends, so they are fast on SSDs, and recovery time after a restart grows with the amount of retained data; measure both on your own hardware rather than relying on headline numbers.

SQL Store trades performance for operational familiarity. Supported databases include PostgreSQL and MySQL. Use SQL when your team prefers database-backed persistence or needs to query message metadata directly:

```yaml
args:
  - "-store"
  - "sql"
  - "-sql_driver"
  - "postgres"
  - "-sql_source"
  - "postgresql://nats:secure-password-8kj2@postgres:5432/nats_streaming?sslmode=require"
  - "-ft_group"
  - "streaming-ft"
```

SQL store adds latency (typically 2-5ms per operation) but integrates with existing database backup and replication infrastructure. In fault-tolerance mode, multiple NATS Streaming servers can share the same database, using a lock held in the database to coordinate which server is active; do not add -clustered here, since the two modes are mutually exclusive. This approach leverages your database’s high-availability features: PostgreSQL with synchronous replication or MySQL with group replication provides fault tolerance at the storage layer.

💡 Pro Tip: Start with file store unless you have a compelling reason to use SQL. File store is the faster option and handles large message volumes with minimal tuning. SQL store makes sense when you need to integrate NATS Streaming with existing database observability tools or want to offload storage management to your database team.

Monitoring and Observability

NATS Streaming does not serve Prometheus metrics itself. It serves JSON on the monitoring port (8222 in these examples), and the NATS Prometheus exporter translates that JSON into metrics. Exact metric names depend on the exporter version, so confirm them against your exporter’s /metrics output; the names below illustrate what to track:

- nats_streaming_server_channels: Number of active channels
- nats_streaming_server_subscriptions: Total subscriptions across all channels
- nats_streaming_channel_msgs_total: Messages stored per channel
- nats_streaming_channel_bytes_total: Storage used per channel
- nats_streaming_server_is_leader: Whether this server is the active leader (1 for active, 0 for standby)

Set alerts for storage approaching max_bytes limits and subscription lag exceeding acceptable thresholds. Most production deployments also track the active or leader state across all replicas to verify exactly one server reports as active. If multiple servers claim leadership or none do, your fault tolerance mechanism has failed.

Monitor disk I/O saturation on file store deployments. When write latency spikes, you’re either exceeding your storage backend’s throughput or need to tune channel limits. For SQL store, track database connection pool exhaustion and query latencies—NATS Streaming issues frequent small queries, so connection overhead matters.

With your NATS Streaming cluster running reliably, you’re ready to implement real-world messaging patterns that leverage persistent streams and durable subscriptions.

Real-World Patterns: Order Processing and Event Sourcing

NATS Streaming’s persistence and replay capabilities enable architectural patterns that would be fragile or impossible with plain pub/sub messaging. Here’s how to implement choreographed sagas, event sourcing, and idempotent message handling in production systems.

Saga Patterns for Distributed Transactions

In an order processing system, a successful purchase involves coordinating payment, inventory, and shipping services. With NATS Streaming, implement the saga pattern by publishing each step as a durable event and subscribing to compensating actions when failures occur.

The order service publishes an OrderCreated event to the orders channel. Payment, inventory, and shipping services each maintain durable subscriptions with unique queue groups. If payment succeeds, it publishes PaymentConfirmed. If inventory allocation fails, it publishes InventoryUnavailable, triggering the payment service’s compensating subscription to issue a refund via PaymentRefunded.

Each service processes messages with manual acknowledgment only after persisting state changes. This ensures that if a service crashes mid-transaction, NATS Streaming redelivers the unacknowledged message after the configured acknowledgment timeout. The saga progresses forward through published events, and compensating actions flow backward through failure events—all coordinated through durable message streams without a central orchestrator.
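The event flow described above can be traced with a small in-memory simulation. This is a sketch of the choreography only: InMemoryBus is a hypothetical stand-in for durable channels, each lambda stands in for a whole service, and InventoryReserved is an event name invented here for the success path.

```python
from collections import defaultdict, deque


class InMemoryBus:
    """Stand-in for durable channels: events are handled in publish order."""

    def __init__(self):
        self.handlers = defaultdict(list)
        self.queue = deque()
        self.log = []

    def subscribe(self, event_type, handler):
        self.handlers[event_type].append(handler)

    def publish(self, event_type, data):
        self.queue.append((event_type, data))

    def run(self):
        while self.queue:
            event_type, data = self.queue.popleft()
            self.log.append(event_type)
            for handler in self.handlers[event_type]:
                handler(data)


def run_order_saga(in_stock: bool) -> list:
    bus = InMemoryBus()
    # Payment service: charge on OrderCreated, compensate on InventoryUnavailable.
    bus.subscribe("OrderCreated", lambda o: bus.publish("PaymentConfirmed", o))
    bus.subscribe("InventoryUnavailable", lambda o: bus.publish("PaymentRefunded", o))
    # Inventory service: try to reserve stock once payment is confirmed.
    bus.subscribe(
        "PaymentConfirmed",
        lambda o: bus.publish("InventoryReserved" if in_stock else "InventoryUnavailable", o),
    )
    bus.publish("OrderCreated", {"order_id": "ORD-1001"})
    bus.run()
    return bus.log


print(run_order_saga(in_stock=True))
print(run_order_saga(in_stock=False))
```

The failure run ends with PaymentRefunded, showing the compensating action triggered purely by events. A real implementation would replace the bus with durable subscriptions and acknowledge each message only after the service has persisted its own state change.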

Event Sourcing with Message Replay

NATS Streaming’s ability to replay messages from any sequence number or timestamp makes it a natural fit for event sourcing. Instead of storing current state in a database, services store immutable events and rebuild state by replaying the event stream.

For an order history service, subscribe to the orders channel with a start position of sequence 1 (StartAtSequence(1) in the Go client, or the deliver-all-available option) to receive every retained event since stream inception. Process OrderCreated, PaymentConfirmed, OrderShipped, and OrderDelivered events in sequence to build the complete order state. When deploying a new read model or recovering from data loss, simply replay the entire stream.

Set a reasonable MaxInFlight value (typically 100-1000 messages) to control memory usage during replay while maintaining throughput. For long-lived streams, periodically snapshot aggregate state and replay only from the last snapshot sequence number to reduce recovery time.
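The snapshot-then-replay idea can be sketched as a fold over events. The event dictionaries and status names below are assumptions made for illustration; the point is that rebuilding from a snapshot plus the remaining events yields the same state as replaying everything.

```python
def apply_event(state: dict, event: dict) -> dict:
    """Pure transition function: returns a new state instead of mutating the old one."""
    status_by_type = {
        "OrderCreated": "created",
        "PaymentConfirmed": "paid",
        "OrderShipped": "shipped",
        "OrderDelivered": "delivered",
    }
    orders = dict(state["orders"])
    orders[event["order_id"]] = status_by_type[event["type"]]
    return {"orders": orders, "last_seq": event["seq"]}


def rebuild(events, snapshot=None):
    """Fold events into state, skipping anything the snapshot already covers."""
    state = snapshot or {"orders": {}, "last_seq": 0}
    for event in events:
        if event["seq"] > state["last_seq"]:
            state = apply_event(state, event)
    return state


EVENTS = [
    {"seq": 1, "type": "OrderCreated", "order_id": "ORD-1001"},
    {"seq": 2, "type": "PaymentConfirmed", "order_id": "ORD-1001"},
    {"seq": 3, "type": "OrderCreated", "order_id": "ORD-1002"},
    {"seq": 4, "type": "OrderShipped", "order_id": "ORD-1001"},
]

full = rebuild(EVENTS)
snapshot = rebuild(EVENTS[:2])                 # state as of sequence 2
from_snapshot = rebuild(EVENTS[2:], snapshot)  # replay only what came after
print(full == from_snapshot, full["orders"])
```

Storing last_seq inside the snapshot is what makes recovery safe: the subscriber restarts at last_seq + 1, and the sequence check also discards any redelivered events the snapshot already includes.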

Idempotency and Duplicate Handling

NATS Streaming guarantees at-least-once delivery, meaning subscribers occasionally receive duplicate messages during redelivery scenarios. Services must handle duplicates idempotently.

Store processed message sequence numbers in your database alongside state changes within the same transaction. Sequence numbers are unique only within a channel, so key the record by channel and sequence. Before processing a message, check if that sequence number already exists. If found, acknowledge the message without reprocessing. Delivery is still at-least-once, but each message’s effects are applied exactly once at the application level.
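A minimal sketch of this transactional approach, using SQLite from the standard library so it runs anywhere. The table and column names are invented for the example; the primary key on (channel, seq) lets the database itself reject a duplicate, which avoids a separate check-then-insert race.

```python
import sqlite3


def open_db() -> sqlite3.Connection:
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE processed (channel TEXT, seq INTEGER, PRIMARY KEY (channel, seq))")
    db.execute("CREATE TABLE orders (order_id TEXT PRIMARY KEY, amount REAL)")
    return db


def handle_once(db, channel: str, seq: int, order_id: str, amount: float) -> bool:
    """Apply the message's effect once; return False for a duplicate delivery."""
    try:
        with db:  # one transaction: commits on success, rolls back on error
            db.execute("INSERT INTO processed (channel, seq) VALUES (?, ?)", (channel, seq))
            db.execute("INSERT INTO orders (order_id, amount) VALUES (?, ?)", (order_id, amount))
        return True
    except sqlite3.IntegrityError:
        return False  # already recorded: safe to ack without repeating side effects


db = open_db()
print(handle_once(db, "orders.created", 41, "ORD-1001", 149.99))  # True: first delivery
print(handle_once(db, "orders.created", 41, "ORD-1001", 149.99))  # False: redelivery
```

In both cases the caller acknowledges the message afterwards. Because the marker row and the state change commit together, a crash between them cannot leave the order written but unmarked, or marked but unwritten.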

For services without transactional storage, use natural idempotency keys from the event payload itself—order IDs, payment transaction IDs, or request correlation IDs. Hash these keys and store them in a distributed cache like Redis with expiration matching your acknowledgment timeout plus processing window.

💡 Pro Tip: Configure acknowledgment timeouts 2-3x longer than your expected processing time to prevent unnecessary redeliveries while still ensuring progress during actual failures.

Performance Characteristics and Scaling

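As a rough guide, NATS Streaming can handle on the order of 100,000-500,000 messages per second on modern hardware, sufficient for most microservice deployments. Message latency ranges from roughly 1-10ms for non-durable subscribers to 5-50ms for durable subscribers with file persistence. These figures vary widely with payload size, store type, and sync settings, so benchmark with your own workload before committing to capacity plans.

Scale consumers horizontally using queue groups: multiple instances of the same service subscribe with identical queue group names, and NATS Streaming load-balances messages across instances. Each instance processes different messages, so consumer throughput grows with the number of instances until the single active server becomes the bottleneck.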

For higher throughput requirements, partition channels by tenant ID, region, or entity type. Deploy separate NATS Streaming clusters per partition, or move to JetStream (NATS Streaming’s successor), which spreads streams across servers and offers improved performance characteristics.
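Partitioning by tenant comes down to a stable mapping from tenant ID to channel name. The sketch below shows one way to do it; the ".pN" suffix convention and the channel_for helper are assumptions made for this example, not a NATS Streaming feature.

```python
import hashlib


def channel_for(base: str, tenant_id: str, partitions: int) -> str:
    """Map a tenant to one of `partitions` channels using a stable hash."""
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % partitions
    return f"{base}.p{index}"


for tenant in ("acme", "globex", "initech"):
    print(tenant, "->", channel_for("orders.created", tenant, partitions=4))
```

A cryptographic hash is used rather than Python’s built-in hash(), which is randomized per process for strings and would send the same tenant to different channels after a restart. Publishers and subscribers must agree on the partition count, and changing it later remaps tenants, so choose it with headroom.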

With these patterns in place, you have the building blocks for running NATS Streaming in production. The next section looks ahead: when and how to move to JetStream.

Migration Path: When to Upgrade to JetStream

NATS Streaming served its purpose well, but the NATS ecosystem has evolved. JetStream shipped as part of NATS Server 2.2 in 2021 and became the recommended replacement; the NATS team then deprecated NATS Streaming, with no new features planned and critical bug and security fixes committed only until June 2023. Understanding the migration path helps you make informed architectural decisions.

JetStream’s Key Improvements

JetStream isn’t just NATS Streaming 2.0—it’s a fundamental redesign built directly into the NATS server. The integration eliminates the separate streaming server process, reducing operational complexity and improving performance. JetStream delivers horizontal scalability through stream replication, supporting multi-region deployments that NATS Streaming’s clustering model struggled with.

The feature set extends beyond basic persistence. JetStream provides message deduplication through publisher-supplied message IDs, consumer filtering at the server level to reduce network overhead, and key-value and object stores built on the same streaming infrastructure. Storage options differ: JetStream streams use memory or file storage, while NATS Streaming offers memory, file, and SQL stores, so teams that rely on database-backed persistence should plan for that change.

Performance generally improves as well. JetStream’s Raft-based replication is configured per stream, so load spreads across servers instead of funneling through one active node. The unified architecture means one less process to monitor, secure, and upgrade.

Planning Your Migration

Migration doesn’t need to be immediate or all-at-once. Run JetStream alongside NATS Streaming on the same NATS infrastructure—they coexist without interference. Start by routing new streams to JetStream while existing NATS Streaming channels continue serving legacy systems.

The NATS team provides migration tooling (the stan2js project) to copy messages from NATS Streaming channels into JetStream streams, though most teams prefer a gradual cutover during natural application updates. Client code does need to be rewritten against the JetStream API, but the concepts map closely: channels become streams and durable subscriptions become durable consumers.

When NATS Streaming Still Makes Sense

If your current NATS Streaming deployment works reliably and you’re not hitting scaling limits, an emergency migration isn’t necessary. Keep in mind, though, that the commitment to fix critical bugs ended in June 2023, so any new issue is yours to work around. Put JetStream adoption on your near-term roadmap, since community momentum and tooling investment have shifted entirely to JetStream.

With the migration strategy clear, the takeaways below summarize the practices that matter most.

Key Takeaways

- Use the memory store for development and the file store in production; choose the SQL store only when database integration is a real requirement

- Always use durable subscriptions with client IDs that are unique per service and stable across restarts, so services survive restarts without losing messages

- Monitor queue depth and redelivery rates as leading indicators of consumer health before they become production incidents

- Choose JetStream for new projects; keep existing NATS Streaming deployments running only while you plan the migration, since NATS Streaming is deprecated