NATS JetStream vs Kafka: Choosing the Right Persistent Messaging Layer for Cloud-Native Systems

Your microservices need persistent messaging, and you’ve defaulted to Kafka because everyone uses Kafka. That’s not a criticism — Kafka earned its dominance. It’s battle-tested, the ecosystem is mature, and “nobody gets fired for choosing Kafka” has become a quiet axiom in platform engineering. But when you actually stand up a Kafka cluster for a mid-sized cloud-native workload, you inherit the full operational weight: ZooKeeper coordination (or the newer KRaft mode if you’re migrating away from it), broker count decisions, partition topology planning, replication factor tuning, and a monitoring surface area that demands dedicated attention. For teams running Kubernetes-native stacks, this often means a Kafka operator, persistent volume claims across multiple pods, and a separate ops runbook just for the messaging layer.

NATS JetStream keeps appearing as an alternative, and the pitch is genuinely compelling: the same durability guarantees — persistent streams, consumer groups, at-least-once and exactly-once delivery — inside a single binary that already handles your pub/sub traffic. No separate coordination layer. Dramatically lower resource footprint. A unified protocol surface from ephemeral fire-and-forget messages to durable, replayable streams.

The natural skepticism is warranted. “Single binary” solutions have a history of trading operational simplicity for capability gaps that surface under production load. So the question isn’t whether JetStream looks simpler on a whiteboard — it does — but whether it holds up against the same workloads you’d trust to Kafka.

Before that comparison means anything, you need to understand what JetStream actually is relative to core NATS, because conflating the two leads to a fundamentally broken evaluation.

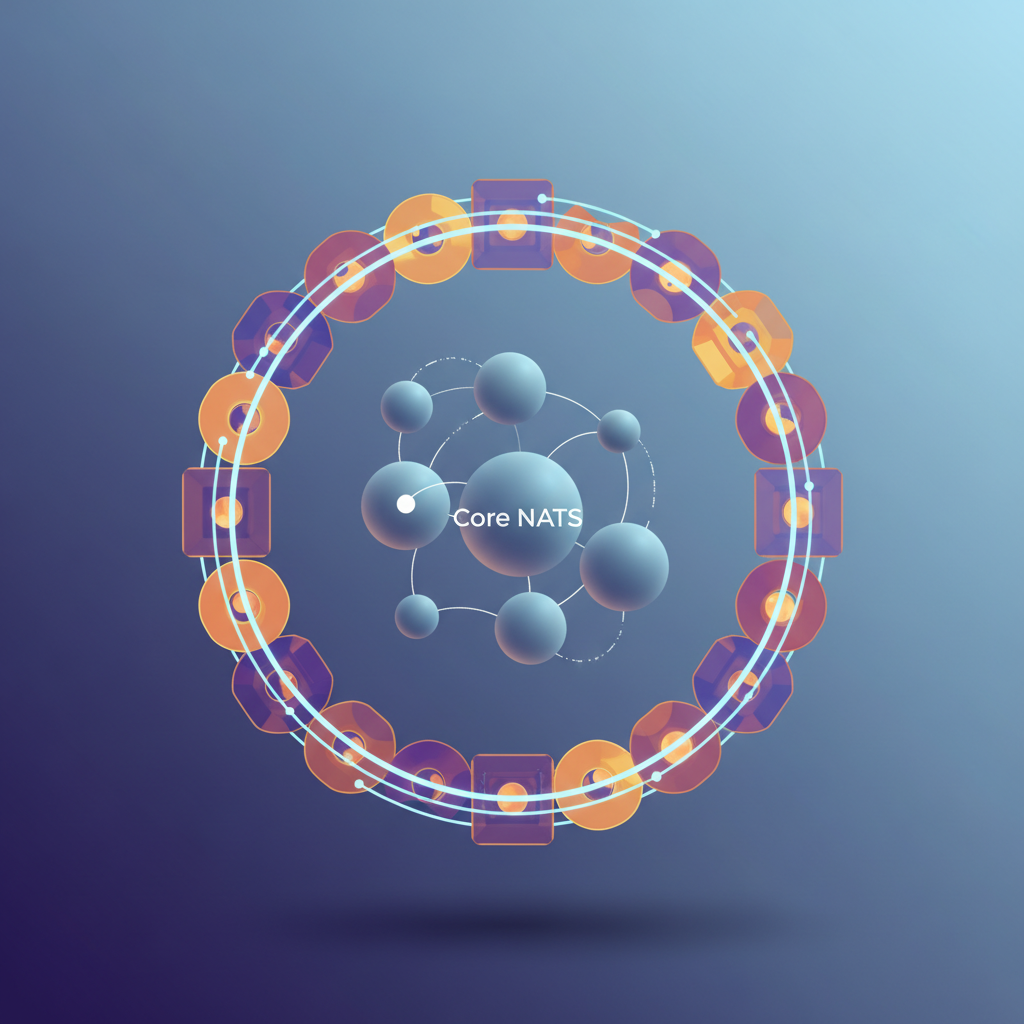

The Persistence Problem: Why Core NATS Isn’t Enough

NATS was built for speed. Its core publish-subscribe model routes messages between connected clients with microsecond latency and minimal overhead — but only while both publisher and subscriber are online simultaneously. When a subscriber disconnects, messages published during that window are gone. When a service restarts, there is no replay. When you need exactly-once processing guarantees, Core NATS offers no mechanism to provide them.

This is not a flaw. It is a deliberate design choice that makes Core NATS exceptional at what it does: low-latency, high-throughput messaging for tightly coupled, always-on services. Request-reply patterns, service mesh communication, and real-time fan-out are scenarios where Core NATS excels without any additional infrastructure. But the moment your architecture requires durable message delivery — event sourcing, audit trails, cross-service choreography with independent scaling, or any workflow where producers and consumers operate asynchronously — Core NATS alone is insufficient.

JetStream Is Not a Separate Product

This is where the comparison to Kafka typically goes wrong. Engineers evaluating NATS against Kafka often benchmark Core NATS against a Kafka cluster and conclude that NATS cannot offer persistence. That comparison is structurally invalid.

JetStream is the persistence layer built directly into the NATS server binary. There is no separate process to run, no separate cluster to manage, and no separate client library to learn. You enable JetStream with a single configuration flag on your existing NATS server and use the same NATS connection and subject model you already have. The server handles the rest — writing messages to disk, managing consumer state, enforcing retention policies, and coordinating acknowledgments.

💡 Pro Tip: A single NATS deployment can serve both Core NATS and JetStream workloads simultaneously. Services that need fire-and-forget pub-sub and services that need durable streams share the same infrastructure without any coordination overhead between two separate systems.

Structurally, JetStream stores messages in streams — ordered, persistent logs scoped to one or more subjects. This is conceptually similar to Kafka’s log-backed partitions, but the operational model diverges significantly. JetStream consumers are server-side constructs that track progress per consumer, not per partition, and they support push and pull delivery modes natively.

Understanding this layered architecture — Core NATS for ephemeral messaging, JetStream for persistence — is the prerequisite for any honest comparison with Kafka. The next section examines what Kafka’s mental model actually requires of the engineers who operate it.

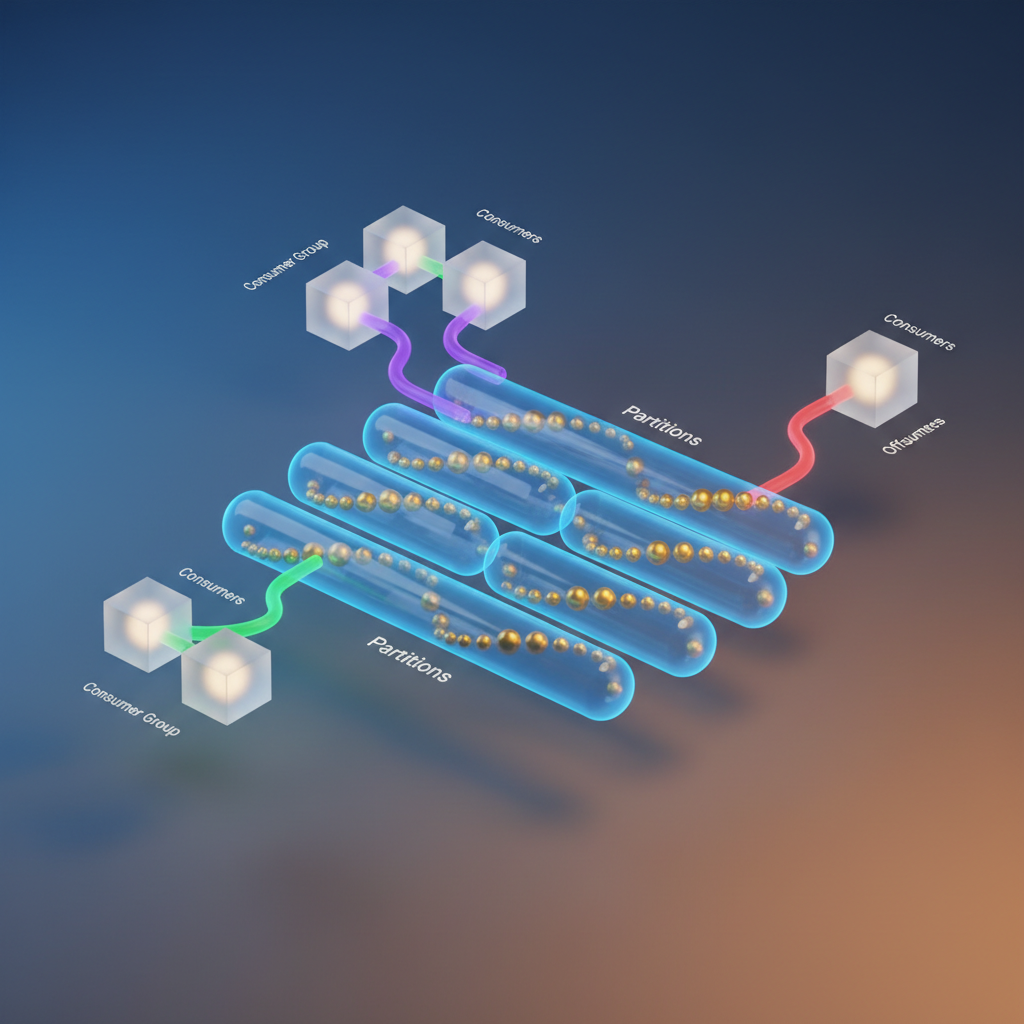

Kafka’s Mental Model: Partitions, Offsets, and Consumer Groups

Kafka’s durability story begins with a deceptively simple primitive: the append-only log. Every topic in Kafka is not a queue in the traditional sense—it is a partitioned, ordered sequence of records written to disk and retained for a configurable period regardless of whether anyone has consumed them. This distinction shapes every architectural decision that follows.

Topics Are Partitioned Logs

When you create a Kafka topic, you specify a partition count. Each partition is an independent, ordered log. Producers write to partitions—either by explicit key hash or round-robin—and that assignment is permanent for a given message. Consumers do not pull from a topic as a whole; they pull from individual partitions, and they track their position in each partition using an offset: a monotonically increasing integer that represents how far into the log a consumer has read.

This model is what gives Kafka its throughput ceiling. Partitions are the unit of parallelism, and more partitions mean more concurrent consumers. It also means partition count is a deployment-time decision with lasting consequences—you cannot reduce partitions without recreating the topic, and increasing them breaks key-based ordering guarantees for any producer that relies on deterministic routing.

Consumer Groups as the Parallelism Primitive

Kafka’s consumer group mechanism is elegant in its design: multiple consumer instances sharing a group ID collectively consume a topic, with each partition assigned to exactly one group member at a time. A group with four consumers reading a topic with eight partitions achieves 2x throughput compared to a single consumer, assuming workload is evenly distributed across partitions.

The flip side is that consumer group rebalancing—triggered by member joins, leaves, or crashes—temporarily halts consumption while Kafka’s group coordinator reassigns partition ownership. For latency-sensitive workloads, rebalance storms caused by rolling deployments or autoscaling events are a real operational concern.

Durability Through Replication and ISR

Kafka achieves fault tolerance through replication. Each partition has a configurable replication factor, and Kafka maintains an In-Sync Replica (ISR) set: the subset of replicas that are caught up with the leader. A write is acknowledged only after the ISR acknowledges it, and a partition leader election happens automatically when the current leader fails. The min.insync.replicas setting is what converts replication into an actual durability guarantee—setting it below your replication factor introduces silent data loss risk during broker failures.

💡 Pro Tip: Setting

acks=allon producers without also settingmin.insync.replicas=2on the broker side provides weaker durability than it appears. Both settings must be tuned together to close the gap.

This mental model—partitioned logs, offset-tracked consumers, ISR-backed replication—is powerful and well-understood. But it also carries significant operational weight, particularly in dynamic, containerized environments. Understanding exactly where that weight concentrates is essential before comparing it to JetStream’s approach, which starts from a different set of first principles entirely.

JetStream’s Mental Model: Streams, Consumers, and Ack Policies

JetStream introduces persistence to NATS through two orthogonal primitives: Streams and Consumers. Streams define where messages live and how long they’re retained. Consumers define how a specific client or group of clients receives and acknowledges those messages. Keeping these concerns separate is what gives JetStream its flexibility—you can add new consumers to an existing stream without touching any publisher code.

Streams and Subject Mapping

A Stream captures messages published to one or more subjects. Unlike Kafka’s topic/partition model—where a topic is both the logical address and the physical storage unit—JetStream decouples addressing from storage. A single stream can absorb messages from orders.> (all subjects under orders), while separate consumers downstream each get their own independent cursor into that stream.

js, _ := nats.NewConn("nats://nats.prod-cluster.internal:4222").JetStream()

_, err := js.AddStream(&nats.StreamConfig{ Name: "ORDERS", Subjects: []string{"orders.>"}, Retention: nats.WorkQueuePolicy, Storage: nats.FileStorage, MaxAge: 24 * time.Hour, Replicas: 3,})The Subjects field is where the mental model shift happens. You’re not naming a queue—you’re telling JetStream which subject namespace to index. Publishers don’t change a single line of code when you add JetStream to an existing NATS deployment.

Push vs. Pull Consumers

JetStream supports two consumer modes, and the choice has operational consequences:

Pull consumers require the client to explicitly request batches of messages. This puts flow control entirely in the client’s hands, making pull the right choice for worker pools and batch processors where processing time is variable.

Push consumers deliver messages to a subject the client subscribes to. They’re simpler to set up but harder to operate at scale—if a push consumer falls behind, it creates backpressure that the broker must manage.

_, err = js.AddConsumer("ORDERS", &nats.ConsumerConfig{ Durable: "order-processor", FilterSubject: "orders.new", AckPolicy: nats.AckExplicitPolicy, MaxDeliver: 5, AckWait: 30 * time.Second,})

sub, _ := js.PullSubscribe("orders.new", "order-processor")

msgs, _ := sub.Fetch(10, nats.MaxWait(5*time.Second))for _, msg := range msgs { if err := processOrder(msg); err != nil { msg.Nak() // triggers immediate redelivery } else { msg.Ack() }}Ack Policies and Flow Control

JetStream’s acknowledgment model is more expressive than Kafka’s offset commits. Three signals control redelivery behavior:

Ack()— processing succeeded; message removed from pending redeliveryNak()— processing failed; redeliver immediately (subject toMaxDeliver)InProgress()— still processing; reset theAckWaittimer without consuming a delivery attempt

The InProgress() signal solves a real problem Kafka consumers work around with hacky pause()/resume() calls or inflated session timeouts. If your order fulfillment step calls an external payment API that takes 25 seconds, you send InProgress() at the 20-second mark and continue without triggering a false redelivery.

Retention Policies vs. Kafka’s Time/Size Windows

Kafka retains messages based on time or byte limits, independent of whether anyone consumed them. JetStream adds a third option that changes how you think about stream lifecycle:

| Policy | Behavior |

|---|---|

LimitsPolicy | Retain up to configured age/size limits (closest to Kafka) |

InterestPolicy | Retain only while at least one consumer exists |

WorkQueuePolicy | Delete each message once any consumer acknowledges it |

WorkQueuePolicy eliminates the need for a separate queue system for task distribution—the stream itself becomes the queue, and acknowledged work is immediately reclaimed.

💡 Pro Tip:

WorkQueuePolicypaired with a durable pull consumer is the JetStream-native replacement for a Redis queue or SQS. You get ordered delivery, at-least-once guarantees, and retry logic without running additional infrastructure.

With JetStream’s primitives mapped out, the next natural question is where each system actually wins on performance—and where the delivery guarantee promises hold up under real load. That’s what the throughput and latency comparison in the next section quantifies.

Head-to-Head: Throughput, Latency, and Delivery Guarantees

Raw performance comparisons between Kafka and JetStream mislead more often than they inform. The numbers depend heavily on message size, fan-out pattern, and replication factor—so instead of citing synthetic benchmarks, it’s more useful to understand where each system’s architecture puts it at a structural advantage.

Throughput: Kafka’s Sequential Write Dominance

Kafka’s partitioned log model is purpose-built for sustained high-throughput ingestion. By distributing writes across independent partitions, each backed by sequential disk I/O, Kafka brokers saturate disk bandwidth rather than fighting seek latency. Producers batch messages by default, and the broker flushes to disk in contiguous segments. At scale—millions of messages per second across a well-partitioned topic—Kafka’s architecture has no serious competitor.

JetStream uses a different model. Streams are stored per-account on individual NATS server nodes, and while JetStream supports clustering via RAFT-based replication, the storage engine is not optimized for the same sequential write throughput Kafka achieves. In practice, JetStream performs well into the hundreds of thousands of messages per second for most workloads, but it does not close the gap with Kafka at extreme ingestion rates.

Latency: Where JetStream Wins

The trade-off appears clearly at the latency end. JetStream operates over NATS core, which was designed from the ground up for low-latency messaging. End-to-end publish-to-consume latency in JetStream commonly sits in the single-digit millisecond range under moderate load. Kafka’s internal batching and segment-based flushing introduce inherent latency floors—even with linger.ms=0, the overhead of the broker pipeline typically keeps p99 latencies in the tens of milliseconds.

For systems where individual message latency matters—real-time control planes, edge synchronization, or request-reply patterns layered over a stream—JetStream’s latency profile is a structural advantage, not just a configuration win.

Delivery Guarantees: Two Paths to At-Least-Once

Both systems implement at-least-once delivery, but through different mechanisms. Kafka consumers track offsets and commit them explicitly or automatically; a failure before a commit causes redelivery from the last committed offset. JetStream consumers use explicit acknowledgments at the message level—if an ack is not received within the configured AckWait period, JetStream redelivers that specific message.

JetStream’s per-message ack model is operationally simpler: consumers do not manage offset state, and redelivery is scoped precisely to unacknowledged messages rather than a full offset rollback.

Exactly-Once: Different Guarantees, Different Complexity

Kafka provides exactly-once semantics through its transactional producer API, coordinating atomic writes across partitions and consumer offset commits. This is a production-grade guarantee, but it requires transactional producer configuration, an idempotent producer setting, and careful consumer group coordination.

JetStream’s approach uses a message deduplication window. Producers include a Nats-Msg-Id header; JetStream suppresses duplicates within a configurable time window (default 2 minutes). This is effective for most workloads but is window-bounded rather than a true transactional guarantee.

💡 Pro Tip: JetStream deduplication covers the common case—at-most-once publisher retries after a network blip. If your workload requires coordinated multi-topic atomic writes, Kafka’s transactional API is the only production-ready option in this comparison.

Where Each System Degrades

Kafka’s architecture strains under small-message, high-fan-out patterns. Thousands of consumers reading from low-volume topics create disproportionate broker load—consumer group rebalancing, offset management overhead, and the coordination cost of maintaining consumer group state become friction points at scale. JetStream degrades under extreme partition-level parallelism: when throughput requirements genuinely demand dozens of parallel write streams at sustained high volume, JetStream’s storage model becomes the bottleneck before Kafka’s does.

Understanding these performance boundaries shapes the sizing conversation—but the fuller picture requires examining how each system actually behaves when you’re responsible for running it. Operational complexity is where many teams discover their performance assumptions were optimistic.

Operational Reality: Running Both in Kubernetes

The gap between “it runs in Kubernetes” and “it runs well in Kubernetes” is where most platform teams learn their most expensive lessons. Kafka and NATS JetStream sit at opposite ends of the operational complexity spectrum, and that gap is most visible when you’re managing them at scale.

Kafka on Kubernetes

The Strimzi operator is the de facto standard for self-managed Kafka on Kubernetes, and it handles the heaviest lifting: rolling restarts, broker scaling, topic rebalancing, and TLS certificate rotation. Confluent’s operator covers similar ground with a commercial support tier behind it.

Even with operator assistance, Kafka’s Kubernetes footprint is substantial. Each broker requires a dedicated PersistentVolumeClaim, and those PVCs need to be on fast storage—EBS io2 or equivalent—to keep up with write throughput. A three-broker cluster with three ZooKeeper nodes (or three KRaft controllers post-migration) means six StatefulSet pods, six PVCs, and careful anti-affinity rules to prevent co-location on the same node.

The KRaft migration deserves its own mention. Kafka’s move away from ZooKeeper is complete in recent releases, but existing clusters require a staged migration process. If you’re on ZooKeeper today, that migration is a mandatory operational event in your future, and it involves broker restarts coordinated through the Strimzi operator’s migration mode.

JVM overhead is real and not often discussed frankly. A production Kafka broker is typically configured with 6–8 GB heap, and GC pauses—even with G1GC or ZGC—introduce latency spikes that require careful tuning. Expect your Kafka brokers to consume 10–12 GB of total memory per pod once you account for page cache requirements on top of heap.

NATS JetStream on Kubernetes

NATS deploys as a single StatefulSet. A production JetStream cluster requires three nodes for quorum-based replication, and the entire thing fits in a Helm values file you can read in five minutes.

cluster: enabled: true name: prod-nats-cluster replicas: 3

nats: jetstream: enabled: true fileStore: enabled: true storageDirectory: /data/jetstream size: 20Gi resources: requests: cpu: 500m memory: 512Mi limits: cpu: 2 memory: 1Gi

podDisruptionBudget: enabled: true maxUnavailable: 1

affinity: podAntiAffinity: requiredDuringSchedulingIgnoredDuringExecution: - labelSelector: matchLabels: app.kubernetes.io/name: nats topologyKey: kubernetes.io/hostnameNATS clustering uses a gossip protocol for membership and a Raft-based consensus layer for JetStream persistence. There is no external coordination service. When a node fails, the remaining two maintain quorum and the cluster self-heals when the node recovers.

For multi-cluster topologies, NATS leaf nodes extend a hub cluster into remote regions without full mesh connectivity. A leaf node in eu-west-1 connects to the hub in us-east-1, and JetStream streams replicate across the link. This is architectural work, but it’s explicit routing rather than broker rack awareness configuration inside a Kafka cluster.

The memory profile reflects NATS’s Go runtime: a three-node JetStream cluster with moderate load runs comfortably at 128–256 MB per pod. Total cluster memory is an order of magnitude below an equivalent Kafka deployment.

Monitoring

Kafka monitoring goes through JMX, which means deploying a Prometheus JMX exporter as a sidecar on each broker. The useful dashboards—consumer lag, under-replicated partitions, request latency by topic—require Grafana dashboards built around the kafka_ metric namespace. Strimzi ships example dashboards, but they need customization before they’re production-useful.

NATS exposes a /metrics endpoint on port 8222 natively. The NATS Surveyor tool aggregates metrics across a cluster and pushes them to Prometheus without sidecars. Built-in JetStream metrics cover stream storage, consumer acknowledgment lag, and message delivery rates. The observability stack is simpler because the system itself is simpler.

💡 Pro Tip: For JetStream consumer lag alerting, the

nats_jetstream_consumer_num_pendingmetric is the direct equivalent of Kafka’s consumer group lag. Set a threshold alert on this metric per consumer to catch processing backlogs before they become incidents.

The operational picture is clear: NATS JetStream has a materially lower burden for teams that don’t already have Kafka expertise embedded in their platform org. But operational simplicity doesn’t automatically make it the right choice—the decision ultimately comes down to your workload’s specific requirements, which the next section addresses directly.

Decision Framework: When to Pick Each System

After examining throughput benchmarks, operational overhead, and delivery semantics, the choice between Kafka and JetStream reduces to a handful of concrete criteria. Neither system is universally superior—they optimize for different constraints.

Choose Kafka When

Throughput is your primary constraint. If your workload sustains more than 1 million messages per second across multiple producers, Kafka’s partition-based architecture and sequential disk I/O give you headroom that JetStream cannot match at equivalent hardware costs.

You already have ecosystem investment. Kafka Connect, Kafka Streams, ksqlDB, and Schema Registry represent years of integration work. If your data pipelines depend on Debezium CDC connectors or Confluent’s ecosystem, ripping that out is an organizational problem, not a technical one.

Long-term replay is a first-class requirement. Kafka’s log retention model is designed for replay as a core primitive—not an afterthought. Event sourcing systems, audit trails in regulated industries (financial services, healthcare), and ML training pipelines that need months of raw event history belong on Kafka, where retention tooling and compliance guarantees are mature.

Your team has the operational capacity. Kafka rewards teams with dedicated platform engineers who can tune broker configurations, manage partition rebalancing, and operate ZooKeeper or KRaft clusters. If that describes your organization, Kafka’s complexity is manageable and its stability record is unmatched.

Choose JetStream When

You need polyglot pub/sub and durable messaging from a single system. JetStream eliminates the operational split between a message bus and a persistence layer. NATS clients exist for over 40 languages, and the same cluster handles ephemeral fan-out and durable consumer groups.

You’re deploying at the edge or in constrained environments. A single NATS server binary under 20MB with embedded JetStream runs comfortably on IoT gateways and edge nodes. Kafka does not.

Your team runs Kubernetes and wants minimal ops burden. JetStream clusters deployed via the NATS Helm chart or the NATS Operator require significantly less day-two operational overhead than Kafka on Kubernetes—no separate ZooKeeper, no persistent volume tuning for multiple broker roles.

Your throughput sits below 500K messages per second with latency sensitivity. JetStream’s lower p99 latency at moderate throughput makes it the better fit for request-reply patterns and real-time control planes.

The Hybrid Path

If you already run NATS Core for internal service mesh traffic, adopting JetStream is incremental—enable it on your existing cluster and migrate streams one workload at a time. There is no new infrastructure to provision.

💡 Pro Tip: Teams migrating from Kafka to JetStream progressively often retain Kafka for a single high-volume analytics pipeline while moving operational event streams to JetStream. This hybrid boundary usually holds long-term because the workloads genuinely have different characteristics.

The decision matrix clarifies what to pick, but the broader ecosystem tells a different story about where each project is heading—and that trajectory matters as much as today’s benchmarks.

What the Ecosystem Doesn’t Tell You

Benchmarks measure throughput. Vendor documentation describes features. Neither tells you what you’ll discover six months into production: the ecosystem surrounding a messaging system is often more consequential than the system itself.

Client Library Maturity Is Not Uniform

NATS has excellent Go and Java clients — both are actively maintained, well-documented, and cover the full JetStream API surface. The story diverges elsewhere. The Python, .NET, and JavaScript clients lag behind in JetStream feature coverage, and community-maintained libraries for languages like Ruby or PHP are sparse. Kafka clients, by contrast, benefit from a decade of investment across every major language and framework. If your organization runs polyglot services, factor client library maturity into your evaluation before committing to JetStream.

There Is No JetStream Connect

Kafka Connect is one of Kafka’s most underappreciated assets. Its ecosystem includes hundreds of production-tested connectors for databases, object stores, SaaS platforms, and stream processors. JetStream has no equivalent. You integrate it via application code or purpose-built adapters, which means building and maintaining the glue yourself. For teams with heavy data pipeline requirements — CDC from Postgres, sinking to S3, feeding Snowflake — the absence of a connector ecosystem is a genuine gap, not a minor inconvenience.

Synadia’s Commercial Positioning Matters

NATS is open-source under the Apache 2.0 license, but the primary commercial driver behind it is Synadia, which offers managed NATS infrastructure and enterprise support. This is a smaller commercial ecosystem than Confluent or AWS MSK command. The practical implication: the open-source roadmap is heavily influenced by a single vendor’s priorities. That is not disqualifying, but it is worth understanding before treating NATS as a community-governed project on par with Apache Kafka.

AutoMQ and Cloud-Native Kafka Forks

If Kafka’s operational burden is your primary objection, AutoMQ is worth evaluating as a middle path. It reimplements the Kafka protocol over object storage, eliminating the need to manage broker disks and dramatically reducing cross-AZ replication costs. Projects like WarpStream take a similar approach. These forks preserve full Kafka API compatibility and connector ecosystem access while addressing the cloud-native fit concerns that make JetStream attractive in the first place.

💡 Pro Tip: Before ruling out Kafka on operational grounds, benchmark AutoMQ against your actual workload in Kubernetes. The storage disaggregation model eliminates most of the disk management pain that drives teams toward JetStream.

The Honest Assessment

JetStream is production-ready. For latency-sensitive, Kubernetes-native workloads at moderate scale — event-driven microservices, ephemeral task queues, telemetry pipelines — it competes with Kafka on every dimension that matters. It is not a universal Kafka replacement. If your architecture depends on a rich connector ecosystem, multi-language client parity, or a large commercial support market, Kafka’s gravity remains justified.

The final question is not which system wins in the abstract — it is which system fits the specific constraints of your team, your scale, and your operational posture.

Key Takeaways

- Benchmark JetStream against your actual message size and fanout profile before assuming Kafka’s throughput advantage applies to your workload

- Deploy a NATS JetStream cluster on Kubernetes using the official Helm chart with at least 3 replicas and file storage before evaluating it — in-memory mode will give you misleading durability behavior

- If you already run NATS for pub/sub, enable JetStream on the same cluster rather than introducing Kafka solely for persistence; only add Kafka when you need its connector ecosystem or >500k msg/sec sustained throughput

- Audit your consumer group patterns in Kafka: if you’re not exploiting partition-level parallelism, JetStream’s pull consumers with durable subscriptions cover the same semantic ground with lower operational overhead