Control Plane vs Data Plane: Building Resilient Distributed Systems

Your API gateway just went down for routine configuration updates, and now all production traffic is blocked. The health checks are failing. Requests are timing out. Your pager is exploding. And the worst part? This was supposed to be a simple config change—updating rate limit thresholds—that shouldn’t have touched active connections at all.

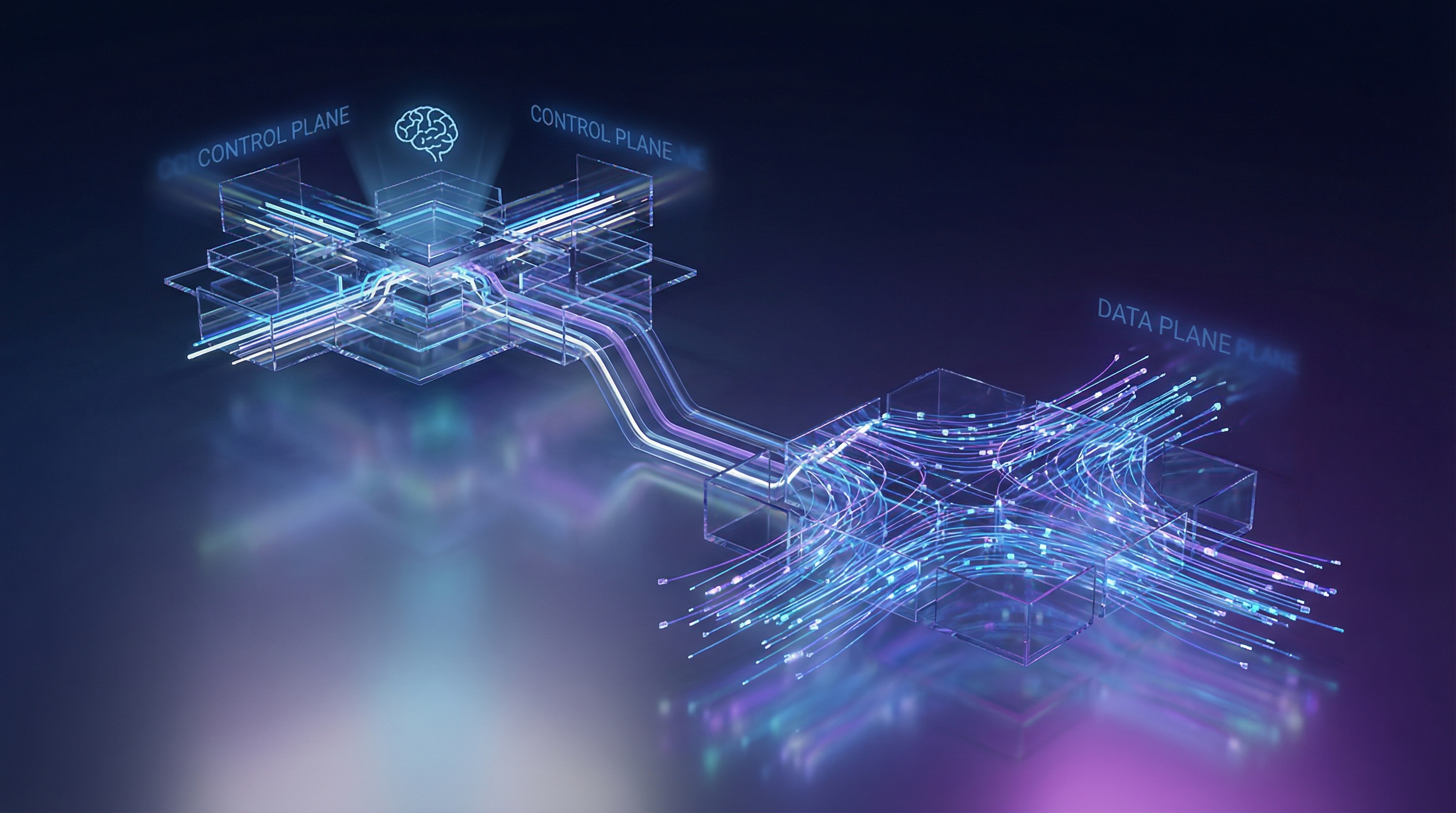

This is the classic symptom of coupling your control plane with your data plane. When the component that manages configuration (control plane) shares fate with the component that serves traffic (data plane), every administrative operation becomes a potential outage. It’s like putting the cockpit and the wings on the same structural beam—damage one, and everything goes down.

The distinction between control and data planes isn’t just academic architecture theory. It’s a fundamental separation that determines whether your system handles failure gracefully or cascades into complete unavailability. Get it right, and you can reconfigure your entire routing topology while serving millions of requests per second. Get it wrong, and updating a single feature flag takes down production.

This pattern shows up everywhere in distributed systems. Kubernetes separates the API server and controllers (control plane) from the kubelet and container runtime (data plane). Software-defined networks separate route computation from packet forwarding. Modern service meshes separate configuration distribution from the actual proxy data path. The implementation details vary, but the principle remains constant: the brains and the muscle should fail independently.

Understanding this separation—and more importantly, implementing it correctly—requires moving beyond surface-level definitions. You need to recognize what belongs in each plane, how they communicate, and what failure modes each design choice introduces.

What Are Control and Data Planes?

Every distributed system makes two fundamentally different types of decisions: what should happen and making it happen. The control plane handles the first—determining configuration, routing rules, and desired state. The data plane handles the second—processing actual requests, moving packets, and executing the work users care about.

Think of an airport. Air traffic control (the control plane) decides which runways are active, assigns gates, and coordinates flight paths. The planes, baggage handlers, and ground crews (the data plane) execute those decisions by moving passengers and cargo. Air traffic control can go offline briefly without planes falling from the sky—aircraft follow their last known instructions. This independence is exactly what we architect for in distributed systems.

The Control Plane: The Brain

The control plane manages system configuration and state. In a load balancer, it decides which backend servers are healthy and how traffic should be distributed. In a service mesh, it pushes TLS certificates and routing policies to proxies. In Kubernetes, it schedules pods and reconciles desired state with actual cluster state.

Control plane operations are typically:

- Infrequent: Configuration changes happen every few minutes or hours, not milliseconds

- Stateful: They maintain the source of truth about system topology and policy

- Eventually consistent: A brief delay in propagating config changes is acceptable

- Low throughput, high complexity: Processing hundreds of operations per second, but each requires orchestration across multiple components

The Data Plane: The Muscle

The data plane executes the decisions made by the control plane. It routes requests, processes data, and serves user traffic. In that same load balancer, the data plane forwards packets to backends based on rules from the control plane. In Kubernetes, it’s the container runtime executing your application code.

Data plane operations are:

- High frequency: Processing thousands or millions of requests per second

- Stateless or locally cached: Each request handled independently using cached configuration

- Latency-sensitive: Milliseconds matter for user experience

- High throughput, low complexity: Simple, fast operations repeated at scale

Why Separation Matters

When you blur these planes together, you create cascading failure modes. A configuration update that locks a database table suddenly blocks request processing. A traffic spike that exhausts memory prevents the system from reconfiguring to handle the spike. Your system becomes both fragile and impossible to scale efficiently.

Separating the planes gives you:

Independent failure domains: The control plane can crash without dropping user requests—the data plane continues using its last known configuration. Conversely, a data plane overload doesn’t prevent operators from pushing emergency config changes.

Targeted scaling: Scale the data plane horizontally to handle traffic spikes (add more proxies, more workers). Scale the control plane vertically for complex orchestration tasks (bigger database for state, more CPU for policy evaluation).

Operational clarity: When debugging production issues, you immediately know where to look. Traffic serving problems? Check the data plane. Configuration not propagating? Investigate the control plane.

With this mental model established, let’s examine how Kubernetes implements this separation to manage containerized workloads at scale.

Real-World Example: Kubernetes Architecture

Kubernetes provides one of the clearest examples of control and data plane separation in production systems. Understanding how Kubernetes implements this separation reveals patterns you can apply to your own distributed architectures.

The Control Plane: Decision Makers

Kubernetes’ control plane consists of several components that manage cluster state but never directly serve application traffic:

API Server (kube-apiserver) acts as the front door for all cluster operations. Every kubectl command, every controller reconciliation loop, every scheduling decision flows through this component. It validates requests, enforces RBAC policies, and persists state to etcd. Critically, the API server makes zero decisions about workload placement or execution—it purely manages state.

Scheduler (kube-scheduler) watches for unassigned pods and selects appropriate nodes based on resource requirements, affinity rules, and taints. Once it makes a decision, it updates the pod’s nodeName field through the API server. The scheduler never directly communicates with worker nodes.

Controller Manager (kube-controller-manager) runs reconciliation loops for deployments, replica sets, services, and other Kubernetes resources. When you declare “I want 3 replicas,” the controller manager continuously ensures that reality matches your desired state.

Here’s a typical control plane configuration:

apiVersion: v1kind: Podmetadata: name: kube-apiserver namespace: kube-systemspec: containers: - name: kube-apiserver image: registry.k8s.io/kube-apiserver:v1.28.0 command: - kube-apiserver - --etcd-servers=https://10.0.0.10:2379 - --service-cluster-ip-range=10.96.0.0/12 - --authorization-mode=Node,RBAC livenessProbe: httpGet: path: /livez port: 6443 scheme: HTTPS initialDelaySeconds: 10 periodSeconds: 10The Data Plane: Workload Executors

While the control plane makes decisions, the data plane executes them. On each worker node:

Kubelet monitors the API server for pods assigned to its node, manages container lifecycles through the container runtime (containerd, CRI-O), and reports status back to the control plane. The kubelet operates semi-autonomously—it can continue managing existing containers even during API server outages.

Kube-proxy maintains network rules for service routing. When traffic hits a service IP, kube-proxy’s iptables or IPVS rules route packets to healthy backend pods. This happens entirely in the data plane without control plane involvement.

apiVersion: v1kind: ConfigMapmetadata: name: kubelet-config namespace: kube-systemdata: kubelet: | apiVersion: kubelet.config.k8s.io/v1beta1 kind: KubeletConfiguration authentication: webhook: enabled: true clusterDNS: - 10.96.0.10 clusterDomain: cluster.local # Kubelet continues functioning during control plane outages nodeStatusUpdateFrequency: 10s nodeStatusReportFrequency: 5mWhy This Separation Matters

When the API server goes down, your applications keep running. Pods continue serving traffic, services maintain connectivity, and volumes stay mounted. You cannot deploy new workloads or modify existing ones, but production traffic flows uninterrupted.

This resilience pattern appears in managed Kubernetes offerings like GKE and EKS. Google and AWS run control plane components in isolated infrastructure with independent failure domains. Your data plane nodes communicate with the control plane over the network, but a control plane outage in us-east-1a doesn’t cascade to your application pods.

The separation also enables independent scaling. During a deployment storm with hundreds of new pods, you scale the API server and scheduler. During high application traffic, you scale worker nodes. These are fundamentally different resource profiles with different scaling triggers.

This architectural pattern extends beyond Kubernetes to API gateways, where similar separation between configuration management and request routing creates robust systems that degrade gracefully under failure.

Implementing Plane Separation in Your API Gateway

When building a custom API gateway or reverse proxy, separating control and data planes prevents configuration updates from impacting live traffic. The data plane handles request routing with zero external dependencies, while the control plane manages configuration updates asynchronously. This architectural separation is the difference between a gateway that degrades gracefully during infrastructure issues and one that creates cascading failures.

Architecture: Decoupling Configuration from Routing

The key pattern is storing configuration in-memory on data plane nodes and updating it through asynchronous replication. When the control plane becomes unavailable, the data plane continues routing requests using its last-known configuration. This independence is critical: a data plane node should never block a request waiting for a control plane response.

import threadingimport timefrom dataclasses import dataclassfrom typing import Dict, Optional

@dataclassclass RouteConfig: path_pattern: str backend_url: str timeout_ms: int retry_count: int

class DataPlaneRouter: def __init__(self): self._routes: Dict[str, RouteConfig] = {} self._config_version = 0 self._lock = threading.RLock()

def route_request(self, path: str) -> Optional[str]: """Route incoming request using in-memory config. No control plane dependency - fails fast if no route exists.""" with self._lock: for pattern, config in self._routes.items(): if self._matches_pattern(path, pattern): return config.backend_url return None

def update_routes(self, new_routes: Dict[str, RouteConfig], version: int): """Called by control plane sync worker. Uses optimistic locking.""" with self._lock: if version > self._config_version: self._routes = new_routes self._config_version = version return True return False

def _matches_pattern(self, path: str, pattern: str) -> bool: # Simplified pattern matching return path.startswith(pattern.rstrip('*'))Notice the route_request method contains no I/O operations, database queries, or network calls. It reads from a local dictionary protected by a lightweight lock. This design choice ensures sub-millisecond latency regardless of external system health. The worst-case scenario—returning None for an unknown route—is still fast and deterministic.

The control plane updates configuration without blocking request processing. Configuration changes propagate through a pull-based sync mechanism with exponential backoff for resilience. Pull-based synchronization is generally more reliable than push-based: if a data plane node restarts or loses network connectivity temporarily, it automatically catches up by polling the control plane rather than waiting for a push that may never arrive.

import httpximport loggingfrom typing import Optional

class ControlPlaneSyncer: def __init__(self, router: DataPlaneRouter, control_plane_url: str): self.router = router self.control_plane_url = control_plane_url self.running = False self._backoff_seconds = 1

def start_sync_loop(self): """Non-blocking background sync. Data plane stays operational if this fails.""" self.running = True threading.Thread(target=self._sync_loop, daemon=True).start()

def _sync_loop(self): while self.running: try: new_config = self._fetch_config() if new_config: success = self.router.update_routes( new_config['routes'], new_config['version'] ) if success: logging.info(f"Updated to config version {new_config['version']}") self._backoff_seconds = 1 # Reset backoff on success

except httpx.RequestError as e: logging.warning(f"Control plane unreachable: {e}") # Graceful degradation - continue with existing config

except Exception as e: logging.error(f"Config sync error: {e}")

finally: time.sleep(self._backoff_seconds) self._backoff_seconds = min(self._backoff_seconds * 2, 60)

def _fetch_config(self) -> Optional[dict]: """Fetch config with short timeout to avoid blocking.""" with httpx.Client(timeout=5.0) as client: response = client.get( f"{self.control_plane_url}/api/v1/gateway-config", params={"current_version": self.router._config_version} ) response.raise_for_status() return response.json()The exponential backoff strategy prevents thundering herd problems when the control plane experiences load spikes or temporary outages. If 100 data plane nodes all detect control plane unavailability simultaneously, they won’t all retry at the same moment. The staggered retry pattern distributes load and gives the control plane time to recover.

Eventual Consistency and Configuration Propagation

Configuration updates don’t take effect instantly across all data plane nodes. This eventual consistency model is acceptable for most API gateway use cases—routing rules rarely need atomic updates across distributed nodes. The alternative—distributed transactions or two-phase commits—would introduce the exact control plane dependencies we’re trying to eliminate.

The control plane exposes a versioned configuration API. Data plane nodes poll this endpoint and apply updates only when the version number increases. This prevents applying stale configurations during network partitions. Version numbers must be monotonically increasing integers; timestamps are insufficient because clock skew between nodes can cause older configurations to appear newer.

Consider the propagation timeline: when an operator adds a new route via the control plane API, that change might take 5-60 seconds to reach all data plane nodes depending on sync intervals. During this window, some nodes route the new path correctly while others return 404. For most systems, this brief inconsistency is acceptable—far better than blocking all traffic to ensure atomic updates.

💡 Pro Tip: Add a configuration hash to detect silent corruption. If the hash mismatches after applying an update, the data plane should reject it and alert operators rather than serving potentially broken routing rules.

Graceful Degradation Patterns

When the control plane becomes completely unavailable, the data plane enters degraded mode but continues serving traffic. This is the true test of plane separation: your gateway should handle millions of requests even if the control plane database is down, the configuration API is unreachable, or the entire control plane datacenter is offline.

Implement health check endpoints that report degraded status without failing. Load balancers often treat any non-200 response as complete failure, but degraded mode isn’t failure—it’s resilience. The health endpoint should return 200 OK with a payload indicating degradation:

from datetime import datetime, timedelta

class HealthCheck: def __init__(self, syncer: ControlPlaneSyncer): self.syncer = syncer self.last_successful_sync = datetime.now()

def check_health(self) -> dict: time_since_sync = datetime.now() - self.last_successful_sync degraded = time_since_sync > timedelta(minutes=5)

return { "status": "degraded" if degraded else "healthy", "data_plane": "operational", "control_plane_sync": "stale" if degraded else "current", "last_sync_seconds_ago": int(time_since_sync.total_seconds()), "current_config_version": self.syncer.router._config_version }This pattern ensures your API gateway survives control plane outages without dropping traffic. Load balancers can continue routing to degraded instances while alerting operators to investigate the control plane failure. The last_sync_seconds_ago metric is particularly valuable for debugging: if some nodes show 30 seconds while others show 3600 seconds, you’ve identified a network partition or misconfigured firewall rule affecting specific nodes.

Set clear operational boundaries for how long degraded mode is acceptable. Five minutes of stale configuration might be fine; five hours indicates a serious control plane failure requiring immediate intervention. Your monitoring should alert on degraded mode duration, not just binary health status.

The next section explores how observability itself requires a third architectural layer—the management plane—to maintain visibility during control or data plane failures.

The Management Plane: Observability’s Missing Piece

Most engineers think of distributed systems as a two-layer architecture: control plane for orchestration, data plane for serving traffic. But there’s a third critical component that operates independently of both—the management plane.

The management plane handles monitoring, logging, metrics collection, and operational tooling. It observes your system from the outside, providing visibility into what’s happening across both control and data planes. This separation isn’t academic—it’s essential for operational resilience.

Why Management Needs Its Own Plane

Consider what happens when your control plane fails. If your metrics system reports through the same infrastructure as your etcd cluster, you lose visibility precisely when you need it most. Your data plane might still be serving traffic perfectly, but you can’t tell because your observability stack went dark alongside your control plane.

The management plane solves this by operating through entirely separate infrastructure:

- Independent ingestion paths: Logs and metrics flow through dedicated collectors, not through your service mesh or API gateway

- Separate storage: Time-series databases and log aggregators run on different nodes with isolated resource pools

- Out-of-band access: Dashboards and alerting systems connect through dedicated networks, ensuring you can diagnose problems even when your primary networking fails

At scale, this separation becomes non-negotiable. When Netflix’s control plane experiences issues, their Atlas telemetry system continues collecting metrics from 100,000+ instances. When Stripe’s API gateway has problems, their Veneur metrics aggregation pipeline still reports data plane health. These companies survive incidents because their observability doesn’t share fate with the systems being observed.

Practical Implementation

The management plane typically includes:

- Prometheus or similar metrics collectors pulling from application endpoints

- Fluent Bit or Vector agents forwarding logs to centralized storage

- Distributed tracing systems like Jaeger or Tempo

- Alerting infrastructure evaluating conditions independent of the services being monitored

The key architectural decision is ensuring these components share minimal dependencies with your control and data planes. Run them in separate Kubernetes namespaces with dedicated node pools. Use different database clusters for storing telemetry versus application data. Configure alerts to page through external services, not through your own infrastructure.

💡 Pro Tip: Test your management plane’s independence by intentionally failing control plane components in staging. If you lose visibility during the outage, your planes aren’t properly separated.

With your management plane established as an independent observer, the next challenge is determining when and how to scale each of these three architectural layers as your system grows.

Scaling Strategies: When to Scale Each Plane

Control and data planes have fundamentally different scaling characteristics. Understanding these differences prevents over-provisioning and allows you to allocate infrastructure precisely where it delivers value.

Data Plane: Traffic-Driven Scaling

The data plane scales horizontally with request volume. Each request consumes CPU, memory, and network bandwidth proportionally. When traffic doubles, your data plane needs roughly double the capacity.

Consider an API gateway processing 10,000 requests per second. If each proxy instance handles 1,000 RPS at 50% CPU utilization, you need 10 instances. During a traffic spike to 50,000 RPS, you need 50 instances. This linear relationship makes data plane scaling predictable and automatable.

apiVersion: autoscaling/v2kind: HorizontalPodAutoscalermetadata: name: nginx-ingress-controllerspec: scaleTargetRef: apiVersion: apps/v1 kind: Deployment name: nginx-ingress-controller minReplicas: 10 maxReplicas: 100 metrics: - type: Resource resource: name: cpu target: type: Utilization averageUtilization: 70 - type: Pods pods: metric: name: nginx_connections_active target: type: AverageValue averageValue: "5000"Data plane autoscaling targets operational metrics: CPU utilization, active connections, request latency. These metrics directly correlate with user-facing performance.

Control Plane: Configuration-Driven Scaling

The control plane scales with the rate of configuration changes and the complexity of the distributed system topology. A control plane managing 100 services with updates every 10 minutes has dramatically different requirements than one managing 10,000 services with continuous deployments.

Kubernetes demonstrates this pattern. The API server scales based on the number of nodes, resources, and API calls per second—not user traffic. A cluster serving 1 million requests per second to end users needs the same control plane capacity whether those requests spike or remain steady, as long as the cluster topology stays constant.

apiVersion: apps/v1kind: Deploymentmetadata: name: config-controllerspec: replicas: 3 template: spec: containers: - name: controller image: mycompany/config-controller:v2.1 resources: requests: cpu: "2" memory: "4Gi" limits: cpu: "4" memory: "8Gi" env: - name: MAX_WATCHED_RESOURCES value: "50000" - name: RECONCILIATION_WORKERS value: "20"Control plane scaling focuses on reconciliation throughput and state management capacity. You scale when configuration change velocity increases or when managing more distributed components, not when user traffic grows.

💡 Pro Tip: Monitor control plane CPU during your highest configuration change periods (deployments, infrastructure changes) rather than peak traffic. If your control plane struggles during a 50-service deployment but idles during Black Friday traffic, you’ve validated proper separation.

Cost Optimization Through Independent Scaling

This separation delivers substantial cost savings. During a 10x traffic spike, you scale your data plane by 10x while the control plane remains unchanged. For a system where data plane instances cost $100/hour and control plane instances cost $50/hour, this prevents spending $500/hour on unnecessary control plane capacity.

The inverse applies during platform migrations or major reconfigurations. When migrating 1,000 microservices to new routing rules, your control plane processes intense configuration updates while data plane traffic remains steady. You temporarily add control plane replicas without touching the data plane.

Understanding when each plane needs resources prevents the common antipattern of scaling everything together, which typically means over-provisioning the control plane by 5-10x to match unnecessary data plane scaling triggers. With proper separation, failure modes become more predictable and contained.

Failure Modes and Recovery Patterns

When separating control and data planes, you’re not just organizing code—you’re creating a system that can fail in two fundamentally different ways. The control plane might become unavailable while your data plane continues serving traffic with cached configurations. Or the data plane might struggle while the control plane remains healthy, unable to propagate its updates. Understanding these failure modes is critical for building truly resilient distributed systems.

Data Plane Degradation with Control Plane Failure

The most important design principle: your data plane must continue operating when the control plane becomes unavailable. This means treating every control plane interaction as advisory rather than mandatory.

Consider a load balancer that fetches backend configurations from a control plane API. A naive implementation fails when the control plane is down:

class LoadBalancer: def __init__(self, control_plane_url): self.control_plane_url = control_plane_url

def get_backend(self, request): # WRONG: Synchronous dependency on control plane backends = requests.get(f"{self.control_plane_url}/backends").json() return self._select_backend(backends, request)A resilient implementation caches the last known good configuration and continues serving traffic:

import timefrom threading import Thread, Lock

class ResilientLoadBalancer: def __init__(self, control_plane_url, refresh_interval=30): self.control_plane_url = control_plane_url self.backends = [] self.last_update = 0 self.lock = Lock() self._start_background_sync(refresh_interval)

def _start_background_sync(self, interval): def sync_loop(): while True: try: response = requests.get( f"{self.control_plane_url}/backends", timeout=5 ) new_backends = response.json() with self.lock: self.backends = new_backends self.last_update = time.time() except Exception as e: # Control plane unavailable - continue with cached config print(f"Control plane sync failed: {e}") time.sleep(interval)

Thread(target=sync_loop, daemon=True).start()

def get_backend(self, request): # Data plane works independently with self.lock: if not self.backends: raise Exception("No backends available") return self._select_backend(self.backends, request)

def health_check(self): staleness = time.time() - self.last_update return { "backends": len(self.backends), "last_sync": self.last_update, "staleness_seconds": staleness, "degraded": staleness > 300 # 5 minutes }This pattern ensures the data plane survives control plane outages. The health_check method exposes degradation state without affecting request processing. Notice how the background sync thread operates on a separate execution path from request handling—failures in one don’t cascade to the other.

Circuit Breakers for Control Plane Operations

When the control plane becomes flaky, you need circuit breakers to prevent cascading failures. Implement a state machine that opens after repeated failures and allows periodic retry attempts:

from enum import Enumfrom datetime import datetime, timedelta

class CircuitState(Enum): CLOSED = "closed" OPEN = "open" HALF_OPEN = "half_open"

class ControlPlaneCircuitBreaker: def __init__(self, failure_threshold=5, timeout_seconds=60): self.state = CircuitState.CLOSED self.failure_count = 0 self.failure_threshold = failure_threshold self.timeout = timedelta(seconds=timeout_seconds) self.last_failure_time = None

def call_control_plane(self, func, *args, **kwargs): if self.state == CircuitState.OPEN: if datetime.now() - self.last_failure_time > self.timeout: self.state = CircuitState.HALF_OPEN else: raise Exception("Circuit breaker OPEN")

try: result = func(*args, **kwargs) self._on_success() return result except Exception as e: self._on_failure() raise

def _on_success(self): self.failure_count = 0 self.state = CircuitState.CLOSED

def _on_failure(self): self.failure_count += 1 self.last_failure_time = datetime.now() if self.failure_count >= self.failure_threshold: self.state = CircuitState.OPENThe half-open state is crucial—it allows the system to probe whether the control plane has recovered without immediately flooding it with requests. In production systems, combine this with exponential backoff to further reduce load during recovery.

Fallback Configuration Strategies

Beyond caching, implement multiple fallback layers for critical configuration. When the control plane is unavailable, your data plane should fall back through a hierarchy of sources:

- In-memory cache: The most recently fetched configuration

- Persistent local storage: The last successfully applied configuration written to disk

- Embedded defaults: Safe, minimal configuration baked into the binary

This layered approach protects against both transient control plane failures and data plane restarts during outages. A configuration loader implementing this pattern might look like:

import jsonimport os

class FallbackConfigLoader: def __init__(self, cache_path="/var/lib/app/config.json"): self.cache_path = cache_path self.memory_cache = None self.defaults = {"backends": ["127.0.0.1:8080"]}

def load_config(self, control_plane_source): # Try control plane first try: config = control_plane_source.fetch() self._update_caches(config) return config except Exception: pass

# Fall back to memory cache if self.memory_cache: return self.memory_cache

# Fall back to disk cache if os.path.exists(self.cache_path): with open(self.cache_path) as f: return json.load(f)

# Final fallback to defaults return self.defaults

def _update_caches(self, config): self.memory_cache = config with open(self.cache_path, 'w') as f: json.dump(config, f)Testing Plane Failures

Production incidents reveal whether your separation works. Inject failures deliberately using chaos engineering:

- Control plane network partitions: Use

iptablesor service mesh policies to block control plane traffic while monitoring data plane request success rates. Your data plane should maintain 100% availability using cached configurations. - Stale configuration scenarios: Stop control plane updates for 1 hour and verify data plane continues with cached state. Then introduce a configuration change that the data plane misses—ensure graceful staleness detection.

- Split brain testing: Run two control planes with conflicting configurations and ensure data plane handles inconsistency gracefully through version vectors or timestamp-based conflict resolution.

- Dependency cascade failures: Simulate the control plane’s dependencies failing (database, auth service) and verify that data plane isolation prevents impact propagation.

The Kubernetes ecosystem demonstrates this pattern’s effectiveness. When etcd becomes unavailable, existing pods continue running and kubelet serves traffic using its local cache. New deployments fail, but running workloads remain healthy—exactly the graceful degradation you want. Similarly, AWS service teams design for control plane failures by ensuring that EC2 instances continue running and serving traffic even when the EC2 control plane API becomes unavailable.

Effective failure mode testing requires production-like conditions. Run these chaos experiments during business hours with full observability in place. Monitor not just binary success/failure, but also latency percentiles, cache hit rates, and time-to-recovery metrics. The goal is to build confidence that your system degrades gracefully under realistic failure conditions.

With these failure handling patterns established, the next question becomes: how do you migrate an existing monolithic system to achieve this level of resilience?

Migration Strategy: Moving from Monolithic to Separated Planes

Refactoring a monolithic system to separate control and data planes requires surgical precision. You can’t afford downtime, and you need a clear path back if something breaks.

Start with Read/Write Classification

Begin by auditing your existing codebase to identify control versus data operations. Control operations modify system state—creating users, updating configurations, changing routing rules. Data operations serve requests using existing state—authenticating tokens, routing traffic, querying data.

Create a simple annotation system or spreadsheet mapping each API endpoint and background job to its plane. Look for operations that touch multiple resources or trigger cascading changes—these almost always belong to the control plane. High-frequency, stateless operations processing user requests are data plane candidates.

Apply the Strangler Pattern

Rather than a big-bang rewrite, extract one control plane capability at a time. Start with your least critical control operation—perhaps configuration validation or a rarely-used admin endpoint. Build the new control plane service, run it in shadow mode alongside your monolith, and compare outputs.

Once validated, introduce a feature flag that routes a small percentage of traffic to the new service. Gradually increase the percentage while monitoring error rates and latency. The monolith remains authoritative until you hit 100% traffic on the new service.

For data plane operations, use a similar approach but prioritize based on traffic volume. Extract your highest-throughput endpoints first—the performance improvements will be immediately visible and justify continued investment.

Build Safety Mechanisms

Feature flags are non-negotiable during migration. Use a robust feature flag system that supports percentage rollouts, user targeting, and instant rollback. Every extracted operation should have a flag controlling whether it uses the new plane or falls back to the monolith.

Implement dual-write periods for stateful control operations. When creating a new configuration object, write to both the monolith’s database and the new control plane’s state store. Run them in parallel for at least one full release cycle, validating consistency before cutting over completely.

💡 Pro Tip: Maintain API compatibility during migration by keeping your existing endpoints and routing internally to either the monolith or new services. Clients never know the difference, and you can switch backends independently of frontend changes.

Monitor split-brain scenarios where control and data planes diverge. Add health checks that verify both planes see consistent state, and alert immediately on discrepancies.

With control and data planes properly separated, your next challenge becomes ensuring you can actually observe what’s happening across both systems.

Key Takeaways

- Start identifying control vs data operations in your critical path services today—draw the boundary before implementing separation

- Design your data plane to function for at least 5-10 minutes without control plane connectivity using cached configurations

- Instrument both planes separately in your monitoring system to detect when plane-specific resource constraints emerge