Control Plane Isolation in Multi-Cluster Kubernetes: Hard Tenancy Without the Overhead

Your platform team just got a request from the security team: two business units sharing the same Kubernetes cluster need guaranteed blast-radius containment. You review the current setup—namespaces, RBAC policies, NetworkPolicies on every workload—and it looks reasonable on paper. Then you start pulling the thread.

A developer in Business Unit A has a ClusterRole that seemed harmless six months ago. A misconfigured admission webhook takes down the API server for everyone. etcd starts falling behind under write pressure from a batch job, and suddenly Business Unit B’s deployments are timing out with no clear cause. None of these are exotic failure modes. They’re the predictable consequences of sharing a control plane between tenants who have different risk profiles, different deploy cadences, and different trust boundaries.

This is the gap between isolated enough and actually isolated. Namespace-level isolation is a powerful primitive, but it operates within a shared control plane—shared API server, shared etcd, shared scheduler, shared cluster-scoped resources. The blast radius of any control plane failure is, by definition, the entire cluster. For many organizations that tradeoff is acceptable. For others—those operating under strict compliance requirements, hosting external tenants, or separating business units with genuine adversarial potential—it is not.

The answer is rarely “rip everything out and run separate clusters for every team.” Physical separation carries real operational overhead: separate upgrade cycles, separate observability stacks, separate credential management. The practical question is where on the isolation spectrum a given tenant boundary actually needs to sit, and what the cost is of moving it.

That spectrum is wider than most platform teams realize, and understanding its failure modes at each level is what separates a security posture that holds from one that only looks like it does.

The Tenancy Spectrum: Why Namespaces Alone Fall Short

Kubernetes was not designed as a multi-tenant system. It was designed as an orchestrator for workloads you trust, running on infrastructure you own. The multi-tenancy story was retrofitted—through RBAC, NetworkPolicy, ResourceQuota, and namespace boundaries—onto an architecture where the control plane remains fundamentally shared.

That distinction matters enormously when you are designing infrastructure for tenants with competing security requirements, regulatory obligations, or aggressive resource profiles.

The Spectrum Is Not a Dial

Multi-tenancy in Kubernetes exists on a continuum, but the meaningful boundaries are not evenly distributed across it. At one end, namespace isolation gives you logical separation with a shared API server, shared etcd, and shared scheduler. At the other end, dedicated physical clusters give each tenant a fully independent control plane, node pool, and network boundary. Between those poles sit virtual clusters—a pattern that synthesizes the operational simplicity of namespaces with control plane separation closer to physical isolation.

The critical insight is that soft tenancy and hard tenancy are not points on a dial you tune for cost—they represent fundamentally different threat models and failure domains.

Where Shared Control Planes Fail

The blast radius of a shared control plane is easy to underestimate until a tenant triggers it in production. Consider three concrete failure vectors:

etcd contention. etcd is a strongly consistent key-value store with documented throughput ceilings. A tenant running high-frequency watch operations, deploying hundreds of CRDs, or storing large Secrets pushes the entire cluster toward latency spikes. Every tenant’s reconciliation loops feel that degradation equally.

API server rate limiting. The Kubernetes API server caps concurrent requests through its max-inflight limits and API Priority and Fairness, but unless you define tenant-specific flow schemas, the unit it throttles is coarse-grained. A tenant running a misconfigured operator that hammers the API with LIST calls can saturate request queues and trigger throttling for unrelated tenants. Admission webhooks add latency that compounds under load across the shared surface.

Cluster-scoped resource collisions. CRDs, ClusterRoles, PodSecurityAdmission policies, and ValidatingWebhookConfigurations are cluster-scoped. A tenant that needs a specific CRD version conflicts directly with another tenant’s dependency on a different version. There is no namespaced escape hatch for this class of problem.

Threat Models Require Different Guarantees

Noisy-neighbor resource exhaustion, privilege escalation through misconfigured RBAC, and data exfiltration via compromised workloads are three distinct threat categories—and they do not share a solution. ResourceQuota addresses the first but does nothing for the second. RBAC hardening reduces escalation risk but cannot prevent a kernel exploit in a shared node pool from crossing tenant boundaries.

💡 Pro Tip: Map your threat model before you choose an isolation pattern. The operational overhead of dedicated clusters is only justified when your threat model includes privilege escalation or data exfiltration—not just noisy neighbors.

Understanding where shared control planes structurally fail sets the foundation for evaluating the isolation primitives available inside Kubernetes—starting with what the control plane actually consists of and which components isolation patterns target.

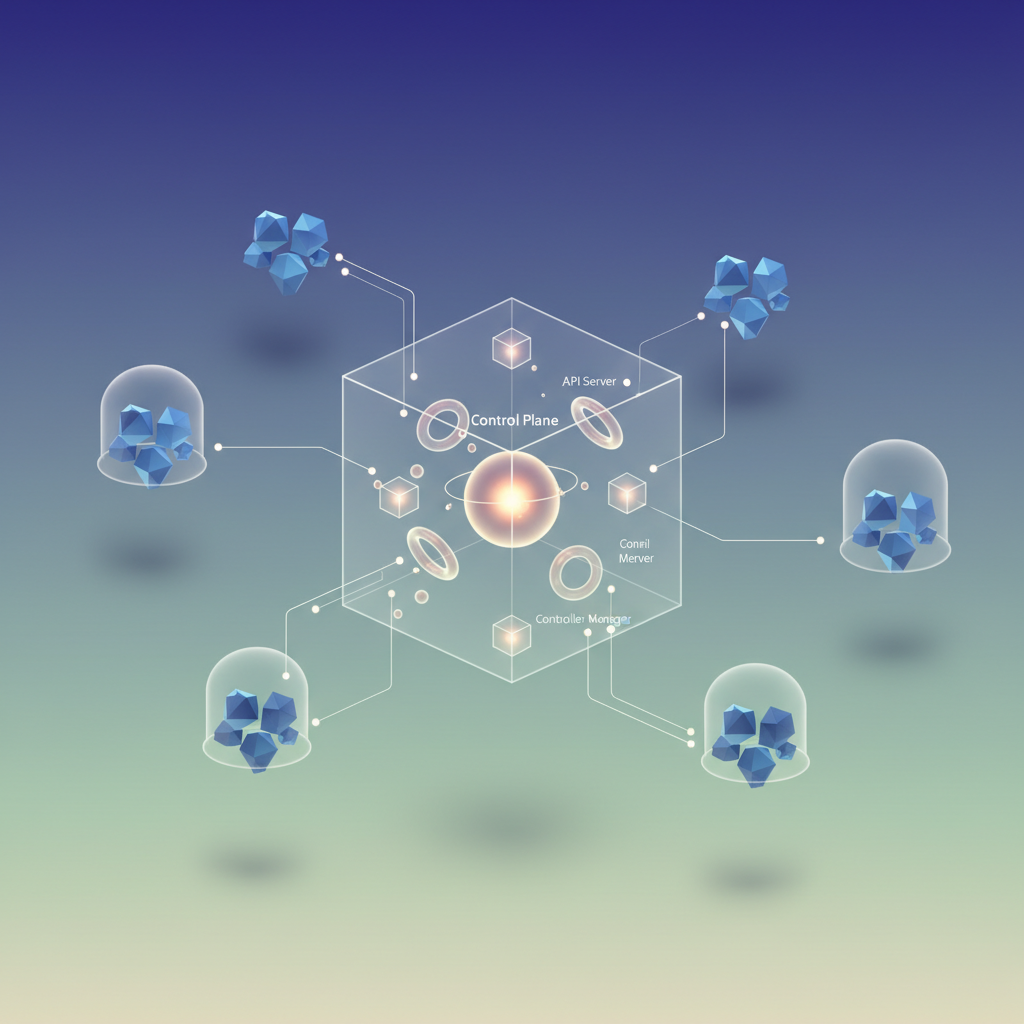

Anatomy of a Kubernetes Control Plane: What You’re Actually Isolating

Before choosing an isolation strategy, you need a precise mental model of what the control plane actually is—and what happens when tenants share it. Multi-tenancy discussions often treat the control plane as a black box, when in reality it’s four distinct components, each with its own failure modes and attack surface.

The Four Components and Why They Matter for Tenancy

kube-apiserver is the sole entry point for all cluster operations. Every kubectl command, every controller reconciliation loop, and every webhook callback routes through it. Under shared tenancy, a tenant generating excessive API calls—through a runaway operator, a misconfigured HPA, or deliberate abuse—saturates the API server’s request queue for everyone. Rate limiting via --max-requests-inflight and --max-mutating-requests-inflight helps, but these are cluster-wide knobs, not per-tenant controls.

etcd is where the blast radius of a control plane compromise becomes starkest. It stores the entire cluster state: every Secret, every ServiceAccount token, every ConfigMap, for all tenants, unpartitioned. A single etcd member under memory or disk pressure can trigger leader re-elections that cause API server request failures cluster-wide. Anyone who gains direct access to etcd, for example through leaked etcd client certificates or a compromised control plane node, can read every tenant’s Secrets, because Kubernetes RBAC is never consulted on that path. etcd has no native concept of namespaces or tenants; isolation is entirely an API server concern layered on top.

kube-scheduler evaluates pod placement against the full node pool. A tenant flooding the scheduler with unschedulable pods—requesting GPU resources that don’t exist, for example—creates a scheduling queue backlog. Other tenants experience pod startup latency even though their workloads are perfectly valid.

kube-controller-manager runs every built-in reconciliation loop: Deployments, StatefulSets, garbage collection, certificate rotation. These loops operate across all namespaces simultaneously. A tenant whose automation churns built-in objects at a pathological rate, for example a broken operator that creates and deletes Deployments in a tight loop, can starve the controller workers that other tenants depend on.

The Hidden Chokepoints: Webhooks and Aggregated APIs

RBAC controls who can do what through the API server. What it does not control is the path requests take once authorized. Admission webhooks, both validating and mutating, intercept requests before they are persisted to etcd. A poorly written webhook that times out or panics under load either fails open or blocks all matching requests cluster-wide, depending on its failurePolicy (Ignore or Fail), regardless of which tenant triggered the failure.

Aggregated APIs registered via APIService objects extend the API server at the routing layer. A tenant-deployed aggregated API that crashes takes down that API group for every client in the cluster.

💡 Pro Tip: Map your webhook coverage before designing tenancy boundaries. A single MutatingWebhookConfiguration with matchPolicy: Equivalent and no namespace selector is a cross-tenant chokepoint by definition.
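One way to map that coverage is to scan exported webhook configurations for entries that apply to every namespace. The following is a minimal sketch, not a definitive tool: it assumes the objects were exported with kubectl get mutatingwebhookconfigurations -o json and parsed into a dict, the function name unscoped_webhooks is hypothetical, and the sample data is invented for illustration. It treats a missing or empty namespaceSelector as matching all namespaces, which is how an empty label selector behaves.

```python
from typing import Any


def unscoped_webhooks(config_list: dict[str, Any]) -> list[str]:
    """Return "<configuration>/<webhook>" for webhooks that match every namespace.

    `config_list` is assumed to be the parsed output of
    `kubectl get mutatingwebhookconfigurations -o json` (a List object).
    """
    flagged = []
    for config in config_list.get("items", []):
        config_name = config["metadata"]["name"]
        for hook in config.get("webhooks", []):
            selector = hook.get("namespaceSelector") or {}
            # An empty label selector ({}), or none at all, matches all namespaces.
            has_terms = selector.get("matchLabels") or selector.get("matchExpressions")
            if not has_terms:
                flagged.append(f"{config_name}/{hook['name']}")
    return flagged


# Hypothetical sample data for illustration only.
sample = {
    "items": [
        {
            "metadata": {"name": "tenant-a-injector"},
            "webhooks": [
                {"name": "inject.tenant-a.example.com", "namespaceSelector": {}},
                {
                    "name": "scoped.tenant-a.example.com",
                    "namespaceSelector": {"matchLabels": {"tenant": "a"}},
                },
            ],
        }
    ]
}

print(unscoped_webhooks(sample))
```

The same function works for ValidatingWebhookConfigurations, since both kinds share the webhooks and namespaceSelector fields.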

Understanding which components have genuine per-tenant knobs versus which operate only at cluster scope is the foundation for the patterns that follow. The next section examines the first and most common approach—namespace-based soft tenancy—and the specific guardrails that make it viable for less sensitive workloads.

Isolation Pattern 1: Namespace-Based Soft Tenancy with Guard Rails

Namespace-based tenancy is the lowest-friction path to multi-tenancy: no additional infrastructure, no control plane duplication, and no new operator surface area. Done correctly, it enforces meaningful boundaries for the majority of internal tenants. Done naively, it creates a false sense of isolation that collapses the moment a tenant escalates privileges.

The pattern works by combining three enforcement layers: resource governance, network segmentation, and admission control. Each layer addresses a distinct failure mode.

Resource Governance with ResourceQuotas and LimitRanges

ResourceQuotas cap aggregate consumption per namespace. LimitRanges set per-container defaults and ceilings, preventing unbounded pods from consuming node resources before the scheduler can act.

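    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: tenant-alpha-quota
      namespace: team-alpha
    spec:
      hard:
        requests.cpu: "8"
        requests.memory: 16Gi
        limits.cpu: "16"
        limits.memory: 32Gi
        count/pods: "50"
        # Caps Services of type LoadBalancer; this quota name takes no count/ prefix
        services.loadbalancers: "2"
    ---
    apiVersion: v1
    kind: LimitRange
    metadata:
      name: tenant-alpha-limits
      namespace: team-alpha
    spec:
      limits:
        - type: Container
          # Applied as limits when a container declares none
          default:
            cpu: "500m"
            memory: 512Mi
          # Applied as requests when a container declares none
          defaultRequest:
            cpu: "100m"
            memory: 128Mi
          max:
            cpu: "4"
            memory: 8Gi

Without a LimitRange, the quota admission check rejects any pod that omits the requests or limits the quota tracks. The LimitRange injects defaults so that those pods are admitted and counted against the quota. Deploy both objects together for the guarantee to hold without breaking tenant workloads.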

Network Segmentation with NetworkPolicies

A default-deny ingress policy applied at namespace creation prevents lateral movement between tenants. Traffic is then selectively re-admitted for legitimate cross-namespace communication.

    apiVersion: networking.k8s.io/v1
    kind: NetworkPolicy
    metadata:
      name: default-deny-ingress
      namespace: team-alpha
    spec:
      podSelector: {}
      policyTypes:
        - Ingress

NetworkPolicy enforcement depends entirely on the CNI plugin. Flannel ignores these objects. Calico, Cilium, and Weave Net enforce them. Verify your CNI before treating this as a hard guarantee.

Delegated Administration with Hierarchical Namespace Controller

Granting cluster-admin to a tenant so they can manage their own namespaces is a common shortcut that breaks the entire isolation model. The Hierarchical Namespace Controller (HNC) solves this by introducing a parent-child namespace tree where subnamespace creation rights propagate without ClusterRole escalation.

    apiVersion: hnc.x-k8s.io/v1alpha2
    kind: SubnamespaceAnchor
    metadata:
      name: team-alpha-staging
      namespace: team-alpha

A tenant with admin on team-alpha creates this anchor to provision team-alpha-staging. HNC propagates Roles and RoleBindings from the parent by default, and propagates LimitRanges and NetworkPolicies once those types are enabled in its configuration. The tenant never touches the cluster-scoped API.

Where Soft Tenancy Breaks

Namespace isolation has three well-defined failure boundaries that operators must explicitly accept or mitigate.

ClusterRole aggregation labels. Any ClusterRole with rbac.authorization.k8s.io/aggregate-to-admin: "true" merges into the admin role cluster-wide. A tenant with admin on their namespace inherits any permissions added via aggregation by any other operator or Helm chart in the cluster.

CRD writes are cluster-scoped. A tenant with access to install CRDs writes to a cluster-scoped resource. A malicious or misconfigured CRD can shadow existing API group paths, affecting all tenants. There is no namespace-scoped CRD API.

PodSecurityAdmission gaps at the node boundary. PSA enforces policy at admission time, but it does not cover all privilege escalation vectors—particularly those exploiting node kernel vulnerabilities or misconfigured container runtimes. Namespace labels like pod-security.kubernetes.io/enforce: restricted reduce the attack surface but do not eliminate host escape risk.

💡 Pro Tip: Audit aggregated ClusterRoles before assuming your tenant admin bindings are scoped:

    kubectl get clusterrole -o json | jq '.items[] | select(.metadata.labels["rbac.authorization.k8s.io/aggregate-to-admin"] == "true") | .metadata.name'

Namespace tenancy is the right choice for internal teams operating under a shared trust model where the primary risks are noisy neighbors and accidental misconfiguration, not adversarial tenants. For workloads that require control plane isolation—separate API server audit logs, independent RBAC root-of-trust, or regulatory separation—the namespace boundary is insufficient. That gap is where virtual clusters become the relevant tool.

Isolation Pattern 2: Virtual Clusters with vCluster

Namespace isolation sets guardrails on a shared control plane. Physical cluster separation eliminates sharing entirely but multiplies operational cost linearly with tenant count. Virtual clusters occupy the middle ground: each tenant receives a fully functional Kubernetes API server with its own backing store (etcd, or an embedded database in lightweight distributions such as k3s), yet the underlying compute stays pooled on the host cluster.

vCluster achieves this by running a Kubernetes control plane (a lightweight distribution such as k3s, or full upstream Kubernetes, depending on chart version and configuration) inside a namespace on the host cluster. From a tenant’s perspective, they interact with a real API server, complete with their own RBAC, CRDs, and admission webhooks. From the host cluster’s perspective, a vCluster is just a StatefulSet, a Service, and a handful of synced workload resources.

Deploying a Virtual Cluster

The fastest path to a running vCluster uses the official Helm chart, configured here through a values file (vcluster-tenant-a.yaml):

    controlPlane:
      distro:
        k3s:
          enabled: true
          image:
            tag: "v1.31.0-k3s1"
      statefulSet:
        resources:
          requests:
            cpu: 200m
            memory: 256Mi
          limits:
            cpu: 1000m
            memory: 1Gi

    sync:
      toHost:
        pods:
          enabled: true
        persistentVolumeClaims:
          enabled: true
        services:
          enabled: true
      fromHost:
        nodes:
          enabled: true
          selector:
            all: true
        storageClasses:
          enabled: true

    networking:
      replicateServices:
        toHost:
          - from: default/frontend
            to: tenant-a/tenant-a-frontend

Install the chart with those values:

    helm repo add loft-sh https://charts.loft.sh
    helm repo update

    helm install tenant-a vcluster \
      --repo https://charts.loft.sh \
      --namespace tenant-a \
      --create-namespace \
      --values vcluster-tenant-a.yaml \
      --version 0.20.0

Once the StatefulSet reaches a ready state, retrieve the kubeconfig and hand it to the tenant:

    vcluster connect tenant-a --namespace tenant-a --server=https://tenant-a.internal.example.com

The tenant now operates against an API server that knows nothing about adjacent tenants, host-level system namespaces, or cluster-scoped resources they were never granted.

Understanding the Sync Boundary

The sync boundary is where vCluster’s complexity concentrates. The virtual control plane maintains its own object graph, but workloads must eventually land on host nodes. vCluster’s syncer translates virtual Pods into host Pods, rewriting each name to embed the virtual namespace and the vCluster name so that objects from different virtual namespaces cannot collide inside the single host namespace.

Resources that travel upward (virtual → host): Pods, PersistentVolumeClaims, Services, Endpoints, Secrets referenced by Pods, ConfigMaps referenced by Pods.

Resources that travel downward (host → virtual): Node objects, StorageClasses, IngressClasses, and optionally PersistentVolumes.

Resources that stay entirely virtual: Deployments, StatefulSets, DaemonSets, RBAC, CRDs, ValidatingWebhookConfigurations. The tenant’s control-plane objects never appear on the host.

This boundary has direct security implications. CRDs installed inside the virtual cluster do not pollute the host’s API surface. A broken admission webhook in the tenant’s virtual cluster does not affect host-cluster admission. Etcd data for tenant A is never co-mingled with tenant B’s state.

💡 Pro Tip: By default, vCluster syncs Secrets that Pods reference directly. If your tenants run workloads that mount Secrets via projected volumes or CSI drivers, audit your sync configuration explicitly—missing sync rules cause silent Pod scheduling failures that are non-obvious to debug.

Where the Model Has Limits

The host cluster still owns node scheduling. A tenant cannot configure taints, node selectors, or topology spread constraints that influence node provisioning; they see only what the host exposes through synced Node objects. Network policies defined inside the virtual cluster take effect only once they are synced to the host, so confirm that NetworkPolicy syncing is enabled in your vCluster configuration and that your CNI honors the synced policies at the host layer. If the CNI enforces policy at the namespace level using labels, the synced namespace (not the virtual one) carries those labels, and you need explicit host-side policy to enforce boundaries between tenants.

Storage is similarly mediated. StorageClasses are read from the host and reflected into the virtual cluster, but PersistentVolume lifecycle management flows through the syncer. Provisioner plugins that require CRDs on the host cluster (like Rook-Ceph) work, but only because their CRDs live on the host, not inside the virtual cluster.

Virtual clusters remove the shared-state failure modes and API surface exposure of a shared tenant-facing control plane without requiring dedicated node pools per tenant. For organizations running tens of tenants with meaningful isolation requirements but finite infrastructure budgets, they are the most operationally tractable option available today.

The final isolation tier, dedicated clusters managed through a shared management plane, removes every shared component except the management layer itself. That architecture is worth examining when your threat model requires hard node-level boundaries.

Isolation Pattern 3: Dedicated Clusters with a Shared Management Plane

When namespace quotas and virtual clusters do not provide sufficient isolation—whether due to regulatory requirements, customer contracts, or blast-radius concerns—dedicated clusters per tenant or environment represent the strongest available boundary. Each tenant gets an independent API server, etcd, scheduler, and controller manager. No shared kernel primitives, no overlapping API server audit logs, no etcd keyspace co-mingling. The trade-off is operational surface area: without deliberate tooling choices, per-cluster sprawl consumes your platform team faster than the security gains justify.

Control Plane Topology: Stacked vs. External etcd

kubeadm HA deployments give you two topologies. In the stacked topology, etcd members run on the same nodes as the control plane components. This reduces node count and simplifies networking, and works well for most dedicated-cluster scenarios where you are running one cluster per tenant with predictable workload profiles.

    apiVersion: kubeadm.k8s.io/v1beta3
    kind: ClusterConfiguration
    clusterName: tenant-acme-prod
    controlPlaneEndpoint: "acme-prod-lb.internal:6443"
    etcd:
      local:
        dataDir: /var/lib/etcd
        extraArgs:
          quota-backend-bytes: "8589934592"
          auto-compaction-retention: "1"
    kubernetesVersion: "v1.30.2"
    networking:
      podSubnet: "10.244.0.0/16"
      serviceSubnet: "10.96.0.0/12"

External etcd makes sense when tenants have dramatically different write amplification profiles: a CI workload generating thousands of pod events per minute can saturate etcd I/O and degrade API server latency for unrelated tenants sharing the same etcd ring. Separating etcd onto dedicated nodes with isolated NVMe disks eliminates that interference and lets you right-size storage independently of control plane compute.

For managed offerings (EKS, AKS, GKE), the control plane and etcd are fully abstracted; you pay per cluster in API costs but eliminate the etcd operations burden entirely, which is the right trade-off for most teams below 100 clusters.

💡 Pro Tip: On managed clusters, the per-cluster cost is rarely the bottleneck. The bottleneck is configuration drift across clusters and the absence of a single pane of glass for fleet state. Invest in fleet management before you invest in optimizing control plane topology.

Fleet Management to Prevent Operational Sprawl

The operational leverage comes from treating clusters as cattle, not pets. Cluster API (CAPI) models cluster lifecycle as Kubernetes resources, which means your existing GitOps pipeline provisions, upgrades, and decommissions clusters the same way it manages application deployments. Rancher Fleet is an alternative when you need a higher-level abstraction over heterogeneous providers without building CAPI infrastructure providers yourself.

    apiVersion: cluster.x-k8s.io/v1beta1
    kind: Cluster
    metadata:
      name: tenant-acme-prod
      namespace: capi-system
      labels:
        tenant: acme
        environment: production
        tier: dedicated
    spec:
      clusterNetwork:
        pods:
          cidrBlocks: ["10.244.0.0/16"]
      controlPlaneRef:
        apiVersion: controlplane.cluster.x-k8s.io/v1beta1
        kind: KubeadmControlPlane
        name: tenant-acme-prod-cp
      infrastructureRef:
        apiVersion: infrastructure.cluster.x-k8s.io/v1beta2
        kind: AWSCluster
        name: tenant-acme-prod

Once CAPI provisions the cluster and the cluster is registered with ArgoCD, the ApplicationSet controller distributes baseline platform configuration (logging agents, network policies, RBAC scaffolding, admission webhooks) across the fleet without per-cluster manual intervention.

    apiVersion: argoproj.io/v1alpha1
    kind: ApplicationSet
    metadata:
      name: platform-baseline
      namespace: argocd
    spec:
      generators:
        - clusters:
            # Matches labels on the ArgoCD cluster Secret, not on the CAPI Cluster object
            selector:
              matchLabels:
                tier: dedicated
      template:
        metadata:
          name: "platform-baseline-{{name}}"
        spec:
          project: platform
          source:
            repoURL: https://github.com/my-org/platform-config
            targetRevision: main
            path: baseline/
          destination:
            server: "{{server}}"
            namespace: kube-system
          syncPolicy:
            automated:
              prune: true
              selfHeal: true

This pattern keeps configuration consistent across 5 clusters and 50 clusters alike. The platform team defines the baseline once; CAPI and ArgoCD enforce it everywhere.

The critical insight is that the management plane itself remains shared and centralized; only the tenant clusters, control plane and data plane alike, are isolated. Your platform team retains a single operational surface for upgrades, policy enforcement, and observability, while each tenant retains a hard isolation boundary at the control plane level.

The dedicated-cluster model eliminates shared kernel exposure, cross-tenant API server traffic, and etcd keyspace co-mingling. Of the three patterns, it is the one best suited to strict compliance requirements around data residency and audit log separation. The next section translates these three patterns into a decision framework tied directly to your threat model and team capacity.

Decision Framework: Matching Isolation to Your Threat Model

Choosing an isolation pattern is not primarily a technical decision—it is a risk management decision that technical constraints then shape. The following framework maps organizational variables to isolation patterns without requiring you to prototype all three options before committing.

The Four-Axis Scoring Matrix

Evaluate your environment across four axes, each scored 1–3:

Regulatory exposure — Are tenants subject to compliance regimes (SOC 2, PCI-DSS, HIPAA) that require demonstrable control-plane separation? Score 1 for internal tooling with no compliance scope, 2 for compliance requirements satisfied by logical separation, 3 for requirements mandating cryptographic or physical isolation boundaries.

Tenant trust level — Do tenants share an organization (internal platform teams), represent vetted external customers, or represent arbitrary third-party workloads? Score 1 for fully trusted internal teams, 2 for vetted external customers under contract, 3 for untrusted or adversarial tenants.

Blast-radius tolerance — What is the acceptable failure domain? Score 1 if a single cluster outage is acceptable, 2 if tenant-level isolation from noisy neighbors is required but shared control plane is tolerable, 3 if a compromised tenant must have zero lateral movement potential.

Operational capacity — How large is your platform team relative to tenant count? Score 1 for a small team managing fewer than 20 tenants, 2 for a dedicated platform team with automation tooling, 3 for a large SRE organization with a GitOps-driven fleet management layer.

Sum your scores. A total of 4–6 maps to namespace-based soft tenancy with robust RBAC and admission controls. A total of 7–9 maps to virtual clusters, which deliver dedicated API servers and separate etcd state without proportional operational cost. A total of 10–12 maps to dedicated physical clusters behind a shared management plane.

Inflection Points That Override the Score

Two conditions override the matrix entirely. First, if any tenant runs privileged workloads or requires kernel module loading, namespace isolation is disqualified regardless of score—the syscall surface is shared. Second, if your tenant count exceeds 150 and your platform team is fewer than five engineers, virtual clusters with a GitOps provisioning layer become operationally mandatory; the per-cluster overhead of dedicated clusters is not sustainable at that ratio without significant automation investment.
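The scoring bands and the two overrides can be written down as a small function, which makes the decision reviewable alongside the rest of your platform code. This is a sketch under stated assumptions rather than a definitive policy: the names TenancyProfile and recommend are hypothetical, and the way each override resolves (privileged workloads move a namespace result up to virtual clusters; the tenant-to-engineer ratio forces virtual clusters) is one reading of the text above.

```python
from dataclasses import dataclass

NAMESPACES = "namespace-based soft tenancy"
VIRTUAL = "virtual clusters"
DEDICATED = "dedicated clusters"


@dataclass
class TenancyProfile:
    # Each axis is scored 1-3, as in the matrix.
    regulatory_exposure: int
    tenant_trust: int
    blast_radius: int
    operational_capacity: int
    privileged_workloads: bool = False  # includes kernel module loading
    tenant_count: int = 0
    platform_engineers: int = 0


def recommend(profile: TenancyProfile) -> str:
    axes = (
        profile.regulatory_exposure,
        profile.tenant_trust,
        profile.blast_radius,
        profile.operational_capacity,
    )
    if any(score not in (1, 2, 3) for score in axes):
        raise ValueError("each axis must be scored 1, 2, or 3")

    total = sum(axes)
    if total <= 6:
        pattern = NAMESPACES
    elif total <= 9:
        pattern = VIRTUAL
    else:
        pattern = DEDICATED

    # Override (assumed reading): more than 150 tenants with fewer than
    # five engineers makes virtual clusters the only sustainable option.
    if profile.tenant_count > 150 and profile.platform_engineers < 5:
        return VIRTUAL

    # Override (assumed reading): privileged workloads rule out
    # namespace tenancy, so move up to the next tier.
    if profile.privileged_workloads and pattern == NAMESPACES:
        return VIRTUAL

    return pattern


print(recommend(TenancyProfile(1, 1, 2, 1)))
print(recommend(TenancyProfile(3, 3, 3, 3, tenant_count=200, platform_engineers=3)))
```

Keeping the overrides as explicit branches, rather than folding them into the score, mirrors the point that they bypass the matrix entirely.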

Migration Path: Soft to Hard Without Re-Architecting Tenants

The practical migration sequence is additive, not disruptive. Namespace tenants are promoted to virtual clusters by deploying a vCluster instance and syncing existing workloads via label selectors—tenant manifests require no changes. Virtual cluster tenants are promoted to dedicated clusters by migrating the vCluster control plane to a real cluster and updating the fleet management plane’s routing configuration. Tenants observe a DNS and kubeconfig change; their workloads remain identical.

💡 Pro Tip: Instrument tenant promotion as a first-class platform operation from day one. The teams that migrate smoothly are those that treated isolation level as a mutable attribute of a tenant object in their control plane, not as a deployment-time constant baked into cluster naming conventions.
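To make "isolation level as a mutable attribute" concrete, here is a minimal sketch of a tenant record whose isolation level can be promoted at runtime. Everything in it is hypothetical (the Tenant and IsolationLevel names, the history field); a real platform would attach the provisioning and migration steps described above to each transition.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class IsolationLevel(IntEnum):
    NAMESPACE = 1
    VIRTUAL_CLUSTER = 2
    DEDICATED_CLUSTER = 3


@dataclass
class Tenant:
    name: str
    isolation: IsolationLevel = IsolationLevel.NAMESPACE
    # Previous levels, oldest first, so promotions stay auditable.
    history: list[IsolationLevel] = field(default_factory=list)

    def promote(self) -> IsolationLevel:
        """Move the tenant one step up the isolation spectrum."""
        if self.isolation == IsolationLevel.DEDICATED_CLUSTER:
            raise ValueError(f"{self.name} is already at the highest isolation level")
        self.history.append(self.isolation)
        self.isolation = IsolationLevel(self.isolation + 1)
        return self.isolation


tenant = Tenant("acme")
tenant.promote()
print(tenant.name, tenant.isolation.name, [level.name for level in tenant.history])
```

Because the level lives on the tenant record rather than in a cluster name, nothing downstream has to be renamed when a tenant moves.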

The isolation decision is never permanent. What matters is that your initial choice is reversible without forcing tenants to re-architect—which is exactly what the operational layer must guarantee, and what the next section addresses directly.

Operational Considerations: Monitoring, Upgrades, and Incident Scope

Running a fleet of isolated control planes shifts operational burden in predictable ways. You gain blast radius containment; you pay for it with multiplied instrumentation, upgrade coordination, and runbook complexity. Understanding exactly where those costs land lets you staff and automate accordingly.

Per-Control-Plane Observability Without Alert Fatigue

Each dedicated control plane exposes its own /metrics endpoint on the API server and etcd. Scraping these across a fleet of twenty clusters without drowning your on-call rotation requires a federated approach: per-cluster Prometheus instances that remote-write to a central receiver, or a Thanos/Mimir layer that aggregates across tenants while preserving cluster labels.

The critical label is cluster. Every alert rule must carry it, or you lose the ability to route incidents to the right team. Establish this label convention before your first cluster is provisioned—retrofitting it across a live fleet is painful.
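The routing dependency on that label can be shown in a few lines. This is an illustrative sketch only: route_alert, the owners table, and the fallback receiver name are all hypothetical, and in practice this logic usually lives in your alert router's configuration rather than in application code.

```python
def route_alert(labels: dict[str, str], owners: dict[str, str],
                fallback: str = "platform-oncall") -> str:
    """Pick the receiving team for an alert from its cluster label."""
    cluster = labels.get("cluster")
    if not cluster:
        # Without the label there is no way to tell which fleet member fired.
        raise ValueError("alert is missing the 'cluster' label")
    return owners.get(cluster, fallback)


# Hypothetical ownership table and alert labels.
owners = {"tenant-acme-prod": "team-acme", "tenant-globex-prod": "team-globex"}
alert = {"alertname": "EtcdSlowFsync", "cluster": "tenant-acme-prod"}
print(route_alert(alert, owners))
```

An alert that arrives without the label fails loudly here, which is the behavior you want to catch in testing rather than during an incident.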

    scrape_configs:
      - job_name: kube-apiserver
        kubernetes_sd_configs:
          - role: endpoints
            # Discover targets in the tenant cluster, not the local one
            kubeconfig_file: /etc/prometheus/kubeconfigs/tenant-acme.yaml
        scheme: https
        tls_config:
          ca_file: /etc/prometheus/certs/tenant-acme-ca.crt
        relabel_configs:
          # Keep only endpoints in the default namespace
          - source_labels: [__meta_kubernetes_namespace]
            action: keep
            regex: default
          # Stamp every series from this job with the cluster label
          - target_label: cluster
            replacement: tenant-acme-prod
      - job_name: etcd
        static_configs:
          - targets: ["10.0.1.15:2381", "10.0.1.16:2381", "10.0.1.17:2381"]
            labels:
              cluster: tenant-acme-prod
        scheme: https
        tls_config:
          cert_file: /etc/prometheus/certs/etcd-client.crt
          key_file: /etc/prometheus/certs/etcd-client.key
          ca_file: /etc/prometheus/certs/etcd-ca.crt

Gate your alerting on cluster-scoped aggregations. A single etcd_disk_wal_fsync_duration_seconds spike in tenant-acme-prod fires a targeted page, not a fleet-wide incident. Pair this with per-cluster SLO burn-rate alerts so you catch gradual API server degradation before tenants file tickets. Recording rules that pre-aggregate per-cluster request latency and error rates keep query costs manageable as your fleet scales.

Upgrade Sequencing Across a Cluster Fleet

Upgrading twenty control planes in lockstep is a single outage waiting to happen. Canary sequencing with Cluster API gives you structured rollout without custom scripting.

    apiVersion: cluster.x-k8s.io/v1beta1
    kind: ClusterClass
    metadata:
      name: tenant-standard
      namespace: fleet-management
    spec:
      controlPlane:
        # The control plane template reference (controlPlane.ref) is not shown in this excerpt
        machineInfrastructure:
          ref:
            apiVersion: infrastructure.cluster.x-k8s.io/v1beta1
            kind: AWSMachineTemplate
            name: cp-template-v1-28-6
      variables:
        - name: kubernetesVersion
          required: true
          schema:
            openAPIV3Schema:
              type: string
              default: "1.28.6"

Roll upgrades in tiers: canary cluster (one low-traffic tenant), then a 10% cohort, then the remainder. Each tier soaks for 48 hours before promotion. Automate the promotion gate using health checks against the API server’s /readyz endpoint and a requirement of zero etcd leader elections during the soak window. Managed control plane providers like EKS and GKE support rolling API server updates natively; use their maintenance windows rather than reimplementing the logic yourself.
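The tiering and the promotion gate described above can be sketched in a few lines. This is an illustration under assumptions, not a rollout controller: plan_tiers and may_promote are hypothetical names, the fleet is invented, and the gate inputs (the /readyz result and the etcd leader-change count for the soak window) are assumed to be collected elsewhere, for example from your monitoring stack.

```python
import math


def plan_tiers(clusters: list[str], canary: str,
               cohort_percent: int = 10) -> list[list[str]]:
    """Split a fleet into canary, early cohort, and remainder tiers."""
    if canary not in clusters:
        raise ValueError(f"canary {canary!r} is not in the fleet")
    rest = [c for c in clusters if c != canary]
    # Round up so that a small fleet still gets a non-empty cohort.
    cohort_size = min(len(rest), math.ceil(len(clusters) * cohort_percent / 100))
    return [[canary], rest[:cohort_size], rest[cohort_size:]]


def may_promote(readyz_ok: bool, leader_changes: int, soak_hours: float,
                required_soak_hours: float = 48) -> bool:
    """Promotion gate: healthy API server, no etcd leader changes, full soak."""
    return readyz_ok and leader_changes == 0 and soak_hours >= required_soak_hours


fleet = [f"tenant-{i:02d}" for i in range(1, 21)]  # hypothetical 20-cluster fleet
tiers = plan_tiers(fleet, canary="tenant-07")
print([len(tier) for tier in tiers])
print(may_promote(readyz_ok=True, leader_changes=0, soak_hours=48))
```

Keeping the gate as a pure function of observed values makes it easy to unit test and to reuse for every tier boundary.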

💡 Pro Tip: Pin your ClusterClass version in version control and use a GitOps controller to detect drift. A control plane running 1.27.x while its ClusterClass targets 1.28.x is an upgrade debt that compounds silently.

Incident Containment: What the Runbook Actually Covers

Hard tenancy changes the incident scope in concrete terms. An etcd compaction failure in tenant-acme-prod does not affect tenant-globex-prod. Your runbook branch point moves earlier: the first triage question is “which cluster?” not “how many tenants are affected?” This scoping change reduces mean time to diagnosis because responders work with a bounded failure domain from the first page.

Structure your runbooks to reflect this explicitly. The initial decision tree should branch on cluster identity, then on component—API server, etcd, scheduler, or controller manager—before any tenant-impact assessment begins. Responders who default to shared-cluster instincts will waste time checking unaffected clusters.

What remains cross-cluster: your management plane, shared ingress or service mesh infrastructure, and any shared identity providers. These are your true blast radius boundaries. Document them explicitly in your runbooks so responders do not waste time checking tenant clusters during a management plane incident. Treat the management plane as a separate tier in your severity definitions—an outage there affects every tenant regardless of control plane isolation.

The patterns and operational trade-offs covered across these sections reduce to a clear decision sequence: audit your threat model, match it against the isolation spectrum, choose the simplest pattern that satisfies your actual requirements, and build reversible tenant promotion into the platform from the start. The teams that get this right treat isolation level as a runtime attribute—not a permanent architectural commitment—and build the automation to move tenants across the spectrum as their requirements evolve.

Key Takeaways

- Audit your current tenancy model against a concrete threat matrix before choosing isolation depth—namespace RBAC is sufficient for trusted internal teams but inadequate when tenants have different blast-radius or compliance requirements

- Deploy vCluster as a low-infrastructure-overhead stepping stone to hard tenancy: you get dedicated API server and etcd isolation without provisioning separate nodes, making it the right default for most multi-tenant platform builds

- Automate cluster lifecycle management with Cluster API or a managed fleet tool before scaling beyond three physical clusters—manual kubeadm HA setups become the bottleneck faster than the control plane isolation itself