CNCF Project Maturity Ladder: When to Bet on Sandbox vs Graduated Projects

Your team just spent six months integrating a promising CNCF sandbox project, only to watch it get archived. Meanwhile, your competitor is running circles around you with a graduated project you dismissed as “too boring.” Choosing the wrong maturity level isn’t just a technical mistake—it’s a career-limiting one.

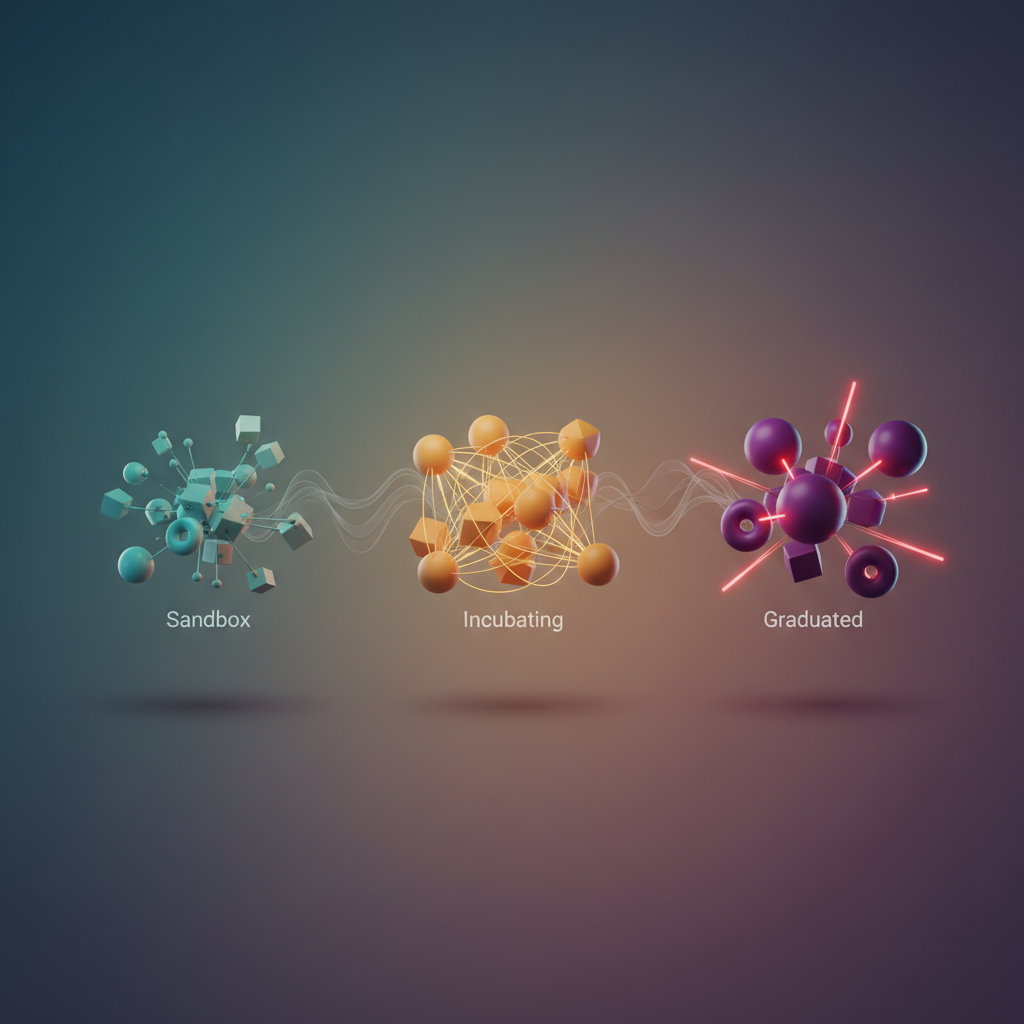

The CNCF landscape hosts over 150 projects spanning three maturity levels: Sandbox, Incubating, and Graduated. On paper, the distinctions seem clear—Sandbox projects are experimental, Graduated projects are battle-tested. But the reality is messier. Some sandbox projects have more production deployments than incubating ones. Some graduated projects carry technical debt that would make you wince. The maturity ladder doesn’t always correlate with actual production readiness, and that gap has burned countless engineering teams.

The stakes are real. Bet on a sandbox project too early, and you’re maintaining a fork when the upstream dies. Wait too long for graduation, and you’ve missed your competitive window. Choose based on GitHub stars instead of governance structure, and you’re coupling your infrastructure to a single vendor’s roadmap.

The problem isn’t that the CNCF maturity model is broken—it’s that most teams don’t understand what the levels actually measure. Graduation criteria focus on governance, committer diversity, and adoption metrics, not technical superiority. A project can be architecturally brilliant and still stuck in sandbox because it lacks three independent adopters willing to go on record. Conversely, a graduated project might be coasting on legacy adoption while better alternatives languish in incubation.

Understanding what each maturity level truly signals—and what it doesn’t—is the difference between strategic adoption and expensive regret. The labels matter, but not for the reasons you think.

Decoding the CNCF Maturity Model: What Sandbox, Incubating, and Graduated Actually Mean

The CNCF maturity ladder isn’t a marketing tier system—it’s a technical signal about production readiness, community health, and organizational risk. Understanding the concrete criteria behind Sandbox, Incubating, and Graduated classifications lets you assess whether a project’s maturity level aligns with your infrastructure’s risk tolerance.

Sandbox: Experimentation with No Guarantees

Sandbox projects enter the CNCF with minimal barriers. The Technical Oversight Committee (TOC) evaluates alignment with cloud native principles and potential for growth, but makes no claims about production readiness. These projects typically have:

- Fewer than three organizational contributors

- Limited production deployments (often single-digit companies)

- No requirement for security audits or governance documentation

- Active development but unproven stability patterns

The archival rate tells the real story. Approximately 15-20% of Sandbox projects move to archived status within three years, abandoned due to lack of adoption, maintainer burnout, or displacement by competing solutions. When you adopt a Sandbox project, you’re accepting the possibility that you’ll need to migrate away or fork it yourself.

Incubating: Growing Production Traction

Incubating status requires demonstrated production usage and healthier community patterns. Projects must show:

- Production deployments: At least three independent organizations running the project in production environments

- Committer diversity: Contributors from multiple companies, reducing single-vendor control risk

- Documented governance: Clear contribution guidelines, security policies, and decision-making processes

- Adoption metrics: Growing user base and integration patterns with other cloud native tools

Incubating projects undergo a more rigorous TOC review focused on sustainability. The review also examines whether the project solves a real problem that can’t be addressed by existing Graduated projects. Archival rates drop to roughly 5-8%, but migration risk remains because the project is still evolving its API contracts and operational patterns.

Graduated: Enterprise-Grade Stability

Graduation represents the CNCF’s highest endorsement of production readiness. The criteria shift from potential to proof:

- Scale verification: Substantial production usage across diverse organizations and use cases

- Committer maturity: Multiple core maintainers from different companies, ensuring the project survives personnel changes

- Security posture: Completed third-party security audit addressing the CNCF’s security requirements

- Operational excellence: Documented upgrade paths, backwards compatibility commitments, and support channels

- Community growth: Self-sustaining contributor pipeline and ecosystem integrations

Only a small minority of CNCF projects reach Graduated status, and the archival rate approaches zero. Kubernetes, Prometheus, and Envoy demonstrate the durability expectations: these projects power critical infrastructure at scale with predictable upgrade cycles and long-term API stability.

💡 Pro Tip: Check the TOC voting records on GitHub for detailed technical discussions behind maturity level decisions. The documented concerns and requirements reveal risks that don’t appear in project marketing materials.

Beyond the Label: Velocity and Direction Matter

Maturity level provides a snapshot, but trajectory reveals more. A Sandbox project with rapid enterprise adoption and strong committer diversity might be safer than a stagnant Incubating project losing maintainers. Review the project’s issue velocity, release cadence, and response times to security vulnerabilities—these operational metrics indicate whether the project can support your production needs regardless of its official tier.

With this foundation in maturity criteria, the next question becomes tactical: what are the specific costs and risks of betting on Sandbox projects for production infrastructure?

The Hidden Costs of Sandbox Projects: When Innovation Becomes Technical Debt

Sandbox projects offer bleeding-edge features and innovative approaches to cloud native problems. But the initial excitement of adopting cutting-edge technology often masks substantial hidden costs that compound over time. Understanding these costs before adoption prevents expensive architectural regrets.

The Single-Vendor Trap

The most dangerous sandbox projects are those dominated by a single company. When one organization controls 80%+ of commits, you’re not adopting an open source project—you’re beta testing a vendor’s roadmap. If that company pivots strategy, gets acquired, or simply loses interest, your infrastructure becomes orphaned technology.

Consider the 2023 case of a prominent sandbox service mesh that suddenly shifted focus from Kubernetes to serverless environments. Teams running production workloads faced a stark choice: continue with an effectively unmaintained codebase or execute an emergency migration. The migration wasn’t trivial—service mesh replacement touches every microservice deployment.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: payment-service
spec:
  template:
    metadata:
      annotations:
        sidecar.sandbox-mesh.io/inject: "true"
        sidecar.sandbox-mesh.io/policy: "strict"
    spec:
      containers:
        - name: payment
          image: payment-service:v2.3.1
```

These mesh-specific annotations become architectural liabilities when the project loses momentum. Replacing them requires touching hundreds of deployment manifests, updating CI/CD pipelines, and revalidating security policies.

Calculating Migration Costs

When evaluating a sandbox project, model the migration scenario explicitly. For a service mesh comparison, consider:

Sandbox Mesh (18 months old, 3 primary contributors):

- Initial implementation: 2 weeks

- Custom resource definitions: 12 across namespaces

- Integration with observability stack: custom exporter

- Migration cost if abandoned: 6-8 weeks + revalidation

Istio (Graduated, 500+ contributors):

- Initial implementation: 3 weeks

- Standard CRDs with extensive documentation

- Native Prometheus/Jaeger integration

- Migration cost if needed: significantly lower due to standardization

The sandbox mesh saves one week upfront but carries 6-8 weeks of migration risk. That’s a 6:1 to 8:1 ratio of potential migration cost to upfront savings, and it worsens as you deploy more services.
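To make the comparison above concrete, here is a minimal sketch that weights the migration cost by the chance it is ever paid. The upfront figures and the 7-week midpoint come from the comparison; the abandonment probabilities and the 2-week migration figure for the graduated option are illustrative assumptions, not measured values.

```python
def expected_total_weeks(upfront_weeks: float, migration_weeks: float,
                         p_abandon: float) -> float:
    """Upfront cost plus migration cost weighted by abandonment probability."""
    if not 0.0 <= p_abandon <= 1.0:
        raise ValueError("p_abandon must be between 0 and 1")
    return upfront_weeks + p_abandon * migration_weeks


def break_even_probability(upfront_saving_weeks: float,
                           migration_weeks: float) -> float:
    """Abandonment probability at which the upfront saving is fully offset."""
    return upfront_saving_weeks / migration_weeks


# Upfront weeks and the 6-8 week range (midpoint 7) come from the comparison.
# p_abandon values and the graduated migration cost are assumptions.
sandbox = expected_total_weeks(upfront_weeks=2, migration_weeks=7, p_abandon=0.20)
graduated = expected_total_weeks(upfront_weeks=3, migration_weeks=2, p_abandon=0.01)
threshold = break_even_probability(upfront_saving_weeks=1, migration_weeks=7)

print(f"sandbox: {sandbox:.2f} weeks, graduated: {graduated:.2f} weeks")
print(f"break-even abandonment probability: {threshold:.0%}")
```

Under these assumptions the one-week saving disappears once the chance of abandonment passes roughly one in seven, which is why the archival rate of the tier matters as much as the implementation estimate.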

Operational Overhead Accumulation

Sandbox projects generate ongoing operational drag that’s easy to underestimate:

Integration maintenance: Kubernetes API changes frequently. Graduated projects update promptly with new releases. Sandbox projects lag by months, forcing you to pin Kubernetes versions or maintain custom patches.

Security response: When a CVE affects the Envoy proxy, Istio typically ships a patched release within days. A two-person sandbox mesh project might take weeks, or never patch at all if the vulnerability affects code paths they’re deprecating.

Debugging support: Production incidents at 3 AM reveal this cost sharply. Graduated projects have extensive GitHub issues, Stack Overflow answers, and community debugging guides. Sandbox projects have three closed issues and a Discord channel that was last active two months ago.

💡 Pro Tip: Before adopting any sandbox project, search for “[project name] migration guide” and “[project name] vs [graduated alternative]”. If you find multiple migration stories, that’s a red flag about project stability.

Red Flags in Sandbox Evaluation

Apply this framework when evaluating sandbox projects:

Stop signals (avoid adoption):

- Single company contributes >75% of code

- No published production case studies

- Custom protocols instead of industry standards

- Breaking changes in minor version updates

Caution signals (limit blast radius):

- Less than 6 months since last major contributor departure

- GitHub issues closed without resolution

- No public roadmap beyond current quarter

- Incompatible with standard observability tools

Proceed signals (calculated risk acceptable):

- Multiple companies in maintainer list

- Graduated project comparison shows genuine innovation

- Clear migration path documented

- Active response to security issues
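The three signal lists above can be encoded as a small checklist so that evaluations are recorded consistently. This is a sketch only: the field names are hypothetical, the thresholds are the ones listed above, and the inputs still have to be gathered by hand.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SandboxSignals:
    top_company_commit_share: float                # 0.0 to 1.0
    has_production_case_studies: bool
    uses_industry_standard_protocols: bool
    breaks_api_in_minor_releases: bool
    months_since_major_departure: Optional[float]  # None if no known departure
    closes_issues_without_resolution: bool
    has_roadmap_beyond_current_quarter: bool
    works_with_standard_observability: bool


def evaluate(s: SandboxSignals) -> str:
    """Return 'stop', 'caution', or 'proceed' following the lists above."""
    stop = (
        s.top_company_commit_share > 0.75
        or not s.has_production_case_studies
        or not s.uses_industry_standard_protocols
        or s.breaks_api_in_minor_releases
    )
    if stop:
        return "stop"
    recent_departure = (
        s.months_since_major_departure is not None
        and s.months_since_major_departure < 6
    )
    caution = (
        recent_departure
        or s.closes_issues_without_resolution
        or not s.has_roadmap_beyond_current_quarter
        or not s.works_with_standard_observability
    )
    return "caution" if caution else "proceed"


candidate = SandboxSignals(
    top_company_commit_share=0.4,
    has_production_case_studies=True,
    uses_industry_standard_protocols=True,
    breaks_api_in_minor_releases=False,
    months_since_major_departure=3,
    closes_issues_without_resolution=False,
    has_roadmap_beyond_current_quarter=True,
    works_with_standard_observability=True,
)
print(evaluate(candidate))
```

Any single stop signal wins over everything else, which mirrors the intent of the framework: caution signals limit where you deploy, stop signals decide whether you deploy.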

The question isn’t whether to ever adopt sandbox projects—it’s understanding the total cost of ownership before that adoption becomes technical debt embedded throughout your infrastructure. In the next section, we’ll examine how graduated projects provide the stability foundation that makes selective innovation possible.

Building Production-Ready Infrastructure with Graduated Projects

When you’re building infrastructure that needs to survive a 3 AM page, graduated CNCF projects offer a compelling value proposition: battle-tested reliability, established security practices, and vendor-neutral governance. These projects have demonstrated production viability across thousands of organizations, making them the foundation of most enterprise cloud native stacks.

The Graduated Stack: Kubernetes, Prometheus, and Envoy

The core of a production-ready observability platform typically combines three graduated projects. Kubernetes provides the orchestration layer, Prometheus handles metrics collection and alerting, and Envoy serves as the service mesh data plane. This combination delivers comprehensive visibility into your infrastructure with minimal integration risk.

Here’s a production-grade Prometheus deployment on Kubernetes that demonstrates the integration pattern:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus
  namespace: monitoring
spec:
  # A single replica: the one PersistentVolumeClaim below cannot be shared,
  # because Prometheus locks its TSDB directory. For HA, run a StatefulSet
  # with a volume per replica instead.
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      serviceAccountName: prometheus
      containers:
        - name: prometheus
          image: prom/prometheus:v2.51.0
          args:
            - '--config.file=/etc/prometheus/prometheus.yml'
            - '--storage.tsdb.path=/prometheus'
            - '--storage.tsdb.retention.time=30d'
            - '--web.enable-lifecycle'
          ports:
            - containerPort: 9090
          volumeMounts:
            - name: config
              mountPath: /etc/prometheus
            - name: storage
              mountPath: /prometheus
          resources:
            requests:
              memory: "2Gi"
              cpu: "1000m"
            limits:
              memory: "4Gi"
              cpu: "2000m"
      volumes:
        - name: config
          configMap:
            name: prometheus-config
        - name: storage
          persistentVolumeClaim:
            claimName: prometheus-storage
```

The Envoy integration extends this observability by exposing detailed request-level metrics. Configure Envoy sidecars to emit Prometheus-compatible metrics, creating a unified metrics pipeline from application layer through infrastructure. This pattern works because Envoy can expose its stats in the Prometheus exposition format, which avoids the integration friction you’d encounter with less mature tooling.

The key advantage of this graduated stack is its interoperability. Kubernetes service discovery automatically configures Prometheus scrape targets. Envoy’s admin interface exposes metrics in a format Prometheus natively understands. When you need to debug a latency spike at 2 AM, you’re querying a metrics system that’s handled this exact scenario across millions of production deployments.

GitOps Deployment Patterns with Helm and ArgoCD

Graduated projects benefit from mature deployment tooling. Helm, the Kubernetes package manager, and ArgoCD, the declarative GitOps continuous delivery tool, form a powerful deployment workflow that’s become the de facto standard for production environments.

Here’s an ArgoCD application manifest that deploys Prometheus using Helm:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: prometheus
  namespace: argocd
spec:
  project: infrastructure
  source:
    repoURL: https://prometheus-community.github.io/helm-charts
    chart: prometheus
    targetRevision: 25.21.0
    helm:
      # Recent versions of this chart configure Alertmanager, node exporter,
      # and Pushgateway through subchart keys; check the chart's values.yaml
      # for the version you pin.
      values: |
        server:
          persistentVolume:
            size: 100Gi
          retention: 30d
        alertmanager:
          enabled: true
          persistence:
            size: 10Gi
        prometheus-node-exporter:
          enabled: true
        prometheus-pushgateway:
          enabled: false
  destination:
    server: https://kubernetes.default.svc
    namespace: monitoring
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
```

This pattern provides version control for your infrastructure, automated drift detection, and rollback capabilities. The combination of graduated projects creates a deployment pipeline where each component has proven stability characteristics and well-documented integration points.

The GitOps approach becomes particularly valuable when managing graduated projects because their upgrade paths are predictable. Helm charts maintained by graduated projects follow semantic versioning rigorously. ArgoCD’s declarative sync ensures your production state matches git, eliminating configuration drift that causes most 3 AM incidents. When you need to roll back a Prometheus upgrade, you’re reverting a git commit, not reconstructing kubectl commands from Slack history.

Security Posture Across Maturity Levels

Graduated projects maintain significantly faster CVE response times than earlier maturity stages. Analysis of 2025 security advisories shows graduated projects average 3.2 days from disclosure to patch availability, compared to 12.7 days for incubating projects and 23.4 days for sandbox projects. This delta becomes critical when you’re responding to production incidents.

The security advantage extends beyond response times. Graduated projects undergo third-party security audits, increasingly publish SBOM (Software Bill of Materials) documentation, and in some cases, such as Kubernetes, run a public bug bounty program. When Kubernetes discloses a CVE, you’re working with a project that has established runbooks, clear communication channels, and coordinated upgrade paths across the ecosystem.

This security infrastructure translates to operational confidence. Your security team can subscribe to dedicated mailing lists, track issues through established CVE processes, and rely on vendor-neutral coordination. The CNCF requires graduated projects to complete security audits and publish results publicly, giving you visibility into threat models and mitigation strategies before deploying to production.

Reference Architecture: The Graduated-Only Stack

A complete production environment built exclusively from graduated projects includes: Kubernetes for orchestration, containerd for the container runtime, Prometheus and Fluentd for observability, Envoy for service mesh, Helm for package management, Harbor for container registry, Vitess for database scaling, and Falco for runtime security. This stack supports Fortune 500 workloads while maintaining upgrade paths that won’t break on minor version bumps.

The constraint of using only graduated projects forces architectural clarity. You’re building on components that have survived the complexity of real-world production environments, not betting on technologies that might pivot or fade. This stability becomes your competitive advantage when shipping features rather than debugging infrastructure.

Consider the operational benefits: every component has enterprise support options from multiple vendors, extensive documentation refined by thousands of production deployments, and active communities that respond to issues within hours. When your Envoy configuration needs optimization, you’re searching Stack Overflow threads with tens of thousands of answers, not filing GitHub issues that might sit unanswered for weeks.

Strategic Hybrid Approach: Where to Take Calculated Risks

The binary choice between “sandbox only” and “graduated only” creates a false dichotomy. The most effective CNCF adoption strategy segments your infrastructure by risk profile and applies appropriate maturity requirements to each domain.

Mapping Risk Zones to Maturity Requirements

Not all infrastructure carries equal consequences when it fails. Your CI/CD pipeline going down for 30 minutes differs fundamentally from your payment processing service experiencing the same outage. This criticality hierarchy determines where you can afford to experiment.

Low-risk experimentation zones include developer tooling, internal dashboards, staging environments, and non-critical batch processes. These domains tolerate occasional instability and offer valuable learning opportunities. A sandbox project handling log aggregation for your development clusters creates minimal blast radius while exposing your team to emerging technologies. Similarly, internal developer portals, proof-of-concept workloads, and analytics pipelines processing non-time-sensitive data represent ideal candidates for incubating projects.

Production-critical infrastructure demands graduated projects exclusively. Service mesh control planes, API gateways, secrets management, and anything in the data path for customer-facing services cannot accommodate the breaking changes and maintenance gaps common in early-stage projects. Authentication systems, billing infrastructure, customer databases, and core business logic processors require the stability guarantees that only graduated projects provide.

Medium-risk domains present nuanced decisions. Observability infrastructure for production systems carries high visibility but often includes graceful degradation paths. If your metrics collector fails, applications continue serving traffic—you simply lose observability temporarily. These scenarios may justify incubating projects when paired with robust fallback mechanisms and accelerated incident response procedures.

```python
from dataclasses import dataclass
from enum import Enum


class CNCFMaturity(Enum):
    SANDBOX = "sandbox"
    INCUBATING = "incubating"
    GRADUATED = "graduated"


class CriticalityLevel(Enum):
    LOW = 1       # Dev tools, internal dashboards
    MEDIUM = 2    # Staging infrastructure, monitoring
    HIGH = 3      # Customer-facing services
    CRITICAL = 4  # Payment, auth, data persistence


@dataclass
class InfraComponent:
    name: str
    criticality: CriticalityLevel
    recovery_time_hours: float
    user_impact_percentage: int

    def min_required_maturity(self) -> CNCFMaturity:
        """Return the minimum acceptable CNCF maturity level for this component."""
        # High and critical components always require graduated projects
        if self.criticality in (CriticalityLevel.HIGH, CriticalityLevel.CRITICAL):
            return CNCFMaturity.GRADUATED

        if self.criticality == CriticalityLevel.MEDIUM:
            # Incubating is acceptable only if recovery is fast and no users are affected
            if self.recovery_time_hours <= 4 and self.user_impact_percentage == 0:
                return CNCFMaturity.INCUBATING
            return CNCFMaturity.GRADUATED

        # Low criticality: sandbox acceptable with constraints
        return CNCFMaturity.SANDBOX


# Example infrastructure inventory
components = [
    InfraComponent("api-gateway", CriticalityLevel.CRITICAL, 0.5, 100),
    InfraComponent("dev-log-aggregator", CriticalityLevel.LOW, 24, 0),
    InfraComponent("staging-service-mesh", CriticalityLevel.MEDIUM, 4, 0),
    InfraComponent("feature-flag-service", CriticalityLevel.HIGH, 1, 75),
]

for component in components:
    required = component.min_required_maturity()
    print(f"{component.name}: requires at least a {required.value} project")
```

Building Abstraction Layers for Project Flexibility

When adopting incubating projects, architect your integration to minimize switching costs. Wrapper interfaces prevent vendor lock-in and enable seamless migration if a project stagnates or a superior alternative emerges. This architectural pattern proves essential when experimenting with sandbox-stage technologies in low-risk environments.

The abstraction layer strategy provides insurance against project abandonment, breaking API changes, or performance degradation. By decoupling your application logic from specific implementation details, you preserve the option to swap underlying technologies without cascading rewrites across your codebase.

```python
from abc import ABC, abstractmethod
from typing import Dict


class MetricsBackend(ABC):
    """Abstract interface for metrics collection"""

    @abstractmethod
    def record_counter(self, name: str, value: int, tags: Dict[str, str]):
        pass

    @abstractmethod
    def record_histogram(self, name: str, value: float, tags: Dict[str, str]):
        pass


class PrometheusBackend(MetricsBackend):
    """Graduated project: Prometheus"""

    def record_counter(self, name: str, value: int, tags: Dict[str, str]):
        # Prometheus-specific implementation
        pass

    def record_histogram(self, name: str, value: float, tags: Dict[str, str]):
        pass


class OpenTelemetryBackend(MetricsBackend):
    """Incubating project: OpenTelemetry"""

    def record_counter(self, name: str, value: int, tags: Dict[str, str]):
        # OpenTelemetry-specific implementation
        pass

    def record_histogram(self, name: str, value: float, tags: Dict[str, str]):
        pass


class VictoriaMetricsBackend(MetricsBackend):
    """Experimental backend: VictoriaMetrics (not a CNCF project; stands in for any early-stage option)"""

    def record_counter(self, name: str, value: int, tags: Dict[str, str]):
        # VictoriaMetrics-specific implementation
        pass

    def record_histogram(self, name: str, value: float, tags: Dict[str, str]):
        pass


# Application code depends on the abstraction, not the implementation
def process_request(metrics: MetricsBackend):
    metrics.record_counter("requests_total", 1, {"endpoint": "/api/users"})
    # Switching backends requires zero application code changes
```

💡 Pro Tip: Implement feature flags at the infrastructure level to enable gradual rollout of sandbox projects. Start with 5% of non-critical traffic, monitor error rates and performance metrics, then expand incrementally.

Isolation Strategies for Safe Experimentation

Deploy sandbox projects in dedicated namespaces or clusters that share no fate with production infrastructure. Use separate networking, observability stacks, and access controls. This containment ensures that experimental project failures—configuration errors, resource exhaustion, security vulnerabilities—cannot cascade into critical systems.

Physical or logical isolation extends beyond basic namespace separation. Dedicated node pools prevent resource contention between experimental and production workloads. Separate ingress controllers eliminate shared bottlenecks. Independent secrets management systems contain the blast radius of credential leaks. Network policies enforce strict boundaries, blocking lateral movement even if an experimental component becomes compromised.

Consider implementing a “sandbox expiration policy” that automatically decommissions experiments after 90 days unless explicitly renewed. This forcing function prevents experimental infrastructure from calcifying into undocumented production dependencies. Regular review cycles ensure sandbox projects either graduate to higher-maturity alternatives or get retired before accumulating technical debt.
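A sandbox expiration policy like the one described above can start as a small report that runs on a schedule. The sketch below assumes a hypothetical in-memory inventory of experiments; in practice the records might come from namespace labels or a service catalog, and decommissioning itself is left out.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

MAX_AGE = timedelta(days=90)  # the 90-day window from the policy above


@dataclass
class Experiment:
    name: str
    started: date
    renewed: Optional[date] = None  # date of the last explicit renewal, if any


def expired(experiments: List[Experiment], today: date) -> List[str]:
    """Return names of experiments whose 90-day clock has run out."""
    names = []
    for exp in experiments:
        # A renewal restarts the clock; otherwise count from the start date.
        clock_start = exp.renewed or exp.started
        if today - clock_start > MAX_AGE:
            names.append(exp.name)
    return names


inventory = [
    Experiment("dev-log-aggregator", started=date(2025, 1, 1)),
    Experiment("staging-mesh-trial", started=date(2025, 1, 1), renewed=date(2025, 4, 15)),
    Experiment("portal-poc", started=date(2025, 5, 1)),
]
print(expired(inventory, today=date(2025, 6, 1)))
```

Requiring an explicit renewal date, rather than silently extending, keeps a named owner attached to every experiment that outlives its window.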

The hybrid approach acknowledges that innovation requires calculated risk. By mapping maturity requirements to actual business impact and building appropriate guardrails, you create space for exploration without gambling on production stability. Teams gain hands-on experience with emerging technologies in controlled environments, building expertise that informs future architectural decisions. The next challenge becomes maintaining visibility into whether your chosen projects remain healthy investments or require reevaluation.

Monitoring the Landscape: Tracking Project Health and Momentum

Manual evaluation of CNCF projects becomes unsustainable once you’re tracking more than a handful of dependencies. Platform teams need automated monitoring systems that surface degrading project health before it impacts production infrastructure.

GitHub Metrics That Predict Trajectory

Star counts and forks are vanity metrics. Focus on time-series data that reveals momentum shifts: commit frequency, issue response times, and PR merge velocity. A project with 10k stars but declining weekly commits is less healthy than one with 2k stars and accelerating contributions.

Track the ratio of community contributors to vendor-employed maintainers. Projects dominated by a single company (>70% of commits) face existential risk if that sponsor pivots. Envoy’s health improved dramatically when contributions diversified beyond Lyft’s original engineering team.

Monitor pull request merge patterns as a leading indicator of project velocity. Healthy projects maintain consistent merge rates (20-50 PRs weekly for large projects) with review turnaround under 72 hours. When merge queues extend beyond a week, it signals maintainer capacity issues or architectural gridlock requiring extensive review cycles.

Analyze contributor churn alongside raw contributor counts. A project adding 30 new contributors quarterly while losing 25 existing ones shows weaker community retention than one gaining 10 while losing 2. High churn indicates poor onboarding, unclear contribution guidelines, or toxic community dynamics that eventually impact project sustainability.
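The churn comparison above reduces to two numbers per quarter: net contributor change and the ratio of departures to arrivals. A minimal sketch, using the figures from the paragraph (the function name and output shape are illustrative choices):

```python
from typing import Dict


def churn_summary(gained: int, lost: int) -> Dict[str, float]:
    """Summarize quarterly contributor movement.

    churn_ratio is departures per new contributor; lower means better retention.
    """
    if gained < 0 or lost < 0:
        raise ValueError("counts must be non-negative")
    if gained == 0:
        ratio = float("inf") if lost else 0.0
    else:
        ratio = round(lost / gained, 2)
    return {"net": gained - lost, "churn_ratio": ratio}


high_churn = churn_summary(gained=30, lost=25)
low_churn = churn_summary(gained=10, lost=2)
print(high_churn)
print(low_churn)
```

The project gaining 30 contributors looks busier on a raw-count dashboard, but it nets fewer people and loses most of what it gains, which is the pattern the paragraph warns about.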

```python
import os
from collections import Counter
from datetime import datetime, timedelta, timezone

import requests


def parse_ts(value):
    """Parse a GitHub ISO 8601 timestamp such as 2024-01-01T00:00:00Z."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def analyze_project_health(repo_owner, repo_name, github_token):
    """Analyze CNCF project health metrics from the GitHub API"""
    headers = {"Authorization": f"token {github_token}"}
    base_url = f"https://api.github.com/repos/{repo_owner}/{repo_name}"

    # Fetch commit activity (last 90 days). Only the first page is read,
    # so the count is capped at 100; paginate for busy repositories.
    since = datetime.now(timezone.utc) - timedelta(days=90)
    resp = requests.get(
        f"{base_url}/commits",
        headers=headers,
        params={"since": since.strftime("%Y-%m-%dT%H:%M:%SZ"), "per_page": 100},
        timeout=30,
    )
    resp.raise_for_status()
    commits = resp.json()

    # Contributor diversity: 1 minus the share of commits from the most
    # common email domain. Email domains are only a rough proxy for employer.
    emails = [c["commit"]["author"]["email"] for c in commits]
    authors = set(emails)
    domains = Counter(email.split("@")[-1] for email in emails)
    diversity_score = 1 - max(domains.values()) / len(emails) if emails else 0

    # Check issue response time. The issues endpoint also returns pull
    # requests, so filter those out.
    resp = requests.get(
        f"{base_url}/issues",
        headers=headers,
        params={"state": "closed", "per_page": 50},
        timeout=30,
    )
    resp.raise_for_status()
    issues = [i for i in resp.json() if "pull_request" not in i]

    response_times = []
    for issue in issues[:20]:
        if issue["comments"] == 0:
            continue
        comments = requests.get(issue["comments_url"], headers=headers, timeout=30).json()
        if not comments:
            continue
        created = parse_ts(issue["created_at"])
        first_response = parse_ts(comments[0]["created_at"])
        response_times.append((first_response - created).total_seconds() / 3600)

    avg_response_hours = sum(response_times) / len(response_times) if response_times else 0

    return {
        "commits_90d": len(commits),
        "unique_contributors": len(authors),
        "diversity_score": round(diversity_score, 2),
        "avg_issue_response_hours": round(avg_response_hours, 1),
        "health_status": "healthy" if len(commits) > 50 and avg_response_hours < 48 else "warning",
    }


# Monitor your critical dependencies
projects = [
    ("kubernetes", "kubernetes"),
    ("envoyproxy", "envoy"),
    ("containerd", "containerd"),
]

if __name__ == "__main__":
    # Read the token from the environment instead of hardcoding it
    token = os.environ["GITHUB_TOKEN"]
    for owner, repo in projects:
        health = analyze_project_health(owner, repo, token)
        print(f"{repo}: {health['health_status']} - {health['commits_90d']} commits, "
              f"{health['avg_issue_response_hours']}h response time")
```

💡 Pro Tip: Set up weekly cron jobs that dump metrics to TimescaleDB. Visualization in Grafana reveals inflection points, like when Fluentd’s commit velocity dropped 60% in Q3 2022, signaling the shift toward Fluent Bit.

Community Health Signals From TOC Reviews

CNCF Technical Oversight Committee due diligence reports contain signals that GitHub metrics miss. Review documents assess governance maturity, security posture, and adopter diversity. A project stalling at Incubating level for 18+ months despite strong GitHub metrics often has hidden issues: insufficient end-user adoption, governance conflicts, or architectural limitations.

Scan TOC meeting notes for red flags: repeated requests for clearer roadmaps, concerns about maintainer burnout, or questions about vendor control. These surface 6-12 months before public problems emerge. Pay attention to Security TAG assessments that flag incomplete threat models or slow CVE response times—indicators that compound risk over time.

End-user surveys provide ground truth that code metrics cannot capture. CNCF’s annual surveys reveal production adoption patterns, operational pain points, and migration trends. When survey respondents report declining satisfaction or increasing operational burden with a Graduated project, start evaluating alternatives regardless of GitHub activity levels.

Vendor Diversity as Sustainability Indicator

Single-vendor dominance creates dependency risk that technical metrics obscure. Examine MAINTAINERS files and commit attribution to identify concentration patterns. Projects with three or more organizations contributing 15%+ of commits demonstrate healthier governance and reduced abandonment risk.
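The concentration check described above can be sketched in a few lines once each commit has been attributed to an organization. How that attribution is done (MAINTAINERS files, DevStats affiliations, email domains) is outside this sketch, and the organization names below are placeholders.

```python
from collections import Counter
from typing import Dict, Iterable


def commit_shares(commit_orgs: Iterable[str]) -> Dict[str, float]:
    """Map each organization to its fraction of the attributed commits."""
    counts = Counter(commit_orgs)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {org: n / total for org, n in counts.items()}


def has_healthy_diversity(shares: Dict[str, float],
                          min_share: float = 0.15,
                          min_orgs: int = 3) -> bool:
    """True when at least min_orgs organizations each hold min_share or more."""
    significant = sum(1 for share in shares.values() if share >= min_share)
    return significant >= min_orgs


# One entry per commit, labeled with the contributing organization.
balanced = ["org-a"] * 50 + ["org-b"] * 20 + ["org-c"] * 20 + ["org-d"] * 10
dominated = ["org-a"] * 80 + ["org-b"] * 15 + ["org-c"] * 5

print(has_healthy_diversity(commit_shares(balanced)))
print(has_healthy_diversity(commit_shares(dominated)))
```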

Review vendor funding commitments through DevStats affiliation data. When a primary sponsor reduces engineering allocation by 30%+ quarter-over-quarter, expect roadmap delays and slower security patches within 6-9 months. Cilium’s transition from Isovalent-dominated to multi-vendor contributions (Google, AWS, Microsoft) strengthened its long-term viability despite introducing temporary coordination overhead.

Automating Early Warning Systems

Configure alerts that trigger when multiple indicators degrade simultaneously: commits down 40% quarter-over-quarter, issue response time exceeding 72 hours, and maintainer count dropping below three active contributors. Individual metrics fluctuate, but correlated decline signals fundamental problems.
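A correlated-decline alert along these lines might look like the following sketch. The three thresholds are the ones named above; the snapshot structure and the choice to alert when at least two indicators degrade together are assumptions to adjust for your own tolerance.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class QuarterSnapshot:
    commits: int
    avg_issue_response_hours: float
    active_maintainers: int


def degraded_indicators(prev: QuarterSnapshot, curr: QuarterSnapshot) -> List[str]:
    """List which of the three health indicators have crossed their threshold."""
    degraded = []
    if prev.commits > 0 and (prev.commits - curr.commits) / prev.commits >= 0.40:
        degraded.append("commits down 40%+ quarter-over-quarter")
    if curr.avg_issue_response_hours > 72:
        degraded.append("issue response time above 72 hours")
    if curr.active_maintainers < 3:
        degraded.append("fewer than three active maintainers")
    return degraded


def should_alert(prev: QuarterSnapshot, curr: QuarterSnapshot,
                 min_triggers: int = 2) -> bool:
    """Alert only on correlated decline, not on a single noisy metric."""
    return len(degraded_indicators(prev, curr)) >= min_triggers


last_quarter = QuarterSnapshot(commits=100, avg_issue_response_hours=24, active_maintainers=5)
this_quarter = QuarterSnapshot(commits=55, avg_issue_response_hours=80, active_maintainers=4)
print(degraded_indicators(last_quarter, this_quarter))
print(should_alert(last_quarter, this_quarter))
```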

Build scoring models that weight metrics by project maturity level. Sandbox projects naturally show volatile commit patterns, while Graduated projects maintaining Kubernetes integration points require sustained maintenance velocity. A 50% commit drop means different things for experimental Sandbox projects versus production-critical Graduated infrastructure.
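One simple way to weight a metric by maturity is to give each tier its own tolerance before a drop counts as alarming. The tolerance values below are illustrative assumptions, not CNCF guidance; the point is only that the same 50% drop is judged differently per tier.

```python
# Fraction of quarter-over-quarter commit decline tolerated per tier.
# These numbers are assumptions chosen for illustration.
COMMIT_DROP_TOLERANCE = {
    "sandbox": 0.60,     # volatile commit patterns are expected
    "incubating": 0.50,
    "graduated": 0.30,   # sustained maintenance velocity is expected
}


def commit_drop_is_alarming(maturity: str, prev_commits: int, curr_commits: int) -> bool:
    """Compare the observed commit decline with the tier's tolerance."""
    if prev_commits <= 0:
        return False  # no baseline to compare against
    drop = (prev_commits - curr_commits) / prev_commits
    return drop >= COMMIT_DROP_TOLERANCE[maturity]


# The same 50% drop, judged per tier.
for tier in ("sandbox", "graduated"):
    print(tier, commit_drop_is_alarming(tier, prev_commits=200, curr_commits=100))
```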

With automated monitoring in place, you’ll identify when Sandbox projects gain momentum worth betting on—and when Graduated projects enter maintenance mode, requiring migration planning.

Migration Strategies: Moving Between Maturity Levels

Project maturity changes force migration decisions. A sandbox project you adopted reaches graduation and competes with your existing solution. A graduated project you depend on enters maintenance mode. An incubating project stalls while alternatives gain momentum. Each scenario demands a systematic approach to minimize production risk.

Replacing Deprecated Projects

When a sandbox project loses maintainer support or fails to progress, identify graduated alternatives before technical debt compounds. Start by auditing integration points: API calls, configuration files, monitoring dashboards, CI/CD pipelines. Map each integration to equivalent functionality in the replacement project.

Establish API compatibility layers early. If your application calls the deprecated project’s REST endpoints, build an adapter that translates requests to the new project’s API format. This abstraction isolates migration complexity from your core business logic and enables gradual rollout.
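The adapter idea can be sketched as follows. Every name here is hypothetical: `save`/`load` stand in for whatever interface your application already calls on the deprecated project, and `put_object`/`get_object` stand in for the replacement project's client. The in-memory client exists only so the example runs on its own.

```python
from typing import Protocol


class NewStoreClient(Protocol):
    """Shape of the replacement project's client (assumed for illustration)."""

    def put_object(self, bucket: str, key: str, body: bytes) -> None: ...
    def get_object(self, bucket: str, key: str) -> bytes: ...


class LegacyStoreAdapter:
    """Keeps the legacy save/load interface while delegating to the new client."""

    def __init__(self, client: NewStoreClient, bucket: str):
        self._client = client
        self._bucket = bucket

    def save(self, path: str, data: bytes) -> None:
        # Legacy paths start with "/"; the new API is assumed to use bare keys.
        self._client.put_object(self._bucket, path.lstrip("/"), data)

    def load(self, path: str) -> bytes:
        return self._client.get_object(self._bucket, path.lstrip("/"))


class InMemoryClient:
    """Stand-in for the real client so the sketch is self-contained."""

    def __init__(self):
        self.objects = {}

    def put_object(self, bucket, key, body):
        self.objects[(bucket, key)] = body

    def get_object(self, bucket, key):
        return self.objects[(bucket, key)]


client = InMemoryClient()
store = LegacyStoreAdapter(client, bucket="migrated")
store.save("/reports/q1.json", b"{}")
print(store.load("/reports/q1.json"))
```

Application code keeps calling `save` and `load`, so swapping the backing project becomes a change to one class rather than to every call site.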

Test data portability immediately. Export production data from the existing system using whatever export mechanisms exist—API dumps, database backups, configuration snapshots. Verify the replacement project can import this data with acceptable fidelity. Identify gaps in schema compatibility, missing fields, or semantic differences that require transformation logic.
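A small round-trip check makes those gaps visible early. The sketch below compares one exported record with the same record after it has been imported into the replacement system and read back; the record shape and field names are made up for illustration.

```python
def fidelity_report(exported, reimported):
    """Compare one exported record with its re-imported counterpart."""
    missing = sorted(set(exported) - set(reimported))
    added = sorted(set(reimported) - set(exported))
    changed = sorted(
        key
        for key in exported
        if key in reimported and exported[key] != reimported[key]
    )
    return {"missing": missing, "changed": changed, "added": added}


# Hypothetical records: the new system drops "labels", stores ttl as a
# number instead of a string, and adds its own "created_at" field.
exported = {"id": 7, "name": "svc-a", "ttl": "30s", "labels": ["prod"]}
reimported = {"id": 7, "name": "svc-a", "ttl": 30, "created_at": "2024-01-01"}

print(fidelity_report(exported, reimported))
```

Fields listed under `missing` and `changed` are the ones that need transformation logic or an explicit decision to drop them.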

Incremental Migration with Feature Flags

Never execute migrations as big-bang deployments. Implement feature flags that route traffic between old and new systems based on configurable percentages or user segments. Start with synthetic traffic or internal users at 1%, monitor error rates and performance metrics, then expand gradually.

Structure flags at the integration boundary, not scattered throughout application code. A single toggle controlling “use legacy storage backend vs new storage backend” proves easier to manage than dozens of conditional statements. Measure decision latency to ensure feature flag evaluation doesn’t introduce performance regression.
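A percentage rollout at a single integration boundary might look like the sketch below. It hashes a stable key (a user or tenant ID, for example) into one of 100 buckets so the same caller always lands on the same backend. The `StorageRouter` class and the backend objects are hypothetical; in practice a feature flag service usually supplies the percentage.

```python
import hashlib


def rollout_bucket(key: str) -> int:
    """Map a key to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def use_new_backend(key: str, rollout_percent: int) -> bool:
    """True when the key falls inside the current rollout percentage."""
    return rollout_bucket(key) < rollout_percent


class StorageRouter:
    """Single toggle at the integration boundary: legacy vs new backend."""

    def __init__(self, legacy, new, rollout_percent=1):
        self.legacy = legacy
        self.new = new
        self.rollout_percent = rollout_percent

    def backend_for(self, key: str):
        if use_new_backend(key, self.rollout_percent):
            return self.new
        return self.legacy


router = StorageRouter(legacy="legacy-backend", new="new-backend", rollout_percent=1)
print(router.backend_for("user-42"))
```

Because buckets are stable, raising `rollout_percent` only ever adds callers to the new backend, and setting it to 0 sends everyone back to the legacy path without a deployment.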

Run both systems in parallel during the migration window. Shadow traffic to the new project while serving responses from the existing one, and compare outputs for consistency. This shadowing pattern, combined with dual writes where the new system must hold the same state, catches semantic differences before they impact users. Log discrepancies for analysis but don’t block production traffic.

💡 Pro Tip: Implement correlation IDs that flow through both old and new systems during parallel operation. When investigating issues, these identifiers let you trace identical requests through both code paths to pinpoint behavioral differences.
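The parallel-run comparison with correlation IDs can be sketched as below. The handler signatures and the in-memory `discrepancies` list are assumptions made for illustration; a real setup would typically issue the shadow call asynchronously and ship discrepancies to your logging pipeline.

```python
import logging
import uuid

logger = logging.getLogger("migration.shadow")


def serve_with_shadow(request, legacy_handler, new_handler, discrepancies):
    """Serve from the legacy system; shadow the request to the new one."""
    correlation_id = str(uuid.uuid4())
    primary = legacy_handler(request, correlation_id)
    try:
        shadow = new_handler(request, correlation_id)
        if shadow != primary:
            discrepancies.append(
                {"id": correlation_id, "legacy": primary, "new": shadow}
            )
            logger.warning("shadow mismatch correlation_id=%s", correlation_id)
    except Exception as exc:
        # A failing shadow path must never affect the user-facing response.
        discrepancies.append({"id": correlation_id, "error": repr(exc)})
        logger.warning("shadow error correlation_id=%s", correlation_id)
    return primary


def legacy(request, correlation_id):
    return {"total": 10}


def replacement(request, correlation_id):
    return {"total": 11}


found = []
print(serve_with_shadow({"order": 1}, legacy, replacement, found))
print(len(found))
```

The caller always receives the legacy response; mismatches and shadow failures are only recorded, each tagged with the ID that both systems saw.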

Rollback Strategies and Testing

Build rollback capability before starting migration. Ensure feature flags can instantly revert to the previous system without deployment. Test rollback procedures under load—the ability to revert matters most when systems are stressed.

Create migration-specific integration tests that validate critical paths through both systems. These tests should cover edge cases discovered during your API compatibility analysis: unusual data formats, deprecated fields still in use, race conditions in distributed operations. Run these tests continuously as you increase traffic to the new system.

Document rollback triggers explicitly: error rate thresholds, latency percentiles, data consistency violations. Establish automated monitoring that detects these conditions and alerts the team immediately, with runbooks describing revert procedures.
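Writing the triggers down as data keeps the runbook and the monitoring in agreement. In the sketch below the threshold values and metric key names are placeholders to be replaced with your own service-level objectives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackTriggers:
    # Placeholder thresholds; substitute the values from your own runbook.
    max_error_rate: float = 0.01
    max_p99_latency_ms: float = 500.0
    max_consistency_violations: int = 0


def breached_triggers(metrics, triggers=RollbackTriggers()):
    """Return the names of every rollback trigger the metrics exceed."""
    breached = []
    if metrics["error_rate"] > triggers.max_error_rate:
        breached.append("error_rate")
    if metrics["p99_latency_ms"] > triggers.max_p99_latency_ms:
        breached.append("p99_latency_ms")
    if metrics["consistency_violations"] > triggers.max_consistency_violations:
        breached.append("consistency_violations")
    return breached


current = {"error_rate": 0.004, "p99_latency_ms": 820.0, "consistency_violations": 0}
print(breached_triggers(current))  # ['p99_latency_ms']
```

A non-empty result is the signal to alert the team and follow the documented revert procedure.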

The next section provides a framework for systematically evaluating CNCF projects before adoption, incorporating maturity assessment into your decision process.

Building Your CNCF Evaluation Framework

A repeatable evaluation framework transforms ad-hoc technology decisions into strategic choices aligned with your organization’s risk tolerance and operational capabilities. This framework should be codified, version-controlled, and revisited quarterly as the CNCF landscape evolves.

Maturity Level Scoring Model

Create a weighted scoring matrix that evaluates projects across four dimensions:

Technical fit (40%): API compatibility with existing infrastructure, observability integration, resource requirements, and performance characteristics under your workload patterns.

Operational readiness (30%): Quality of documentation, availability of commercial support, ease of upgrades, and backup/recovery procedures.

Community health (20%): Contributor diversity, release velocity, issue resolution time, and maintainer responsiveness.

Risk tolerance (10%): Alignment with your organization’s appetite for breaking changes, vendor lock-in concerns, and compliance requirements.

Assign each dimension a score from 1 to 5, then calculate the weighted average. Graduated projects should score 4+ in every dimension for production infrastructure. Incubating projects can proceed with a weighted average of 3.5+ if the technical fit is exceptional. Sandbox projects require a weighted average of 4.5+ and explicit executive sponsorship for experimental deployments.
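The scoring arithmetic can be sketched as follows, using the four weights listed above. The code reads "4+ in all categories" as a per-dimension floor for Graduated projects and the other two thresholds as weighted averages; that reading is an assumption. Judgment calls such as "exceptional technical fit" and executive sponsorship are left to humans.

```python
# Weights in percent, taken from the four dimensions above.
WEIGHTS = {
    "technical_fit": 40,
    "operational_readiness": 30,
    "community_health": 20,
    "risk_tolerance": 10,
}


def weighted_score(scores):
    """Weighted average of the 1-5 scores across all four dimensions."""
    return sum(WEIGHTS[dim] * scores[dim] for dim in WEIGHTS) / 100


def meets_bar(maturity, scores):
    """Apply the numeric threshold for the given maturity level."""
    if maturity == "graduated":
        return all(scores[dim] >= 4 for dim in WEIGHTS)
    if maturity == "incubating":
        return weighted_score(scores) >= 3.5
    if maturity == "sandbox":
        return weighted_score(scores) >= 4.5
    raise ValueError(f"unknown maturity level: {maturity}")


candidate = {
    "technical_fit": 5,
    "operational_readiness": 3,
    "community_health": 4,
    "risk_tolerance": 3,
}
print(weighted_score(candidate))  # (200 + 90 + 80 + 30) / 100 = 4.0
print(meets_bar("incubating", candidate), meets_bar("sandbox", candidate))
```

The same scores clear the Incubating bar but not the Sandbox one, which reflects the intent that less mature projects must earn a higher score before adoption.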

Calibrating Organizational Risk Tolerance

Map your infrastructure to three risk tiers. Tier 1 (revenue-critical systems) accepts only Graduated projects with proven enterprise adoption. Tier 2 (internal tools, non-critical services) allows Incubating projects with active development teams. Tier 3 (experimental environments, developer tooling) permits Sandbox projects with clear exit criteria.
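The tier mapping is simple enough to encode as a lookup that a CI check or admission policy could consult. The sketch below captures only the minimum maturity level per tier; the additional conditions (proven enterprise adoption, active development, exit criteria) still need human review. The names are hypothetical.

```python
from enum import IntEnum


class Maturity(IntEnum):
    SANDBOX = 1
    INCUBATING = 2
    GRADUATED = 3


# Minimum maturity level accepted by each risk tier described above.
MIN_MATURITY_BY_TIER = {
    1: Maturity.GRADUATED,   # revenue-critical systems
    2: Maturity.INCUBATING,  # internal tools, non-critical services
    3: Maturity.SANDBOX,     # experimental environments, developer tooling
}


def is_permitted(tier, maturity):
    """True when a project of this maturity may be used in the given tier."""
    return maturity >= MIN_MATURITY_BY_TIER[tier]


print(is_permitted(1, Maturity.INCUBATING))  # False
print(is_permitted(3, Maturity.SANDBOX))     # True
```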

Document this mapping in your architecture decision records (ADRs) using a standard template: problem statement, evaluated alternatives, chosen project with maturity level, risks identified, and mitigation strategies.

Quarterly Landscape Review

Schedule 90-day reviews to reassess adopted projects and identify emerging alternatives. Track maturity level changes: a Sandbox project advancing to Incubating validates early adoption, while a stalled project signals that migration planning should begin. Monitor security advisories, breaking API changes, and maintainer transitions.
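Tracking level changes between reviews can be as simple as diffing two snapshots of your adopted projects. The sketch below assumes you record each project's level at every review; the project names are placeholders, and how you obtain the current levels (for example from the CNCF landscape data) is left open.

```python
LADDER = {"archived": 0, "sandbox": 1, "incubating": 2, "graduated": 3}


def maturity_changes(previous, current):
    """List (project, old, new, direction) for every project whose level moved."""
    changes = []
    for project in sorted(previous):
        old = previous[project]
        new = current.get(project)
        if new is None:
            changes.append((project, old, None, "missing"))
        elif new != old:
            direction = "advanced" if LADDER[new] > LADDER[old] else "regressed"
            changes.append((project, old, new, direction))
    return changes


last_review = {"proj-a": "sandbox", "proj-b": "incubating", "proj-c": "graduated"}
this_review = {"proj-a": "incubating", "proj-b": "archived", "proj-c": "graduated"}

for change in maturity_changes(last_review, this_review):
    print(change)
```

Entries marked `regressed` or `missing` are the ones to bring to the review meeting first.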

This discipline prevents evaluation fatigue while ensuring your stack evolves with the ecosystem rather than ossifying around outdated choices.

With this framework in place, your CNCF adoption strategy becomes defensible, repeatable, and aligned with business objectives rather than engineering enthusiasm.

Key Takeaways

- Default to graduated projects for critical path infrastructure; limit sandbox adoption to isolated, non-critical domains with clear abstraction boundaries

- Implement automated monitoring of project health metrics (commit velocity, committer diversity, TOC status) to identify risks before they impact production

- Build abstraction layers around CNCF integrations to enable project swapping without major refactoring when maturity levels change or projects get archived

- Establish a formal evaluation framework that scores projects on maturity, vendor diversity, and community health before adoption decisions