Building Production-Ready Spark on Kubernetes with the Operator Pattern

You’ve run spark-submit hundreds of times. You’ve written wrapper scripts to poll job status. You’ve SSH’d into edge nodes at 2 AM because a critical ETL pipeline silently failed and left no trace in your monitoring system. You’ve explained to stakeholders why you can’t give them a simple dashboard showing which Spark jobs are running, which failed, and why—because spark-submit treats every job as an ephemeral, fire-and-forget operation.

This is the reality of running Spark on Kubernetes with the native submission mechanism. It works—technically. You can point spark-submit at your cluster, it spins up driver and executor pods, your code runs, and if everything goes well, you get results. But production data engineering isn’t about things going well. It’s about handling the 3 AM failures, maintaining audit trails, enabling self-service for data scientists, and integrating with CI/CD pipelines that expect declarative, version-controlled infrastructure.

The gap is fundamental: spark-submit is an imperative tool in a world that’s moved to declarative infrastructure. You can’t kubectl get sparkapplications to see what’s running. You can’t store job definitions in Git and have them automatically reconcile to desired state. You can’t apply Kubernetes-native RBAC, resource quotas, or admission control to a Spark job as a unit, because only its pods exist as Kubernetes objects. The job itself lives outside the Kubernetes API, managed through a separate mechanism.

The Spark Operator bridges this gap by treating Spark applications as first-class Kubernetes resources. But before we explore how it works, we need to understand exactly what breaks when you try to run production Spark workloads with spark-submit alone.

Why spark-submit on Kubernetes Isn’t Enough

Apache Spark has supported Kubernetes as a cluster manager since version 2.3, allowing you to run spark-submit with --master k8s://... and deploy driver and executor pods directly. While this native integration works for experimentation and one-off jobs, it falls short of production requirements in several critical ways.

The Imperative Deployment Problem

Native spark-submit operates imperatively. You execute a command, Spark creates pods, the job runs, and pods terminate. There’s no declarative manifest, no version-controlled state, and no reconciliation loop. If a driver pod fails due to node eviction or resource pressure, the job simply dies—there’s no automatic retry mechanism. You’re responsible for detecting failures and resubmitting jobs manually, which doesn’t scale in production environments running dozens or hundreds of daily jobs.

This imperative model also breaks GitOps workflows. You can’t represent a Spark job as a Kubernetes resource that Flux or ArgoCD manages. Instead, you’re stuck with shell scripts, cron jobs, or external orchestrators like Airflow calling spark-submit repeatedly. Configuration drift becomes inevitable when job parameters live in CI/CD scripts rather than version-controlled manifests.

Missing Lifecycle Management

Kubernetes excels at lifecycle management through controllers and custom resources, but spark-submit bypasses this entirely. Jobs submitted via spark-submit don’t integrate with native Kubernetes patterns like rolling updates, canary deployments, or declarative scheduling policies. Want to run a job on a specific node pool using taints and tolerations? You’ll pass those as submit arguments, not declarative pod specs. Need to enforce resource quotas or priority classes consistently? You’re manually adding flags to every invocation.

The lack of job history is equally problematic. Once pods terminate, you lose visibility into what ran, when it ran, and why it failed unless you’ve built separate logging infrastructure. Kubernetes Events expire after an hour by default. Pod logs disappear when pods are garbage collected. There’s no built-in mechanism to query “show me all Spark jobs from the last week” or “what was the configuration of the job that failed yesterday?”

Observability and Debugging Gaps

Native Spark on Kubernetes doesn’t expose job state through Kubernetes APIs. You can’t run kubectl get sparkjobs or inspect job status with standard tooling. Prometheus metrics require custom exporters. The Spark UI runs temporarily on the driver pod and becomes inaccessible after job completion unless you configure external history servers manually—another operational burden.

Resource management also suffers. Dynamic allocation works differently on Kubernetes than on YARN or standalone clusters, and tuning executor scaling requires deep understanding of both Spark and Kubernetes autoscaling mechanisms. Without a control plane managing these concerns, you’re optimizing parameters job-by-job rather than enforcing platform-wide policies.

The Spark Operator addresses these gaps by implementing the Kubernetes Operator pattern, bringing declarative configuration, automated lifecycle management, and first-class observability to Spark workloads.

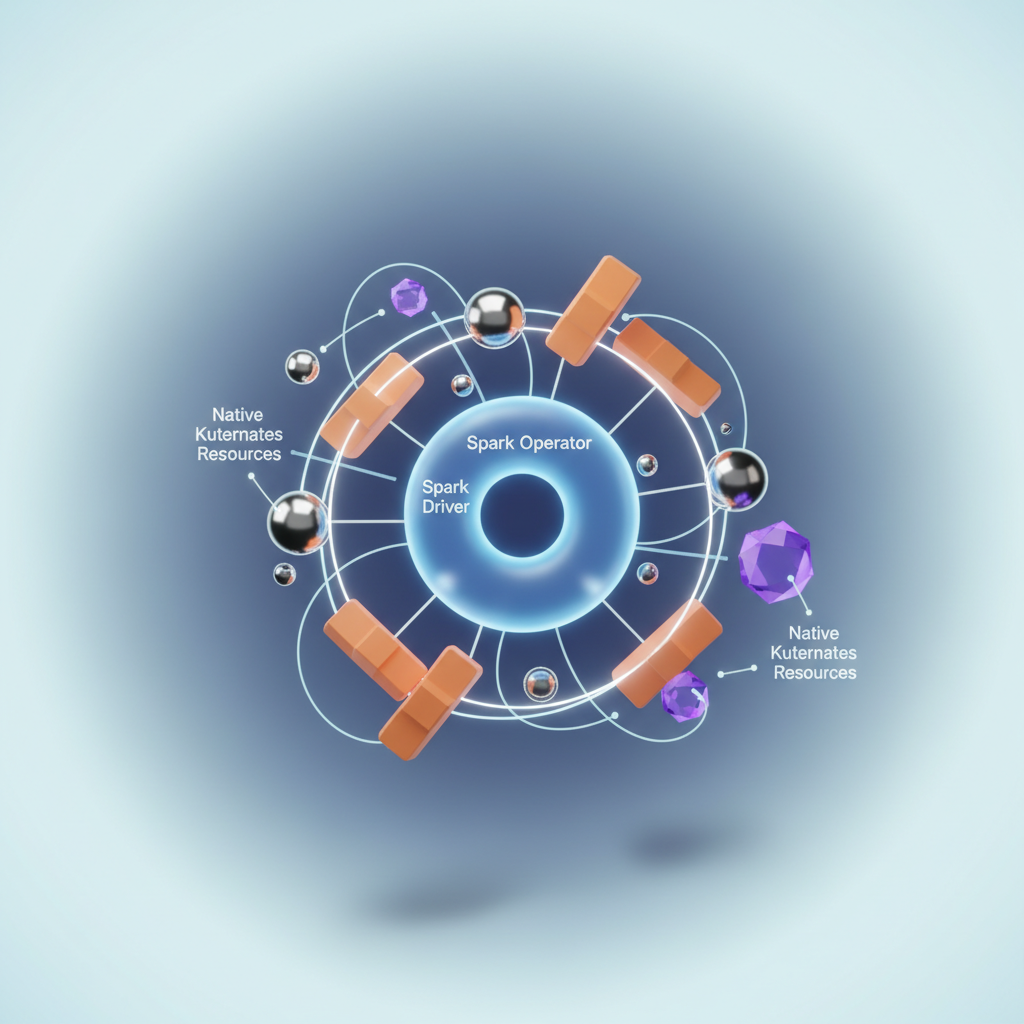

Understanding the Spark Operator Architecture

The Spark Operator transforms how you run Apache Spark on Kubernetes by leveraging the Operator pattern—a design principle that extends Kubernetes’ native capabilities to manage domain-specific workloads. Instead of treating Spark jobs as opaque pods or deployments, the Operator pattern brings Spark’s lifecycle management directly into the Kubernetes API surface.

Custom Resource Definitions: Spark as a First-Class Citizen

At the core of the Spark Operator lies a Custom Resource Definition (CRD) called SparkApplication. CRDs extend the Kubernetes API to represent application-specific resources—in this case, Spark jobs—as native Kubernetes objects. When you create a SparkApplication resource, you’re declaring the desired state of a Spark job using the same YAML manifests you’d use for Deployments or Services.

The CRD defines the schema for Spark-specific configurations: driver and executor specifications, dependency management, monitoring endpoints, and runtime parameters. This declarative approach replaces imperative spark-submit commands with version-controlled manifests that integrate seamlessly with GitOps workflows.

The Reconciliation Loop: From Desired State to Running Job

The Spark Operator runs as a controller within your Kubernetes cluster, continuously monitoring SparkApplication resources through a reconciliation loop. This control loop compares the desired state (your manifest) against the actual state (running pods, services, and ConfigMaps) and takes corrective action to eliminate drift.

When you apply a SparkApplication manifest, the controller springs into action: it submits the application so that the driver pod is created with the container specification you declared, exposes the Spark UI through a service, injects configuration through ConfigMaps, and tracks driver and executor pods as they appear. If an executor pod fails, the Spark driver requests a replacement and the operator records the change in the application status. If the driver itself fails, the operator applies your retry and failure policies.

This reconciliation model provides self-healing capabilities that spark-submit lacks. The operator resubmits applications after transient failures and node loss, within the retry limits you set, without manual intervention.
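The control loop described above can be sketched in a few lines of Python. This is a toy model, not the operator's implementation: the `Desired` and `Observed` types, the phase names, and the action strings are all hypothetical and exist only to show the compare-then-act shape of reconciliation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Desired:
    """Hypothetical, simplified stand-in for a SparkApplication spec."""
    name: str
    restart_on_failure: bool
    max_retries: int


@dataclass(frozen=True)
class Observed:
    """Hypothetical snapshot of what a controller sees in the cluster."""
    driver_phase: str  # "Missing", "Running", "Succeeded", or "Failed"
    attempts: int      # submissions made so far, including the first


def reconcile(desired: Desired, observed: Observed) -> str:
    """Return the single next action that moves observed state toward desired."""
    if observed.driver_phase == "Missing" and observed.attempts == 0:
        return "submit"
    if observed.driver_phase == "Running":
        return "wait"
    if observed.driver_phase == "Succeeded":
        return "mark-completed"
    if observed.driver_phase in ("Failed", "Missing"):
        # A driver that vanished after submission is treated like a failure.
        if desired.restart_on_failure and observed.attempts <= desired.max_retries:
            return "resubmit"
        return "mark-failed"
    return "wait"  # unknown phase: do nothing until the next pass


app = Desired(name="data-pipeline", restart_on_failure=True, max_retries=3)
print(reconcile(app, Observed(driver_phase="Failed", attempts=1)))
```

Because `reconcile` is a pure function of desired and observed state, running it repeatedly is safe, which is the property that lets a real controller recover after its own restarts.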

SparkApplication Lifecycle and State Transitions

A SparkApplication progresses through lifecycle states that include SUBMITTED, RUNNING, COMPLETED, FAILED, and INVALIDATING, along with intermediate states for cases such as submission failures and pending reruns. The operator updates the resource’s status subresource as the job transitions between states, providing observability through standard kubectl get sparkapplications commands.

The status field captures rich metadata: application start and completion times, driver pod names, executor state counts, and failure reasons. This information becomes queryable through the Kubernetes API, enabling programmatic monitoring and alerting without parsing driver logs or connecting to external tracking systems.
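For tooling that polls application state, it helps to separate terminal states from in-flight ones. The sketch below covers only the states named above and assumes COMPLETED and FAILED are the terminal ones; the enum and helper are illustrative names, not part of any operator client library.

```python
from enum import Enum


class AppState(str, Enum):
    SUBMITTED = "SUBMITTED"
    RUNNING = "RUNNING"
    COMPLETED = "COMPLETED"
    FAILED = "FAILED"
    INVALIDATING = "INVALIDATING"


# Assumption for this sketch: only these two states mean "finished".
TERMINAL = {AppState.COMPLETED, AppState.FAILED}


def is_terminal(state: str) -> bool:
    """True when the application has finished; unrecognized strings count as in-flight."""
    try:
        return AppState(state) in TERMINAL
    except ValueError:
        return False


print(is_terminal("RUNNING"), is_terminal("FAILED"))
```

Treating unrecognized states as in-flight keeps a poller waiting instead of reporting a false completion if the operator reports a state this list does not cover.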

💡 Pro Tip: The SparkApplication status persists even after pod termination, giving you a historical record of job executions without relying on external metadata stores.

RBAC Integration and Service Account Management

The Spark Operator integrates tightly with Kubernetes Role-Based Access Control (RBAC) through service accounts. Each SparkApplication can specify a service account that determines the permissions available to driver and executor pods. This enables least-privilege security models where Spark jobs access only the specific secrets, ConfigMaps, or persistent volumes they require.

The operator itself requires cluster-level permissions to create and manage pods, services, and ConfigMaps across namespaces. However, the SparkApplications you submit run with the limited permissions of their assigned service accounts, creating a clear security boundary between the control plane and data plane.

With this architectural foundation in place, you’re ready to deploy the Spark Operator into your cluster and start converting spark-submit workflows into declarative SparkApplication manifests.

Deploying Spark Operator with Helm

The Spark Operator transforms your Kubernetes cluster into a declarative Spark platform. Installing it requires more than a simple helm install—you need to make deliberate choices about scope, tenancy, and webhook configuration that will affect how your teams submit and manage jobs.

Installing the Operator

Add the Kubeflow Helm repository and install the operator:

```bash
helm repo add spark-operator https://kubeflow.github.io/spark-operator
helm repo update

helm install spark-operator spark-operator/spark-operator \
  --namespace spark-operator \
  --create-namespace \
  --set webhook.enable=true \
  --set webhook.port=8080
```

This default installation creates a cluster-scoped operator that watches all namespaces for SparkApplication and ScheduledSparkApplication resources. The webhook validates your manifests when the API server admits them, before they are persisted, catching configuration errors like invalid driver memory specifications or missing image references.

The operator runs as a Deployment with a single replica by default. It reconciles custom resources through a control loop that watches for changes, creates driver pods, and monitors job lifecycle. The webhook component runs as part of the same pod, listening on the specified port for admission requests from the API server.

Namespace-Scoped vs Cluster-Scoped Deployment

For multi-tenant clusters where different teams operate in isolated namespaces, a namespace-scoped operator provides better security boundaries:

```bash
helm install spark-operator spark-operator/spark-operator \
  --namespace data-team-prod \
  --create-namespace \
  --set sparkJobNamespace=data-team-prod \
  --set webhook.enable=true \
  --set rbac.create=true
```

The sparkJobNamespace parameter restricts the operator to managing Spark applications only in the specified namespace. This prevents privilege escalation where a compromised operator in one team’s namespace could affect another team’s workloads. Each team deploys their own operator instance with isolated RBAC permissions scoped to their namespace.

Cluster-scoped operators work well for platform teams managing Spark infrastructure centrally. They reduce operational overhead—one operator instance handles all namespaces—but require careful RBAC configuration to prevent unauthorized access to sensitive workloads. The tradeoff is simplicity versus isolation: cluster-scoped gives you a single control plane, while namespace-scoped gives you blast radius containment.

In hybrid scenarios, you might run a cluster-scoped operator for shared development environments and namespace-scoped operators for production workloads. This balances convenience for low-stakes experimentation with strict isolation for business-critical pipelines.

💡 Pro Tip: Use namespace-scoped operators when teams manage their own Spark deployments. Use cluster-scoped operators when a central platform team provisions Spark as a service.

Configuring the Mutating Webhook

The operator’s webhook intercepts SparkApplication creation requests and injects default values, like service accounts and driver/executor pod configurations. Verify webhook readiness:

```bash
kubectl get mutatingwebhookconfigurations

kubectl describe mutatingwebhookconfiguration \
  spark-operator-webhook-config
```

The webhook configuration should show a CA bundle and a service reference pointing to spark-operator-webhook in the operator namespace. If the webhook fails, pods will hang in Pending state with validation errors. Common webhook issues include certificate expiration, service endpoint unavailability, or firewall rules blocking API server access to the webhook service.

The webhook serves two purposes: mutation and validation. Mutation injects default configurations like spark.kubernetes.executor.request.cores if you don’t specify them explicitly. Validation rejects malformed specs before they create failed driver pods, saving you debugging time. You can disable the webhook with --set webhook.enable=false, but you lose these guardrails and must ensure your manifests are complete.
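If you do run without the webhook, a small pre-flight check in CI can stand in for some of the lost guardrails. The sketch below is a hypothetical client-side check, not the operator's validation logic: the function name, the two rules it enforces, and the memory pattern are assumptions chosen to mirror the errors mentioned above.

```python
import re

# Spark-style size strings such as "512m" or "4g" (assumed pattern for this sketch).
MEMORY_PATTERN = re.compile(r"^\d+[kmgt]b?$", re.IGNORECASE)


def validate_spark_application(manifest: dict) -> list:
    """Return human-readable problems; an empty list means the checks passed."""
    problems = []
    spec = manifest.get("spec", {})
    if not spec.get("image"):
        problems.append("spec.image is missing")
    for role in ("driver", "executor"):
        memory = spec.get(role, {}).get("memory")
        if memory is not None and not MEMORY_PATTERN.match(str(memory)):
            problems.append(f"spec.{role}.memory {memory!r} is not a valid size")
    return problems


bad = {"spec": {"driver": {"memory": "two gigs"}, "executor": {"memory": "4g"}}}
for problem in validate_spark_application(bad):
    print(problem)
```

Returning a list of problems rather than raising on the first one lets a CI job report every issue in a manifest in a single run.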

Validating Operator Installation

Confirm the operator controller is running and watching for custom resources:

```bash
kubectl get deployment -n spark-operator
kubectl logs -n spark-operator \
  deployment/spark-operator-controller \
  --tail=50

# Verify CRDs are registered
kubectl get crd | grep sparkoperator.k8s.io
```

You should see sparkapplications.sparkoperator.k8s.io and scheduledsparkapplications.sparkoperator.k8s.io CRDs. The controller logs will show lines like Starting workers and Started Spark application controller, indicating it’s ready to reconcile resources.

Check that the operator has appropriate permissions by describing its ServiceAccount and associated RoleBindings or ClusterRoleBindings. If the logs show permission errors such as forbidden: User "system:serviceaccount:spark-operator:spark-operator" cannot list resource "sparkapplications", your RBAC configuration is incomplete. Run helm upgrade on the release with --set rbac.create=true to generate the necessary roles.

With the operator running and webhooks configured, your cluster is ready to accept declarative Spark workloads. The next step is writing your first SparkApplication manifest that replaces imperative spark-submit commands with version-controlled, GitOps-ready YAML.

Writing Your First SparkApplication Manifest

The shift from spark-submit to declarative manifests represents a fundamental change in how you manage Spark workloads. Instead of piecing together command-line flags and environment variables, you define everything in a version-controlled YAML file that serves as the single source of truth for your job.

From Imperative to Declarative

Consider a typical spark-submit command:

```bash
spark-submit \
  --master k8s://https://kubernetes.default.svc:443 \
  --deploy-mode cluster \
  --name data-pipeline \
  --class com.example.DataPipeline \
  --conf spark.executor.instances=5 \
  --conf spark.executor.memory=4g \
  --conf spark.executor.cores=2 \
  --conf spark.driver.memory=2g \
  local:///opt/spark/jars/pipeline.jar
```

This becomes a SparkApplication manifest:

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: data-pipeline
  namespace: spark-jobs
spec:
  type: Scala
  mode: cluster
  image: "my-registry.io/spark:3.5.0"
  imagePullPolicy: Always
  mainClass: com.example.DataPipeline
  mainApplicationFile: "local:///opt/spark/jars/pipeline.jar"
  sparkVersion: "3.5.0"
  restartPolicy:
    type: OnFailure
    onFailureRetries: 3
    onFailureRetryInterval: 10
    onSubmissionFailureRetries: 5
  driver:
    cores: 1
    coreLimit: "1200m"
    memory: "2g"
    labels:
      version: "3.5.0"
    serviceAccount: spark-driver
  executor:
    cores: 2
    instances: 5
    memory: "4g"
    labels:
      version: "3.5.0"
```

The declarative approach offers immediate advantages: your job configuration lives in Git, changes are auditable through pull requests, and you can apply the same GitOps workflows used for deploying applications. The manifest also exposes Kubernetes-native features like restart policies and service accounts that were awkward or impossible to configure through spark-submit flags.

Notice how the restartPolicy section handles failure scenarios explicitly. The OnFailure type with retry intervals provides granular control over job recovery, while onSubmissionFailureRetries handles transient cluster issues during job submission—a common production scenario when API servers experience load spikes or network partitions.
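When you have many existing spark-submit invocations, the translation to a manifest can be scripted. The sketch below maps the handful of settings from the example command onto SparkApplication fields and prints JSON, which kubectl accepts alongside YAML. The helper name and its parameters are hypothetical, and it covers only the options shown in this section.

```python
import json


def to_spark_application(name: str, namespace: str, image: str, spark_version: str,
                         main_class: str, jar: str, conf: dict) -> dict:
    """Map a few spark-submit settings onto SparkApplication fields."""
    return {
        "apiVersion": "sparkoperator.k8s.io/v1beta2",
        "kind": "SparkApplication",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "type": "Scala",
            "mode": "cluster",
            "image": image,
            "sparkVersion": spark_version,
            "mainClass": main_class,
            "mainApplicationFile": jar,
            "driver": {"memory": conf["spark.driver.memory"]},
            "executor": {
                "instances": int(conf["spark.executor.instances"]),
                "cores": int(conf["spark.executor.cores"]),
                "memory": conf["spark.executor.memory"],
            },
        },
    }


manifest = to_spark_application(
    name="data-pipeline",
    namespace="spark-jobs",
    image="my-registry.io/spark:3.5.0",
    spark_version="3.5.0",
    main_class="com.example.DataPipeline",
    jar="local:///opt/spark/jars/pipeline.jar",
    conf={
        "spark.executor.instances": "5",
        "spark.executor.memory": "4g",
        "spark.executor.cores": "2",
        "spark.driver.memory": "2g",
    },
)
print(json.dumps(manifest, indent=2))
```

Generated manifests are best committed to Git and reviewed like hand-written ones, so the script is a migration aid and not a replacement for the declarative workflow.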

Configuring Driver and Executor Specifications

The driver and executor sections replace scattered --conf flags with structured configuration. The coreLimit field sets the Kubernetes CPU limit. Setting it slightly above the request, as with cores: 1 and coreLimit: "1200m" in the manifest above, is a common production pattern that leaves headroom for JVM overhead and garbage collection spikes, so the container is throttled less often.

Resource requests translate directly to Kubernetes pod specifications. When you set executor.memory: "4g", that value becomes the Spark executor memory, and the pod’s memory request becomes that value plus the memory overhead, ensuring your pods get scheduled on nodes with sufficient capacity. If you don’t set memoryOverhead, Spark computes a default buffer for off-heap memory, Python workers, and other non-heap usage.
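The arithmetic behind the pod memory request is worth checking before you pick executor sizes. The sketch below assumes Spark's documented default for JVM workloads, an overhead of 10% of executor memory with a 384MiB floor; non-JVM workloads use a larger factor, and the helper names are illustrative.

```python
from typing import Optional

MIB = 1024 * 1024
UNITS = {"k": 1024, "m": MIB, "g": 1024 * MIB, "t": 1024 * 1024 * MIB}


def parse_memory(value: str) -> int:
    """Convert a size such as '4g' or '512m' to bytes (single-letter suffix only)."""
    return int(value[:-1]) * UNITS[value[-1].lower()]


def pod_memory_request(memory: str, overhead: Optional[str] = None,
                       overhead_factor: float = 0.10) -> int:
    """Bytes requested for one pod: executor memory plus explicit or default overhead."""
    heap = parse_memory(memory)
    if overhead is not None:
        return heap + parse_memory(overhead)
    # Assumed default: a fraction of executor memory, never below 384MiB.
    return heap + max(int(heap * overhead_factor), 384 * MIB)


def executors_per_node(allocatable: str, memory: str,
                       overhead: Optional[str] = None) -> int:
    """How many executor pods fit on one node when memory is the only constraint."""
    return parse_memory(allocatable) // pod_memory_request(memory, overhead)


print(executors_per_node("16g", "7g", "1g"))  # explicit 1g overhead
print(executors_per_node("16g", "4g"))        # default overhead
```

With the default overhead, a "4g" executor requests roughly 4.4GB, so a node with 16GB allocatable holds three of them, not four. That gap is the reason to size the whole pod instead of the heap alone.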

For production workloads, align your executor sizing with node capacity to maximize bin-packing efficiency. Size the whole pod, meaning memory plus memoryOverhead, so that it divides evenly into allocatable node memory. If your nodes have 16GB of allocatable memory, aim for pod requests of 4GB or 8GB rather than odd values like 5GB that leave resources stranded:

```yaml
executor:
  cores: 2
  instances: 10
  memory: "7g"          # 7g + 1g overhead = 8g per pod, two pods per 16GB node
  memoryOverhead: "1g"  # Explicit overhead for off-heap usage
```

Managing Dependencies and Application Code

Application packaging follows Kubernetes-native patterns. Your JAR files live inside the container image, referenced with the local:// URI scheme:

```yaml
spec:
  mainApplicationFile: "local:///opt/spark/jars/app.jar"
  sparkConf:
    "spark.jars": "local:///opt/spark/jars/dependencies/postgres-jdbc.jar,local:///opt/spark/jars/dependencies/aws-sdk.jar"
```

This approach eliminates dependency distribution headaches. When using spark-submit with remote JARs, Spark downloads dependencies to every executor, creating network bottlenecks and inconsistent classpath behavior. Baking dependencies into the image ensures every pod starts with identical code, and image layer caching makes subsequent launches fast.

For Python applications, you have multiple options. The simplest is building your code into the image:

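```yaml
spec:
  type: Python
  pythonVersion: "3"
  mainApplicationFile: "local:///opt/spark/app/main.py"
  # pip packages such as numpy==1.24.0 and pandas==2.0.0 are installed in the
  # image at build time; deps.packages takes Maven coordinates, not pip specs
```

Alternatively, mount code via ConfigMaps for rapid iteration during development: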

```yaml
spec:
  type: Python
  pythonVersion: "3"
  mainApplicationFile: "local:///opt/spark/app/main.py"
  driver:
    volumeMounts:
      - name: app-code
        mountPath: /opt/spark/app
  executor:
    volumeMounts:
      - name: app-code
        mountPath: /opt/spark/app
  volumes:
    - name: app-code
      configMap:
        name: pyspark-code
```

The ConfigMap approach works for small scripts but hits size limits quickly: ConfigMaps have a 1MB limit. For production deployments, build your Python code into the container image and use virtual environments to manage dependencies cleanly.

Integrating Secrets and Configuration

Environment variables and secrets integrate through standard Kubernetes resources:

```yaml
spec:
  driver:
    env:
      - name: DATABASE_HOST
        valueFrom:
          configMapKeyRef:
            name: spark-config
            key: db.host
      - name: DATABASE_PASSWORD
        valueFrom:
          secretKeyRef:
            name: spark-secrets
            key: db.password
  executor:
    env:
      - name: AWS_REGION
        value: "us-east-1"
      - name: S3_BUCKET
        valueFrom:
          configMapKeyRef:
            name: spark-config
            key: s3.bucket
```

This approach separates configuration from code and enables environment-specific deployments without modifying the application manifest. Your dev, staging, and production environments reference different ConfigMaps and Secrets while using identical SparkApplication definitions.

The env section supports the full Kubernetes environment variable specification, including fieldRef for accessing pod metadata and resourceFieldRef for container resource limits. This enables patterns like passing the pod’s namespace or node name to your Spark application:

```yaml
driver:
  env:
    - name: POD_NAMESPACE
      valueFrom:
        fieldRef:
          fieldPath: metadata.namespace
    - name: MEMORY_LIMIT
      valueFrom:
        resourceFieldRef:
          containerName: spark-kubernetes-driver
          resource: limits.memory
```

💡 Pro Tip: Use kustomize overlays to manage environment-specific variations in executor counts, memory allocations, and configuration references without duplicating the entire manifest.

With your SparkApplication manifest in place, the next challenge is ensuring these jobs run reliably at scale. Production workloads require careful attention to scheduling constraints, resource quotas, and failure recovery—patterns we’ll explore in the next section.

Production Patterns: Scheduling and Resource Management

Moving SparkApplications to production requires going beyond basic manifests to implement robust resource management, operational tooling, and cleanup policies. This section covers the production patterns that separate proof-of-concept deployments from reliable, cost-effective Spark infrastructure.

Managing Jobs with sparkctl

The Spark Operator provides sparkctl, a kubectl-style CLI for managing Spark workloads. Install it from the operator’s releases page, then use it to streamline job operations:

```bash
# List all SparkApplications in a namespace
sparkctl list --namespace spark-jobs

# Get detailed status of a specific job
sparkctl status word-count --namespace spark-jobs

# View driver logs, following new output
sparkctl log word-count --follow

# Delete a completed application
sparkctl delete word-count --namespace spark-jobs

# Create a new application from a manifest
sparkctl create spark-app.yaml

# Forward a local port to the Spark UI
sparkctl forward word-count --local-port 4040 --remote-port 4040
```

The log command fetches the driver log by default and a specific executor’s log when you pass an executor ID with -e, eliminating the need to manually track pod names. For debugging failed jobs, sparkctl event surfaces the Kubernetes events recorded for the application. The port forwarding capability is particularly valuable for accessing the Spark UI without exposing services publicly: use it to inspect job DAGs, executor metrics, and stage-level performance data.

When troubleshooting stuck or failing jobs, combine sparkctl status with sparkctl event to correlate application state transitions with cluster events. This reveals resource quota issues, pod scheduling failures, or image pull errors that might not be obvious from application logs alone.

Advanced Pod Customization with Templates

Pod templates give you fine-grained control over executor and driver pods beyond what SparkApplication spec fields expose. Use them to inject sidecars, mount volumes, or set affinity rules:

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: data-pipeline
  namespace: spark-jobs
spec:
  driver:
    cores: 2
    memory: "4g"
    podTemplate:
      spec:
        affinity:
          nodeAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
              nodeSelectorTerms:
                - matchExpressions:
                    - key: workload-type
                      operator: In
                      values:
                        - spark-driver
        volumes:
          - name: config
            configMap:
              name: app-config
        containers:
          - name: spark-kubernetes-driver
            volumeMounts:
              - name: config
                mountPath: /etc/config
            env:
              - name: SPARK_DRIVER_BIND_ADDRESS
                valueFrom:
                  fieldRef:
                    fieldPath: status.podIP
  executor:
    instances: 5
    cores: 4
    memory: "8g"
    podTemplate:
      spec:
        tolerations:
          - key: spark-workload
            operator: Equal
            value: "true"
            effect: NoSchedule
        affinity:
          podAntiAffinity:
            preferredDuringSchedulingIgnoredDuringExecution:
              - weight: 100
                podAffinityTerm:
                  labelSelector:
                    matchExpressions:
                      - key: spark-role
                        operator: In
                        values:
                          - executor
                  topologyKey: kubernetes.io/hostname
```

The spark-kubernetes-driver and spark-kubernetes-executor container names are required in pod templates: the operator merges your template with its generated pod spec using these identifiers. Any fields you specify in the template take precedence over operator defaults, allowing you to override resource limits, security contexts, or environment variables.

Common pod template use cases include mounting cloud credentials from secrets, injecting monitoring sidecars (Prometheus exporters, log shippers), and configuring pod anti-affinity to spread executors across failure domains. The executor anti-affinity pattern shown above distributes executors across nodes to improve fault tolerance for long-running jobs.

Dynamic Resource Allocation

Enable dynamic allocation to let Spark scale executors based on workload demands, reducing costs for variable workloads:

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: adaptive-job
spec:
  dynamicAllocation:
    enabled: true
    initialExecutors: 2
    minExecutors: 1
    maxExecutors: 10
    shuffleTrackingTimeout: 600
  sparkConf:
    spark.dynamicAllocation.executorIdleTimeout: "60s"
    spark.dynamicAllocation.schedulerBacklogTimeout: "10s"
    spark.dynamicAllocation.sustainedSchedulerBacklogTimeout: "5s"
    spark.dynamicAllocation.executorAllocationRatio: "0.5"
```

Spark on Kubernetes does not run an external shuffle service, so dynamic allocation relies on Spark’s shuffle tracking, which keeps executors alive while they still hold shuffle data that active jobs need. The operator enables shuffle tracking when dynamicAllocation.enabled is true. Set shuffleTrackingTimeout to bound how long an executor holding shuffle data is retained before it becomes eligible for removal.

The executorIdleTimeout determines how quickly idle executors are removed—tune this based on your job’s stage patterns. Jobs with many short stages benefit from lower timeouts (30-60s) to release resources quickly, while iterative workloads (machine learning training) need higher values (5-10 minutes) to avoid thrashing. The executorAllocationRatio parameter controls how aggressively Spark requests new executors when tasks are pending; values below 1.0 prevent over-provisioning for bursty workloads.
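To see how executorAllocationRatio interacts with the executor bounds, it helps to compute the ceiling Spark works toward. The sketch below follows the formula Spark's allocation manager uses for the maximum number of executors needed, as far as I understand it: running plus pending tasks, scaled by the ratio, divided by task slots per executor. It ignores the gradual ramp-up driven by the backlog timeouts, and the function name is illustrative.

```python
import math


def target_executors(pending_and_running_tasks: int, executor_cores: int,
                     allocation_ratio: float, min_executors: int,
                     max_executors: int, task_cpus: int = 1) -> int:
    """Upper bound on executors Spark would request, clamped to the configured range."""
    tasks_per_executor = executor_cores // task_cpus
    needed = math.ceil(pending_and_running_tasks * allocation_ratio / tasks_per_executor)
    return max(min_executors, min(max_executors, needed))


# 12 outstanding tasks, 2 cores per executor, ratio 0.5, bounds 1..10
print(target_executors(12, 2, 0.5, 1, 10))
```

With a ratio of 0.5, twelve outstanding tasks on two-core executors lead to a target of three executors where a ratio of 1.0 would give six, which is how values below 1.0 damp bursty scale-ups.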

Monitor Spark’s ExecutorAllocationManager metrics, such as executors.numberAllExecutors, executors.numberTargetExecutors, and executors.numberMaxNeededExecutors, to validate your scaling configuration. Executors that go idle immediately after allocation indicate overly aggressive scaling, while sustained scheduler backlogs suggest increasing maxExecutors or lowering schedulerBacklogTimeout.

Executor Cleanup and TTL Policies

Failed or completed Spark jobs leave behind terminated pods and SparkApplication objects that clutter the cluster. Configure time-to-live (TTL) settings to automate cleanup:

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: batch-processor
spec:
  timeToLiveSeconds: 3600
  restartPolicy:
    type: Never
  driver:
    cores: 2
    memory: "4g"
```

The timeToLiveSeconds field deletes the entire SparkApplication resource, including driver and executor pods, after the specified duration following job completion or failure. For debugging, set higher TTL values in development (3600-7200 seconds) and lower values (300-600 seconds) in production to balance observability with resource utilization.

Combine TTL policies with restartPolicy.type: Never for batch jobs to prevent automatic restarts of failed applications that would otherwise consume quota indefinitely. For scheduled jobs managed by ScheduledSparkApplication resources, the parent resource handles cleanup based on its own successfulRunHistoryLimit and failedRunHistoryLimit settings, making per-job TTLs less critical.
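The TTL rule is simple enough to express directly, which is useful if you audit clusters for applications that should already have been cleaned up. This is a minimal sketch of the comparison only; the function name and the convention of passing None for a running application or a missing TTL are assumptions.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def cleanup_due(terminated_at: Optional[datetime], ttl_seconds: Optional[int],
                now: datetime) -> bool:
    """True once a finished application has outlived its TTL."""
    if terminated_at is None or ttl_seconds is None:
        return False  # still running, or no TTL configured
    return now >= terminated_at + timedelta(seconds=ttl_seconds)


finished = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
print(cleanup_due(finished, 3600, finished + timedelta(minutes=30)))
print(cleanup_due(finished, 3600, finished + timedelta(minutes=90)))
```

Passing `now` in explicitly keeps the function deterministic, so the same check can run in tests and in an audit script.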

💡 Pro Tip: Combine TTL policies with centralized logging (Loki, CloudWatch) to retain logs after pods are garbage collected. Set spec.driver.serviceAccount to use a service account with permissions to ship logs to your logging backend. Configure log aggregation before enabling aggressive TTL policies to avoid losing diagnostic data from failed jobs.

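For production workloads, implement a tiered cleanup strategy: keep failed jobs for 1-2 hours for immediate debugging, successful jobs for 30 minutes to verify completion, and rely on centralized logs and metrics for historical analysis. Because timeToLiveSeconds is a single value per application and does not distinguish success from failure, the tiers need either a TTL sized for the failure case or external cleanup tooling. This approach minimizes cluster resource consumption while maintaining operational visibility.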

These production patterns establish the operational foundation for reliable Spark deployments. The next section covers monitoring strategies and troubleshooting techniques to maintain visibility into operator-managed workloads.

Monitoring and Troubleshooting Operator-Managed Jobs

The Spark Operator transforms job observability by exposing rich status information through Kubernetes-native APIs and integrating seamlessly with cloud-native monitoring stacks. Understanding how to read operator state, collect metrics, and diagnose failures is essential for production operations.

Reading SparkApplication Status

The operator continuously reconciles SparkApplication resources and updates their status with detailed state information. Inspect the current state of any job:

```bash
kubectl get sparkapplication spark-etl-pipeline -n data-platform -o yaml
```

The status section reveals the complete lifecycle:

```bash
kubectl get sparkapplication spark-etl-pipeline -n data-platform \
  -o jsonpath='{.status.applicationState.state}'
```

State transitions follow a predictable flow: SUBMITTED → RUNNING → COMPLETED or FAILED. The .status.executorState map tracks individual executor pods, while .status.lastSubmissionAttemptTime helps identify retry behavior. For detailed failure analysis, examine the termination message:

```bash
kubectl get sparkapplication spark-etl-pipeline -n data-platform \
  -o jsonpath='{.status.applicationState.errorMessage}'
```

The status object also surfaces critical metadata including submission timestamps, driver and executor information, and completion times. The .status.sparkApplicationId field contains the actual Spark application ID used in the Spark UI and history server, enabling correlation between Kubernetes resources and Spark’s native monitoring tools. Use kubectl describe to view a human-readable summary including recent events and state changes that provide context around job progression.
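For dashboards or alerting scripts, the same fields can be pulled out of the JSON form of the resource (kubectl get ... -o json). The sketch below reads only the status paths named in this section; the sample document is made up for illustration and the function name is hypothetical.

```python
import json
from collections import Counter


def summarize_status(raw_json: str) -> dict:
    """Extract state, error, Spark application ID, and executor counts from a SparkApplication."""
    app = json.loads(raw_json)
    status = app.get("status", {})
    app_state = status.get("applicationState", {})
    return {
        "name": app.get("metadata", {}).get("name"),
        "state": app_state.get("state", "UNKNOWN"),
        "error": app_state.get("errorMessage", ""),
        "spark_app_id": status.get("sparkApplicationId"),
        # executorState maps pod name -> state; count pods per state
        "executors": dict(Counter(status.get("executorState", {}).values())),
    }


# Made-up sample shaped like the status fields described above.
SAMPLE = json.dumps({
    "metadata": {"name": "spark-etl-pipeline"},
    "status": {
        "applicationState": {"state": "FAILED", "errorMessage": "driver pod failed"},
        "sparkApplicationId": "spark-0123456789abcdef",
        "executorState": {"etl-exec-1": "COMPLETED", "etl-exec-2": "FAILED",
                          "etl-exec-3": "FAILED"},
    },
})
print(summarize_status(SAMPLE))
```

Every lookup uses a default, so the function also works on an application that has just been created and has no status yet.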

Prometheus Integration

The Spark Operator exposes metrics at :8080/metrics by default. Confirm the port in your chart’s metrics settings, since it must differ from the webhook port configured at install time. Configure a ServiceMonitor to enable Prometheus scraping:

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: spark-operator
  namespace: spark-operator
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: spark-operator
  endpoints:
    - port: metrics
      interval: 30s
```

Key metrics include spark_app_count (total applications by state), spark_app_submit_count (submission attempts), and spark_app_success_count. These operator-level metrics provide visibility into overall cluster health and workload patterns. For application-level metrics, configure the JMX Prometheus exporter in your SparkApplication:

```yaml
spec:
  monitoring:
    exposeDriverMetrics: true
    exposeExecutorMetrics: true
    prometheus:
      jmxExporterJar: "/prometheus/jmx_prometheus_javaagent-0.17.2.jar"
      port: 8090
```

The operator creates headless services exposing driver and executor metrics, which Prometheus can scrape using pod-based discovery. Build dashboards around critical Spark metrics like JVM heap usage, task completion rates, shuffle read/write volumes, and garbage collection pause times. Alert on sustained high memory pressure, task failures exceeding thresholds, or executor loss rates that indicate node instability.
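Before wiring up dashboards, you can sanity-check the operator metrics by parsing the text the metrics endpoint returns. The sketch below handles only the simple "name value" and "name{labels} value" line shapes, with no timestamps, and uses the two counter names mentioned above; the sample text is made up.

```python
def sum_metric(exposition: str, metric: str) -> float:
    """Sum all samples of one metric in Prometheus text format (simple lines only)."""
    total = 0.0
    for line in exposition.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and HELP/TYPE comments
        name_and_labels, _, value = line.rpartition(" ")
        name = name_and_labels.split("{", 1)[0]
        if name == metric:
            total += float(value)
    return total


def success_ratio(exposition: str) -> float:
    """Successful applications divided by submissions; 0.0 when nothing was submitted."""
    submitted = sum_metric(exposition, "spark_app_submit_count")
    succeeded = sum_metric(exposition, "spark_app_success_count")
    return succeeded / submitted if submitted else 0.0


# Made-up sample in the shape of a metrics scrape.
SAMPLE_METRICS = """\
# TYPE spark_app_submit_count counter
spark_app_submit_count 20
# TYPE spark_app_success_count counter
spark_app_success_count 17
"""
print(success_ratio(SAMPLE_METRICS))
```

Applications that are still running count as submitted but not yet successful, so treat the ratio as a lower bound when jobs are in flight.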

Debugging Failed Jobs

When jobs fail, begin with operator logs to understand the reconciliation loop:

```bash
kubectl logs -n spark-operator deployment/spark-operator \
  --tail=100 | grep spark-etl-pipeline
```

Substitute the deployment name that kubectl get deployment -n spark-operator reports for your release. Operator logs reveal whether the failure occurred during submission, resource creation, or state monitoring. Look for RBAC errors, API server communication issues, or webhook validation failures that prevent the operator from managing the application correctly.

Examine Kubernetes events for scheduling issues, image pull failures, or resource constraints:

```bash
kubectl get events -n data-platform \
  --field-selector involvedObject.name=spark-etl-pipeline \
  --sort-by='.lastTimestamp'
```

Events surface pod-level problems before they manifest as application failures. Failed scheduling, volume mount errors, and liveness probe failures all generate events that narrow the troubleshooting scope.

Driver logs contain the Spark application output:

    kubectl logs -n data-platform \
      $(kubectl get pods -n data-platform \
        -l spark-role=driver,sparkoperator.k8s.io/app-name=spark-etl-pipeline \
        -o jsonpath='{.items[0].metadata.name}')

For long-running jobs, stream driver logs with -f to observe real-time progress. If the driver pod has been deleted due to retention policies, configure persistent logging to external systems like Elasticsearch or cloud-native logging solutions. Executor logs provide additional context when data processing logic fails: check executor stderr for exceptions and stdout for application-level logging.

Common Failure Patterns

OOMKilled executors indicate that the container exceeded its memory limit. Increase spark.executor.memory, or raise spark.executor.memoryOverhead if off-heap usage (native buffers, Python workers) is outgrowing the overhead allowance. Memory issues often correlate with data skew, so examine stage metrics to identify partitions processing disproportionate data volumes.
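In a SparkApplication, executor memory and overhead are set explicitly on the executor spec. The fragment below is a sketch with illustrative values, not a sizing recommendation; the pod's memory request is roughly the sum of the two fields, so both need to fit within node allocatable memory.

```yaml
spec:
  executor:
    instances: 4
    cores: 2
    memory: "8g"            # JVM heap for each executor
    memoryOverhead: "2g"    # off-heap allowance; raise this if pods are OOMKilled
                            # while heap usage still looks healthy
```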

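Pending executor pods mean the scheduler cannot place them. Use kubectl describe nodes to verify that allocatable resources haven’t been consumed by other workloads, and kubectl describe pod on the pending executor to read the scheduler's reason. Cluster autoscaling delays can also cause temporary pending states during scale-up events. If executor pods never appear at all, check the namespace ResourceQuotas: a pod that would exceed quota is rejected at creation rather than left Pending.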

ImagePullBackOff errors point to registry authentication issues; verify imagePullSecrets are correctly attached. Registry rate limiting, missing tags, or misconfigured private registry endpoints all manifest as pull failures. Test image accessibility by manually pulling from a node using crictl or docker.

CrashLoopBackOff on drivers often stems from misconfigured dependencies or classpath issues—inspect the driver logs for ClassNotFoundException or NoSuchMethodError. Application code bugs, incompatible library versions, or missing configuration files trigger immediate crashes. Validate your container image locally before deploying to Kubernetes.

The operator’s restart policy (spec.restartPolicy.type: OnFailure) automatically retries failed jobs, bounded by onFailureRetries for application failures and onSubmissionFailureRetries for failed submissions. Monitor retry storms by tracking submission timestamps to prevent runaway resource consumption. The retry interval fields space out attempts, but exponential backoff isn’t built in, so implement it through external controllers or scheduled jobs if you need intelligent retry logic.
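A bounded retry configuration might look like the sketch below. The field names are the ones I understand the v1beta2 SparkApplication API to use; confirm them against the CRD installed in your cluster. The counts and intervals (in seconds) are illustrative.

```yaml
spec:
  restartPolicy:
    type: OnFailure
    onFailureRetries: 3                    # retries after the application itself fails
    onFailureRetryInterval: 10             # seconds between those retries
    onSubmissionFailureRetries: 5          # retries when submission fails
    onSubmissionFailureRetryInterval: 20   # seconds between submission retries
```

Keeping the retry counts small limits how much cluster capacity a persistently failing job can consume before someone is alerted.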

Integrating with Observability Platforms

Beyond Prometheus, consider integrating the Spark Operator with distributed tracing systems like Jaeger or cloud-native APM tools. The operator emits Kubernetes events that can be ingested by log aggregation platforms for centralized searching and alerting. Configure structured logging in the operator deployment to enrich log metadata with application names, namespaces, and custom labels. Correlate operator events with application metrics to build comprehensive observability that spans infrastructure and application layers.

With comprehensive monitoring and systematic debugging workflows in place, the next step is integrating SparkApplication manifests into GitOps pipelines for automated, auditable deployments.

GitOps Integration and CI/CD Patterns

The declarative nature of SparkApplication manifests makes them ideal candidates for GitOps workflows. By treating your Spark jobs as versioned YAML configurations in Git, you gain the same deployment automation and audit trail that modern platform teams expect for their application workloads.

Version-Controlled Spark Applications

Store your SparkApplication manifests in a dedicated repository structure that mirrors your deployment environments:

    spark-jobs/
    ├── base/
    │   ├── etl-pipeline.yaml
    │   └── ml-training.yaml
    ├── overlays/
    │   ├── staging/
    │   │   └── kustomization.yaml
    │   └── production/
    │       └── kustomization.yaml

This structure leverages Kustomize to manage environment-specific configurations (resource limits, executor counts, S3 bucket paths) while keeping your base job definitions DRY. Each commit to the repository creates an immutable snapshot of your Spark application configuration, enabling rollbacks and change attribution through standard Git workflows.
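As an illustration, overlays/production/kustomization.yaml could patch executor sizing onto the base job. This sketch assumes that base/ also contains its own kustomization.yaml listing the two manifests (not shown in the tree above), that the base SparkApplication is named etl-pipeline, and that the patched paths already exist in the base manifest, since a JSON patch replace fails on a missing path. The values are placeholders.

```yaml
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
namespace: spark-jobs
resources:
  - ../../base
patches:
  # Scale the ETL job up for production without touching the base definition.
  - target:
      group: sparkoperator.k8s.io
      version: v1beta2
      kind: SparkApplication
      name: etl-pipeline          # assumed metadata.name of the base job
    patch: |-
      - op: replace
        path: /spec/executor/instances
        value: 20
      - op: replace
        path: /spec/executor/memory
        value: "16g"
```

Running kustomize build overlays/production locally shows the fully rendered manifest, which is useful to review before ArgoCD applies it.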

When structuring your repository, consider grouping jobs by domain or data pipeline rather than by technology. A /customer-analytics directory containing both Spark ETL jobs and downstream ML training jobs better reflects your business logic than a flat list of SparkApplications. This organization simplifies ownership, makes impact analysis easier during code review, and aligns deployment boundaries with team responsibilities.

ArgoCD Continuous Deployment

ArgoCD monitors your Git repository and automatically syncs SparkApplication resources to your Kubernetes cluster. Create an Application resource pointing to your Spark manifests:

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: spark-jobs
    spec:
      project: data-platform
      source:
        repoURL: https://github.com/your-org/spark-jobs
        targetRevision: main
        path: overlays/production
      destination:
        server: https://kubernetes.default.svc
        namespace: spark-jobs
      syncPolicy:
        automated:
          prune: false
          selfHeal: true

Setting prune: false stops ArgoCD from deleting SparkApplication objects whose manifests have been removed from Git, preserving historical execution records. The Spark Operator handles cleanup of finished applications based on the timeToLiveSeconds setting in your manifests. If you need tighter control over completed job retention, implement a separate cleanup controller that archives metrics before pruning old SparkApplications.

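💡 Pro Tip: Use ArgoCD sync waves to deploy dependencies before Spark jobs. Add argocd.argoproj.io/sync-wave: "1" annotations to ConfigMaps containing job configurations, and a higher wave such as "2" to the SparkApplication referencing them. ArgoCD applies lower waves first and treats unannotated resources as wave 0, so annotating only the ConfigMap would sync it after the job.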
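A sketch of the ordering, with hypothetical resource names and a placeholder config payload. ArgoCD syncs lower-numbered waves first and treats unannotated resources as wave 0, so the job carries a higher wave than the ConfigMap it depends on.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: etl-pipeline-config       # hypothetical name
  namespace: spark-jobs
  annotations:
    argocd.argoproj.io/sync-wave: "1"
data:
  application.conf: |
    input.path = "s3a://example-bucket/input"
---
apiVersion: sparkoperator.k8s.io/v1beta2
kind: SparkApplication
metadata:
  name: etl-pipeline
  namespace: spark-jobs
  annotations:
    argocd.argoproj.io/sync-wave: "2"   # synced only after wave 1 is healthy
# spec omitted for brevity
```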

Rolling Updates and Deployment Strategies

Unlike stateless web services, Spark jobs don’t support in-place updates. When you modify a SparkApplication manifest, the Operator creates a new execution rather than updating running pods. This behavior necessitates careful consideration of update strategies.

For scheduled jobs managed by ScheduledSparkApplication resources, updates apply to the next scheduled run. This natural boundary makes rolling out changes straightforward—commit your manifest change, let ArgoCD sync it, and the updated job runs at the next cron trigger.
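For reference, a minimal ScheduledSparkApplication could look like the following. The name, image, class, jar path, and service account are placeholders, and the sizing is illustrative. The template block takes the same fields as a SparkApplication spec.

```yaml
apiVersion: sparkoperator.k8s.io/v1beta2
kind: ScheduledSparkApplication
metadata:
  name: etl-pipeline-nightly      # hypothetical name
  namespace: spark-jobs
spec:
  schedule: "0 2 * * *"           # cron syntax: every day at 02:00
  concurrencyPolicy: Forbid       # skip a run if the previous one is still active
  successfulRunHistoryLimit: 3
  failedRunHistoryLimit: 5
  template:
    type: Scala
    mode: cluster
    image: registry.example.com/spark-etl:3.5.0               # placeholder image
    mainClass: com.example.EtlPipeline                        # placeholder class
    mainApplicationFile: local:///opt/spark/jars/etl-pipeline.jar
    sparkVersion: "3.5.0"
    restartPolicy:
      type: Never
    driver:
      cores: 1
      memory: "2g"
      serviceAccount: spark       # assumed service account with pod-create rights
    executor:
      instances: 4
      cores: 2
      memory: "8g"
```

Setting concurrencyPolicy to Forbid keeps a slow run from overlapping with the next trigger, which matters when a manifest change lands between two runs.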

For long-running streaming jobs, implement blue-green deployments by maintaining two SparkApplication definitions in your repository. Deploy the new version alongside the existing one, validate its behavior by monitoring lag metrics and output correctness, then delete the old version once you’ve confirmed the migration. This approach requires coordination with your data sources—typically Kafka consumer groups or checkpoint directories—to ensure exactly-once processing semantics during the transition.

Testing and Validation Strategies

Implement CI pipelines that validate manifests before they reach production. Use kubectl apply --dry-run=server -f on each rendered manifest to catch schema violations and admission webhook rejections without persisting anything. Schema validators like kubeval and linters like kube-linter add further checks, such as verifying that production jobs specify resource requests, use approved base images, and configure monitoring labels.

For functional testing, deploy to a staging namespace with scaled-down executors and sample datasets. Run smoke tests that verify job completion and output correctness before promoting changes to production branches. Consider using ephemeral test clusters with tools like vcluster to isolate validation runs, preventing test data pollution in shared staging environments.

Integration tests should validate not just the SparkApplication manifest but also the Docker images referenced within. Pin image tags to specific SHAs rather than using :latest to ensure reproducible deployments. Your CI pipeline should build the Spark application image, push it to your registry, and update the manifest’s image field with the new SHA before running validation tests.

This GitOps approach transforms Spark deployment from manual kubectl commands into auditable, repeatable pipelines. Every job modification flows through code review, automated validation, and controlled promotion across environments—the foundation for operating Spark workloads at enterprise scale.

Key Takeaways

- Replace imperative spark-submit with declarative SparkApplication CRDs for better lifecycle management and observability

- Use Helm to deploy the Spark Operator with namespace-scoped configuration for multi-tenant environments

- Leverage pod templates and sparkctl to implement production-grade resource management and debugging workflows

- Integrate SparkApplication manifests into GitOps pipelines with ArgoCD for automated, version-controlled deployments