Building Fault-Tolerant Event Streams with NATS JetStream

Your microservices are losing messages during deployments. A payment confirmation arrives, but the service restarts before processing it—gone forever. The customer sees a successful payment, your logs show the event was published, but the order never shipped. You dig through distributed traces, check your message broker, and find nothing. The message vanished into the gap between publish and consume.

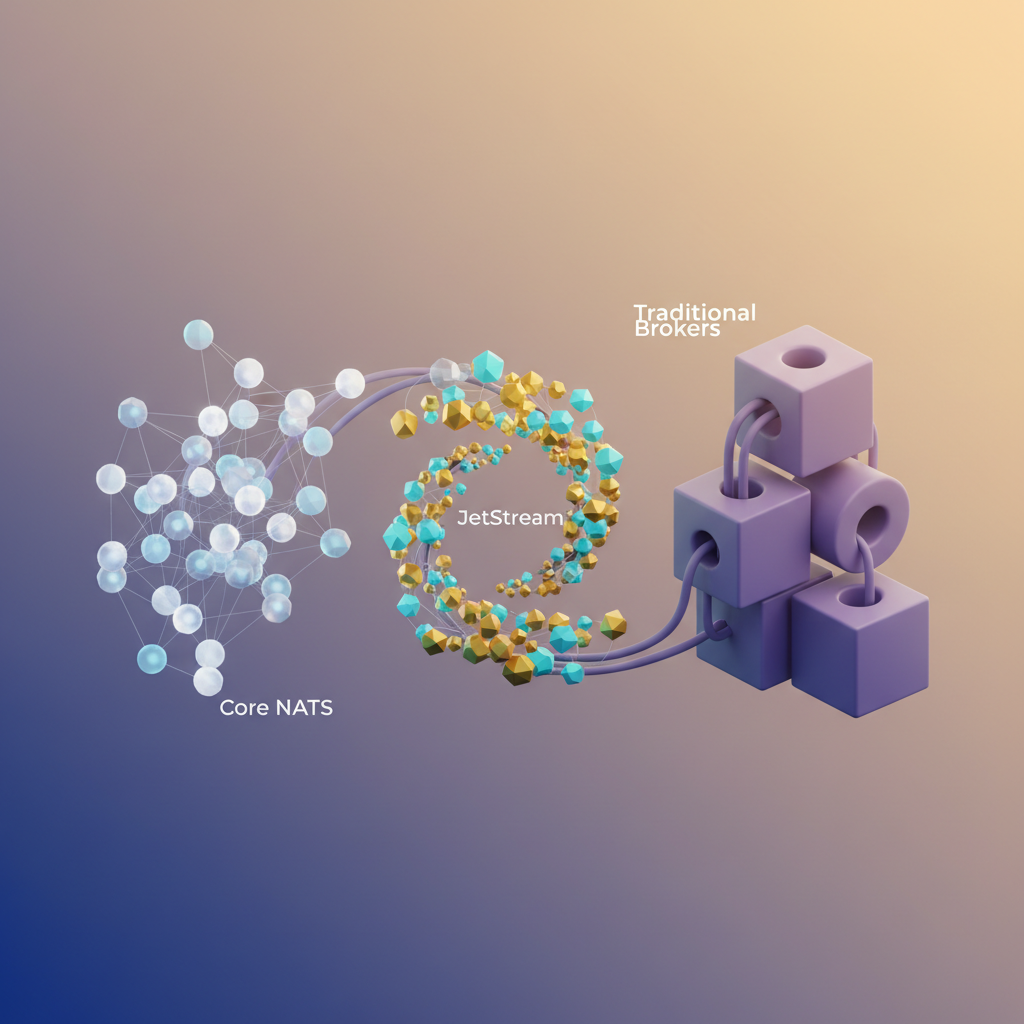

This isn’t a rare edge case. It’s the fundamental trade-off of at-most-once delivery: maximum throughput, zero guarantees. Core NATS excels at this pattern—millions of messages per second with minimal latency—but the moment you need persistence or delivery guarantees, you’re forced to bolt on external systems. Suddenly you’re running Kafka clusters, managing RabbitMQ nodes, or building retry logic into every service. The operational complexity scales faster than your team.

The traditional answer has been to accept this complexity as the cost of reliability. Want guaranteed delivery? Deploy a message broker with its own cluster, replication topology, and operational playbook. Want event replay? Add a distributed log system. Want both? Run multiple platforms and map between their different mental models—topics versus exchanges, partitions versus queues, consumer groups versus subscriptions.

NATS JetStream eliminates this false choice. It adds persistence, replay, and acknowledged at-least-once delivery directly into NATS, with exactly-once semantics available when you combine publish deduplication with double acknowledgments. There are no separate brokers, no topology migrations, and no paradigm shifts. You keep NATS’s subject-based routing and operational simplicity while gaining the guarantees that distributed systems actually need. The question isn’t whether to add persistence to your messaging layer. It’s whether you want to operate a separate platform to get it.

Why JetStream Exists: The Gap Between Core NATS and Traditional Brokers

Core NATS operates on a simple principle: publish a message to a subject, and every active subscriber receives it at most once. Subscribers that are offline never see it. This at-most-once delivery model delivers sub-millisecond latency and can handle millions of messages per second with minimal resource overhead. But it comes with a critical constraint: zero persistence. If a subscriber is down or slow, messages vanish. There’s no replay, no acknowledgment, no delivery guarantee beyond “we tried.”

For many real-time use cases—metrics collection, command-and-control systems, transient notifications—this trade-off is acceptable. But distributed systems often require guarantees that core NATS cannot provide: persistent event logs, exactly-once processing semantics, message replay for new consumers, and reliable delivery even when services restart.

Traditional message brokers like Kafka and RabbitMQ solve these problems through persistent storage and complex coordination protocols. Kafka’s partition-based log provides excellent throughput and replay capabilities, but requires managing ZooKeeper or KRaft clusters, monitoring consumer lag across topic partitions, and handling rebalancing storms. RabbitMQ offers flexible routing through exchanges and queues, but introduces operational complexity around disk alarms, memory management, and cluster split-brain scenarios. Both systems demand dedicated infrastructure, specialized expertise, and ongoing operational attention.

JetStream eliminates this false choice. Built directly into the NATS server binary, it adds a persistence layer that maintains NATS’s operational simplicity while delivering the durability guarantees required for event-driven architectures. There’s no separate broker to deploy, no additional cluster to manage, no new protocol to learn. The same NATS server that handles ephemeral pub/sub now provides persistent streams, guaranteed delivery, and distributed consensus through Raft replication—all configured through the same subject-based API you already know.

Subject-Based Routing vs Topic Paradigms

Unlike Kafka’s rigid topic-partition model or RabbitMQ’s exchange-binding abstractions, JetStream extends NATS’s subject hierarchy directly into persistence. A stream captures messages from subjects matching wildcard patterns like orders.* or events.>, letting you organize data flows using the same dot-separated namespaces that power NATS routing. This eliminates the mental model switch between routing logic and storage configuration—subjects define both message delivery paths and persistence boundaries.
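To make the wildcard rules concrete, here is a minimal sketch of NATS-style subject matching in plain JavaScript. The function name subjectMatches is hypothetical, and this is a simplified model rather than the server's implementation; it only illustrates that * matches exactly one token while > matches one or more trailing tokens.

```javascript
// Illustrative only: a simplified model of NATS subject matching.
// `*` matches exactly one token; `>` matches one or more trailing tokens.
function subjectMatches(pattern, subject) {
  const p = pattern.split('.');
  const s = subject.split('.');

  for (let i = 0; i < p.length; i++) {
    if (p[i] === '>') {
      // `>` must be the last pattern token and needs at least one token left
      return i === p.length - 1 && s.length > i;
    }
    if (i >= s.length) return false; // subject ran out of tokens
    if (p[i] !== '*' && p[i] !== s[i]) return false;
  }
  return p.length === s.length; // no unmatched subject tokens
}

console.log(subjectMatches('orders.*', 'orders.created'));    // true
console.log(subjectMatches('orders.*', 'orders.eu.created')); // false
console.log(subjectMatches('events.>', 'events.user.login')); // true
```

A stream bound to orders.* would therefore capture orders.created but not orders.eu.created, which is why the choice between * and > matters when you define persistence boundaries.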

Consumers attach to streams with configurable delivery semantics: push or pull, durable or ephemeral, with exactly-once or at-least-once guarantees. Acknowledgments happen at the protocol level, not through application-managed offsets. Replay starts from any point in the stream without external coordination.

This architectural integration means JetStream delivers Kafka-class persistence with NATS-class operational overhead. For cloud-native systems that need reliability without infrastructure bloat, it’s the persistence layer that should have existed from the start.

Creating Your First Stream: Capturing Event History

Streams are JetStream’s fundamental abstraction for message persistence. Unlike core NATS’s fire-and-forget semantics, streams capture and store messages published to specific subjects, making them available for replay, processing, and auditing long after they’re published. Think of a stream as a durable log that sits between publishers and consumers, decoupling message production from consumption.

Defining Your First Stream

Creating a stream requires specifying which subjects to capture and how to manage retention. Here’s a practical example that stores user activity events:

```go
package main

import (
	"log"
	"time"

	"github.com/nats-io/nats.go"
)

func main() {
	nc, err := nats.Connect(nats.DefaultURL)
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := nc.JetStream()
	if err != nil {
		log.Fatal(err)
	}

	// Create stream configuration
	streamConfig := &nats.StreamConfig{
		Name:     "USER_EVENTS",
		Subjects: []string{"events.user.*"},
		Storage:  nats.FileStorage,
		MaxAge:   30 * 24 * time.Hour,      // 30 days retention
		MaxBytes: 10 * 1024 * 1024 * 1024, // 10 GiB limit
		MaxMsgs:  1000000,
		Discard:  nats.DiscardOld,
		Replicas: 3, // Cluster replication factor (needs at least 3 servers)
	}

	stream, err := js.AddStream(streamConfig)
	if err != nil {
		log.Fatal(err)
	}

	log.Printf("Stream created: %s", stream.Config.Name)
}
```

This configuration captures all messages published to subjects matching events.user.* (like events.user.login or events.user.signup) and persists them with three-way replication for fault tolerance. The stream name USER_EVENTS serves as an administrative identifier; it doesn’t affect message routing, which depends entirely on the subjects list.

Storage Types and Performance Trade-offs

JetStream offers two storage backends, each suited to different use cases:

File storage writes messages to disk, providing durability across restarts. Use this for critical business events, audit logs, or any data that must survive server failures. As a rough order of magnitude, expect around 100K messages per second on modern SSDs with latency in the single-digit milliseconds, but the real numbers depend on disk hardware, message size, and replica count, so benchmark on your own cluster. Both storage types can be replicated; what file storage adds is recovery from disk after a restart, making it the default choice for production workloads where data loss is unacceptable.

Memory storage keeps messages in RAM for maximum throughput—millions of messages per second with sub-millisecond latency. This works well for high-volume telemetry data, real-time analytics pipelines, or temporary work queues where losing recent data during a crash is acceptable. Memory streams consume less CPU since they bypass disk I/O, but they’re inherently ephemeral: a server restart wipes the entire stream.

```go
memoryStreamConfig := &nats.StreamConfig{
	Name:     "METRICS_STREAM",
	Subjects: []string{"metrics.>"},
	Storage:  nats.MemoryStorage,
	MaxAge:   5 * time.Minute, // Short retention window
	Discard:  nats.DiscardOld,
}
```

Choose file storage by default unless you’ve profiled your system and confirmed that memory storage’s risks are acceptable for your use case.

Retention Policies and Limits

Streams enforce boundaries through three independent limit types. Messages past the age limit are always removed; when a size or count limit is reached, the discard policy determines what happens:

- MaxAge removes messages older than the specified duration

- MaxBytes caps total stream storage size

- MaxMsgs limits the message count

The DiscardOld policy removes the oldest messages first, maintaining a rolling window. DiscardNew rejects incoming messages when limits are hit, protecting historical data at the cost of lost current events. This second policy acts like a circuit breaker: once the stream fills up, publishers receive errors until space is freed, either because old messages age out or are purged or, on work-queue and interest retention streams, because consumers acknowledge them.

💡 Pro Tip: Always set multiple limits as safeguards. A bug that publishes oversized messages can exhaust MaxBytes long before hitting MaxAge, so combining both prevents runaway storage growth.

Setting MaxAge to zero disables time-based expiration, while setting MaxBytes or MaxMsgs to -1 removes those constraints entirely. However, unbounded streams pose operational risks. Even if you expect low message volume, set reasonable upper bounds to contain potential issues—a malfunctioning service won’t fill your disk or exhaust cluster memory.
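The difference between the two discard policies is easiest to see in a toy model. The sketch below is plain JavaScript, not JetStream code: the BoundedStream class and its 'old'/'new' policy strings are hypothetical names used only to mimic a stream capped by message count.

```javascript
// Illustrative in-memory model of a stream limited by message count.
// It is not JetStream code; it only mimics the two discard policies.
class BoundedStream {
  constructor(maxMsgs, discard) {
    this.maxMsgs = maxMsgs;
    this.discard = discard; // 'old' or 'new'
    this.messages = [];
  }

  // Returns true if the message was stored, false if it was rejected.
  publish(msg) {
    if (this.messages.length >= this.maxMsgs) {
      if (this.discard === 'new') return false; // reject the incoming message
      this.messages.shift();                    // drop the oldest message
    }
    this.messages.push(msg);
    return true;
  }
}

const rolling = new BoundedStream(3, 'old');
const strict = new BoundedStream(3, 'new');
for (const n of [1, 2, 3, 4, 5]) {
  rolling.publish(n);
  strict.publish(n);
}
console.log(rolling.messages); // [ 3, 4, 5 ]
console.log(strict.messages);  // [ 1, 2, 3 ]
```

With the 'old' policy the stream always holds the most recent window; with 'new' it keeps the earliest messages and the publisher has to deal with the rejection.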

Replication for Fault Tolerance

The Replicas parameter controls how many cluster nodes store copies of the stream. A replica count of 3 means three nodes maintain synchronized copies, allowing the stream to survive the loss of one node. Replication uses the Raft consensus protocol, which requires a quorum (a majority) of replicas to acknowledge writes before they’re considered committed, so tolerating two simultaneous failures takes five replicas.

Single-replica streams (the default) offer no redundancy. If that node fails, you lose access to the stream until it recovers. Production deployments should use Replicas: 3 for critical streams, balancing durability against the overhead of cross-node synchronization.

Stream Templates for Dynamic Subjects

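For multi-tenant systems or partitioned data, early JetStream releases offered stream templates, which created a stream automatically the first time a matching subject appeared. Templates have since been deprecated, and the nats.go JetStreamContext does not expose an AddStreamTemplate call, so treat them as a historical feature. You get the same per-tenant isolation by creating each tenant’s stream explicitly, for example during tenant onboarding:

```go
// ensureTenantStream creates the tenant's stream if it does not exist yet.
// Re-adding a stream with an identical configuration is expected to succeed.
func ensureTenantStream(js nats.JetStreamContext, tenantID string) error {
	_, err := js.AddStream(&nats.StreamConfig{
		Name:     "TENANT_" + tenantID,
		Subjects: []string{"tenant." + tenantID + ".events"},
		Storage:  nats.FileStorage,
		MaxAge:   7 * 24 * time.Hour,
		Replicas: 1,
	})
	return err
}
```

Calling ensureTenantStream(js, "acme-corp") creates a TENANT_acme-corp stream bound to tenant.acme-corp.events, isolating data per tenant while maintaining consistent retention policies. Validate tenant IDs and cap how many streams you are willing to create (100, say), which prevents resource exhaustion if tenant IDs aren’t validated upstream.

Per-tenant streams handle dynamic subject hierarchies cleanly, but they introduce management complexity. Each stream consumes cluster resources, and monitoring hundreds of them requires tooling beyond what a handful of manually created streams demands. When strict isolation isn’t required, a single stream bound to a wildcard subject such as tenant.*.events is simpler to operate.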

With streams configured to capture your event history, the next challenge is getting that data back out reliably. Consumers provide durable subscriptions with delivery guarantees that ensure no message gets lost in processing.

Consumers and Delivery Guarantees: At-Least-Once That Actually Works

Streams capture your events, but consumers process them. JetStream consumers come in two flavors—push and pull—each optimized for different workload patterns. Unlike traditional message brokers where choosing the wrong consumer type means refactoring weeks later, JetStream makes the distinction clear from the start.

Push vs Pull: Choose Based on Control Flow

Push consumers have JetStream deliver messages to your application. They work well for low-latency processing where you want messages flowing continuously. The server manages backpressure through configurable flow control, making push ideal for stateless workers that process messages independently. Think webhooks or API callbacks: the server initiates delivery, and acknowledgments still ensure nothing is dropped silently.

Pull consumers let your application request batches when ready. Use pull consumers when you need precise control over processing rates or when your workers have variable capacity. Database batch inserts, API rate-limited operations, or computationally expensive tasks all benefit from pull semantics. The consumer dictates pace, not the server.

```javascript
import { connect, AckPolicy } from 'nats';

const nc = await connect({ servers: 'nats://localhost:4222' });
const js = nc.jetstream();

// consume() keeps messages flowing to the callback, giving push-style delivery.
// (In recent nats.js releases the js.consumers API is built on pull consumers.)
const consumer = await js.consumers.get('orders-stream', 'order-processor');
const subscription = await consumer.consume({
  callback: async (msg) => {
    const order = msg.json(); // msg.data is a Uint8Array, so decode with json()
    await processOrder(order); // processOrder is your application's handler
    msg.ack();
  }
});
```

Pull consumers give you batch control:

```javascript
const consumer = await js.consumers.get('orders-stream', 'batch-processor');

while (true) {
  const messages = await consumer.fetch({ max_messages: 50, expires: 5000 });

  for await (const msg of messages) {
    try {
      const order = msg.json();
      await processOrder(order);
      msg.ack();
    } catch (err) {
      msg.nak(2000); // Negative ack, retry after 2s
    }
  }
}
```

The expires parameter on fetch operations prevents indefinite blocking. If fewer than max_messages arrive within the timeout window, you receive what’s available. This lets you maintain responsive batch processing even during low-traffic periods.

Acknowledgment Patterns That Prevent Data Loss

JetStream provides four acknowledgment operations: ack(), nak(), working(), and term(). Explicit acks confirm successful processing. Call nak() when processing fails, and the message becomes eligible for redelivery. Use working() to extend processing time for long-running operations without triggering timeouts. Call term() when a message can never be processed, which tells the server to stop redelivering it.

The acknowledgment window starts when JetStream delivers a message. Without an ack within AckWait duration, JetStream considers the message unprocessed and redelivers it. This guarantees at-least-once delivery but requires idempotent processing logic. Hash message IDs, check database constraints, or use external deduplication stores to handle duplicate processing safely.
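As a minimal sketch of the idempotency requirement, the wrapper below skips any message ID it has already handled. The names makeIdempotent and shipOrder are hypothetical, and the in-memory Set is an assumption standing in for a durable store (such as a database unique constraint) that real workers would need to share.

```javascript
// Wraps a handler so that each message ID is processed at most once.
// The Set stands in for a durable store such as a database unique constraint.
function makeIdempotent(handler) {
  const seen = new Set();
  return (id, payload) => {
    if (seen.has(id)) return false; // duplicate delivery: skip side effects
    handler(payload);
    seen.add(id);                   // record only after the handler succeeds
    return true;
  };
}

const shipped = [];
const shipOrder = makeIdempotent((order) => shipped.push(order.orderId));

shipOrder('msg-1', { orderId: 'A100' }); // first delivery: processed
shipOrder('msg-1', { orderId: 'A100' }); // redelivery: ignored
console.log(shipped); // [ 'A100' ]
```

Recording the ID only after the handler returns means a handler that throws leaves the message eligible for another attempt, which matches at-least-once delivery.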

```javascript
const consumer = await js.consumers.get('events-stream', 'processor');

for await (const msg of await consumer.fetch({ max_messages: 10 })) {
  try {
    const event = msg.json();

    if (event.type === 'complex') {
      // Long processing: send work-in-progress signals to reset the ack timer
      const interval = setInterval(() => msg.working(), 15000);
      try {
        await complexProcessing(event);
      } finally {
        clearInterval(interval); // stop signalling even if processing throws
      }
    }

    msg.ack();
  } catch (err) {
    if (err.retryable) {
      msg.nak(5000); // Retry after 5 seconds
    } else {
      msg.term(); // Terminal error, don't redeliver
    }
  }
}
```

The AckWait configuration controls how long JetStream waits for acknowledgment before considering a message unacknowledged. Set this based on your typical processing time plus buffer. Too short and you get spurious redeliveries. Too long and failures stall your pipeline. Profile your processing times in production and add 50-100% margin for network variance and occasional slowdowns.
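The margin rule can be turned into a small calculation. This is a sketch under stated assumptions: ackWaitNanos is a hypothetical helper, and using the 99th-percentile processing time as the baseline is one reasonable choice rather than a JetStream requirement.

```javascript
// Converts an observed processing time into an ack_wait value.
// p99Millis: measured 99th-percentile processing time in milliseconds.
// margin: extra headroom as a fraction (0.5 = 50%, 1.0 = 100%).
function ackWaitNanos(p99Millis, margin) {
  const waitMillis = Math.ceil(p99Millis * (1 + margin));
  return waitMillis * 1_000_000; // consumer durations are given in nanoseconds
}

console.log(ackWaitNanos(20_000, 0.5)); // 30000000000 (30 seconds)
```

A worker whose slowest realistic job takes 20 seconds would therefore get a 30-second ack_wait with a 50% margin.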

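```javascript
// AckPolicy and DeliverPolicy are imported from 'nats'.
// Consumers are created through the JetStream manager.
const jsm = await nc.jetstreamManager();
await jsm.consumers.add('orders-stream', {
  durable_name: 'order-processor',
  ack_policy: AckPolicy.Explicit,
  ack_wait: 30_000_000_000, // 30 seconds in nanoseconds
  max_deliver: 5,
  deliver_policy: DeliverPolicy.All
});
```

Max Delivery and Dead Letter Handling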

Set max_deliver to prevent infinite retry loops. When a message exceeds this limit, JetStream stops redelivering it to that consumer. The message stays in the stream until retention removes it, but without explicit handling nothing in your application notices that it was abandoned. Implement dead letter handling by checking msg.info.redeliveryCount, which counts deliveries starting at 1:

```javascript
const MAX_DELIVER = 5; // keep in sync with the consumer's max_deliver

for await (const msg of await consumer.fetch({ max_messages: 20 })) {
  const info = msg.info;

  if (info.redeliveryCount >= MAX_DELIVER) {
    // Final attempt: log to the dead letter queue instead of retrying.
    // deadLetterQueue is an application-provided publisher.
    await deadLetterQueue.publish({
      original: msg.data,
      error: 'Max retries exceeded',
      stream: info.stream,
      sequence: info.streamSequence
    });
    msg.term();
    continue;
  }

  try {
    await processMessage(msg);
    msg.ack();
  } catch (err) {
    msg.nak(Math.pow(2, info.redeliveryCount) * 1000); // Exponential backoff
  }
}
```

Exponential backoff prevents thundering herd problems when downstream dependencies fail. Transient errors (network hiccups, database connection pool exhaustion) often resolve within seconds. Immediate retries amplify the problem. Backing off gives systems time to recover while keeping messages in flight.
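The backoff calculation is worth isolating so it can be capped and tested. The sketch below is a variation on the inline formula, not a JetStream API: backoffMillis and withJitter are hypothetical helpers, and the 30-second cap and the half-range jitter are assumptions you would tune for your workload.

```javascript
// Capped exponential backoff keyed on the delivery count (1 = first delivery).
function backoffMillis(deliveryCount, baseMillis = 1000, capMillis = 30_000) {
  const delay = baseMillis * 2 ** Math.max(0, deliveryCount - 1);
  return Math.min(delay, capMillis);
}

// Optional jitter spreads retries out so workers do not retry in lockstep.
// The result falls between half the delay and the full delay.
function withJitter(delayMillis, random = Math.random) {
  return Math.floor(delayMillis / 2 + random() * (delayMillis / 2));
}

console.log([1, 2, 3, 4, 5, 6].map((n) => backoffMillis(n)));
// [ 1000, 2000, 4000, 8000, 16000, 30000 ]
```

In a consumer loop the value would be passed to nak, for example msg.nak(withJitter(backoffMillis(count))), where count is the delivery count reported for the message.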

Durable vs Ephemeral: Persistence Semantics

Durable consumers maintain their state—stream position, unacknowledged messages—across restarts. Name them explicitly and they survive application crashes. Multiple instances of your application can share a durable consumer, forming a worker pool that distributes messages across instances. JetStream ensures each message goes to exactly one worker.

Ephemeral consumers have no durable name and exist only while they’re in use. They’re perfect for temporary monitoring or one-off processing tasks. Once the client goes away and the consumer has been idle for its inactive threshold, the server deletes the consumer and its state. No cleanup, no lingering resources.

```javascript
// Consumers are created through the JetStream manager
const jsm = await nc.jetstreamManager();

// Durable: survives restarts
await jsm.consumers.add('events-stream', {
  durable_name: 'analytics-processor',
  ack_policy: AckPolicy.Explicit
});

// Ephemeral: no durable name, removed by the server once it goes idle
await jsm.consumers.add('events-stream', {
  ack_policy: AckPolicy.Explicit,
  inactive_threshold: 30_000_000_000 // 30 seconds in nanoseconds
});
```

The inactive_threshold on ephemeral consumers sets a timeout. If no client fetches messages within this window, JetStream deletes the consumer automatically. This prevents resource leaks from crashed monitoring scripts or abandoned debug sessions.

💡 Pro Tip: Use durable consumers for production workloads where delivery guarantees matter. Reserve ephemeral consumers for debugging, monitoring dashboards, or temporary data exports.

With consumers handling reliable delivery and acknowledgment, your application code focuses on business logic rather than retry mechanics. Next, we’ll examine how stream replication ensures these guarantees survive datacenter failures.

Stream Replication and Mirroring for High Availability

JetStream streams default to a single replica. File storage survives a process restart, but if the node hosting the stream is lost, the stream becomes unavailable and its data may be gone with it. For production systems, you need replication across cluster nodes to survive hardware failures, network partitions, and planned maintenance windows.

Configuring Stream Replicas

Stream replication uses the Raft consensus algorithm to maintain copies across cluster nodes. Setting Replicas to 3 lets your stream survive the failure of one node while keeping a quorum of two:

```go
package main

import (
	"log"
	"time"

	"github.com/nats-io/nats.go"
)

func main() {
	nc, err := nats.Connect("nats://localhost:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := nc.JetStream()
	if err != nil {
		log.Fatal(err)
	}

	// Create replicated stream across 3 nodes
	_, err = js.AddStream(&nats.StreamConfig{
		Name:     "orders",
		Subjects: []string{"orders.*"},
		Replicas: 3,
		Storage:  nats.FileStorage,
		MaxAge:   24 * time.Hour,
	})
	if err != nil {
		log.Fatal(err)
	}

	// Check stream status including replica health
	info, err := js.StreamInfo("orders")
	if err != nil {
		log.Fatal(err)
	}
	log.Printf("Stream replicas: %d", info.Config.Replicas)
	log.Printf("Cluster: %+v", info.Cluster)
}
```

Each replica acknowledges writes independently. JetStream won’t confirm a publish until the majority (quorum) of replicas have persisted the message. With 3 replicas, you need 2 acknowledgments, which provides durability even if one node is down.

The Raft leader handles all writes, automatically replicating to followers. If the leader fails, the remaining nodes elect a new leader within seconds, typically 2-5 seconds depending on network latency. During this election window, publishers receive temporary errors until the new leader is established. Publishers that retry failed publishes ride through this window without losing messages.

Placement constraints control replica distribution. Raft itself does not choose where replicas live; JetStream’s placement logic does, spreading them across servers in the cluster. To keep replicas in different availability zones, tag your servers by zone and use placement tags or the server’s unique_tag setting. You can verify placement using the cluster information from StreamInfo, which shows which nodes currently host replicas and their synchronization status.

💡 Pro Tip: Odd replica counts (1, 3, 5) are the practical choices for Raft quorum. Even numbers like 2 or 4 tolerate no more failures than the next lower odd count, so they add overhead without improving availability.

Handling Split-Brain Scenarios

Network partitions create split-brain risks where cluster segments operate independently. Raft’s quorum requirement prevents this: a partition without a majority cannot accept writes. If you configure 3 replicas and the network splits into 2+1 nodes, only the partition with 2 nodes continues accepting writes. The isolated node cannot commit anything and catches up from the leader once it reconnects.
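The quorum arithmetic behind these rules fits in a few lines. This is a generic majority-quorum sketch in plain JavaScript, with hypothetical function names; it does not call any NATS API.

```javascript
// Majority quorum for a Raft group of `replicas` members.
function quorum(replicas) {
  return Math.floor(replicas / 2) + 1;
}

// How many members can fail while the group can still commit writes.
function tolerableFailures(replicas) {
  return replicas - quorum(replicas);
}

// A partition can accept writes only if it holds a majority of the replicas.
function canAcceptWrites(partitionSize, replicas) {
  return partitionSize >= quorum(replicas);
}

for (const r of [1, 2, 3, 4, 5]) {
  console.log(`R=${r}: quorum=${quorum(r)}, tolerates ${tolerableFailures(r)} failure(s)`);
}
console.log(canAcceptWrites(2, 3)); // true: the 2-node side keeps a majority
console.log(canAcceptWrites(1, 3)); // false: the isolated node cannot commit
```

The loop shows why even replica counts buy nothing: 4 replicas need a quorum of 3 and tolerate one failure, the same as 3 replicas.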

Monitoring info.Cluster.Leader tells you which node currently leads. If this field is empty, the stream is unavailable due to lost quorum. Set alerts when streams lack leaders for more than 10 seconds—this indicates either network partitions or cluster health issues requiring immediate intervention.

Mirror Streams for Disaster Recovery

Mirrors create read-only copies of a stream, typically in a different geographic region or cluster. Unlike replicas which participate in consensus, mirrors asynchronously replicate from a source stream:

```go
package main

import (
	"log"

	"github.com/nats-io/nats.go"
)

func createMirror() {
	// Connect to DR cluster
	nc, err := nats.Connect("nats://dr-cluster:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := nc.JetStream()
	if err != nil {
		log.Fatal(err)
	}

	// Mirror the primary orders stream
	_, err = js.AddStream(&nats.StreamConfig{
		Name:     "orders-mirror",
		Replicas: 3,
		Storage:  nats.FileStorage,
		Mirror: &nats.StreamSource{
			Name: "orders",
			External: &nats.ExternalStream{
				APIPrefix: "$JS.us-east-1.API",
			},
		},
	})
	if err != nil {
		log.Fatal(err)
	}

	// Monitor replication lag
	info, err := js.StreamInfo("orders-mirror")
	if err != nil {
		log.Fatal(err)
	}
	if info.Mirror != nil {
		// Mirror.Lag is already the number of messages the mirror is behind
		log.Printf("Replication lag: %d messages", info.Mirror.Lag)
	}
}
```

The External.APIPrefix connects to the source stream in another cluster. Mirror.Lag reports how many messages the mirror is behind its source, and monitors should alert when it grows: if the mirror falls behind by thousands of messages, your DR strategy degrades.

Mirror streams should themselves be replicated (note Replicas: 3 in the mirror config). This ensures the DR copy survives node failures in the secondary cluster. Without replication, your mirror becomes a single point of failure.

Replication lag depends on network latency between clusters and message throughput. Cross-region mirrors typically lag by 100-500ms under normal conditions. Sustained lag exceeding 5 seconds indicates network congestion, bandwidth saturation, or the mirror cluster struggling to keep pace with write volume.
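One way to alert only on sustained lag, rather than on a single spike, is to require every sample in a trailing window to exceed the threshold. The sketch below assumes a hypothetical sample shape ({ atMillis, lagMillis }) produced by your own monitoring; the 30-second window is an assumption, and how you measure lag in time units is up to your tooling.

```javascript
// Returns true when every sample in the trailing window exceeds the threshold.
// samples: [{ atMillis, lagMillis }] ordered by time (hypothetical shape).
function lagIsSustained(samples, thresholdMillis = 5000, windowMillis = 30_000) {
  if (samples.length === 0) return false;
  const latest = samples[samples.length - 1].atMillis;
  const oldest = samples[0].atMillis;
  if (latest - oldest < windowMillis) return false; // not enough history yet

  return samples
    .filter((s) => latest - s.atMillis <= windowMillis)
    .every((s) => s.lagMillis > thresholdMillis);
}

const samples = [0, 10_000, 20_000, 30_000].map((atMillis) => ({ atMillis, lagMillis: 6000 }));
console.log(lagIsSustained(samples)); // true
```

A single healthy sample inside the window resets the alert, which keeps brief cross-region hiccups from paging anyone.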

Source Streams for Multi-Region Aggregation

Sources aggregate multiple streams into one, useful for collecting events from regional clusters:

```go
package main

import (
	"log"

	"github.com/nats-io/nats.go"
)

func createAggregateStream() {
	nc, err := nats.Connect("nats://global-cluster:4222")
	if err != nil {
		log.Fatal(err)
	}
	defer nc.Close()

	js, err := nc.JetStream()
	if err != nil {
		log.Fatal(err)
	}

	// Aggregate the regional orders streams into one global stream
	_, err = js.AddStream(&nats.StreamConfig{
		Name:    "orders-global",
		Storage: nats.FileStorage,
		Sources: []*nats.StreamSource{
			{
				Name: "orders",
				External: &nats.ExternalStream{
					APIPrefix: "$JS.us-east-1.API",
				},
			},
			{
				Name: "orders",
				External: &nats.ExternalStream{
					APIPrefix: "$JS.eu-west-1.API",
				},
			},
		},
	})
	if err != nil {
		log.Fatal(err)
	}
}
```

The global stream now contains messages from both regions, enabling centralized analytics without duplicating publishers. Unlike mirrors which replicate a single source, source streams merge multiple origins, preserving message ordering within each source but interleaving between sources based on arrival time.

Source streams preserve the original message sequence numbers in metadata, allowing you to trace messages back to their originating region. This is critical for debugging distributed systems where understanding message provenance matters.
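The ordering behavior can be modeled without a server. In this sketch the event shape ({ region, seq, arrivedAt }) and the mergeSources function are hypothetical; it assumes each regional stream's events arrive in their original order and only illustrates how an aggregate interleaves sources while keeping each message's origin.

```javascript
// Merges events from several regional streams into one ordered log.
// Each input event: { region, seq, arrivedAt } (hypothetical shape).
function mergeSources(...sources) {
  return sources
    .flat()
    // Array.prototype.sort is stable, so ties keep their input order
    .sort((a, b) => a.arrivedAt - b.arrivedAt)
    .map((event, i) => ({
      globalSeq: i + 1,                                 // sequence in the aggregate
      origin: { region: event.region, seq: event.seq }, // provenance
    }));
}

const usEast = [
  { region: 'us-east-1', seq: 1, arrivedAt: 10 },
  { region: 'us-east-1', seq: 2, arrivedAt: 40 },
];
const euWest = [
  { region: 'eu-west-1', seq: 1, arrivedAt: 25 },
  { region: 'eu-west-1', seq: 2, arrivedAt: 30 },
];

for (const m of mergeSources(usEast, euWest)) {
  console.log(m.globalSeq, m.origin.region, m.origin.seq);
}
```

Each region's messages keep their relative order in the merged log, while the origin field plays the role of the provenance metadata described above.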

Beyond replication, you need efficient local state management. JetStream’s built-in Key-Value and Object stores provide this without external dependencies.

Key-Value and Object Store: Built-in State Management

JetStream includes KV and Object stores as first-class primitives, both built directly on top of streams. This eliminates the need for an external database in many scenarios—you’re already running JetStream for your event streams, so why add Redis or S3 to store application state?

The KV store provides a familiar key-value interface with automatic versioning and the ability to watch for changes. Under the hood, each bucket is a stream where keys map to subjects. When you put a value, JetStream appends it to the stream; when you get a value, it retrieves the latest message for that key. This stream-based foundation means you inherit JetStream’s guarantees: replication, persistence, and ordered, revisioned writes come standard.

```javascript
import { connect } from 'nats';

const nc = await connect({ servers: 'nats://localhost:4222' });
const js = nc.jetstream();

// Create a KV bucket (backed by a stream)
const kv = await js.views.kv('service-config', {
  history: 10,  // Keep last 10 revisions per key
  ttl: 3600000, // Keys expire after 1 hour
});

// Put and get values
await kv.put('feature.rate_limit', '1000');
await kv.put('feature.enabled', 'true');

const entry = await kv.get('feature.rate_limit');
console.log(`Value: ${entry.string()}, Revision: ${entry.revision}`);

// Watch for changes to react to configuration updates
const watcher = await kv.watch({ key: 'feature.*' });
(async () => {
  for await (const entry of watcher) {
    console.log(`${entry.key} changed to ${entry.string()}`);
    // Reload application config, adjust rate limiters, etc.
  }
})();
```

The watch API is particularly powerful for service coordination. Instead of polling a database or implementing a custom notification system, your services subscribe to key changes and react immediately when configuration or feature flags update. This pattern is remarkably useful for distributed configuration management: update a key in one place, and every service watching that key receives the change within milliseconds. No cache invalidation logic, no polling intervals, no consistency issues.

Because KV buckets are streams, you also get history for free. Setting history: 10 means JetStream retains the last 10 values for each key, allowing you to retrieve previous revisions or implement audit trails without additional infrastructure. You can also purge individual keys, and the bucket-level ttl expires stale entries, to manage storage as your state evolves.
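To see how a key-value view can sit on top of an append-only log, here is a toy model in plain JavaScript. MiniKv is a hypothetical class, not the nats.js KV client; it only illustrates revisions increasing across the bucket and a per-key history limit trimming older values.

```javascript
// Toy model of a KV bucket layered on an append-only log.
class MiniKv {
  constructor(history) {
    this.history = history; // revisions retained per key
    this.log = [];          // entries shaped as { key, value, revision }
    this.revision = 0;
  }

  put(key, value) {
    this.revision += 1;
    this.log.push({ key, value, revision: this.revision });
    // Enforce the per-key history limit by dropping that key's oldest entry
    const entries = this.log.filter((e) => e.key === key);
    if (entries.length > this.history) {
      this.log.splice(this.log.indexOf(entries[0]), 1);
    }
    return this.revision;
  }

  // Latest entry for a key, or null if the key was never written
  get(key) {
    const entries = this.revisions(key);
    return entries.length ? entries[entries.length - 1] : null;
  }

  revisions(key) {
    return this.log.filter((e) => e.key === key);
  }
}

const demo = new MiniKv(2);
demo.put('feature.rate_limit', '500');
demo.put('feature.enabled', 'true');
demo.put('feature.rate_limit', '750');
demo.put('feature.rate_limit', '1000');

console.log(demo.get('feature.rate_limit').value);                     // 1000
console.log(demo.revisions('feature.rate_limit').map((e) => e.value)); // [ '750', '1000' ]
```

With a history of 2, the third write to feature.rate_limit pushes the original value out of the log while the latest revision stays readable.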

For larger payloads, JetStream provides an Object store that automatically chunks data into manageable pieces. This is useful for storing artifacts, uploaded files, or serialized datasets without worrying about message size limits. While NATS messages are typically capped at 1MB by default, Object store handles multi-gigabyte files transparently.

```javascript
import { connect, StorageType } from 'nats';
import { readFile, writeFile } from 'fs/promises';

const nc = await connect({ servers: 'nats://localhost:4222' });
const js = nc.jetstream();

const obj = await js.views.os('artifacts', {
  storage: StorageType.File, // Use file-based storage for durability
  replicas: 3,               // Replicate across cluster
});

// Store a large binary file.
// putBlob accepts a Uint8Array; put() expects a ReadableStream instead.
const modelData = await readFile('./model-v2.onnx');
await obj.putBlob({ name: 'models/recommendation-v2' }, modelData);

// Retrieve it later (getBlob resolves to a Uint8Array, or null if missing)
const data = await obj.getBlob('models/recommendation-v2');
if (data) {
  await writeFile('./downloaded-model.onnx', data);
}

// List objects with metadata
const list = await obj.list();
for (const info of list) {
  console.log(`${info.name}: ${info.size} bytes, ${info.chunks} chunks`);
}
```

Objects are chunked at 128KB by default, stored as individual messages in a stream. Because every chunk is its own message, a failed transfer affects only the chunks in flight, and in principle a client can restart from the last chunk it received, though it is worth checking whether your client library resumes automatically. This makes Object store reliable even over flaky network connections or when transferring large files between distributed nodes.
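The chunking itself is simple to picture. The following sketch splits a byte array into 128 KiB pieces and puts them back together; splitIntoChunks and reassemble are hypothetical helpers written for illustration, not functions from the nats.js client.

```javascript
const CHUNK_SIZE = 128 * 1024; // 128 KiB, the default mentioned above

// Splits a byte array into chunks of at most `size` bytes.
function splitIntoChunks(bytes, size = CHUNK_SIZE) {
  const chunks = [];
  for (let offset = 0; offset < bytes.length; offset += size) {
    chunks.push(bytes.subarray(offset, offset + size));
  }
  return chunks;
}

// Concatenates chunks back into a single byte array.
function reassemble(chunks) {
  const total = chunks.reduce((sum, c) => sum + c.length, 0);
  const out = new Uint8Array(total);
  let offset = 0;
  for (const c of chunks) {
    out.set(c, offset);
    offset += c.length;
  }
  return out;
}

const payload = new Uint8Array(300 * 1024).fill(7);
const pieces = splitIntoChunks(payload);
console.log(pieces.map((c) => c.length)); // [ 131072, 131072, 45056 ]
```

A 300 KiB object therefore becomes three stream messages, and only the last one is smaller than the chunk size.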

The chunking strategy also means you can delete or replace objects without rewriting the entire file. JetStream tracks metadata for each object—size, chunk count, modification time—and the client library handles reassembly automatically. For teams building early-stage products or internal tooling, this is often sufficient to replace S3 for build artifacts, uploaded assets, or ML model storage.

When to use KV/Object store vs external databases: Use KV for ephemeral or frequently-changing state where history matters—feature flags, service discovery, distributed locks, or cached computations. The built-in versioning and watch capabilities make it ideal for coordination patterns. Use Object store for blobs that would otherwise go to S3—build artifacts, ML models, uploaded user files in early-stage products.

However, if you need complex queries, transactions across multiple keys, or sub-millisecond latency at extreme scale, a dedicated database remains the better choice. JetStream’s state stores excel at simplicity and operational consistency, not query flexibility. Think of them as a way to consolidate infrastructure when your state needs align with what streams naturally provide: ordered writes, versioned history, and push-based reactivity.

With streams, consumers, and state primitives covered, the final consideration is keeping everything running smoothly in production.

Production Patterns: Monitoring, Limits, and Operational Considerations

Running JetStream in production requires visibility into stream health and proactive safeguards against resource exhaustion. Unlike traditional brokers that require separate monitoring stacks, JetStream exposes metrics through NATS’s built-in monitoring endpoint and integrates cleanly with Prometheus.

Metrics That Matter

The NATS server exposes JetStream metrics at http://localhost:8222/jsz once the HTTP monitoring port is enabled (8222 is the conventional port). Focus on these critical indicators:

Stream-level metrics reveal data accumulation patterns. state.messages and state.bytes track storage consumption against configured limits; steady growth toward those limits means data is arriving faster than retention removes it. Monitor state.num_subjects for streams using subject-based retention to detect unexpected cardinality growth.

Consumer-level metrics expose delivery health. num_pending at the consumer level shows lag for that specific consumer. delivered versus ack_floor indicates processing velocity—a widening gap signals backpressure. num_redelivered tracks redelivery attempts, which spike when consumers crash or fail to acknowledge messages within the configured ack_wait timeout.

For Prometheus integration, use the official NATS Prometheus exporter. Set up alerts on consumer lag thresholds (typically when num_pending exceeds 10,000 messages) and storage utilization (alert at 70% of configured max_bytes).
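The two alert rules above can be expressed as a small check that runs against whatever metrics you scrape. This is a sketch with assumed input shapes: evaluateAlerts is a hypothetical function, and the consumer and stream objects only need the num_pending and bytes fields shown here.

```javascript
// Evaluates the two alert rules described above against sampled metrics.
// consumer: { num_pending }, stream: { bytes }, maxBytes: configured max_bytes.
function evaluateAlerts(consumer, stream, maxBytes, opts = {}) {
  const { lagThreshold = 10_000, storageRatio = 0.7 } = opts;
  const alerts = [];

  if (consumer.num_pending > lagThreshold) {
    alerts.push(`consumer lag: ${consumer.num_pending} pending messages`);
  }
  // A non-positive max_bytes means "unlimited", so there is no ratio to compute
  if (maxBytes > 0 && stream.bytes / maxBytes >= storageRatio) {
    const pct = Math.round((stream.bytes / maxBytes) * 100);
    alerts.push(`storage: ${pct}% of max_bytes used`);
  }
  return alerts;
}

console.log(evaluateAlerts({ num_pending: 12_500 }, { bytes: 8e9 }, 10e9));
```

In a Prometheus setup the same thresholds would normally live in alerting rules; keeping them in one function like this is mainly useful for ad hoc scripts and tests.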

Preventing Resource Exhaustion

Unbounded streams consume disk space until your cluster fails. Set explicit limits when creating streams:

- max_msgs caps total message count.

- max_bytes limits storage consumption (set to 80% of available disk to leave headroom).

- max_age enforces time-based retention; combine with size limits for defense in depth.

- max_msg_size prevents individual large messages from consuming disproportionate resources.

When limits are hit, the discard policy determines behavior. DiscardOld (default) removes the oldest messages, maintaining a sliding window of recent data. DiscardNew rejects incoming messages when full—use this for critical audit logs where losing old data is unacceptable.

Handling Slow Consumers

When consumers fall behind, a push consumer configured with flow_control (together with an idle heartbeat) has the server send flow-control messages that pause delivery until the client catches up. Configure max_ack_pending on push consumers to limit unacknowledged message buildup, and set it to match your consumer’s processing capacity.

For pull consumers, backpressure is inherent in the pull model: consumers request batches only when ready to process them. Use max_batch and max_waiting parameters to tune throughput without overwhelming downstream systems.

💡 Pro Tip: Use consumer rate_limit_bps to throttle delivery speed for consumers that integrate with rate-limited external APIs. This prevents cascade failures when downstream dependencies slow down.

With these operational guardrails in place, your JetStream deployment maintains predictable performance even under traffic spikes and consumer failures. The monitoring foundation you’ve built provides the observability needed to tune these parameters based on real production behavior.

Key Takeaways

- Start with a replicated stream (R=3) and pull consumers for most use cases—you can optimize later

- Use explicit acknowledgments with max delivery limits and monitor your dead letter patterns

- Leverage JetStream’s KV store for coordination state before reaching for Redis or etcd

- Set storage limits early and monitor consumer lag—unbounded streams will fill your disks