Building Developer Tools with GPT: From API Wrapper to Production Platform

You’ve called the OpenAI API, gotten a response back, and shown it in your UI. Congratulations—you’ve built a chatbot. But when you try to turn that proof-of-concept into a developer tool that engineers actually use daily, you hit a wall: context windows fill up, costs spiral out of control, and responses become unpredictable. The gap between “it works in the demo” and “it works in production” is where most AI-powered developer tools die.

The problem isn’t the API itself. OpenAI’s interfaces are remarkably straightforward—send a prompt, get a completion. The challenge emerges when you try to maintain coherent multi-turn conversations, manage context across sessions, handle rate limits gracefully, and keep costs under control while serving real users. Suddenly you’re debugging why your code review tool forgot the project structure halfway through analysis, or why your documentation generator burned through $200 in tokens overnight.

Most teams respond by bolting on fixes: a Redis cache here, some prompt truncation there, maybe a cost alert in Datadog. These patches work until they don’t. The real issue is architectural. That first API integration was never designed to scale beyond a demo. It lacks the foundational patterns that production AI tools require: stateful context management, intelligent caching strategies, fallback mechanisms, and cost controls that don’t degrade user experience.

The path from wrapper to platform follows a predictable progression. Understanding these maturity levels—and more importantly, the specific technical decisions that separate them—determines whether your AI developer tool becomes indispensable or gets abandoned after the first billing cycle.

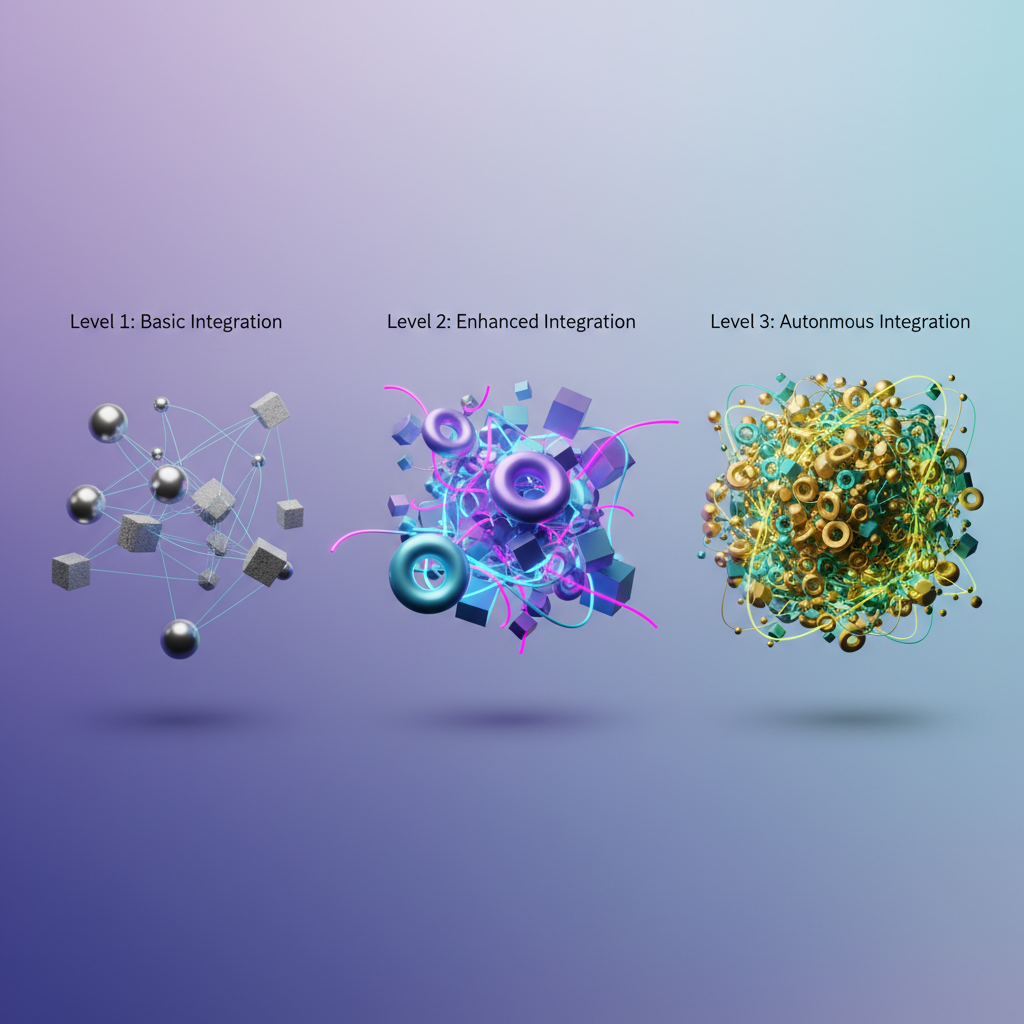

The Three Maturity Levels of GPT Integration

Most engineering teams building AI-powered developer tools follow a predictable architectural progression. Understanding where you are on this maturity curve determines whether your integration thrives in production or becomes a maintenance nightmare.

Level 1: The Direct API Wrapper

This is where every integration starts. You make synchronous calls to the OpenAI API, send a prompt, get a response, display it to users. The entire codebase is maybe 200 lines. It works perfectly in your demo, impresses stakeholders, and ships in two sprints.

Then production happens. Users paste 50,000-line log files that blow past context limits. Rate limits trigger during peak hours. A single malformed response crashes your parser. Your API costs spike to $3,000/month because you’re sending the entire Git repository with every request. The wrapper that took two days to build now demands two engineers full-time just to keep it stable.

Level 1 integrations are excellent for MVPs and proof-of-concepts. They fail in production because they treat GPT like a deterministic function call instead of an external service with failure modes, usage costs, and context constraints.

Level 2: Stateful Integration with Context Management

Teams that survive Level 1 build session management, conversation history, and basic caching. You implement token counting before API calls, chunk large documents, and maintain conversational context across requests. You add retry logic and graceful degradation when the API is unavailable.

This is where most teams plateau. Your system handles production load but feels brittle. Every new feature requires careful consideration of context window limits. You spend more time debugging edge cases in context assembly than building actual features. Costs are under control but not optimized. You have logging but no real insight into why certain prompts succeed while others fail.

The gap between Level 2 and Level 3 is not technical complexity but architectural philosophy. Level 2 treats GPT integration as a feature. Level 3 treats it as infrastructure.

Level 3: Production Platform with Observability

A production-grade GPT platform implements cost controls, comprehensive observability, and fallback strategies from day one. You instrument every API call with structured logging that captures token usage, latency, and prompt effectiveness. You run A/B tests on prompt variations with automated quality scoring. Your caching strategy is deliberate, not opportunistic. When GPT-4 is unavailable, your system gracefully degrades to GPT-3.5 or cached responses, not error pages.

You have dashboards showing cost per user session, success rates by prompt type, and P95 latency. You can answer “why did this query cost $2.50?” and “why did response quality drop 15% last Tuesday?” When product asks for a new feature, your answer includes expected token usage and monthly cost projections.

The architectural patterns that separate Level 3 from Level 2 are not exotic. They are the same patterns you use for databases, caches, and external APIs. The difference is applying them systematically instead of reactively.

Let’s start by building a Level 1 integration the right way—with the structural foundation that makes evolution to Level 2 and 3 straightforward rather than a rewrite.

Level 1: Building Your First Integration (The Right Way)

Most GPT integrations start as a synchronous API call in a request handler. This works until the first timeout brings down your production server. Production-grade LLM integration requires async patterns, comprehensive error handling, and observability from the first line of code.

Async-First Architecture

LLM API calls take 2-15 seconds. Blocking your application thread during this time creates cascading failures under load. Use async/await patterns to maintain responsiveness:

import asyncioimport httpxfrom typing import Optional

class LLMClient: def __init__(self, api_key: str, timeout: int = 30): self.api_key = api_key self.base_url = "https://api.openai.com/v1" self.timeout = timeout self.client = httpx.AsyncClient(timeout=timeout)

async def generate( self, prompt: str, model: str = "gpt-4", max_tokens: int = 1000 ) -> Optional[str]: headers = { "Authorization": f"Bearer {self.api_key}", "Content-Type": "application/json" }

payload = { "model": model, "messages": [{"role": "user", "content": prompt}], "max_tokens": max_tokens }

try: response = await self.client.post( f"{self.base_url}/chat/completions", json=payload, headers=headers ) response.raise_for_status() return response.json()["choices"][0]["message"]["content"]

except httpx.TimeoutException: print(f"Request timeout after {self.timeout}s") return None except httpx.HTTPStatusError as e: if e.response.status_code == 429: print("Rate limit exceeded, implement backoff") elif e.response.status_code >= 500: print("OpenAI service error, retry with exponential backoff") else: print(f"API error {e.response.status_code}: {e.response.text}") return None except Exception as e: print(f"Unexpected error: {str(e)}") return NoneThis client handles the three critical failure modes: network timeouts, rate limiting, and service outages. Each requires different retry strategies.

Request Logging and Cost Tracking

LLM costs scale with token usage. Without logging, you discover budget overruns after the fact. Instrument every request:

import jsonimport timefrom datetime import datetime

class LLMClient: def __init__(self, api_key: str, timeout: int = 30, log_file: str = "llm_requests.jsonl"): # ... existing init code ... self.log_file = log_file

async def generate(self, prompt: str, model: str = "gpt-4", max_tokens: int = 1000) -> Optional[str]: start_time = time.time()

# ... existing API call code ...

log_entry = { "timestamp": datetime.utcnow().isoformat(), "model": model, "prompt_length": len(prompt), "max_tokens": max_tokens, "latency_ms": int((time.time() - start_time) * 1000), "status": "success" if response else "error" }

if response: log_entry["completion_tokens"] = response.json()["usage"]["completion_tokens"] log_entry["prompt_tokens"] = response.json()["usage"]["prompt_tokens"]

with open(self.log_file, "a") as f: f.write(json.dumps(log_entry) + "\n")

return responseStructured logging as JSONL enables analysis with standard tools. Track token consumption trends, identify expensive prompts, and correlate latency with model versions.

Environment-Based Configuration

API keys in source code represent the most common security vulnerability in LLM applications. Use environment variables with validation:

import osfrom dataclasses import dataclass

@dataclassclass LLMConfig: api_key: str model: str = "gpt-4" timeout: int = 30 max_retries: int = 3

@classmethod def from_env(cls): api_key = os.getenv("OPENAI_API_KEY") if not api_key: raise ValueError("OPENAI_API_KEY environment variable required")

return cls( api_key=api_key, model=os.getenv("LLM_MODEL", "gpt-4"), timeout=int(os.getenv("LLM_TIMEOUT", "30")), max_retries=int(os.getenv("LLM_MAX_RETRIES", "3")) )

## Usageconfig = LLMConfig.from_env()client = LLMClient(config.api_key, config.timeout)💡 Pro Tip: Use different models per environment. Run GPT-3.5 in development to reduce costs during iteration, then deploy GPT-4 to production where response quality matters.

This foundation handles the mechanical integration correctly. The real complexity emerges when managing context across multi-turn conversations, where naive approaches quickly exhaust token limits.

Context Window Management: The Make-or-Break Problem

The moment your developer tool tries to analyze a real codebase, you’ll hit the wall: a single React component can easily consume 10K tokens, and GPT-4’s context window disappears fast. Context limits are the primary reason why impressive demos fail in production.

The naive approach—stuffing entire files into prompts—works until it doesn’t. You’ll see intermittent failures when users analyze large files, inconsistent behavior as context gets truncated mid-conversation, and bills that scale exponentially with codebase size. This isn’t a minor UX issue; it’s an architectural constraint that shapes every design decision.

A production codebase compounds this problem. Your typical enterprise monorepo contains thousands of files, with individual services spanning dozens of modules. When a developer asks “why is this API call failing?”, the relevant context might span authentication middleware, route handlers, database models, and configuration files—easily 50K+ tokens before you’ve even started the conversation. You need systematic strategies for context management, not ad-hoc solutions.

The Chunking Problem: Structure Matters More Than Size

The instinct is to split files at character or token boundaries, but this destroys the semantic structure that makes code comprehensible. Splitting a Python class between methods means the LLM loses critical context about class state and method relationships. Split a TypeScript interface definition from its implementation, and type inference breaks down.

Effective chunking preserves structural boundaries. For code analysis tools, split at function and class definitions. For documentation systems, split at section headers. For configuration files, split at top-level keys. The goal is ensuring each chunk remains independently meaningful—a fragment that makes sense in isolation while still connecting to the broader context.

Consider the difference: naive character-based chunking might split this class mid-method, leaving the LLM with an incomplete picture. Structure-aware chunking keeps each method intact, preserving the logical flow.

import astfrom typing import List, Dict

class CodeChunker: def __init__(self, max_tokens: int = 4000): self.max_tokens = max_tokens

def chunk_python_file(self, source: str) -> List[Dict]: """Split Python source at class and function boundaries.""" tree = ast.parse(source) chunks = []

for node in ast.iter_child_nodes(tree): if isinstance(node, (ast.ClassDef, ast.FunctionDef)): chunk_source = ast.get_source_segment(source, node) chunk_tokens = len(chunk_source) // 4 # Rough estimate

if chunk_tokens > self.max_tokens: # Function too large, include just signature + docstring chunk_source = self._extract_signature(node, source)

chunks.append({ 'type': node.__class__.__name__, 'name': node.name, 'source': chunk_source, 'lineno': node.lineno })

return chunks

def _extract_signature(self, node, source: str) -> str: """Extract function/class signature and docstring only.""" lines = source.split('\n') sig_line = lines[node.lineno - 1]

# Get docstring if it exists docstring = ast.get_docstring(node) or "" return f"{sig_line}\n \"\"\"{docstring}\"\"\""This approach maintains code semantics while keeping chunks below token limits. When analyzing a codebase, you feed the LLM relevant chunks rather than entire files. The chunker becomes your first line of defense against context overflow.

Sliding Window Context with Conversation History

For conversational tools, context accumulates rapidly. Each user message, assistant response, and code snippet consumes tokens. After a dozen exchanges, you’re out of room—but developers expect their AI assistant to remember the entire debugging session.

The solution is a sliding window that retains recent context while pruning old messages. Store the full conversation in PostgreSQL for audit trails and analytics, but only send a carefully selected window to the API. This means making hard choices about what to keep: recent messages matter most, but you also need to preserve the original question that started the conversation.

from collections import dequefrom typing import List, Dict

class ConversationContext: def __init__(self, max_context_tokens: int = 8000): self.max_tokens = max_context_tokens self.messages = deque() self.system_prompt_tokens = 500 # Reserved for system context

def add_message(self, role: str, content: str): """Add message and trim if needed.""" tokens = len(content) // 4 self.messages.append({'role': role, 'content': content, 'tokens': tokens}) self._trim_context()

def _trim_context(self): """Remove oldest messages until under token limit.""" total = self.system_prompt_tokens + sum(m['tokens'] for m in self.messages)

while total > self.max_tokens and len(self.messages) > 2: # Always keep at least the last exchange removed = self.messages.popleft() total -= removed['tokens']

def get_context(self) -> List[Dict]: """Return messages for API call.""" return [{'role': m['role'], 'content': m['content']} for m in self.messages]In practice, you’ll want a more sophisticated strategy: pin the initial user question, always include the last 3-5 exchanges, and use summarization for the middle section. When a conversation spans 50 messages, summarize messages 5-45 into a brief recap, preserving key decisions and context while freeing tokens for detailed recent history.

Embeddings vs. Smart Truncation: When to Use Each

Embeddings are powerful for semantic search across large codebases—finding relevant functions when the user asks “where do we handle authentication?” But they add latency (embedding generation + vector search) and complexity (vector database, reindexing). Every code change requires re-embedding, and vector databases introduce new infrastructure dependencies.

For many developer tools, smart truncation wins. If you’re building a code review assistant that analyzes pull request diffs, you don’t need embeddings—you need the exact changed lines plus surrounding context. Truncate intelligently: include function signatures, class definitions, and import statements even when omitting function bodies. Show method signatures for an entire class, but only include full implementations for methods that changed.

Use embeddings when you need semantic search across thousands of files—when the user’s question could relate to code anywhere in the repository. Use smart truncation when you know exactly which code sections matter—when you’re analyzing a specific file, reviewing a pull request, or debugging a particular module. Most developer tools need both: embeddings for initial file selection, truncation for focused analysis.

The hybrid approach looks like this: use embeddings to find the five most relevant files, then apply smart truncation to include only essential sections from each file. You get broad coverage without hitting context limits, and you maintain the semantic relationships that make code understandable.

The next challenge is structuring these context-aware prompts into reliable workflows. That’s where the agent-tool pattern transforms fragile scripts into robust systems.

The Agent-Tool Pattern for Developer Workflows

Moving beyond single-prompt interactions requires a fundamental architectural shift: splitting your LLM integration into an agent layer that makes decisions and a tool layer that executes actions. This pattern transforms GPT from a text generator into a system that can perform complex, multi-step development workflows.

The core insight is that LLMs excel at planning and decision-making but cannot directly interact with your codebase. By exposing developer operations as callable functions, you create a system where the LLM orchestrates while your code executes. OpenAI’s function calling API makes this pattern straightforward to implement.

Implementing the Tool Registry

Start by defining tools as structured schemas that GPT can invoke. Each tool represents a discrete operation: reading files, running tests, or executing shell commands. The schema structure is critical—GPT uses descriptions to understand when and how to call each tool.

export const tools = [ { type: "function", function: { name: "read_file", description: "Read the contents of a file from the project directory", parameters: { type: "object", properties: { path: { type: "string", description: "Relative path to the file from project root" } }, required: ["path"] } } }, { type: "function", function: { name: "run_tests", description: "Execute test suite and return results", parameters: { type: "object", properties: { pattern: { type: "string", description: "Test file pattern (e.g., '**/*.test.ts')" } }, required: ["pattern"] } } }, { type: "function", function: { name: "write_file", description: "Create or update a file with specified content", parameters: { type: "object", properties: { path: { type: "string" }, content: { type: "string" } }, required: ["path", "content"] } } }];Invest time in writing precise descriptions. Ambiguous tool descriptions lead to incorrect function calls, wasted tokens, and failed operations. Include examples in the description when the parameter format isn’t obvious—for instance, specifying that pattern expects glob syntax rather than regex.

Building the Agent Loop

The agent loop coordinates between GPT’s responses and tool execution. When GPT requests a function call, execute the corresponding tool and feed the results back as a new message. This creates a cyclical workflow: the agent analyzes the situation, calls tools to gather information or make changes, receives results, and decides on the next action.

async function runAgent(userPrompt: string) { const messages: ChatCompletionMessageParam[] = [ { role: "system", content: "You are a coding assistant with access to project files and test execution." }, { role: "user", content: userPrompt } ];

while (true) { const response = await openai.chat.completions.create({ model: "gpt-4-turbo", messages, tools, tool_choice: "auto" });

const message = response.choices[0].message; messages.push(message);

if (!message.tool_calls) { return message.content; }

// Execute all tool calls in parallel const toolResults = await Promise.all( message.tool_calls.map(async (toolCall) => { const result = await executeToolCall(toolCall); return { role: "tool" as const, tool_call_id: toolCall.id, content: JSON.stringify(result) }; }) );

messages.push(...toolResults); }}The loop continues until GPT returns a text response without tool calls, indicating the task is complete. This design handles arbitrarily complex workflows—GPT might read a file, discover it imports another file, read that one, identify a bug, write a fix, run tests, see a failure, and iterate until tests pass.

Setting tool_choice: "auto" lets GPT decide when to use tools versus when to respond directly. For workflows where you always want a tool call first, use tool_choice: "required". For debugging or when you want to force a specific tool, use tool_choice: { type: "function", function: { name: "tool_name" } }.

State Management and Error Recovery

The critical challenge is managing state across multiple tool invocations. Each interaction must preserve context about what has been done, what failed, and what remains. Without this context, the agent might repeatedly attempt failed operations or lose track of progress in multi-step workflows.

Maintain an execution context that tracks file modifications, test results, and error states. This context becomes part of the conversation history, allowing the agent to make informed decisions about recovery strategies.

interface ExecutionContext { modifiedFiles: Set<string>; failedOperations: Array<{ tool: string; error: string }>; testResults: { passed: number; failed: number };}

async function executeToolCall( toolCall: ChatCompletionMessageToolCall, context: ExecutionContext): Promise<{ success: boolean; data?: any; error?: string }> { try { const args = JSON.parse(toolCall.function.arguments);

switch (toolCall.function.name) { case "read_file": const content = await fs.readFile(args.path, "utf-8"); return { success: true, data: content };

case "write_file": await fs.writeFile(args.path, args.content); context.modifiedFiles.add(args.path); return { success: true, data: `Wrote ${args.content.length} bytes` };

case "run_tests": const result = await execAsync(`npm test -- ${args.pattern}`); context.testResults = parseTestOutput(result.stdout); return { success: true, data: context.testResults };

default: throw new Error(`Unknown tool: ${toolCall.function.name}`); } } catch (error) { const errorMsg = error instanceof Error ? error.message : String(error); context.failedOperations.push({ tool: toolCall.function.name, error: errorMsg }); return { success: false, error: errorMsg }; }}When tools fail, return structured error information rather than throwing exceptions. This allows the agent to see what went wrong and attempt alternative approaches. If a file read fails due to an incorrect path, the agent can list directory contents and retry with the correct location. If tests fail, the agent sees the failure output and can analyze the root cause.

The key is making errors actionable. Instead of returning “File not found”, return “File not found: src/utils.ts. Directory contains: [helpers.ts, validators.ts, parsers.ts]”. This gives the agent the information needed to self-correct without additional tool calls.

Handling Complex Debugging Workflows

Where the agent-tool pattern truly shines is in complex debugging scenarios that require multiple investigation steps. Consider a workflow where tests are failing: the agent needs to read the test file, identify what’s being tested, read the implementation, spot the bug, propose a fix, apply it, and verify with tests.

This requires sophisticated state tracking. The agent must remember which files it has already examined, what hypotheses it has tested, and which approaches failed. Structure your tool responses to support this reasoning process. When running tests, include not just pass/fail counts but also failure messages, stack traces, and timing information.

{ type: "function", function: { name: "run_tests_verbose", description: "Execute tests with detailed output including failures, timing, and coverage", parameters: { type: "object", properties: { pattern: { type: "string", description: "Test file pattern" }, options: { type: "object", properties: { coverage: { type: "boolean", description: "Include coverage data" }, timeout: { type: "number", description: "Timeout in milliseconds" } } } }, required: ["pattern"] } }}For debugging workflows, consider adding tools that expose higher-level operations: git_diff to see uncommitted changes, lint_file to check code quality, or find_references to understand how a function is used across the codebase. Each tool expands the agent’s capability to navigate and modify code intelligently.

Scaling Tool Complexity

The agent-tool pattern scales naturally. Start with basic file operations, then add tools for API calls, database queries, or deployment operations. Each new capability expands what the agent can accomplish without changing the core orchestration logic.

However, be strategic about tool granularity. Tools that are too fine-grained (e.g., read_single_line) force the agent to make many calls. Tools that are too coarse-grained (e.g., fix_all_bugs) give the agent no control over the process. Aim for tools that represent meaningful atomic operations in your domain.

💡 Pro Tip: Include execution time and resource consumption in tool responses. This feedback helps the agent learn which operations are expensive and adjust its strategy to minimize unnecessary calls.

With tool execution working reliably, the next bottleneck emerges: API costs. Every tool invocation adds tokens to your conversation history, and complex workflows generate substantial bills. The solution lies in strategic caching and token optimization.

Caching and Cost Optimization Strategies

At production scale, GPT API costs become a first-order concern. A developer tool making 10,000 GPT-4 requests per day can easily rack up $3,000-5,000 monthly bills. The good news: most applications exhibit significant query redundancy, and strategic caching can reduce costs by 60-80% without sacrificing response quality.

Semantic Caching with Redis

Traditional caching fails for LLM applications because queries are rarely identical. “How do I authenticate users?” and “What’s the user auth pattern?” should return the same cached response, but string-based cache keys miss this equivalence.

Semantic caching solves this by using embedding similarity. Hash the query’s embedding vector and check if a similar query exists within a threshold distance:

import openaiimport redisimport numpy as npfrom hashlib import sha256

class SemanticCache: def __init__(self, similarity_threshold=0.95): self.redis_client = redis.Redis(host='localhost', port=6379, db=0) self.threshold = similarity_threshold

def _get_embedding(self, text): response = openai.embeddings.create( model="text-embedding-3-small", input=text ) return np.array(response.data[0].embedding)

def get(self, query): query_embedding = self._get_embedding(query)

# Search cached embeddings cached_keys = self.redis_client.keys("cache:*") for key in cached_keys: cached_data = self.redis_client.hgetall(key) cached_embedding = np.frombuffer(cached_data[b'embedding'], dtype=np.float32)

similarity = np.dot(query_embedding, cached_embedding) / ( np.linalg.norm(query_embedding) * np.linalg.norm(cached_embedding) )

if similarity >= self.threshold: return cached_data[b'response'].decode('utf-8')

return None

def set(self, query, response, ttl=3600): embedding = self._get_embedding(query) cache_key = f"cache:{sha256(query.encode()).hexdigest()}"

self.redis_client.hset(cache_key, mapping={ 'query': query, 'response': response, 'embedding': embedding.astype(np.float32).tobytes() }) self.redis_client.expire(cache_key, ttl)Embedding API calls cost ~$0.0001 per request—a 100x reduction compared to GPT-4 calls. This makes the cache check economically viable even if you never get a hit.

💡 Pro Tip: Use

text-embedding-3-smallinstead oftext-embedding-ada-002. It’s 5x cheaper with comparable accuracy for semantic similarity tasks.

Native Prompt Caching

OpenAI’s prompt caching (available in GPT-4 and GPT-3.5) automatically caches shared prompt prefixes. This is particularly effective for developer tools where system prompts and context remain stable across requests:

from openai import OpenAI

client = OpenAI()

SYSTEM_CONTEXT = """You are a code review assistant for a Python backend team.Follow these guidelines:- Flag security vulnerabilities immediately- Suggest type hints where missing- Reference PEP 8 for style issues[... 2000 tokens of detailed context ...]"""

def get_code_review(code_snippet): response = client.chat.completions.create( model="gpt-4-turbo", messages=[ {"role": "system", "content": SYSTEM_CONTEXT}, {"role": "user", "content": f"Review this code:\n\n{code_snippet}"} ], # Prompt caching header extra_headers={"anthropic-cache-control": "ephemeral"} )

return response.choices[0].message.contentCached tokens cost 90% less than uncached tokens. For a 2000-token system prompt reused across 1000 requests daily, this saves approximately $120/month on GPT-4.

Model Selection Strategy

Not all queries justify GPT-4’s cost premium. Implement a routing layer based on query complexity:

def route_to_model(query, context_size): # Simple queries: use GPT-3.5 if context_size < 500 and not requires_reasoning(query): return "gpt-3.5-turbo"

# Complex reasoning: use GPT-4 if requires_reasoning(query) or context_size > 2000: return "gpt-4-turbo"

# Specialized tasks: use fine-tuned model if is_specialized_task(query): return "ft:gpt-3.5-turbo:org-id:model-name:abc123"

return "gpt-3.5-turbo"

def requires_reasoning(query): reasoning_indicators = [ "debug", "why", "explain", "architect", "design", "trade-off", "compare" ] return any(indicator in query.lower() for indicator in reasoning_indicators)Fine-tuned GPT-3.5 models cost 8x less per token than GPT-4 while often outperforming it on domain-specific tasks. For repetitive workflows like generating test cases or formatting documentation, invest in fine-tuning.

Cost Monitoring with CloudWatch

Set up proactive budget alerts before costs spiral:

import boto3from datetime import datetime, timedelta

cloudwatch = boto3.client('cloudwatch', region_name='us-east-1')

def publish_cost_metric(cost_usd, model): cloudwatch.put_metric_data( Namespace='AITools/LLM', MetricData=[{ 'MetricName': 'APITokenCost', 'Value': cost_usd, 'Unit': 'None', 'Timestamp': datetime.utcnow(), 'Dimensions': [{'Name': 'Model', 'Value': model}] }] )

## Create alarm for daily spend thresholdcloudwatch.put_metric_alarm( AlarmName='daily-gpt4-budget-alert', MetricName='APITokenCost', Namespace='AITools/LLM', Statistic='Sum', Period=86400, # 24 hours EvaluationPeriods=1, Threshold=150.0, ComparisonOperator='GreaterThanThreshold', AlarmActions=['arn:aws:sns:us-east-1:1234567890:billing-alerts'])Track cost per request, cache hit rates, and model distribution to identify optimization opportunities. A 2% cache hit rate improvement translates directly to meaningful savings at scale.

With these strategies in place, the final production concern is ensuring your system stays reliable under load. Observability and resilience patterns separate tools that work in demos from those that survive production traffic.

Production Deployment: Observability and Resilience

Deploying AI features to production requires the same operational rigor as your core infrastructure. The distributed nature of LLM workflows—spanning multiple API calls, embeddings, retrievals, and generations—creates unique observability challenges that standard APM tools don’t address out of the box.

Distributed Tracing for Multi-Step Workflows

LangSmith and similar platforms provide purpose-built tracing for AI workflows, but you can instrument your own system using OpenTelemetry. The critical insight is treating each LLM call as a span with rich metadata about tokens, cost, and latency.

from opentelemetry import tracefrom opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporterfrom opentelemetry.sdk.trace import TracerProviderfrom opentelemetry.sdk.trace.export import BatchSpanProcessor

tracer_provider = TracerProvider()tracer_provider.add_span_processor( BatchSpanProcessor(OTLPSpanExporter(endpoint="http://jaeger:4317")))trace.set_tracer_provider(tracer_provider)tracer = trace.get_tracer(__name__)

async def generate_with_tracing(prompt: str, context: dict): with tracer.start_as_current_span("llm_generation") as span: span.set_attribute("llm.model", "gpt-4") span.set_attribute("llm.prompt_tokens", len(prompt.split()))

response = await openai.ChatCompletion.acreate( model="gpt-4", messages=[{"role": "user", "content": prompt}] )

span.set_attribute("llm.completion_tokens", response.usage.completion_tokens) span.set_attribute("llm.total_cost_usd", calculate_cost(response.usage))

return response.choices[0].message.contentThis approach surfaces patterns like unexpectedly long retrieval chains or expensive prompt reformulations that inflate costs without user visibility. When you instrument RAG pipelines, create parent spans for the entire workflow with child spans for embedding generation, vector search, context assembly, and final generation. This hierarchical structure reveals bottlenecks—often vector database queries dominate latency, not the LLM calls themselves.

Beyond basic tracing, track semantic metrics specific to AI workloads. Monitor prompt diversity to detect users stuck in retry loops, track cache hit rates for embeddings, and measure the distribution of response lengths. These signals predict infrastructure scaling needs before users experience degraded performance.

Circuit Breakers and Fallback Strategies

OpenAI outages happen. Your product shouldn’t go dark when they do. Implement circuit breakers with graceful degradation rather than hard failures.

from circuitbreaker import circuitimport asyncio

@circuit(failure_threshold=5, recovery_timeout=60, expected_exception=OpenAIError)async def call_openai_with_circuit_breaker(prompt: str): return await openai.ChatCompletion.acreate( model="gpt-4", messages=[{"role": "user", "content": prompt}], timeout=30 )

async def generate_with_fallback(prompt: str): try: return await call_openai_with_circuit_breaker(prompt) except CircuitBreakerError: # Circuit open - fall back to local model or cached response return await local_llama_inference(prompt) except asyncio.TimeoutError: # Queue for async processing and return placeholder await queue_for_batch_processing(prompt) return {"status": "queued", "estimated_completion": "2 minutes"}For developer tools, queueing requests during outages and processing them later is often more acceptable than showing errors. Users understand asynchronous workflows when they’re clearly communicated. Consider task-specific fallback strategies: code completion might fall back to a smaller local model, while documentation generation could return cached results from similar queries. The key is matching degradation strategy to user expectations for each feature.

Health check endpoints should test actual LLM connectivity, not just API reachability. A provider returning 200 status codes but timing out on actual inference creates false positives. Periodically execute lightweight test prompts and measure end-to-end latency to catch subtle degradation before circuits trip.

Rate Limiting Per User and Team

Prevent runaway costs from misbehaving scripts or malicious users with hierarchical rate limiting at both user and organization levels.

from redis import Redisfrom datetime import datetime, timedelta

redis = Redis(host='redis', port=6379)

async def check_rate_limit(user_id: str, org_id: str) -> bool: now = datetime.utcnow() minute_key = f"ratelimit:{user_id}:{now.strftime('%Y%m%d%H%M')}" org_hour_key = f"ratelimit:org:{org_id}:{now.strftime('%Y%m%d%H')}"

user_count = redis.incr(minute_key) redis.expire(minute_key, 60)

org_count = redis.incr(org_hour_key) redis.expire(org_hour_key, 3600)

if user_count > 100: # 100 requests per minute per user raise RateLimitError("User rate limit exceeded")

if org_count > 10000: # 10k requests per hour per org raise RateLimitError("Organization rate limit exceeded")

return TrueImplement token-based rate limiting alongside request counts. A user generating 10 requests with 100K tokens each consumes vastly more resources than 100 requests with 1K tokens. Track sliding window token consumption and enforce limits that reflect actual infrastructure costs. Redis sorted sets provide efficient sliding window tracking without the memory overhead of storing every request timestamp.

Different features warrant different limits. Code completion needs high request throughput with tight latency budgets, while documentation generation tolerates lower throughput but longer processing times. Expose rate limit budgets through headers (X-RateLimit-Remaining) so users can build backoff logic into their integrations.

Kubernetes Deployment for GPU Alternatives

When running local models like Llama 3 or CodeLlama as fallbacks, you need GPU-accelerated pods with proper resource allocation.

apiVersion: apps/v1kind: Deploymentmetadata: name: llama-inferencespec: replicas: 2 template: spec: nodeSelector: accelerator: nvidia-tesla-t4 containers: - name: vllm image: vllm/vllm-openai:latest resources: limits: nvidia.com/gpu: 1 memory: 16Gi requests: nvidia.com/gpu: 1 memory: 16Gi env: - name: MODEL_NAME value: "meta-llama/Llama-3.1-8B-Instruct" ports: - containerPort: 8000💡 Pro Tip: Use node pools with GPU instances only for inference workloads. Keep your API layer on cheaper CPU-only nodes and route requests based on model selection to optimize infrastructure costs.

Configure horizontal pod autoscaling based on queue depth rather than CPU utilization. GPU utilization remains high even under light load due to model weights staying resident in VRAM, making it a poor scaling signal. Monitor pending requests in your inference queue and scale replicas when sustained queue depth exceeds target latency SLOs.

With observability and resilience patterns in place, your AI-powered developer tools operate with the same reliability guarantees as your core product. The next challenge is measuring whether these features actually improve developer productivity—and iterating based on real usage data.

Key Takeaways

- Start with async patterns and comprehensive error handling—retrofitting these later is painful and breaks existing integrations

- Implement context window management and caching before you scale; waiting until costs spiral means emergency architectural changes under pressure

- Use the agent-tool pattern to break complex workflows into manageable, testable pieces rather than trying to solve everything in a single prompt

- Build observability into your LLM calls from day one—you need distributed tracing and cost monitoring before problems emerge, not after