Building Cross-Cloud Identity and Network Bridges Between GCP and Azure

You’ve deployed microservices across both GCP and Azure to avoid vendor lock-in, but now your Azure-hosted auth service can’t securely communicate with your GCP data pipeline. Your team is routing traffic through the public internet with VPNs and API keys, racking up egress costs and security review meetings.

This isn’t a hypothetical scenario. Multi-cloud architectures promise resilience and flexibility, but they introduce a connectivity problem that most teams discover too late: cloud providers don’t make it easy to bridge their networks. When your Azure Kubernetes cluster needs to query a Cloud SQL database in GCP, or your GCP Cloud Functions need to authenticate against Azure Active Directory, you face a choice between bad options. Route everything through public endpoints with API keys and hope your WAF catches the threats. Stand up a site-to-site VPN and watch your latency spike. Or build a complex service mesh that requires three teams to maintain.

The root issue is that each cloud provider optimized its security model for workloads that stay within its ecosystem. Cross-cloud communication defaults to treating the other provider’s resources like any other internet endpoint, which means you’re paying egress rates, accepting additional latency, and explaining to your compliance team why database credentials are crossing cloud boundaries over TLS tunnels that weren’t designed for this traffic pattern.

But there’s a better path. By implementing identity federation between Azure Active Directory and GCP’s Workload Identity Federation, combined with private network connectivity through Cloud Interconnect and Azure ExpressRoute, you can build secure, performant bridges between both platforms. Before diving into the implementation, it’s worth understanding exactly why public internet routing falls short for production multi-cloud architectures.

The Multi-Cloud Connectivity Problem: Why Public Internet Isn’t Enough

When your GKE cluster in us-central1 needs to call an Azure SQL Database in eastus, the default path traverses the public internet—typically routing through 15-20 hops, introducing 80-120ms of latency, and exposing your traffic to the unpredictable performance characteristics of shared backbone networks.

For read-heavy workloads making hundreds of cross-cloud API calls per second, this latency compounds into measurable application slowdowns that degrade user experience.

Performance and Reliability Constraints

Public internet routing between cloud providers suffers from three fundamental limitations. First, you have no control over the path your packets take—traffic between GCP’s Iowa region and Azure’s Virginia region might route through peering points in Dallas or Atlanta based on BGP policies you cannot influence. Second, bandwidth is constrained by NAT gateway throughput limits (typically 50 Gbps per gateway in GCP, 100 Gbps in Azure) and subject to contention from other workloads. Third, packet loss rates on public links typically range from 0.1-1%, forcing TCP retransmissions that amplify latency for time-sensitive operations like database queries or gRPC service calls.

Security and Compliance Gaps

Exposing cross-cloud communication over public endpoints creates attack surface area that contradicts zero-trust security models. Even with TLS encryption, your traffic traverses untrusted networks where DNS hijacking, BGP hijacking, and man-in-the-middle attacks remain theoretical risks. For organizations subject to HIPAA, PCI-DSS, or GDPR requirements, auditors increasingly flag public internet transit as a compliance gap—especially when regulations mandate encryption in transit AND at rest within controlled network boundaries.

More critically, authentication flows relying on OAuth tokens or service account keys transmitted over public networks introduce credential exposure risks. A compromised Cloud NAT gateway or misconfigured firewall rule can leak authentication material that grants persistent access to your Azure resources.

Cost Implications at Scale

Cross-cloud egress charges accumulate quickly. Transferring 10 TB monthly from GCP to Azure over public internet costs approximately $1,200 in GCP egress fees (at $0.12/GB) plus Azure ingress processing charges. Cloud NAT gateways add $0.045 per GB processed, contributing another $450. For data-intensive workloads like analytics pipelines or real-time event streaming, these recurring costs justify the upfront investment in dedicated private connectivity solutions.
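The arithmetic above can be reproduced with a small helper. This is only a sketch under the rates quoted in this section ($0.12/GB egress, $0.045/GB NAT processing); the function name and the 1 TB = 1,000 GB convention are assumptions made for illustration, and real bills vary with region and tiered pricing.

```python
def public_egress_cost(tb_per_month, egress_rate=0.12, nat_rate=0.045):
    """Return (egress, nat, total) monthly USD cost for public-internet transfer."""
    gb = tb_per_month * 1000  # decimal terabytes, matching the figures in the text
    egress = gb * egress_rate
    nat = gb * nat_rate
    return round(egress, 2), round(nat, 2), round(egress + nat, 2)


egress, nat, total = public_egress_cost(10)
print(f"egress=${egress:,.0f} nat=${nat:,.0f} total=${total:,.0f}")
```

Plugging in your own monthly volume gives a quick baseline to compare against the private connectivity options discussed next.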

The next section examines how Cloud VPN and Cloud Interconnect with ExpressRoute address these limitations through dedicated private links.

Establishing Private Connectivity: Cloud VPN vs Cloud Interconnect + ExpressRoute

When connecting GCP and Azure networks, you face a fundamental decision: encrypted VPN tunnels over the public internet or dedicated physical interconnects. Each approach delivers distinct performance, cost, and operational characteristics that directly impact your architecture.

Cloud VPN: Fast Setup, Lower Commitment

Cloud VPN establishes IPsec tunnels between GCP and Azure Virtual WAN, providing encrypted connectivity within hours. This approach works well for workloads tolerating 10-50ms additional latency and throughput up to 3 Gbps per tunnel. The setup requires no physical infrastructure provisioning, making it ideal for proof-of-concept deployments and moderate-throughput integrations.

Start by creating a high-availability VPN gateway in GCP:

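```bash
# Create the HA VPN gateway in GCP
gcloud compute vpn-gateways create gcp-to-azure-gateway \
  --network=production-vpc \
  --region=us-central1

# Create a Cloud Router for BGP
gcloud compute routers create gcp-azure-router \
  --region=us-central1 \
  --network=production-vpc \
  --asn=65001

# Create the VPN tunnel to Azure.
# azure-peer-gateway is a placeholder for an external VPN gateway resource
# (gcloud compute external-vpn-gateways create) holding the Azure gateway's
# public IP; --peer-gcp-gateway only applies to GCP-to-GCP tunnels.
gcloud compute vpn-tunnels create tunnel-to-azure \
  --vpn-gateway=gcp-to-azure-gateway \
  --peer-external-gateway=azure-peer-gateway \
  --peer-external-gateway-interface=0 \
  --region=us-central1 \
  --ike-version=2 \
  --shared-secret=your-preshared-key-here \
  --router=gcp-azure-router \
  --interface=0
```

On the Azure side, configure Virtual WAN with corresponding settings: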

```bash
# Create VPN gateway in Azure Virtual WAN
az network vpn-gateway create \
  --name azure-to-gcp-gateway \
  --resource-group cross-cloud-rg \
  --vhub prod-vwan-hub \
  --location eastus \
  --scale-unit 1

# Create VPN site representing the GCP endpoint
az network vpn-site create \
  --name gcp-vpn-site \
  --resource-group cross-cloud-rg \
  --virtual-wan prod-vwan \
  --ip-address 203.0.113.45 \
  --asn 65001 \
  --bgp-peering-address 169.254.21.1

# Create connection with BGP enabled
az network vpn-gateway connection create \
  --gateway-name azure-to-gcp-gateway \
  --name gcp-connection \
  --resource-group cross-cloud-rg \
  --remote-vpn-site gcp-vpn-site \
  --shared-key your-preshared-key-here \
  --enable-bgp true
```

The VPN gateway creation typically completes within 30-45 minutes, but full BGP convergence may take an additional 10-15 minutes as routes propagate. Monitor tunnel establishment through GCP’s VPN status dashboard and Azure’s connection health metrics to verify both control plane (BGP) and data plane (IPsec) connectivity.

BGP Routing for Dynamic Path Selection

BGP enables automatic failover and load distribution across multiple tunnels. Configure route preferences through AS path prepending or MED attributes to control traffic flow during normal operations and failures. This dynamic routing proves essential for production deployments where manual intervention during outages is unacceptable.

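```bash
# Create the Cloud Router interface for the tunnel, then add the BGP peer.
# if-tunnel-to-azure is an illustrative interface name; --interface on
# add-bgp-peer expects the name of an existing router interface.
gcloud compute routers add-interface gcp-azure-router \
  --interface-name=if-tunnel-to-azure \
  --ip-address=169.254.21.1 \
  --mask-length=30 \
  --vpn-tunnel=tunnel-to-azure \
  --region=us-central1

gcloud compute routers add-bgp-peer gcp-azure-router \
  --peer-name=azure-peer \
  --interface=if-tunnel-to-azure \
  --peer-ip-address=169.254.21.2 \
  --peer-asn=65002 \
  --region=us-central1 \
  --advertised-route-priority=100
```

BGP session establishment requires that the peer ASN configured on each side matches the ASN the other side actually uses. GCP supports 16-bit and 32-bit ASNs, while Azure Virtual WAN accepts any valid ASN except those reserved for Azure’s internal use (12076, 65515-65520). Choose private ASNs from the 64512-65534 range for smaller deployments or 4200000000-4294967294 for enterprises requiring larger ASN pools.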
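A quick pre-flight check on ASN choices can catch a mismatch before any gateway is provisioned. The sketch below encodes only the ranges quoted in the paragraph above; the function name and return labels are hypothetical, and the reserved list should be confirmed against current Azure documentation before relying on it.

```python
# Ranges taken from the text above; treat them as assumptions to verify.
AZURE_RESERVED_ASNS = {12076, *range(65515, 65521)}   # 12076, 65515-65520
PRIVATE_16BIT = range(64512, 65535)                   # 64512-65534
PRIVATE_32BIT = range(4200000000, 4294967295)         # 4200000000-4294967294


def classify_asn(asn: int) -> str:
    """Classify an ASN as reserved by Azure, private, or not private."""
    if asn in AZURE_RESERVED_ASNS:
        return "reserved-by-azure"
    if asn in PRIVATE_16BIT or asn in PRIVATE_32BIT:
        return "private"
    return "not-private"


for candidate in (65001, 65002, 65515, 15169):
    print(candidate, classify_asn(candidate))
```

The reserved check runs first because 65515-65520 also falls inside the 16-bit private range.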

For redundancy, configure active-active tunnel pairs with equal-cost multipath (ECMP) routing. This distributes traffic across both tunnels and shifts traffic to the surviving tunnel once BGP detects that the other has failed:

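```bash
# Create second tunnel for high availability.
# azure-peer-gateway is a placeholder for the external VPN gateway resource
# that represents the Azure side.
gcloud compute vpn-tunnels create tunnel-to-azure-backup \
  --vpn-gateway=gcp-to-azure-gateway \
  --peer-external-gateway=azure-peer-gateway \
  --peer-external-gateway-interface=0 \
  --region=us-central1 \
  --ike-version=2 \
  --shared-secret=your-backup-preshared-key \
  --router=gcp-azure-router \
  --interface=1

# Add a router interface and a second BGP peer with the same priority for ECMP.
# if-tunnel-to-azure-backup is an illustrative interface name.
gcloud compute routers add-interface gcp-azure-router \
  --interface-name=if-tunnel-to-azure-backup \
  --ip-address=169.254.21.9 \
  --mask-length=30 \
  --vpn-tunnel=tunnel-to-azure-backup \
  --region=us-central1

gcloud compute routers add-bgp-peer gcp-azure-router \
  --peer-name=azure-peer-backup \
  --interface=if-tunnel-to-azure-backup \
  --peer-ip-address=169.254.21.10 \
  --peer-asn=65002 \
  --region=us-central1 \
  --advertised-route-priority=100
```

💡 Pro Tip: Deploy VPN tunnels in pairs across different regions. If one tunnel degrades, BGP automatically shifts traffic to healthy paths without manual intervention. Verify failover behavior by intentionally disabling one tunnel and confirming traffic shifts within 30 seconds.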
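To make the ECMP and failover behavior concrete, here is a deliberately simplified model of path selection: among healthy tunnels, the lowest priority value (MED) wins, and ties share traffic. The `Route` type and `active_paths` function are hypothetical names, and real BGP best-path selection considers more attributes than this sketch does.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    tunnel: str
    med: int              # advertised route priority; lower is preferred
    healthy: bool = True  # False once the BGP session is considered down


def active_paths(routes):
    """Return the tunnels that carry traffic: all healthy routes sharing the lowest MED."""
    healthy = [r for r in routes if r.healthy]
    if not healthy:
        return []
    best = min(r.med for r in healthy)
    return sorted(r.tunnel for r in healthy if r.med == best)


both_up = [Route("tunnel-to-azure", 100), Route("tunnel-to-azure-backup", 100)]
one_down = [Route("tunnel-to-azure", 100, healthy=False), Route("tunnel-to-azure-backup", 100)]

print(active_paths(both_up))   # equal MED: both tunnels share traffic
print(active_paths(one_down))  # failed tunnel drops out of the set
```

Giving the backup tunnel a higher MED instead of an equal one would turn this into an active-passive pair.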

When Dedicated Interconnects Justify the Investment

Cloud Interconnect paired with Azure ExpressRoute delivers 10-100 Gbps throughput with sub-10ms latency, but requires longer provisioning (4-12 weeks) and higher baseline costs ($1,500+ monthly). The dedicated physical circuits bypass the public internet entirely, providing predictable performance and enhanced security.

Choose dedicated interconnects when:

- High-volume transfers: Moving multi-TB datasets daily between clouds makes the per-GB savings significant

- Latency-sensitive workloads: Running distributed databases (Spanner, Cosmos DB) or real-time analytics where milliseconds matter

- Regulatory compliance: Industries like healthcare or finance requiring air-gapped connectivity that never traverses public networks

- Cost optimization at scale: Monthly egress exceeding 50 TB reaches a break-even point where interconnect per-GB costs ($0.02) undercut VPN transfer fees ($0.05)

Dedicated interconnects also provide SLA-backed availability guarantees (99.99% for dual circuits) compared to VPN’s best-effort delivery. However, the upfront commitment includes circuit installation fees ($500-2,000), mandatory port fees ($200-1,000 monthly depending on capacity), and longer lead times that can delay project launches.

Performance Benchmarks and Cost Comparisons

Production deployments reveal measurable performance differences. Cloud VPN between us-central1 (GCP) and eastus (Azure) averages 2.8 Gbps throughput with 35ms latency, while dedicated interconnects achieve 9.4 Gbps with 8ms latency. These benchmarks assume optimized TCP window sizes and application-level parallelism.

Cost analysis for a workload transferring 100 TB monthly:

- VPN approach: Gateway fees ($0.05/hour × 2 gateways × 730 hours = $73) + data transfer (100 TB × $0.05/GB = $5,000) = $5,073/month

- Interconnect approach: Port fees ($1,500) + data transfer (100 TB × $0.02/GB = $2,000) = $3,500/month

The 31% cost reduction justifies interconnect investment for sustained high-volume workloads, but VPN remains more economical for unpredictable or lower-volume traffic patterns.
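The comparison can be recomputed for any volume from the rates quoted in this section. This is a sketch, not a pricing tool: the function names are hypothetical, 1 TB is taken as 1,000 GB, and installation fees and tiered pricing are ignored.

```python
HOURS_PER_MONTH = 730


def vpn_monthly_cost(tb, gateways=2, gateway_hourly=0.05, per_gb=0.05):
    """Gateway hours plus per-GB transfer, using the rates quoted in the text."""
    return gateways * gateway_hourly * HOURS_PER_MONTH + tb * 1000 * per_gb


def interconnect_monthly_cost(tb, port_fee=1500.0, per_gb=0.02):
    """Flat port fee plus per-GB transfer, using the rates quoted in the text."""
    return port_fee + tb * 1000 * per_gb


def break_even_tb(gateways=2, gateway_hourly=0.05, vpn_per_gb=0.05,
                  port_fee=1500.0, ic_per_gb=0.02):
    """Monthly volume (TB) at which both approaches cost the same."""
    fixed_gap = port_fee - gateways * gateway_hourly * HOURS_PER_MONTH
    return fixed_gap / ((vpn_per_gb - ic_per_gb) * 1000)


vpn = vpn_monthly_cost(100)
ic = interconnect_monthly_cost(100)
print(f"VPN ${vpn:,.0f}, interconnect ${ic:,.0f}, saving {(vpn - ic) / vpn:.0%}")
print(f"Break-even at about {break_even_tb():.1f} TB/month")
```

Under these rates the break-even lands just under 50 TB per month, which is consistent with the threshold mentioned earlier.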

Most hybrid architectures start with VPN for initial integration, then migrate high-volume paths to dedicated interconnects as traffic patterns stabilize and business value justifies the infrastructure investment. This phased approach minimizes risk while preserving the option to scale performance as requirements evolve. With private connectivity established, the next challenge emerges: enabling workloads to authenticate across cloud boundaries without managing separate credential systems.

Workload Identity Federation: Single Sign-On Across Clouds

Service account keys are the weakest link in cross-cloud authentication. They are long-lived by default, get leaked in CI/CD logs, and create a sprawling key management problem when running workloads across GCP and Azure. Workload Identity Federation eliminates this entirely by letting Azure-based services authenticate to GCP using Azure AD tokens, with no static credentials required.

The architecture is straightforward: Azure AD becomes an external identity provider for GCP. When an Azure VM or container needs to call a GCP API, it requests an Azure AD access token from its managed identity, exchanges that token for a short-lived GCP credential through the Security Token Service (STS), and authenticates as a GCP service account. The entire flow happens transparently without storing or rotating keys.
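The middle step of that flow, the exchange at the Security Token Service, is an OAuth 2.0 token exchange. As a rough sketch of what the auth library sends on your behalf, the helper below only builds the form fields and makes no network call; the function name is hypothetical and the Azure token is a dummy value.

```python
STS_TOKEN_URL = "https://sts.googleapis.com/v1/token"


def build_sts_exchange_form(audience: str, azure_token: str) -> dict:
    """Build the form body that trades an Azure AD JWT for a federated GCP token."""
    return {
        "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
        "audience": audience,  # identifies the workload identity pool provider
        "scope": "https://www.googleapis.com/auth/cloud-platform",
        "requested_token_type": "urn:ietf:params:oauth:token-type:access_token",
        "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
        "subject_token": azure_token,  # the token issued to the managed identity
    }


form = build_sts_exchange_form(
    "//iam.googleapis.com/projects/123456789/locations/global/"
    "workloadIdentityPools/azure-pool/providers/azure-provider",
    "dummy-azure-ad-jwt",
)
print(sorted(form))
```

In practice you would rarely build this request by hand, but seeing the fields helps when debugging audience or token-type errors.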

Setting Up the Identity Provider in GCP

Start by creating a workload identity pool in GCP that trusts Azure AD. This establishes the federation relationship and defines which Azure identities can authenticate.

```bash
gcloud iam workload-identity-pools create azure-pool \
  --location="global" \
  --description="Azure AD workload identity pool" \
  --project="my-gcp-project"

gcloud iam workload-identity-pools providers create-oidc azure-provider \
  --location="global" \
  --workload-identity-pool="azure-pool" \
  --issuer-uri="https://sts.windows.net/a1b2c3d4-e5f6-7890-abcd-ef1234567890" \
  --attribute-mapping="google.subject=assertion.sub,attribute.tid=assertion.tid" \
  --attribute-condition="assertion.tid == 'a1b2c3d4-e5f6-7890-abcd-ef1234567890'" \
  --project="my-gcp-project"
```

The issuer-uri contains your Azure tenant ID, which you can retrieve from the Azure portal under Azure Active Directory → Properties. The attribute mapping extracts the subject and tenant ID from the Azure token, while the condition ensures only tokens from your specific tenant are accepted. This prevents token substitution attacks from other Azure tenants.

The attribute mapping is critical for authorization granularity. By mapping assertion.sub to google.subject, you preserve the Azure managed identity’s unique identifier, enabling per-identity access controls. The tenant ID mapping (attribute.tid) provides an additional security boundary for multi-tenant architectures.

Mapping Azure Identities to GCP Service Accounts

Now bind the Azure managed identity to a GCP service account. This creates the permission boundary—the Azure identity assumes the role of the GCP service account with its associated IAM permissions.

```bash
# SERVICE_ACCOUNT_EMAIL is a placeholder for the email of the
# gcp-storage-reader service account.
gcloud iam service-accounts add-iam-policy-binding SERVICE_ACCOUNT_EMAIL \
  --role="roles/iam.workloadIdentityUser" \
  --member="principalSet://iam.googleapis.com/projects/123456789/locations/global/workloadIdentityPools/azure-pool/attribute.tid/a1b2c3d4-e5f6-7890-abcd-ef1234567890" \
  --project="my-gcp-project"
```

This binding grants all managed identities in your Azure tenant permission to impersonate the gcp-storage-reader service account. For tighter controls, replace attribute.tid with subject and specify the exact Azure managed identity’s object ID:

```bash
# SERVICE_ACCOUNT_EMAIL is the same placeholder as in the previous command.
gcloud iam service-accounts add-iam-policy-binding SERVICE_ACCOUNT_EMAIL \
  --role="roles/iam.workloadIdentityUser" \
  --member="principal://iam.googleapis.com/projects/123456789/locations/global/workloadIdentityPools/azure-pool/subject/12345678-90ab-cdef-1234-567890abcdef" \
  --project="my-gcp-project"
```

This limits impersonation to a single Azure managed identity, following the principle of least privilege. Use this pattern for production workloads where each service should have its own dedicated GCP service account.

Authenticating from Azure Workloads

Azure-based applications use the Google Auth Library to transparently exchange their Azure token for GCP credentials. The flow is automatic once you configure the credential source.

```python
from google.auth import identity_pool
from google.cloud import storage

# Configure the credential source pointing to Azure IMDS.
# Replace [email protected] below with the email of the service account to impersonate.
credentials = identity_pool.Credentials.from_info({
    "type": "external_account",
    "audience": "//iam.googleapis.com/projects/123456789/locations/global/workloadIdentityPools/azure-pool/providers/azure-provider",
    "subject_token_type": "urn:ietf:params:oauth:token-type:jwt",
    "token_url": "https://sts.googleapis.com/v1/token",
    "service_account_impersonation_url": "https://iamcredentials.googleapis.com/v1/projects/-/serviceAccounts/[email protected]:generateAccessToken",
    "credential_source": {
        "url": "http://169.254.169.254/metadata/identity/oauth2/token?api-version=2018-02-01&resource=https://iam.googleapis.com/projects/123456789/locations/global/workloadIdentityPools/azure-pool/providers/azure-provider",
        "headers": {"Metadata": "true"},
        "format": {"type": "json", "subject_token_field_name": "access_token"},
    },
})

# Use credentials with any GCP client library
client = storage.Client(credentials=credentials, project="my-gcp-project")
buckets = list(client.list_buckets())
print(f"Accessible GCP buckets: {[b.name for b in buckets]}")
```

The credential source points to Azure’s Instance Metadata Service (IMDS), where the managed identity token is retrieved. The Google Auth Library handles the token exchange with GCP’s STS endpoint and service account impersonation automatically. Credentials are cached and refreshed before expiration, typically every 60 minutes.

The audience parameter must match your workload identity pool and provider configuration exactly. A mismatch results in authentication failures with cryptic error messages. The resource parameter in the IMDS URL must be the same value prefixed with https: so that Azure issues a token whose audience the provider accepts.
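Because a mismatch between these two values is easy to introduce and hard to diagnose, a small consistency check on the credential configuration can be worth running at deploy time. This sketch assumes the configuration shape shown earlier in this section; the function name is hypothetical.

```python
from urllib.parse import parse_qs, urlparse


def imds_resource_matches_audience(config: dict) -> bool:
    """Check that the IMDS 'resource' query parameter is 'https:' + audience."""
    audience = config["audience"]
    query = parse_qs(urlparse(config["credential_source"]["url"]).query)
    resource = query.get("resource", [None])[0]
    return resource == "https:" + audience


provider = ("//iam.googleapis.com/projects/123456789/locations/global/"
            "workloadIdentityPools/azure-pool/providers/azure-provider")
good_config = {
    "audience": provider,
    "credential_source": {
        "url": "http://169.254.169.254/metadata/identity/oauth2/token"
               "?api-version=2018-02-01&resource=https:" + provider,
    },
}
# Same config, but the audience points at a different (hypothetical) provider.
bad_config = {**good_config, "audience": provider.replace("azure-provider", "other-provider")}

print(imds_resource_matches_audience(good_config))
print(imds_resource_matches_audience(bad_config))
```

A check like this fits naturally into a CI step that validates configuration before it is published to workloads.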

💡 Pro Tip: Store the credential configuration JSON in Azure Key Vault instead of hardcoding it. This allows rotating the workload identity pool configuration without redeploying applications. Use Azure’s Key Vault references in App Service or mount secrets in AKS to inject the configuration at runtime.

This pattern works for any Azure compute service with managed identity support—VMs, App Service, Container Instances, AKS pods with Azure AD Workload Identity enabled. The same configuration scales from a single microservice to hundreds of cross-cloud integrations without managing a single service account key. Each workload authenticates using ephemeral tokens with automatic rotation, eliminating the operational burden of key lifecycle management.

For compliance and audit requirements, enable Cloud Audit Logs on the workload identity pool to track every token exchange. This creates an immutable record of which Azure identities accessed which GCP resources, satisfying requirements for SOC 2, ISO 27001, and similar frameworks.

With identity federation established, the next challenge is ensuring these authenticated services can communicate efficiently. Network topology choices determine whether cross-cloud traffic routes through expensive internet gateways or optimized private links.

Network Architecture Patterns: Hub-and-Spoke vs Mesh Topologies

Once you’ve established connectivity between GCP and Azure, the next decision is how to organize your network architecture. The topology you choose directly impacts latency, cost, operational complexity, and your ability to enforce consistent security policies across clouds.

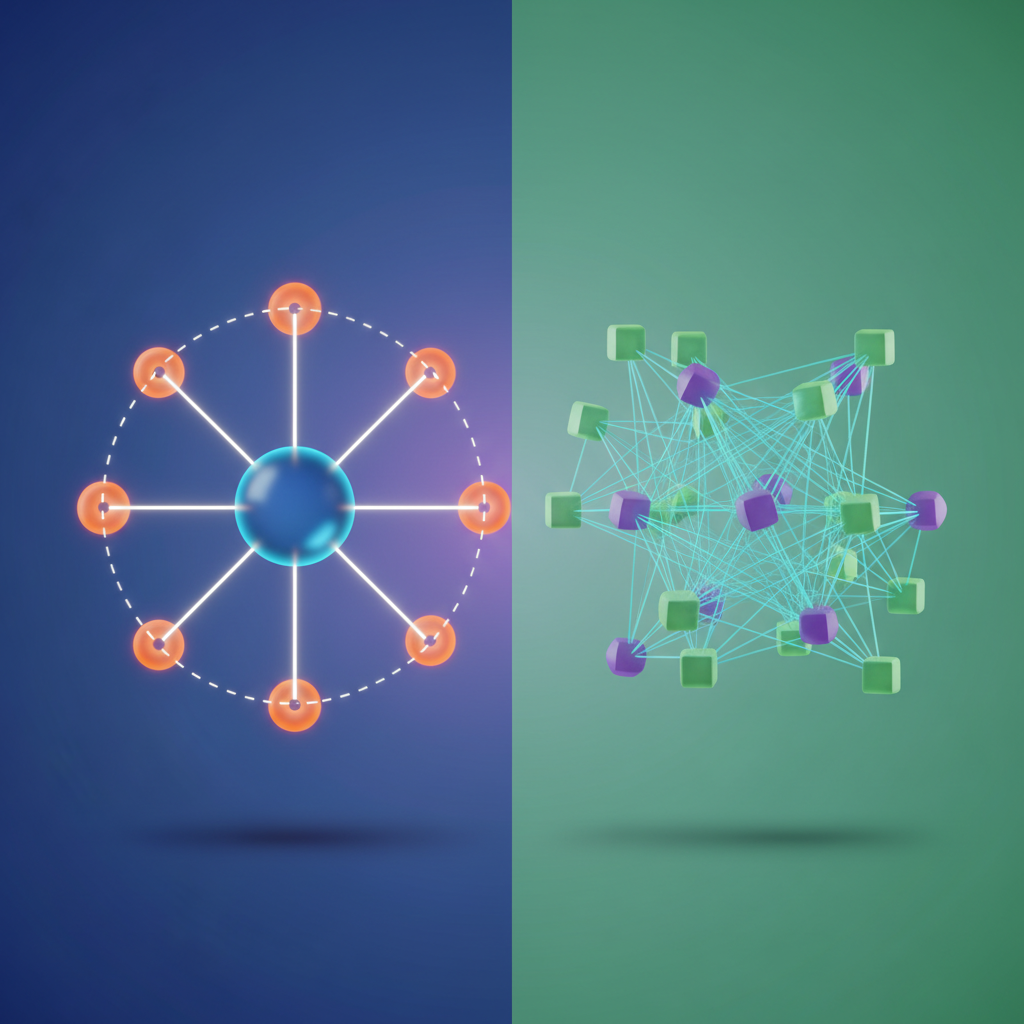

Hub-and-Spoke: Centralized Control with Azure Virtual WAN

A hub-and-spoke model consolidates cross-cloud traffic through a central hub, typically implemented using Azure Virtual WAN. In this pattern, your Azure Virtual WAN hub serves as the central point where GCP VPCs connect via VPN or ExpressRoute/Cloud Interconnect. All cross-cloud traffic flows through this hub, where you can apply centralized firewall rules, route inspection, and traffic logging.

This approach excels when you need strict governance. By funneling traffic through Azure Firewall or a third-party NVA (Network Virtual Appliance) deployed in the hub, you enforce consistent security policies regardless of which cloud originates the request. It also simplifies routing—spoke networks only need to know about the hub, not about every possible destination across both clouds.

The tradeoff is increased latency and potential bottlenecks. Every packet from a GCP Compute Engine instance to an Azure spoke VNet must traverse the hub, adding network hops. For workloads requiring sub-10ms latency between clouds, this overhead becomes significant. Cost also increases since you’re paying for both ingress/egress at the hub and the Virtual WAN service itself.

Mesh Topologies: Direct Paths for Performance

A mesh topology establishes direct connections between GCP VPCs and Azure VNets without intermediate hops. Using VPC Network Peering in GCP and VNet Peering in Azure, combined with direct ExpressRoute/Cloud Interconnect links, workloads communicate over the shortest possible path.

This pattern minimizes latency—critical for distributed databases, real-time analytics pipelines, or microservices architectures spanning both clouds. You eliminate the hub bottleneck and reduce data transfer costs by avoiding double ingress/egress charges.

The complexity surfaces in firewall rule management and DNS resolution. Without a central enforcement point, each VPC and VNet must maintain its own firewall rules for cross-cloud traffic. A simple “allow traffic from Azure production to GCP analytics” policy now requires coordinated NSG rules in Azure and VPC firewall rules in GCP. This distributed model demands robust infrastructure-as-code practices and centralized policy orchestration tools like Terraform or Cloud Deployment Manager.
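One way to see why mesh management gets harder as networks multiply is to count cross-cloud connections. The sketch below assumes every GCP VPC must reach every Azure VNet, which is a worst case rather than a typical requirement; both function names are hypothetical.

```python
def hub_links(gcp_vpcs: int, azure_vnets: int) -> int:
    """Each network connects once to the central hub."""
    return gcp_vpcs + azure_vnets


def mesh_links(gcp_vpcs: int, azure_vnets: int) -> int:
    """Each GCP VPC connects directly to each Azure VNet."""
    return gcp_vpcs * azure_vnets


for gcp, azure in [(2, 2), (4, 5), (10, 10)]:
    print(f"{gcp} VPCs x {azure} VNets: hub={hub_links(gcp, azure)} mesh={mesh_links(gcp, azure)}")
```

Each mesh link also needs its own pair of firewall rule sets, so policy surface grows with the link count rather than with the number of networks.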

DNS Resolution Across Clouds

Both topologies require DNS forwarding to enable service discovery. On the Azure side, use Azure DNS Private Resolver forwarding rules to send queries for GCP-hosted names to Cloud DNS inbound forwarding addresses; on the GCP side, use Cloud DNS forwarding zones to send queries for Azure-hosted names to the resolver’s inbound endpoint. In hub-and-spoke, deploy DNS forwarders in the hub for centralized resolution. In mesh, each VPC and VNet needs conditional forwarding rules pointing to the appropriate cloud’s DNS servers.
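Conditional forwarding boils down to a longest-suffix match on the query name. The sketch below models that decision only; the zone names and forwarder IPs are made up for illustration, and the function is not part of any DNS product.

```python
# Hypothetical zones and forwarder addresses, for illustration only.
FORWARDING_RULES = {
    "gcp.internal.example.": ["10.10.0.2"],
    "azure.internal.example.": ["10.240.0.4"],
}


def pick_forwarders(qname, rules, default=()):
    """Return forwarders for the most specific zone that contains qname."""
    name = qname.rstrip(".").lower() + "."
    best = None
    for zone in rules:
        # Match the zone apex itself or any name beneath it.
        if name == zone or name.endswith("." + zone):
            if best is None or len(zone) > len(best):
                best = zone
    return list(rules[best]) if best else list(default)


print(pick_forwarders("orders.gcp.internal.example", FORWARDING_RULES))
print(pick_forwarders("fraud.azure.internal.example.", FORWARDING_RULES))
print(pick_forwarders("example.com", FORWARDING_RULES))
```

Names that match no rule fall through to the default resolver, which is what keeps public lookups off the cross-cloud path.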

💡 Pro Tip: Start with hub-and-spoke for governance and migrate to selective mesh connections for latency-sensitive workloads. Hybrid approaches—hub for management traffic, direct mesh for data plane traffic—offer practical middle ground.

With your network topology defined and DNS resolution in place, you’re ready to implement actual cross-cloud workload communication. The next section demonstrates this with a practical Kubernetes example.

Practical Example: Cross-Cloud Kubernetes Service Communication

With private connectivity established between GCP and Azure, we can now implement secure pod-to-pod communication between a GKE cluster and an AKS cluster. This example demonstrates workload identity federation, mutual TLS authentication, and cross-cloud service discovery—techniques that form the foundation of any serious multi-cloud Kubernetes deployment.

Architecture Overview

Our scenario involves a payment processing service running in GKE that needs to communicate with a fraud detection service in AKS. Both services use Istio as a service mesh, with a shared trust domain spanning both clouds. Traffic flows over the VPN tunnel established in previous sections, never traversing the public internet.

The identity flow works as follows: GKE workloads obtain Kubernetes service account tokens issued by the GKE cluster, exchange them for Azure AD tokens via workload identity federation, then use those tokens to authenticate to AKS services. Istio handles the mutual TLS handshake using SPIFFE identities anchored to each cluster’s certificate authority.

Network routing deserves attention: when a pod in GKE makes a request to fraud-detection.fraud-ns.svc.cluster.azure, the Istio sidecar intercepts the traffic, wraps it in mTLS, and routes it through the VPN tunnel to the AKS cluster’s ingress gateway. The gateway terminates the mTLS connection, validates the SPIFFE identity, and forwards the request to the target pod. Response traffic follows the reverse path.

Configuring Cross-Cloud Service Mesh

First, establish a shared root certificate authority that both Istio control planes trust. This creates a common trust anchor, allowing workloads in either cloud to verify certificates issued by the other. Generate the root CA and intermediate certificates:

```bash
# Generate root CA
openssl req -x509 -sha256 -nodes -days 3650 -newkey rsa:4096 \
  -subj "/O=MultiCloud/CN=Root CA" \
  -keyout root-key.pem -out root-cert.pem

# Extensions that mark the signed certificates as CAs; without them,
# openssl x509 -req issues certificates that cannot sign workload certs
printf 'basicConstraints=critical,CA:TRUE\nkeyUsage=critical,keyCertSign,cRLSign\n' > ca-ext.cnf

# Generate intermediate CA for GKE
openssl req -newkey rsa:4096 -nodes \
  -subj "/O=MultiCloud/CN=GKE Intermediate CA" \
  -keyout gke-ca-key.pem -out gke-ca-cert.csr
openssl x509 -req -days 1825 -CA root-cert.pem -CAkey root-key.pem \
  -set_serial 0 -extfile ca-ext.cnf \
  -in gke-ca-cert.csr -out gke-ca-cert.pem

# Generate intermediate CA for AKS (similar process)
openssl req -newkey rsa:4096 -nodes \
  -subj "/O=MultiCloud/CN=AKS Intermediate CA" \
  -keyout aks-ca-key.pem -out aks-ca-cert.csr
openssl x509 -req -days 1825 -CA root-cert.pem -CAkey root-key.pem \
  -set_serial 1 -extfile ca-ext.cnf \
  -in aks-ca-cert.csr -out aks-ca-cert.pem
```

Store the root CA private key in a secure vault such as HashiCorp Vault or Google Secret Manager, and distribute only the public certificate to clusters. Compromise of the root key would allow an attacker to mint trusted certificates for any workload in your mesh.

Deploy Istio to both clusters with the shared trust configuration:

```yaml
meshConfig:
  trustDomain: multicloud.local
  defaultConfig:
    proxyMetadata:
      ISTIO_META_DNS_CAPTURE: "true"

pilot:
  env:
    EXTERNAL_ISTIOD: "false"
    PILOT_ENABLE_CROSS_CLUSTER_WORKLOAD_ENTRY: "true"

security:
  selfSigned: false

global:
  meshID: multicloud-mesh
  multiCluster:
    clusterName: gke-prod-us
  network: gcp-vpc-network
```

The PILOT_ENABLE_CROSS_CLUSTER_WORKLOAD_ENTRY setting allows Istio’s control plane to manage service endpoints across cluster boundaries. The network identifier maps to the VPN tunnel configuration, enabling Istio to route traffic through the correct gateway when crossing network boundaries.

Enabling Cross-Cloud Service Discovery

Configure Istio to discover services across clusters using ServiceEntry resources. These resources teach the GKE cluster about services that exist in AKS but aren’t registered in its internal DNS. On the GKE side, define the AKS fraud detection service:

```yaml
apiVersion: networking.istio.io/v1beta1
kind: ServiceEntry
metadata:
  name: fraud-detection-aks
  namespace: payments
spec:
  hosts:
  - fraud-detection.fraud-ns.svc.cluster.azure
  addresses:
  - 10.240.5.10  # AKS service ClusterIP
  ports:
  - number: 8443
    name: https
    protocol: HTTPS
  location: MESH_INTERNAL
  resolution: STATIC
  endpoints:
  - address: 10.240.5.10
    network: azure-vnet-network
---
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: fraud-detection-mtls
  namespace: payments
spec:
  host: fraud-detection.fraud-ns.svc.cluster.azure
  trafficPolicy:
    tls:
      mode: ISTIO_MUTUAL
      subjectAltNames:
      - "spiffe://multicloud.local/ns/fraud-ns/sa/fraud-detection"
```

The subjectAltNames field is critical: it specifies the exact SPIFFE identity that the remote service must present. This prevents an attacker who has compromised a different service in AKS from impersonating the fraud detection endpoint. Update these ServiceEntry resources when services move or scale, because stale endpoint addresses will cause connection failures.
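The identity check that subjectAltNames enforces can be pictured as a structured comparison of SPIFFE IDs. The sketch below parses the Istio-style layout used above (trust domain, namespace, service account); the function names are hypothetical and this is not how the Envoy sidecar is implemented.

```python
from urllib.parse import urlparse


def parse_spiffe_id(spiffe_id: str) -> dict:
    """Split spiffe://<trust-domain>/ns/<namespace>/sa/<service-account> into parts."""
    parsed = urlparse(spiffe_id)
    parts = parsed.path.strip("/").split("/")
    if parsed.scheme != "spiffe" or len(parts) != 4 or parts[0] != "ns" or parts[2] != "sa":
        raise ValueError(f"unexpected SPIFFE ID layout: {spiffe_id!r}")
    return {
        "trust_domain": parsed.netloc,
        "namespace": parts[1],
        "service_account": parts[3],
    }


def peer_is_expected(presented: str, expected: str) -> bool:
    """Accept the peer only if every component matches the expected identity."""
    return parse_spiffe_id(presented) == parse_spiffe_id(expected)


EXPECTED = "spiffe://multicloud.local/ns/fraud-ns/sa/fraud-detection"
print(parse_spiffe_id(EXPECTED))
print(peer_is_expected("spiffe://multicloud.local/ns/fraud-ns/sa/other-service", EXPECTED))
```

A compromised workload in the same namespace still presents a different service account component, so the comparison fails.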

Implementing Workload Identity Federation

Configure the GKE payment service to exchange its Google service account token for Azure AD credentials. This eliminates the need to store long-lived Azure credentials in the GKE cluster:

```yaml
apiVersion: v1
kind: ServiceAccount
metadata:
  name: payment-processor
  namespace: payments
  annotations:
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: payment-processor
  namespace: payments
spec:
  selector:  # required by apps/v1; must match the pod template labels
    matchLabels:
      app: payment-processor
  template:
    metadata:
      labels:
        app: payment-processor
    spec:
      serviceAccountName: payment-processor
      containers:
      - name: processor
        image: gcr.io/my-gcp-project/payment-processor:v2.1
        env:
        - name: FRAUD_SERVICE_URL
          value: "https://fraud-detection.fraud-ns.svc.cluster.azure:8443"
        - name: AZURE_FEDERATED_TOKEN_FILE
          value: "/var/run/secrets/azure/token"
        volumeMounts:
        - name: azure-identity-token
          mountPath: /var/run/secrets/azure
      volumes:
      - name: azure-identity-token
        projected:
          sources:
          - serviceAccountToken:
              path: token
              expirationSeconds: 3600
              audience: api://AzureADTokenExchange
```

💡 Pro Tip: Set token expiration to 3600 seconds (1 hour) to balance security with performance. Shorter expirations increase token exchange overhead, while longer periods expand the blast radius of compromised tokens.

The application code must implement the token exchange logic. When the payment processor starts, it reads the Kubernetes service account token that GKE projects into the mounted volume, sends it to Azure AD’s token endpoint as a client assertion, and receives an Azure AD token in return. Cache this token until it approaches expiration, then refresh it. The Azure SDK’s DefaultAzureCredential class handles this exchange automatically when AZURE_CLIENT_ID and AZURE_TENANT_ID are set alongside AZURE_FEDERATED_TOKEN_FILE.
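For services that cannot use the Azure SDK, the exchange and caching logic might look roughly like the following. This is a sketch under assumptions: `build_azure_token_request` and `CachedToken` are hypothetical names, the tenant and client IDs are placeholders, and the actual HTTP call is left to an injected `fetch` function so the example stays offline.

```python
import time


def build_azure_token_request(tenant_id, client_id, federated_token, scope):
    """Return (url, form) for a client-credentials request using a federated token."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "scope": scope,
        "client_assertion_type": "urn:ietf:params:oauth:client-assertion-type:jwt-bearer",
        "client_assertion": federated_token,  # contents of AZURE_FEDERATED_TOKEN_FILE
    }
    return url, form


class CachedToken:
    """Reuse a token until it is within refresh_margin seconds of expiring."""

    def __init__(self, fetch, refresh_margin=300, clock=time.monotonic):
        self._fetch = fetch          # callable returning (token, lifetime_seconds)
        self._margin = refresh_margin
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        now = self._clock()
        if self._token is None or now >= self._expires_at - self._margin:
            self._token, lifetime = self._fetch()
            self._expires_at = now + lifetime
        return self._token


url, form = build_azure_token_request(
    "TENANT_ID", "CLIENT_ID", "projected-k8s-token", "api://fraud-detection/.default"
)
print(url)
```

Injecting the clock and fetch function keeps the refresh behavior easy to unit test without calling Azure AD.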

Monitoring Cross-Cloud Traffic

Deploy Prometheus and Grafana to aggregate metrics from both clouds. Configure each cluster’s Prometheus instance to scrape local metrics, then set up a central Grafana instance that queries both Prometheus servers as separate data sources. This approach avoids egress costs from pulling all raw metrics to a single location.

Configure Istio telemetry to tag traffic with source and destination cloud metadata:

```yaml
apiVersion: telemetry.istio.io/v1alpha1
kind: Telemetry
metadata:
  name: cross-cloud-metrics
  namespace: istio-system
spec:
  metrics:
  - providers:
    - name: prometheus
    overrides:
    - match:
        metric: ALL_METRICS
      # Custom dimensions are declared as tag overrides in the Telemetry API
      tagOverrides:
        source_cloud:
          value: 'source.workload.namespace | "unknown"'
        destination_cloud:
          value: 'destination.workload.namespace | "unknown"'
        cross_cloud_latency:
          value: 'response.duration | 0'
```

This enables dashboards that visualize cross-cloud latency, error rates, and certificate expiration times. Monitor VPN tunnel health metrics alongside application metrics to correlate network issues with service degradation. Set up alerts for certificate expiration at 30, 14, and 7 days before expiry; certificate rotation failures are a common cause of cross-cloud mesh outages.
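The 30/14/7-day alerting schedule is simple enough to express directly. The sketch below assumes you already have each certificate’s expiry timestamp from your own tooling; the function name and threshold constant are hypothetical.

```python
from datetime import datetime, timedelta, timezone

ALERT_THRESHOLDS_DAYS = (30, 14, 7)


def expiry_alerts(not_after: datetime, now: datetime) -> list:
    """Return every threshold (in days) that the certificate has already crossed."""
    days_left = (not_after - now).days
    return [t for t in ALERT_THRESHOLDS_DAYS if days_left <= t]


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
for days in (40, 10, 3):
    print(days, expiry_alerts(now + timedelta(days=days), now))
```

Returning all crossed thresholds rather than only the nearest one makes it easy to escalate severity as expiry approaches.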

With service-to-service communication secured and monitored, the final challenge becomes operational: troubleshooting connectivity issues across cloud boundaries and optimizing costs when data egress fees compound across multiple network hops.

Operational Challenges: Troubleshooting and Cost Optimization

Running production workloads across GCP and Azure introduces operational complexity that single-cloud environments avoid. Network latency spikes, unexpected egress charges, and authentication failures across cloud boundaries require systematic approaches to diagnosis and optimization. Effective multi-cloud operations demand proactive monitoring, cost-aware traffic engineering, and infrastructure automation that prevents configuration drift.

Debugging Cross-Cloud Connectivity Issues

When cross-cloud traffic stops flowing, VPC Flow Logs provide the first line of defense. In GCP, enable flow logs on subnets carrying inter-cloud traffic to capture packet-level metadata:

```bash
gcloud compute networks subnets update azure-interconnect-subnet \
  --region=us-central1 \
  --enable-flow-logs \
  --logging-aggregation-interval=interval-5-sec \
  --logging-flow-sampling=1.0 \
  --logging-metadata=include-all
```

Azure’s equivalent, NSG Flow Logs, requires attaching a Network Watcher to your ExpressRoute-connected VNet. Query flow logs using BigQuery or Azure Data Explorer to identify asymmetric routing, a common issue where outbound traffic uses Cloud Interconnect but return traffic routes through public internet due to misconfigured route priorities.

```python
from google.cloud import bigquery

client = bigquery.Client()

# Sum bytes per source/destination pair per minute for today's flows
# whose destination matches 10.20.%
query = """
SELECT
  jsonPayload.connection.src_ip AS src_ip,
  jsonPayload.connection.dest_ip AS dest_ip,
  TIMESTAMP_TRUNC(timestamp, MINUTE) AS minute,
  SUM(CAST(jsonPayload.bytes_sent AS INT64)) AS bytes_sent
FROM `project-id.vpc_flows.compute_googleapis_com_vpc_flows_*`
WHERE jsonPayload.connection.dest_ip LIKE '10.20.%'
  AND DATE(timestamp) = CURRENT_DATE()
GROUP BY src_ip, dest_ip, minute
ORDER BY bytes_sent DESC
LIMIT 100
"""

results = client.query(query).result()
for row in results:
    print(f"{row.src_ip} -> {row.dest_ip}: {row.bytes_sent / 1e9:.2f} GB at {row.minute}")
```

Beyond flow logs, implementing active health checks between cloud environments surfaces connectivity degradation before it impacts production. Deploy lightweight monitoring agents in both clouds that continuously measure ICMP reachability, TCP connection establishment time, and application-layer response latency. Set alert thresholds at p95 latency rather than averages. Cross-cloud network paths often exhibit bimodal latency distributions where 95% of requests complete quickly but 5% experience 10x slowdowns due to routing instability.
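A small numeric example shows why averages hide the slow tail of a bimodal distribution. The samples below are synthetic (90 fast requests and 10 slow ones, chosen so the tail is visible at p95), and `percentile` is a hand-rolled nearest-rank helper rather than a library function.

```python
from statistics import mean


def percentile(samples, p: int):
    """Nearest-rank percentile for an integer p between 1 and 100."""
    ordered = sorted(samples)
    rank = (p * len(ordered) + 99) // 100  # integer ceiling of p% of the sample count
    return ordered[max(rank, 1) - 1]


# Synthetic bimodal latencies in milliseconds: most requests are fast, a few are 10x slower.
latencies_ms = [10] * 90 + [100] * 10

print("mean:", mean(latencies_ms))
print("p50:", percentile(latencies_ms, 50))
print("p95:", percentile(latencies_ms, 95))
```

The mean sits close to the fast mode, while p95 lands squarely on the slow one, which is the signal an alert should key on.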

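💡 Pro Tip: Set up alerting when TCP retransmissions exceed 1% of segments sent. VPC Flow Logs do not record retransmissions, so collect the counts from host-level TCP statistics or packet captures on your probe instances. A sustained rate above 1% indicates either path MTU discovery issues or intermediate network congestion on your interconnect circuit.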
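One way to obtain a retransmission ratio on a Linux host is to read the kernel's cumulative TCP counters from /proc/net/snmp. The sketch below parses that file's `Tcp:` header and value lines; the function name is hypothetical, and because the counters accumulate since boot, a real alert would compare two snapshots taken some interval apart rather than use the raw ratio.

```python
def retransmit_ratio(snmp_text: str) -> float:
    """Return RetransSegs / OutSegs from the contents of /proc/net/snmp."""
    # The file holds two "Tcp:" lines: field names, then their values.
    tcp_lines = [
        line.split() for line in snmp_text.splitlines() if line.startswith("Tcp:")
    ]
    if len(tcp_lines) < 2:
        raise ValueError("expected a Tcp header line and a Tcp value line")
    header, values = tcp_lines[0], tcp_lines[1]
    counters = dict(zip(header[1:], (int(v) for v in values[1:])))
    sent = counters["OutSegs"]
    return counters["RetransSegs"] / sent if sent else 0.0


if __name__ == "__main__":
    with open("/proc/net/snmp") as f:
        ratio = retransmit_ratio(f.read())
    print(f"retransmit ratio since boot: {ratio:.4%}")
```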

Optimizing Data Transfer Costs

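Cross-cloud egress is expensive: GCP charges roughly $0.08-$0.12/GB for internet egress, while private interconnects run roughly $0.01-$0.02/GB (rates vary by region and change over time). Traffic engineering becomes critical when moving terabytes daily. Use Cloud Router's BGP settings, such as advertised route priority (MED), to prefer Cloud Interconnect paths over internet gateways, then measure how much traffic you are actually sending. The following script totals a week of instance egress from Cloud Monitoring and prices it at the interconnect rate: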

from datetime import datetime, timedelta, timezone

from google.cloud import monitoring_v3

client = monitoring_v3.MetricServiceClient()
project_name = "projects/my-gcp-project"

# Query window: the last seven days, in UTC.
now = datetime.now(timezone.utc)
interval = monitoring_v3.TimeInterval(
    {"end_time": now, "start_time": now - timedelta(days=7)}
)

# sent_bytes_count covers all bytes sent by instances, not only traffic
# bound for Azure, so treat the total as an upper bound.
results = client.list_time_series(
    request={
        "name": project_name,
        "filter": 'metric.type="compute.googleapis.com/instance/network/sent_bytes_count"',
        "interval": interval,
        "view": monitoring_v3.ListTimeSeriesRequest.TimeSeriesView.FULL,
    }
)

total_egress_gb = sum(
    point.value.int64_value for series in results for point in series.points
) / 1e9

estimated_cost = total_egress_gb * 0.02  # Private interconnect rate
print(f"Weekly egress: {total_egress_gb:.2f} GB (${estimated_cost:.2f})")

Cost optimization extends beyond choosing the right network path. Compress data at the application layer before transmission; gzip typically achieves a 3-5x reduction for JSON payloads and log streams. For batch transfers exceeding 100 GB, evaluate whether temporarily provisioning a dedicated interconnect VLAN attachment justifies the setup cost compared with paying premium egress rates. Some organizations find that scheduling large transfers during off-peak hours and using committed use discounts on interconnect capacity reduces monthly networking costs by 40-60%.
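The trade-off between network path and compression can be made concrete with some back-of-the-envelope arithmetic. The sketch below uses the per-GB rates quoted above and a hypothetical 1 TB/day workload; it deliberately ignores fixed interconnect port and attachment fees, which would need to be added for a real comparison.

```python
def monthly_transfer_cost(
    gb_per_day: float,
    rate_per_gb: float,
    compression_ratio: float = 1.0,
    days: int = 30,
) -> float:
    """Estimate monthly per-GB transfer charges in dollars.

    compression_ratio is uncompressed size / compressed size, so 3.0
    means the payload shrinks to one third before it crosses the wire.
    """
    if compression_ratio < 1.0:
        raise ValueError("compression_ratio must be >= 1.0")
    billed_gb_per_day = gb_per_day / compression_ratio
    return billed_gb_per_day * rate_per_gb * days


if __name__ == "__main__":
    daily_gb = 1000.0  # Hypothetical workload: about 1 TB per day.
    scenarios = {
        "internet, uncompressed": monthly_transfer_cost(daily_gb, 0.08),
        "interconnect, uncompressed": monthly_transfer_cost(daily_gb, 0.02),
        "interconnect, 3x gzip": monthly_transfer_cost(daily_gb, 0.02, 3.0),
    }
    for name, cost in scenarios.items():
        print(f"{name}: ${cost:,.2f}/month")
```

Running the scenarios side by side shows how the path choice and compression multiply rather than add, which is why both are worth evaluating before committing to interconnect capacity.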

Monitoring SLA Compliance and Performance

Monitor SLA compliance by tracking availability metrics for your Private Service Connect endpoints and interconnect circuits. Both providers publish availability SLAs for dedicated connectivity (for GCP Dedicated Interconnect, 99.9% or 99.99% depending on the redundancy topology; check Azure's current ExpressRoute terms), but an uptime figure says nothing about speed. Measuring actual latency percentiles (p50, p95, p99) reveals performance degradation before it impacts users. Deploy distributed tracing across cloud boundaries using OpenTelemetry to attribute latency to specific network hops versus application processing time.

Create custom dashboards that correlate network metrics with business KPIs. For example, tracking the ratio of successful cross-cloud API calls to total attempts reveals whether network issues or application errors drive user-facing failures. Establishing baseline performance during known-good periods enables anomaly detection when latency suddenly increases by 2x or packet loss climbs above 0.1%.
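The baseline comparison described above can be sketched as a simple threshold check. The 2x latency factor and 0.1% loss ceiling come from the text; the `Baseline` type and `detect_anomalies` function are hypothetical names for illustration, and a production system would likely smooth over several measurement windows before alerting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    """Latency recorded during a known-good period."""
    p95_latency_ms: float


def detect_anomalies(
    baseline: Baseline,
    p95_latency_ms: float,
    packets_sent: int,
    packets_lost: int,
    latency_factor: float = 2.0,
    max_loss: float = 0.001,  # 0.1%
) -> list[str]:
    """Return the names of the checks that the current window fails."""
    findings = []
    if p95_latency_ms >= latency_factor * baseline.p95_latency_ms:
        findings.append("latency")
    loss = packets_lost / packets_sent if packets_sent else 0.0
    if loss > max_loss:
        findings.append("packet_loss")
    return findings


if __name__ == "__main__":
    baseline = Baseline(p95_latency_ms=20.0)
    print(detect_anomalies(baseline, 45.0, packets_sent=100_000, packets_lost=50))
```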

Automation Strategies

Managing cross-cloud networking through consoles guarantees configuration drift. Treat network infrastructure as code using Terraform with separate state files per cloud provider. Implement CI/CD pipelines that validate BGP route propagation after each change, using synthetic probes to verify bi-directional reachability before merging infrastructure updates.

Automate the creation of network baselines after each infrastructure change. Store routing tables, firewall rules, and flow log configurations in version control, then run automated diff checks to detect unauthorized modifications. For organizations managing dozens of interconnects across regions, consider building a central network inventory service that periodically polls both clouds’ APIs to reconcile actual state against desired configuration.
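A drift check of this kind can be as simple as a textual diff between the version-controlled snapshot and the state fetched from the cloud APIs. The sketch below assumes both have already been loaded into plain dictionaries; the route data and function names are hypothetical, and fetching live state from GCP or Azure is left out.

```python
import difflib
import json


def snapshot_lines(config: dict) -> list[str]:
    """Serialize a config deterministically so diffs are stable."""
    return json.dumps(config, indent=2, sort_keys=True).splitlines()


def drift_report(desired: dict, actual: dict) -> list[str]:
    """Return unified-diff lines; an empty list means no drift."""
    return list(
        difflib.unified_diff(
            snapshot_lines(desired),
            snapshot_lines(actual),
            fromfile="desired",
            tofile="actual",
            lineterm="",
        )
    )


if __name__ == "__main__":
    # Hypothetical route baseline vs. what the cloud API currently reports.
    desired = {"routes": {"10.20.0.0/16": {"next_hop": "interconnect", "priority": 100}}}
    actual = {"routes": {"10.20.0.0/16": {"next_hop": "interconnect", "priority": 1000}}}
    for line in drift_report(desired, actual):
        print(line)
```

Sorting keys before serializing matters here: without it, two semantically identical configurations could produce a noisy diff purely because of key ordering.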

With monitoring and cost controls established, the final consideration is maintaining security posture across cloud boundaries. Even a well-secured network requires ongoing validation that identity federation continues functioning correctly under real-world conditions.

Key Takeaways

- Use Cloud VPN for initial proof-of-concept cross-cloud connectivity, then migrate to dedicated interconnects for production workloads requiring high throughput or strict latency SLAs

- Implement GCP Workload Identity Federation with Azure AD to eliminate static credentials and achieve unified identity management across both platforms

- Choose hub-and-spoke topology with Azure Virtual WAN when centralizing security controls, or mesh topology when optimizing for lowest latency between specific regions

- Monitor cross-cloud egress costs weekly and implement traffic engineering rules to route bulk transfers through dedicated interconnects rather than VPN tunnels