Building a GPT-Powered Code Review Assistant: From API Calls to CI Pipeline Integration

Your team’s pull request queue is growing faster than reviewers can keep up, and critical bugs are slipping through because senior engineers are burned out from context-switching. The same patterns appear in PR after PR—unclosed resources, missing input validation, console.log statements left in production code—and catching them manually is expensive, repetitive work that scales linearly with your team size.

This is a solvable problem, but not in the way most teams approach it. Linting and static analysis catch syntax errors and style violations, but they miss the semantic patterns that require reading code in context: a SQL query built with string concatenation, an API endpoint that logs request bodies containing PII, a retry loop with no backoff. These are the gaps where a language model earns its place in your review workflow.

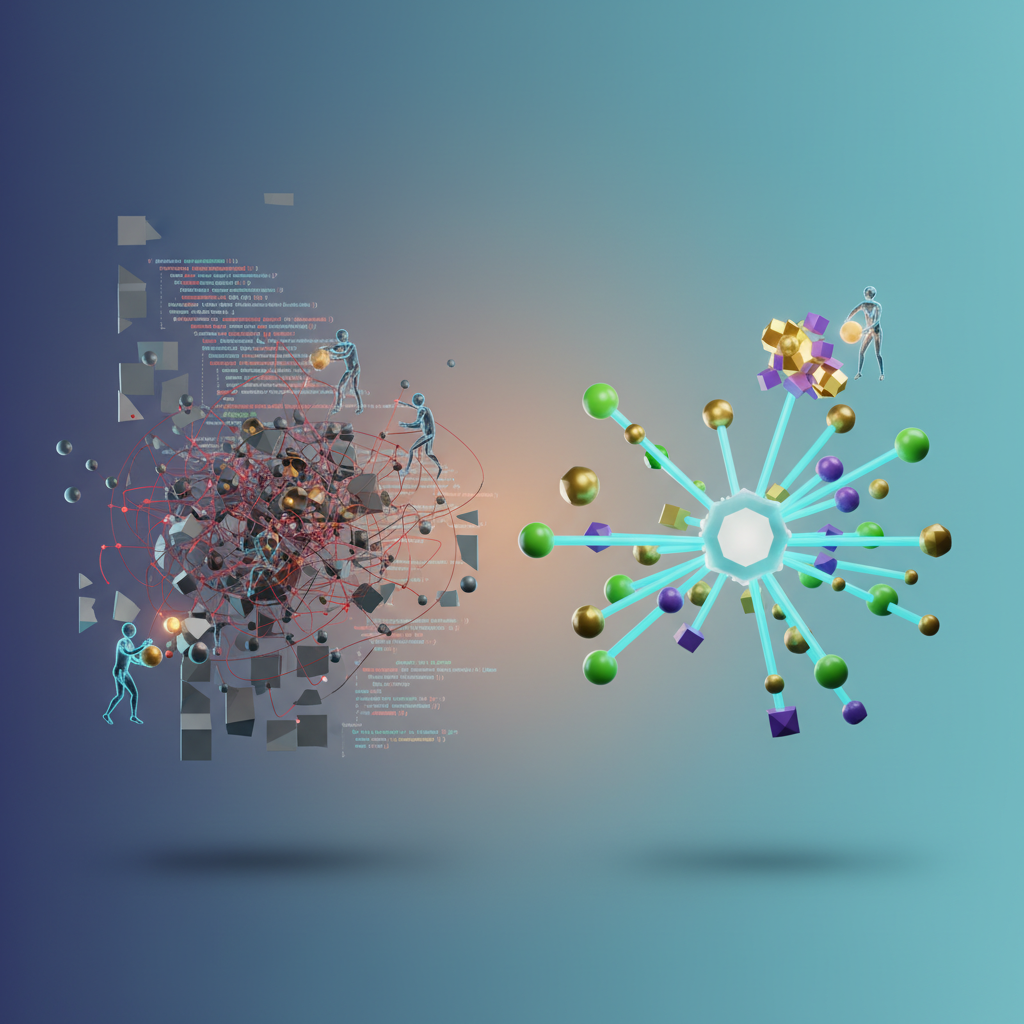

The practical question is not whether GPT can review code—it can, and does it well on a defined class of problems—but how to build the integration correctly so it adds signal rather than noise. A naive implementation that dumps a full diff into a prompt and asks “find bugs” produces verbose, low-confidence output that reviewers learn to ignore within a week. A well-engineered one surfaces actionable findings at the line level, integrates cleanly into your existing CI pipeline, and knows when to stay silent.

What follows is a ground-up walkthrough of building that tool: structuring prompts for precision, parsing diffs into reviewable chunks, calling the OpenAI API in a way that keeps costs predictable, and wiring the whole thing into GitHub Actions or GitLab CI. Before writing the first line of code, though, it’s worth being precise about what this class of tool actually solves—and where its limits are.

Why Manual Code Review Doesn’t Scale (and What AI Can Fix)

At a team of five engineers, code review is a conversation. At twenty, it’s a bottleneck. Senior engineers can easily spend 10 to 15 percent of their working hours reviewing code, time pulled directly from design work, architecture decisions, and deep implementation work that only they can do. Every PR waiting in the queue represents blocked velocity: a feature stalled, a bug fix delayed, a developer sitting idle or, worse, context-switching into something else while waiting for feedback.

The problem isn’t that engineers review code slowly. It’s that review work is interrupt-driven by nature. A senior engineer deep in a complex refactor gets pinged for a review, switches context, catches three nits and a missing null check, then spends the next fifteen minutes recovering the mental state they just abandoned. Multiply that by ten PRs per day across a team, and you’ve built a distributed context-switching tax that compounds across every sprint.

Where AI Review Earns Its Place

Automated code review with a model like GPT-4 addresses a specific and well-defined slice of this problem. The tasks where it genuinely adds value share a common characteristic: they are pattern-based and can be judged from the diff and its immediate surroundings, without organizational or historical context.

- Style and convention enforcement — inconsistent naming, missing error handling, deviation from established patterns

- Common vulnerability classes — SQL injection surfaces, hardcoded credentials, unvalidated input reaching sensitive operations

- Boilerplate correctness — missing test coverage for new public methods, undocumented exported functions, obvious copy-paste errors

- Diff-level logic issues — off-by-one errors, inverted boolean conditions, resource leaks visible within the changed code

These are precisely the things human reviewers check first and find least interesting. Automating them isn’t a compromise—it’s a better use of human attention.

Where Human Judgment Stays Essential

AI code review has hard boundaries. It cannot evaluate whether a new abstraction fits your system’s architecture three years from now. It doesn’t know that your team deliberately avoided a specific pattern because of an incident last quarter. It cannot weigh business tradeoffs embedded in implementation choices that only make sense with organizational context.

Treating AI review as a replacement for human review sets the project up for failure. Treating it as a first-pass filter that elevates the quality of what reaches human reviewers sets it up for success.

💡 Pro Tip: Frame AI review output to your team as “automated pre-screening,” not “automated approval.” This distinction prevents over-reliance and keeps engineers engaged with the tool’s output rather than rubber-stamping it.

With that mental model established, the next step is designing a system architecture that makes these capabilities reliable and maintainable in production—which means thinking carefully about how diff parsing, prompt construction, and API interaction fit together before writing the first function.

Architecture of a GPT Code Review System

Before writing a single line of integration code, getting the architecture right saves you from painful refactors later. A GPT-powered code review system is not just an API wrapper — it is a pipeline with distinct responsibilities at each stage.

The Five Core Components

Diff Extractor pulls the raw unified diff from your VCS. This component normalizes the diff format, strips binary file changes, and tags each hunk with file path and line range metadata. Feeding raw git diff output directly into a prompt is a mistake — the noise-to-signal ratio destroys review quality.

Context Builder enriches the diff with surrounding information: file type, language, affected function signatures, and relevant imports. For a changed function, including the function signature three levels up in the call stack often means the difference between a generic comment and a precise one.

Prompt Engine assembles the final prompt from a template, the enriched diff, and any project-specific conventions. This component enforces the token budget, decides whether a diff needs chunking, and selects the appropriate model tier.

Response Parser converts the model’s structured output into machine-readable review items. Using JSON-mode responses with a defined schema eliminates fragile regex parsing and makes downstream formatting deterministic.

Comment Formatter maps parsed review items back to specific file paths and line numbers, producing the payload shape required by your VCS API — GitHub’s pull request review API expects a different structure than GitLab’s.

Stateless vs. Stateful Review

For single-file changes or small PRs under 200 changed lines, a stateless approach works well: each API call is independent, and there is no session state to manage. For multi-file PRs, a stateful approach that maintains a summary of already-reviewed files lets the model flag cross-file issues — for example, a type defined in models.py used incorrectly in serializers.py. The tradeoff is added complexity in state serialization and higher total token usage.

Token Budget Management

Large diffs require chunking. The naive approach, truncating at the context limit, drops the tail of the diff silently. The correct approach splits diffs at hunk boundaries, preserves file-level metadata in each chunk’s system prompt, and merges the resulting review items before formatting. A 4,000-token chunk size with a 200-token overlap between adjacent chunks is a reasonable starting point that handles most real-world PRs without losing continuity.
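As a rough illustration of splitting at hunk boundaries, the sketch below groups hunk texts into chunks under a token budget. It assumes a crude estimate of about four characters per token rather than a real tokenizer, and the names estimate_tokens and chunk_hunks are hypothetical. Overlap between chunks is left out to keep the example short.

```python
def estimate_tokens(text: str) -> int:
    """Crude token estimate (assumption: roughly 4 characters per token)."""
    return max(1, len(text) // 4)


def chunk_hunks(hunks: list[str], budget: int = 4000) -> list[list[str]]:
    """Group hunk texts into chunks without ever splitting a hunk.

    A hunk larger than the budget still gets a chunk of its own, so
    nothing is dropped silently.
    """
    chunks: list[list[str]] = []
    current: list[str] = []
    used = 0
    for hunk in hunks:
        cost = estimate_tokens(hunk)
        # Close the current chunk before it would exceed the budget.
        if current and used + cost > budget:
            chunks.append(current)
            current, used = [], 0
        current.append(hunk)
        used += cost
    if current:
        chunks.append(current)
    return chunks
```

Each resulting chunk would be reviewed in its own API call, with the review items merged afterwards. Swapping estimate_tokens for a real tokenizer tightens the budget without changing the grouping logic.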

Model Selection

GPT-4o-mini covers the majority of review workloads: linting violations, naming issues, and obvious logic errors at low latency and cost. Reserve GPT-4o for security-sensitive files, complex algorithmic changes, or reviews where the context builder has injected substantial surrounding code.

💡 Pro Tip: Route by file path pattern. Files matching **/auth/** or **/crypto/** go to GPT-4o automatically; everything else goes to GPT-4o-mini. This single routing rule can cut costs by 60–70% on typical engineering teams without sacrificing review quality on high-risk code.
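One way to express that routing rule is sketched below. It approximates the glob patterns by checking directory names in the path, which is an assumption made for simplicity; HIGH_RISK_DIRS and select_model are hypothetical names.

```python
from pathlib import PurePosixPath

# Hypothetical routing table: directory names that mark high-risk code.
HIGH_RISK_DIRS = {"auth", "crypto"}


def select_model(file_path: str) -> str:
    """Pick a model tier for a changed file based on its directories."""
    # Ignore the file name itself; only directory components count.
    directories = PurePosixPath(file_path).parts[:-1]
    if HIGH_RISK_DIRS.intersection(directories):
        return "gpt-4o"
    return "gpt-4o-mini"
```

With this rule, src/auth/login.py is routed to gpt-4o, while src/utils/auth.py stays on gpt-4o-mini because only the directory names are matched.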

With the architecture mapped out, the next section walks through the actual implementation — building the review engine using the OpenAI Python SDK, including structured output schemas and chunking logic.

Building the Core Review Engine with the OpenAI API

With the architecture defined, it’s time to build the component that does the actual work: the CodeReviewEngine class that accepts a raw git diff, sends it to the OpenAI API, and returns structured, actionable feedback. This section walks through each layer of that pipeline—diff parsing, prompt construction, API invocation, and response handling—explaining the design decisions that make the engine reliable in production CI environments.

Parsing Git Diffs into Reviewable Hunks

Before invoking the API, you need to extract meaningful context from the diff. Raw git diff output includes noise—file mode changes, index hashes, binary file markers—that adds tokens without adding value. The goal is to isolate changed hunks alongside enough surrounding context for the model to reason about intent.

import re
from dataclasses import dataclass, field


@dataclass
class DiffHunk:
    filename: str
    start_line: int
    added_lines: list[str] = field(default_factory=list)
    removed_lines: list[str] = field(default_factory=list)
    context_lines: list[str] = field(default_factory=list)


def parse_diff(raw_diff: str) -> list[DiffHunk]:
    hunks = []
    current_file = None
    current_hunk = None

    for line in raw_diff.splitlines():
        if line.startswith("diff --git"):
            # New file: match " b/" with its leading space so paths such as
            # lib/ or db/ are not mistaken for the separator, and close the
            # previous hunk so header lines are not recorded as context.
            match = re.search(r" b/(.+)$", line)
            current_file = match.group(1) if match else "unknown"
            current_hunk = None
        elif line.startswith("@@"):
            # Hunk header, e.g. "@@ -10,6 +12,8 @@": take the new-file start line.
            match = re.search(r"\+(\d+)", line)
            start = int(match.group(1)) if match else 0
            current_hunk = DiffHunk(filename=current_file, start_line=start)
            hunks.append(current_hunk)
        elif current_hunk is not None:
            if line.startswith("+") and not line.startswith("+++"):
                current_hunk.added_lines.append(line[1:])
            elif line.startswith("-") and not line.startswith("---"):
                current_hunk.removed_lines.append(line[1:])
            else:
                current_hunk.context_lines.append(line[1:])

    return hunks

This parser produces a clean list of DiffHunk objects, each carrying the filename and line offset needed to generate precise review comments that map back to specific positions in the PR. The start_line field is particularly important: it anchors each hunk to an absolute position in the new file, which the GitHub API requires when posting inline review comments. Without it, you can generate feedback but have nowhere deterministic to attach it.

Context lines are preserved separately from added and removed lines. When you serialize the payload for the model, you’ll use this separation to prefix lines with + and - markers, giving the model the same visual cues a human reviewer sees in a standard diff view.
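A minimal sketch of that serialization step follows. The Hunk class is a stand-in for the DiffHunk dataclass above so the example runs on its own, and serialize_hunk is a hypothetical helper name. Because the parser stores the three line groups separately, the original interleaving of lines is not preserved; the sketch simply emits context first, then removals, then additions.

```python
from dataclasses import dataclass, field


@dataclass
class Hunk:
    """Stand-in for the DiffHunk dataclass (same fields)."""
    filename: str
    start_line: int
    added_lines: list[str] = field(default_factory=list)
    removed_lines: list[str] = field(default_factory=list)
    context_lines: list[str] = field(default_factory=list)


def serialize_hunk(hunk: Hunk) -> str:
    """Render one hunk as text with diff-style prefixes."""
    lines = [f"File: {hunk.filename} (starting at line {hunk.start_line})"]
    lines += [f"  {text}" for text in hunk.context_lines]   # unchanged context
    lines += [f"- {text}" for text in hunk.removed_lines]   # removed lines
    lines += [f"+ {text}" for text in hunk.added_lines]     # added lines
    return "\n".join(lines)
```

If exact ordering matters for a given review, an alternative design is to keep the raw hunk text alongside the parsed groups and send that instead.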

Constructing the System Prompt for Structured Output

The system prompt is where you establish the contract between your code and the model. Rather than asking for prose feedback, instruct the model to return a JSON array. This makes downstream processing deterministic and eliminates regex parsing of free-form text.

SYSTEM_PROMPT = """You are an expert code reviewer. Analyze the provided git diff hunks and return feedback as a JSON array.

Each item must follow this schema:
{
  "filename": "<path/to/file.py>",
  "line": <integer, the line number in the new file>,
  "severity": "critical" | "warning" | "suggestion",
  "category": "security" | "performance" | "correctness" | "style" | "maintainability",
  "comment": "<concise, actionable feedback>",
  "suggestion": "<optional code snippet showing the fix>"
}

Rules:
- Only report issues worth a senior engineer's attention.
- Skip formatting nitpicks unless they introduce ambiguity.
- Return an empty array [] if the diff is clean.
- Output raw JSON only. No markdown fences, no prose."""

Pro Tip: Explicitly instructing the model to return raw JSON without markdown fences prevents a common failure mode where the response wraps the payload in ```json ... ``` blocks, breaking json.loads() without warning.

The severity and category fields are not cosmetic. They give downstream consumers—your PR formatter, a Slack notifier, a metrics dashboard—a stable vocabulary to filter and route feedback. A CI gate that blocks merges on critical issues needs a field it can compare without parsing natural language.
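To make the CI gate concrete, here is a small sketch that decides whether a merge should be blocked based only on the severity field. The function name should_block_merge and the default set of blocking severities are assumptions for illustration.

```python
def should_block_merge(
    review_items: list[dict],
    blocking: frozenset[str] = frozenset({"critical"}),
) -> bool:
    """Return True when any review item carries a blocking severity."""
    return any(item.get("severity") in blocking for item in review_items)


def gate_exit_code(review_items: list[dict]) -> int:
    """Map the gate decision to a process exit code for a CI step."""
    return 1 if should_block_merge(review_items) else 0
```

A CI step could call gate_exit_code on the engine's output and pass the result to sys.exit, so that only critical findings fail the check while warnings and suggestions remain advisory.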

Calling the API with Retry Logic

Network timeouts and transient rate-limit errors are a reality in CI environments. Wrap your API call in exponential backoff so a single 429 response doesn’t fail an entire pipeline run.

import json
import time

import openai
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from environment


def call_with_retry(messages: list[dict], model: str = "gpt-4o", retries: int = 3) -> str:
    delay = 2
    for attempt in range(retries):
        try:
            response = client.chat.completions.create(
                model=model,
                messages=messages,
                temperature=0.2,
                max_tokens=2048,
                response_format={"type": "json_object"},
            )
            return response.choices[0].message.content
        except (openai.RateLimitError, openai.APITimeoutError):
            # Retry transient failures with exponential backoff;
            # re-raise the original error on the final attempt.
            if attempt == retries - 1:
                raise
            time.sleep(delay)
            delay *= 2
    raise RuntimeError("retries must be at least 1")

Setting temperature=0.2 keeps the model’s output largely consistent across repeated runs on the same diff, which matters when you’re comparing review quality between prompt iterations. Higher values introduce randomness that makes it difficult to distinguish prompt changes from sampling noise.

The response_format={"type": "json_object"} parameter, available on gpt-4o and gpt-4-turbo, enforces valid JSON at the API level rather than relying solely on prompt instructions. Note that this parameter requires your prompt to explicitly mention JSON; the API returns an error otherwise. JSON mode produces a single top-level JSON object, so a prompt that asks for a bare array often comes back wrapped in an object, a case the engine below normalizes. It also does not enforce your schema, only that the output is parseable JSON, so validation logic in the caller layer remains necessary.
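The caller-side validation mentioned above might look like the following sketch. It checks each item against the schema from the system prompt; the function name validate_item and the exact error messages are assumptions, and a schema library could replace the hand-written checks.

```python
ALLOWED_SEVERITIES = {"critical", "warning", "suggestion"}
ALLOWED_CATEGORIES = {"security", "performance", "correctness", "style", "maintainability"}


def validate_item(item: object) -> list[str]:
    """Return a list of schema problems; an empty list means the item is valid."""
    if not isinstance(item, dict):
        return ["item is not a JSON object"]
    problems = []
    filename = item.get("filename")
    if not isinstance(filename, str) or not filename:
        problems.append("filename must be a non-empty string")
    line = item.get("line")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(line, bool) or not isinstance(line, int) or line < 1:
        problems.append("line must be a positive integer")
    if item.get("severity") not in ALLOWED_SEVERITIES:
        problems.append("severity is not one of the allowed values")
    if item.get("category") not in ALLOWED_CATEGORIES:
        problems.append("category is not one of the allowed values")
    comment = item.get("comment")
    if not isinstance(comment, str) or not comment.strip():
        problems.append("comment must be a non-empty string")
    return problems
```

Items that fail validation can be dropped and logged rather than posted, which keeps one malformed entry from discarding the rest of the review.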

Assembling the Engine

With parsing and API calling in place, the CodeReviewEngine class ties everything together:

class CodeReviewEngine:
    def __init__(self, model: str = "gpt-4o"):
        self.model = model

    def review(self, raw_diff: str) -> list[dict]:
        hunks = parse_diff(raw_diff)
        if not hunks:
            return []

        # Serialize each hunk with +/- prefixes, one entry per line, so the
        # last added line never runs into the first removed line.
        diff_payload = "\n\n".join(
            "\n".join(
                [f"File: {h.filename} (starting at line {h.start_line})"]
                + [f"+ {l}" for l in h.added_lines]
                + [f"- {l}" for l in h.removed_lines]
            )
            for h in hunks
        )

        messages = [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": f"Review the following diff:\n\n{diff_payload}"},
        ]

        raw_response = call_with_retry(messages, model=self.model)

        try:
            feedback = json.loads(raw_response)
            return feedback if isinstance(feedback, list) else feedback.get("reviews", [])
        except json.JSONDecodeError as e:
            raise ValueError(f"Model returned unparseable JSON: {e}\nRaw: {raw_response}")

The json.loads fallback handles two response shapes: a bare array (ideal) and a wrapped object with a reviews key (a common model deviation even with explicit instructions). Rather than failing on the wrapped form, the engine normalizes it. An object without a reviews key yields an empty list, and output that is not valid JSON raises a ValueError with the raw response included, which is critical for diagnosing prompt regressions in CI logs where you can’t interactively inspect API responses.

Calling engine.review(diff_text) returns a list of comment objects ready for consumption—each with a filename, line number, severity, and actionable text. At this point you have a fully functional review engine that handles real diffs, retries gracefully under load, and outputs data your application can route directly to a PR comment thread.

The next step is making those reviews genuinely useful. A working engine that returns generic feedback is only half the solution—prompt engineering determines whether the output is signal or noise.

Prompt Engineering for High-Signal Code Reviews

The difference between a code review assistant that engineers trust and one they ignore comes down almost entirely to prompt quality. Generic prompts produce generic feedback — noise that reviewers learn to skip. The techniques in this section produce reviews that read like they came from a senior engineer who knows your codebase.

Structuring the System Prompt

Your system prompt does three jobs: defines the reviewer’s role, enforces an output schema, and establishes a severity taxonomy that maps to real engineering priorities.

SYSTEM_PROMPT = """You are a senior software engineer conducting a code review. Your job is to identify
issues that matter: bugs, security vulnerabilities, performance problems, and violations
of the conventions listed below. Do not comment on style unless it creates ambiguity.

Respond with a JSON array of review comments. Each comment must follow this schema:
{
  "severity": "critical" | "warning" | "suggestion",
  "line": <integer or null>,
  "category": "bug" | "security" | "performance" | "convention" | "readability",
  "message": "<concise description of the issue>",
  "suggestion": "<specific fix or alternative approach>"
}

Severity definitions:
- critical: Must be fixed before merge. Includes bugs, data loss risks, security vulnerabilities.
- warning: Should be fixed. Includes logic errors, performance hotspots, broken conventions.
- suggestion: Optional improvement. Includes readability and minor refactors.

Return an empty array if you find no issues. Do not fabricate issues to appear thorough."""

The explicit instruction not to fabricate issues is load-bearing. Without it, models fill space with trivial observations to signal effort. The structured JSON schema is equally important: freeform prose reviews are harder to deduplicate, filter by severity, or route to the right reviewer. Enforcing a schema at the prompt level means your downstream tooling can treat the output as data, not text.

Injecting Repository-Specific Context

Static system prompts treat every repository identically. A prompt that knows nothing about your stack will flag things your team has deliberately chosen, and miss violations of conventions it was never told about. The fix is a context-builder that reads your project’s conventions at review time:

def build_review_context(repo_config: dict) -> str:
    """
    repo_config example:
    {
        "language": "Python 3.11",
        "framework": "FastAPI",
        "conventions": [
            "Use Pydantic v2 models for all request/response schemas",
            "Database queries must go through the repository layer, never directly in route handlers",
            "All public functions require type annotations",
            "Async functions must be used for any I/O operations"
        ],
        "prohibited_patterns": [
            "print() statements in production code",
            "Hardcoded credentials or API keys",
            "Raw SQL strings outside the db/queries/ directory"
        ]
    }
    """
    conventions_block = "\n".join(f"- {c}" for c in repo_config["conventions"])
    prohibited_block = "\n".join(f"- {p}" for p in repo_config["prohibited_patterns"])

    return f"""Repository context:
Language: {repo_config['language']}
Framework: {repo_config['framework']}

Team conventions (flag violations as 'warning'):
{conventions_block}

Prohibited patterns (flag violations as 'critical'):
{prohibited_block}"""

Store repo_config in a .codereview.yml file at the repository root. This keeps conventions version-controlled and editable by the team, not buried in pipeline configuration. When a convention changes (say, your team migrates from Pydantic v1 to v2), updating a single YAML file updates the reviewer’s behavior for every subsequent PR.

Few-Shot Calibration

Few-shot examples do more than improve accuracy — they calibrate tone. One well-chosen example communicates the expected specificity better than three paragraphs of instructions. It also implicitly demonstrates what the model should not flag: if your example shows a critical finding for SQL injection but ignores a minor naming inconsistency in the same snippet, the model learns the bar for each severity level.

FEW_SHOT_EXAMPLES = [
    {
        "role": "user",
        "content": "Review this diff:\n```python\ndef get_user(user_id):\n    return db.execute(f'SELECT * FROM users WHERE id = {user_id}')\n```"
    },
    {
        "role": "assistant",
        "content": '[{"severity": "critical", "line": 2, "category": "security", '
                   '"message": "SQL injection vulnerability via f-string interpolation.", '
                   '"suggestion": "Use parameterized queries: db.execute(\'SELECT * FROM users WHERE id = ?\', (user_id,))"}]'
    }
]

Inject these examples between the system prompt and the actual diff in every API call. Two to three examples are sufficient; beyond that, you’re burning tokens on diminishing returns. Rotate examples periodically to cover different categories: a security example, a convention violation, and a clean diff that correctly returns an empty array will cover the main behavioral cases.
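The ordering described above can be sketched as a small message builder. The function name build_messages and the wording of the final user message are assumptions; the only point is the position of the examples between the system prompt and the real diff.

```python
def build_messages(system_prompt: str, few_shot_examples: list[dict], diff_text: str) -> list[dict]:
    """Assemble chat messages: system prompt, few-shot pairs, then the real diff."""
    return [
        {"role": "system", "content": system_prompt},
        # Few-shot user/assistant pairs sit between the instructions and the request.
        *few_shot_examples,
        {"role": "user", "content": f"Review this diff:\n{diff_text}"},
    ]
```

Keeping the final user message in the same shape as the example user messages helps the model treat the real diff as one more instance of the demonstrated task.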

Building an Evaluation Harness

Prompt iteration without measurement is guesswork. A minimal evaluation harness runs your prompt against a fixed set of labeled samples and reports precision and recall against expected findings:

from openai import OpenAI


def evaluate_prompt(test_cases: list[dict], client: OpenAI) -> dict:
    true_positives, false_positives, false_negatives = 0, 0, 0

    for case in test_cases:
        # run_review is assumed to wrap the prompt under test and return parsed comments.
        comments = run_review(case["diff"], client)
        found_categories = {c["category"] for c in comments}

        for expected in case["expected_findings"]:
            if expected in found_categories:
                true_positives += 1
            else:
                false_negatives += 1

        for found in found_categories:
            if found not in case["expected_findings"]:
                false_positives += 1

    precision = true_positives / (true_positives + false_positives) if (true_positives + false_positives) > 0 else 0
    recall = true_positives / (true_positives + false_negatives) if (true_positives + false_negatives) > 0 else 0

    return {"precision": round(precision, 3), "recall": round(recall, 3)}

Build your test suite from real PRs: pull ten past reviews where the team caught a real bug, ten where the diff was clean, and five that generated false-positive noise in earlier runs. This distribution matters: a suite weighted toward known bugs will optimize for recall at the expense of precision, and high false positive rates are what destroy adoption. Target precision above 0.85 before deploying to a team workflow. Engineers will stop reading reviews the moment the false positive rate becomes a tax on their attention, and winning that trust back is significantly harder than building it correctly the first time.
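To exercise the scoring logic without spending API calls, one option is to pass the reviewer in as a callable and test it against a stub. The sketch below is a variant of the harness under that assumption; evaluate, stub_reviewer, and the three labeled cases are hypothetical.

```python
from typing import Callable


def evaluate(test_cases: list[dict], reviewer: Callable[[str], list[dict]]) -> dict:
    """Score a reviewer callable by category-level precision and recall."""
    tp = fp = fn = 0
    for case in test_cases:
        expected = set(case["expected_findings"])
        found = {comment["category"] for comment in reviewer(case["diff"])}
        tp += len(expected & found)   # expected and reported
        fn += len(expected - found)   # expected but missed
        fp += len(found - expected)   # reported but not expected
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": round(precision, 3), "recall": round(recall, 3)}


def stub_reviewer(diff: str) -> list[dict]:
    """Hypothetical stand-in for the model, used only to test the harness."""
    if "execute(f" in diff:
        return [{"category": "security"}]
    if "TODO" in diff:
        return [{"category": "style"}]
    return []


CASES = [
    {"diff": "db.execute(f'SELECT 1')", "expected_findings": ["security"]},  # caught
    {"diff": "x = 1  # TODO", "expected_findings": []},                      # false positive
    {"diff": "return a + b", "expected_findings": ["bug"]},                  # missed
]
```

Running evaluate(CASES, stub_reviewer) yields one true positive, one false positive, and one false negative, so both precision and recall come out at 0.5. In a real run the stub would be replaced by a function that calls the model with the prompt under test.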

Pro Tip: When precision drops after a prompt change, check the false positives first. They almost always cluster around a specific pattern — a framework idiom the model misreads, or an ambiguous convention description. Tighten the convention wording before changing the prompt structure.

With a stable, high-precision prompt and a test harness to guard against regression, you have everything needed to wire this system into your CI pipeline — which is exactly what the next section covers.

Integrating with GitHub Actions for Automated PR Reviews

With the core review engine built, the next step is wiring it into your CI pipeline so every pull request gets reviewed automatically—no manual intervention required. GitHub Actions provides the primitives to trigger on PR events, fetch diffs, invoke your review engine, and post structured feedback directly on the PR.

Workflow Trigger and Permissions

Create the workflow file under .github/workflows/ in your repository. The pull_request event with the opened and synchronize types covers new PRs and any new commits pushed to an existing PR, including force-pushes. The reopened type is worth adding if your team frequently closes and reopens PRs during review cycles.

name: AI Code Review

on:
  pull_request:
    types: [opened, synchronize, reopened]
    branches: [main, develop]

permissions:
  contents: read
  pull-requests: write

jobs:
  review:
    runs-on: ubuntu-latest
    if: |
      github.event.pull_request.draft == false &&
      github.actor != 'dependabot[bot]' &&
      github.actor != 'renovate[bot]'

    steps:
      - name: Checkout repository
        uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.12'

      - name: Install dependencies
        run: pip install openai requests python-dotenv

      - name: Run AI code review
        env:
          OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          PR_NUMBER: ${{ github.event.pull_request.number }}
          REPO_FULL_NAME: ${{ github.repository }}
        run: python scripts/ai_review.py

The if condition on the job filters out draft PRs and bot-generated PRs before any API calls are made, keeping costs down without complex logic inside the Python script. For pull_request events, the branches filter matches the PR’s base branch, so scoping it to main and develop limits the workflow to PRs that target those branches and skips PRs opened against other branches, such as short-lived staging branches.

Fetching the Diff and Enforcing Size Limits

Inside ai_review.py, fetch the PR diff through the GitHub API and apply a size gate before sending anything to OpenAI. The application/vnd.github.v3.diff accept header returns the raw unified diff, which is what your review engine expects, rather than the default JSON representation of the pull request.

import os

import requests

GITHUB_TOKEN = os.environ["GITHUB_TOKEN"]
OPENAI_API_KEY = os.environ["OPENAI_API_KEY"]
REPO = os.environ["REPO_FULL_NAME"]
PR_NUMBER = os.environ["PR_NUMBER"]

MAX_DIFF_LINES = 800


def fetch_pr_diff() -> str:
    url = f"https://api.github.com/repos/{REPO}/pulls/{PR_NUMBER}"
    headers = {
        "Authorization": f"Bearer {GITHUB_TOKEN}",
        "Accept": "application/vnd.github.v3.diff",
    }
    # A timeout keeps a stalled request from hanging the CI job.
    response = requests.get(url, headers=headers, timeout=30)
    response.raise_for_status()
    return response.text


def is_reviewable(diff: str) -> bool:
    lines = diff.splitlines()
    # Count added and removed lines, ignoring the +++/--- file headers.
    changed = [l for l in lines if l.startswith(("+", "-")) and not l.startswith(("+++", "---"))]
    return len(changed) <= MAX_DIFF_LINES

Diffs beyond 800 changed lines produce noisy, low-quality reviews and burn through token budgets fast. Log a skip message and exit cleanly when the threshold is exceeded, so reviewers see a neutral check rather than a failure. If you need finer-grained control, consider splitting the diff by file and reviewing each file independently, then aggregating results before posting.
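Splitting by file can be done directly on the unified diff text. The sketch below is one way to do it, assuming standard "diff --git a/... b/..." headers; split_diff_by_file is a hypothetical helper name.

```python
def split_diff_by_file(raw_diff: str) -> dict[str, str]:
    """Split a unified diff into one diff string per file, keyed by new path."""
    files: dict[str, list[str]] = {}
    current = None
    for line in raw_diff.splitlines():
        if line.startswith("diff --git "):
            # Header looks like: diff --git a/path b/path
            current = line.rsplit(" b/", 1)[-1]
            files[current] = []
        if current is not None:
            files[current].append(line)
    return {name: "\n".join(lines) for name, lines in files.items()}
```

Each per-file diff can then pass through the same size gate and review call, with the resulting items concatenated before posting a single review.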

You can also add a paths filter directly to the workflow trigger to skip PRs that touch only documentation, assets, or generated files:

on:
  pull_request:
    paths:
      - 'src/**'
      - 'lib/**'
      - '**.py'
      - '**.ts'

This prevents the review job from running, and incurring API costs, on PRs that update only README.md or bump image assets.

Posting Comments via the Reviews API

Use the GitHub Pull Request Reviews API to post feedback as a single review object rather than individual comments. This groups all findings into one collapsible block and avoids spamming the PR timeline.

def post_review(body: str, event: str = "COMMENT") -> None:
    url = f"https://api.github.com/repos/{REPO}/pulls/{PR_NUMBER}/reviews"
    headers = {
        "Authorization": f"Bearer {GITHUB_TOKEN}",
        "Accept": "application/vnd.github+json",
        "X-GitHub-Api-Version": "2022-11-28",
    }
    payload = {"body": body, "event": event}
    response = requests.post(url, json=payload, headers=headers, timeout=30)
    response.raise_for_status()


if __name__ == "__main__":
    diff = fetch_pr_diff()
    if not is_reviewable(diff):
        print(f"Diff exceeds {MAX_DIFF_LINES} lines. Skipping AI review.")
        raise SystemExit(0)

    review_body = run_review_engine(diff)  # your review engine from the earlier section
    post_review(review_body)

Pro Tip: Store your OPENAI_API_KEY under Settings → Secrets and variables → Actions, never as a literal value in the workflow file. Rotating the key then requires updating only the secret, not the workflow file.
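The post_review function above sends only a summary body. To attach findings at the line level, the same Reviews endpoint also accepts a comments array; the sketch below builds such a payload from the engine's review items, assuming the endpoint's path, line, side, and body fields. The function name build_review_payload and the comment formatting are hypothetical.

```python
def build_review_payload(items: list[dict], summary: str) -> dict:
    """Build a single review payload with one inline comment per finding."""
    comments = [
        {
            "path": item["filename"],
            "line": item["line"],
            "side": "RIGHT",  # attach to the new version of the file
            "body": f"[{item['severity'].upper()}] {item['category']}: {item['comment']}",
        }
        for item in items
    ]
    return {"body": summary, "event": "COMMENT", "comments": comments}
```

Inline comments generally need to point at lines that are part of the PR diff, so it is worth validating each item's line against the parsed hunks before posting and folding any leftovers into the summary body.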

Rate Limiting and Cost Controls

Three controls work together to keep spend predictable at scale. First, set a monthly spend limit in your OpenAI dashboard (Settings → Billing → Usage limits) as a hard ceiling. Second, add a per-workflow circuit breaker by checking the github.run_attempt context—skip re-reviews on retried runs to avoid double charges on flaky infrastructure. Third, use the paths filter described above to ensure the workflow only fires when source files change.
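The second control can also live inside the Python script. GitHub Actions exposes the attempt number to the job as the GITHUB_RUN_ATTEMPT environment variable, so a minimal check might look like the sketch below; the helper name is_retry_run is hypothetical.

```python
import os
from typing import Mapping, Optional


def is_retry_run(env: Optional[Mapping[str, str]] = None) -> bool:
    """Return True when the workflow run is a re-run (attempt 2 or later)."""
    env = os.environ if env is None else env
    # The first attempt is numbered 1; treat a missing value as a first attempt.
    return int(env.get("GITHUB_RUN_ATTEMPT", "1")) > 1
```

The review script can call this before fetching the diff and exit with status 0 on a retry, so a re-run of a flaky job does not trigger a second paid review.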

For repositories with high PR volume, consider caching review results keyed on a hash of the diff content rather than the commit SHA, which changes on every rebase. If a developer force-pushes without changing any file content, which is common when rebasing, the content hash stays the same and the cached review can be reposted without a new API call. This alone can reduce OpenAI costs by 20–30% in active monorepos.
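A cache key along these lines could be derived as in the sketch below. It hashes only the lines that start with + or - (which includes the file headers), so index hashes and hunk offsets that shift during a rebase do not change the key. The function name diff_cache_key and this choice of normalization are assumptions.

```python
import hashlib


def diff_cache_key(raw_diff: str) -> str:
    """Hash the file headers and changed lines of a unified diff."""
    relevant = [
        line for line in raw_diff.splitlines()
        # Keeps "--- a/x", "+++ b/x", and every added or removed line;
        # drops "index ..." and "@@ ... @@" lines, which vary across rebases.
        if line.startswith(("+", "-"))
    ]
    return hashlib.sha256("\n".join(relevant).encode("utf-8")).hexdigest()
```

The key can index a small store of previous review payloads, such as a JSON file restored through the CI cache. Note that ignoring context lines means a change in surrounding code alone will not invalidate the cached review.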

With the workflow deployed, you have automated review coverage running on every meaningful code change. The next step is measuring whether that coverage is actually improving review quality—and building the feedback loop that makes the system smarter over time.

Measuring Impact and Iterating on Review Quality

Deploying your code review assistant is the beginning, not the end. Without measurement, you’re flying blind—unable to distinguish a genuinely useful tool from one that generates noise engineers learn to ignore.

Metrics That Actually Matter

Three metrics give you a clear picture of tool effectiveness:

Comment acceptance rate measures the percentage of AI-generated comments that result in a code change. Track this by correlating GitHub PR review comments with subsequent commits. A healthy baseline sits at 40–60%; below 30% signals prompt drift or poor signal-to-noise ratio.

False positive rate captures how often engineers explicitly dismiss or resolve AI comments without acting on them. GitHub exposes the resolved state of review threads through its GraphQL API, so you can pull this data automatically and store it per rule category; security warnings, style issues, and logic concerns each warrant separate baselines.

Time-to-merge delta compares average PR cycle time before and after adoption, segmented by team and PR size. The improvement to look for is typically a 15–25% reduction in review round-trips rather than raw merge speed.

Building a Feedback Loop

Instrument your GitHub Actions workflow to write structured feedback events to a lightweight datastore—a Postgres table or even a flat JSONL log works fine at team scale. Capture the comment text, the file and line context, the rule category, and the resolution outcome. After four to six weeks of data, patterns emerge: prompts producing consistent false positives reveal themselves, and high-acceptance categories highlight where the tool earns its keep.

Use this data to prune, rewrite, or re-weight prompt instructions. If security comments are accepted at 70% but style comments sit at 18%, redirect token budget accordingly.
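As a sketch of how the logged events could be turned into per-category numbers, the function below reads JSONL text and computes an acceptance rate for each category. The field names category and outcome, and the value "accepted", are assumptions about the log format rather than anything fixed above.

```python
import json
from collections import defaultdict


def acceptance_by_category(jsonl_text: str) -> dict[str, float]:
    """Compute the share of accepted comments per category from JSONL events."""
    totals: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for raw_line in jsonl_text.splitlines():
        if not raw_line.strip():
            continue  # tolerate blank lines in the log
        event = json.loads(raw_line)
        totals[event["category"]] += 1
        if event["outcome"] == "accepted":
            accepted[event["category"]] += 1
    return {cat: round(accepted[cat] / totals[cat], 2) for cat in totals}
```

Categories whose rate stays low over several weeks are candidates for tighter wording in the prompt or for removal from the review scope.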

Cost at Team Scale

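GPT-4o input pricing runs roughly $0.0025 per 1K tokens, and output tokens are priced higher. A typical PR diff with context averages 3,000–5,000 tokens, putting the input cost per review at $0.008–$0.013. For a team of 20 engineers each merging 15 PRs weekly (about 1,300 PRs per month), a single review pass per PR comes to roughly $10–$16 per month in input tokens. Output tokens and the re-reviews triggered by each additional push raise that figure, so monthly spend landing around $50–$80 is plausible, still a fraction of a single hour of senior engineer review time.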
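The arithmetic behind these estimates is simple enough to keep in a small helper so it can be rerun when prices or volumes change. The sketch below covers input tokens only and uses the $0.0025 per 1K figure quoted above as its default; the function name monthly_input_cost is hypothetical.

```python
def monthly_input_cost(
    reviews_per_month: int,
    tokens_per_review: int,
    price_per_1k_tokens: float = 0.0025,
) -> float:
    """Estimate monthly input-token spend in dollars (output tokens excluded)."""
    return reviews_per_month * (tokens_per_review / 1000) * price_per_1k_tokens
```

For example, monthly_input_cost(1300, 4000) comes to about 13 dollars. Multiplying reviews_per_month by the average number of pushes per PR gives a closer estimate when every push triggers a new review.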

With measurement infrastructure in place, your assistant becomes a system you actively improve rather than one you deploy and forget.

Key Takeaways

- Start by scoping AI review to mechanical concerns (style, common bugs, security patterns) and keep architectural decisions with human reviewers

- Structure your prompts to return JSON with severity levels and line references so you can post precise, actionable GitHub review comments programmatically

- Track comment acceptance rate from day one; if engineers are accepting fewer than 30% of AI comments, your prompt needs tuning before expanding coverage

- Implement token chunking for large diffs and add diff-size skip thresholds in your GitHub Action to control costs at scale